What if intelligence didn't need to evolve? What if it was always there, waiting in the mathematics of complexity itself?

Blaise Agüera y Arcas, in a recent exploration of artificial life experiments, asks a question that cuts to the heart of how we think about intelligence, consciousness, and life itself. Not whether we can build intelligent machines, but whether intelligence is something built at all - or something that emerges inevitably from certain kinds of systems.

The Accident We Call Evolution

We tend to think of intelligence as the product of billions of years of biological evolution. A happy accident of chemistry and natural selection. DNA, neurons, consciousness - each step a contingent development that could easily have gone differently.

But what if that's the wrong frame? What if intelligence isn't a biological accident but a computational inevitability?

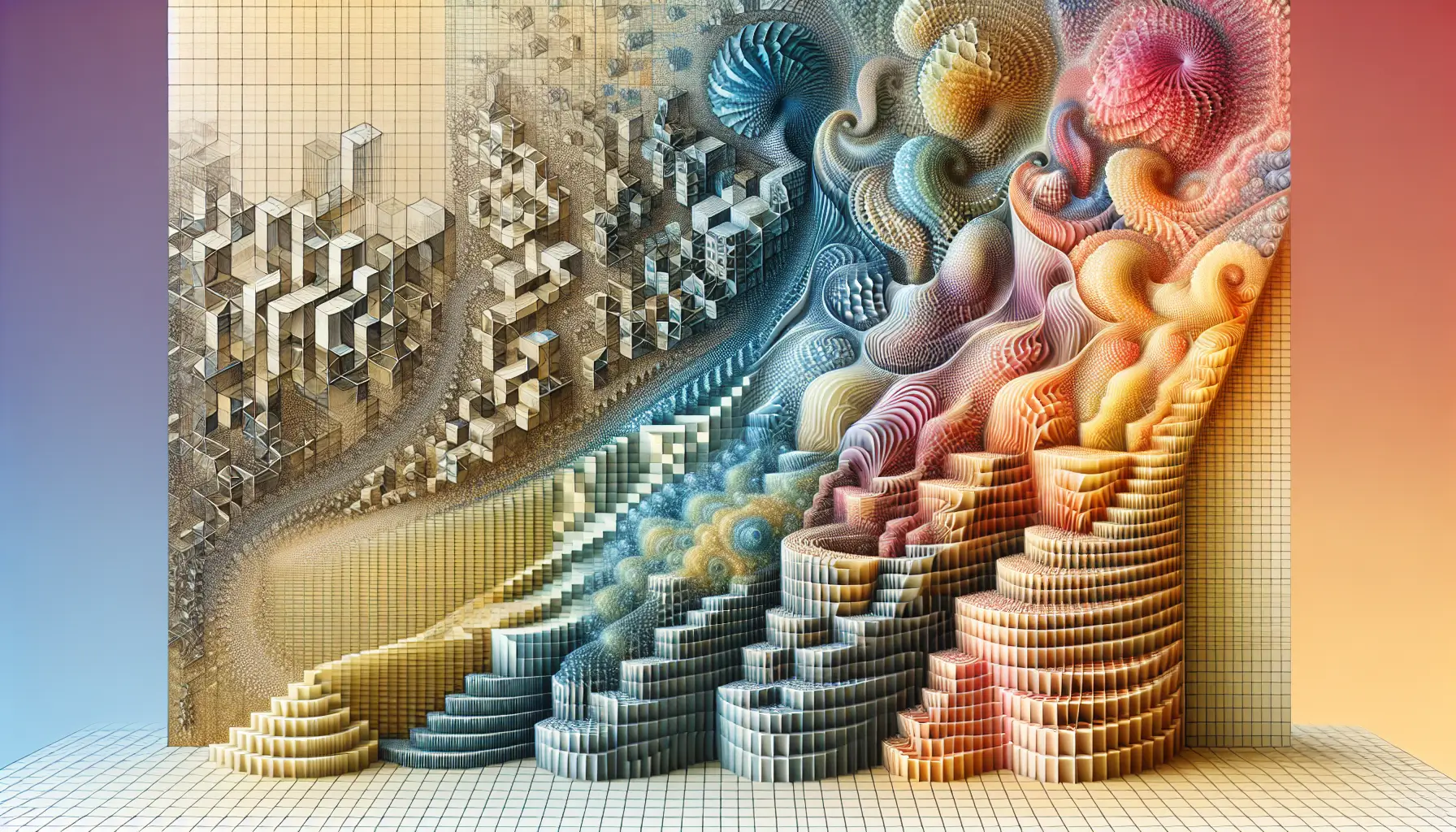

Agüera y Arcas explores this through artificial life experiments - simulated environments where simple rules give rise to complex behaviours. No explicit programming for survival, reproduction, or adaptation. Just basic computational principles and time.

And what emerges looks strikingly like life. Entities that compete, cooperate, adapt. Patterns that persist and evolve. Not because they were designed to, but because the underlying mathematics makes certain outcomes more stable than others.
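
To make "simple rules, complex behaviour" concrete, here is a minimal sketch using Conway's Game of Life. This is a stand-in chosen for illustration, not the experiments Agüera y Arcas describes. The function names `step` and `run` and the set-of-coordinates representation are assumptions made for this example. Two rules (birth on exactly three neighbours, survival on two or three) are enough to produce a pattern that holds still and another that travels across the grid, though nothing in the rules mentions stability or movement.

```python
from collections import Counter

def step(live):
    """Advance one generation. `live` is a set of (row, col) live cells."""
    # Count live neighbours for every cell adjacent to at least one live cell.
    counts = Counter(
        (r + dr, c + dc)
        for (r, c) in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    # Birth with exactly 3 neighbours; survival with 2 or 3.
    return {cell for cell, n in counts.items()
            if n == 3 or (n == 2 and cell in live)}

def run(live, generations):
    """Apply `step` repeatedly and return the final set of live cells."""
    for _ in range(generations):
        live = step(live)
    return live

# A "block": four cells that never change (a stable outcome).
block = {(0, 0), (0, 1), (1, 0), (1, 1)}

# A "glider": five cells that reappear one cell diagonally every 4 generations.
glider = {(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)}

if __name__ == "__main__":
    print(step(block) == block)    # True: the block persists unchanged
    print(sorted(run(glider, 4)))  # [(1, 2), (2, 3), (3, 1), (3, 2), (3, 3)]
```

The glider after four generations is the same shape shifted by one row and one column. That is a small version of the point above: persistence and movement fall out of the rules rather than being written into them.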

Computation as Substrate

The traditional view separates the biological from the computational. Life is carbon-based, wet, evolutionary. Computation is silicon, abstract, designed.

But if Agüera y Arcas is right, that distinction misses something fundamental. Both might be expressions of the same underlying principles. Certain kinds of systems - whether they're made of neurons or transistors - naturally tend towards complexity, self-organisation, and what we might call intelligence.

This isn't mysticism. It's mathematics. Systems with feedback loops, competition for resources, and enough computational depth tend to develop strategies, adaptations, and emergent behaviours that look remarkably like life.

The question becomes: at what point does emergent complexity become intelligence? At what point does pattern-matching become understanding?

What This Means for AI Development

If intelligence is emergent rather than designed, that changes how we should think about building AI systems. Perhaps the goal isn't to programme intelligence but to create the conditions where intelligence can emerge naturally.

We see hints of this already. Large language models weren't explicitly taught to reason or understand context. They were trained to predict text, with enough computational depth, enough training data, enough feedback loops - and something recognisable as reasoning emerged. Not perfectly, not completely, but recognisably.

The implications are both exciting and unsettling. Exciting because it suggests intelligence might be more accessible than we thought - not requiring billions of years of evolution but potentially arising from the right computational substrate.

Unsettling because if intelligence is emergent, we might not fully control or understand what emerges. The relationship between input (training data, reward functions) and output (behaviour, reasoning) becomes harder to predict.

The Bigger Picture

Agüera y Arcas is asking us to reconsider what makes something alive, intelligent, conscious. Not as properties that need to be built in, but as natural outcomes of certain kinds of complexity.

For developers and researchers, this reframing matters. It suggests we should focus less on explicitly programming capabilities and more on creating environments where useful behaviours can emerge. Less engineering, more cultivation.

For the rest of us, it raises deeper questions. If intelligence arises naturally from computational complexity, what does that say about our own intelligence? Are we special because we're biological, or are we just one expression of a more fundamental pattern?

The experiments continue. The questions remain open. But the possibility that intelligence is woven into the fabric of computation itself - that's worth sitting with.