Most AI agent tutorials end where the real work begins. They show you a demo that works once, then leave you to figure out why it fails in production. n8n's new technical guide does something different: it maps the failure modes first, then shows you the patterns that handle them.

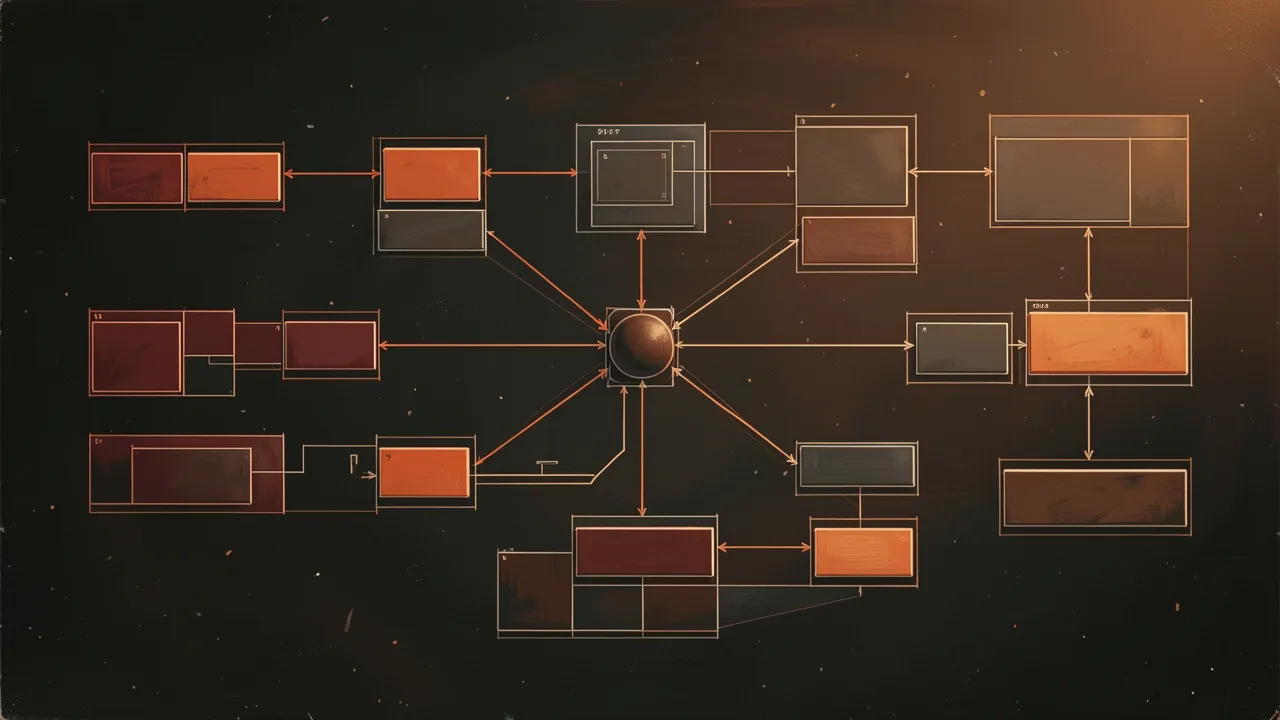

Two categories of patterns matter. Behavioral patterns describe how a single agent thinks and acts. Topological patterns describe how multiple agents coordinate. Get the first wrong and your agent makes bad decisions. Get the second wrong and your system doesn't scale.

Behavioral Patterns: How Agents Think

Tool Use is the simplest pattern. The agent has access to functions, decides when to call them, and processes the results. It works great until the agent calls the wrong tool or misinterprets the output. The failure mode is silent errors: the agent thinks it succeeded but hands you garbage data.
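One way to make those silent errors loud is to check every tool result before the agent sees it. The sketch below is a minimal illustration, not the guide's implementation: the tool names, the `REQUIRED_KEYS` table, and `ToolError` are all hypothetical.

```python
from typing import Any, Callable

# Hypothetical tools standing in for real API calls.
def get_weather(city: str) -> dict[str, Any]:
    return {"city": city, "temp_c": 21.5}

def flaky_weather(city: str) -> dict[str, Any]:
    # Simulates a tool that "succeeds" but drops a field.
    return {"city": city}

TOOLS: dict[str, Callable[..., dict[str, Any]]] = {
    "get_weather": get_weather,
    "flaky_weather": flaky_weather,
}
# Assumed contract: the keys each tool must return.
REQUIRED_KEYS = {
    "get_weather": {"city", "temp_c"},
    "flaky_weather": {"city", "temp_c"},
}

class ToolError(Exception):
    """Raised instead of letting a bad tool result pass silently."""

def call_tool(name: str, **kwargs: Any) -> dict[str, Any]:
    if name not in TOOLS:
        raise ToolError(f"unknown tool: {name}")
    result = TOOLS[name](**kwargs)
    missing = REQUIRED_KEYS[name] - set(result)
    if missing:
        raise ToolError(f"{name} result is missing {sorted(missing)}")
    return result
```

Calling `call_tool("flaky_weather", city="Oslo")` raises `ToolError` rather than returning a partial result the agent might treat as valid.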

ReAct (Reasoning + Acting) adds explicit reasoning steps. The agent states its plan, executes an action, observes the result, then reasons about what to do next. This makes failures visible: you can see where the logic broke. But it's slower, because every action requires a reasoning step. Use it when correctness matters more than speed.
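The thought, action, observation cycle can be sketched as a small loop that records every step. This is an illustration under assumptions: `policy` is a scripted stand-in for the LLM, and `lookup` is a hypothetical tool.

```python
from dataclasses import dataclass

@dataclass
class Step:
    thought: str
    action: str
    observation: str

def lookup(query: str) -> str:
    """Hypothetical tool: a tiny in-memory knowledge base."""
    facts = {"capital of france": "Paris"}
    return facts.get(query.lower(), "no result")

def policy(question: str, trace: list[Step]) -> tuple[str, str]:
    """Scripted stand-in for the LLM: returns (thought, action)."""
    if not trace:
        return ("I should look this up.", f"lookup:{question}")
    return ("The observation answers the question.",
            f"finish:{trace[-1].observation}")

def react(question: str, max_steps: int = 5) -> tuple[str, list[Step]]:
    trace: list[Step] = []
    for _ in range(max_steps):
        thought, action = policy(question, trace)
        kind, _, arg = action.partition(":")
        if kind == "finish":
            trace.append(Step(thought, action, ""))
            return arg, trace
        # Act, then record what was observed for the next reasoning step.
        trace.append(Step(thought, action, lookup(arg)))
    raise RuntimeError("step budget exhausted")
```

The returned `trace` is the point: when an answer is wrong, you can read back which thought or observation led there.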

Reflection patterns let agents critique their own outputs. Generate a response, evaluate it against criteria, regenerate if needed. This catches obvious mistakes but adds latency and token cost. The guide includes a decision matrix: use reflection for high-stakes outputs where a bad result has real consequences. Skip it for draft generation or exploratory tasks.
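A generate, evaluate, regenerate loop might look like the following sketch. Both `generate` and `critique` are hypothetical stubs standing in for LLM calls, and the "must state a reason" criterion is invented for the example.

```python
from typing import Callable, Optional

def generate(feedback: Optional[str]) -> str:
    """Stub generator: improves only when given feedback."""
    if feedback is None:
        return "Refund denied."
    return "Refund denied because the 30-day window has expired."

def critique(draft: str) -> Optional[str]:
    """Stub critic: returns feedback, or None if the draft passes."""
    if "because" not in draft:
        return "State the reason for the decision."
    return None

def reflect(
    generate_fn: Callable[[Optional[str]], str],
    critique_fn: Callable[[str], Optional[str]],
    max_rounds: int = 3,
) -> tuple[str, bool, int]:
    """Returns (draft, accepted, rounds_used)."""
    feedback: Optional[str] = None
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = generate_fn(feedback)
        feedback = critique_fn(draft)
        if feedback is None:
            return draft, True, round_no
    # Budget spent: hand back the last draft, flagged as not accepted.
    return draft, False, max_rounds
```

Each extra round is another generation plus another evaluation, which is where the latency and token cost come from. The `max_rounds` cap keeps that cost bounded.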

Planning patterns have the agent outline steps before executing. Good for complex multi-step tasks. Terrible for dynamic environments where conditions change. The plan becomes obsolete before execution finishes. The trick is knowing when you need a plan and when you need to react.
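One hedge against stale plans is to check, before each step, whether the world still matches what the plan assumed, and replan if it does not. The sketch below assumes a hypothetical `World.version` counter as the change signal and a stub planner; a real system would need its own way to detect change.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class World:
    version: int = 0  # bumped whenever conditions change

def make_plan(world: World) -> tuple[int, list[str]]:
    """Stub planner: returns the version it planned against, plus steps."""
    return world.version, ["gather", "draft", "publish"]

def run(world: World, change_after: Optional[str] = None) -> tuple[list[str], int]:
    """Executes the plan; returns (steps executed, number of replans)."""
    planned_version, steps = make_plan(world)
    done: set[str] = set()
    log: list[str] = []
    replans = 0
    while steps:
        if world.version != planned_version:
            # The plan is stale: replan, keeping only unfinished steps.
            planned_version, steps = make_plan(world)
            steps = [s for s in steps if s not in done]
            replans += 1
            continue
        step = steps.pop(0)
        done.add(step)
        log.append(step)
        if step == change_after:
            world.version += 1  # simulate the environment changing mid-run
    return log, replans
```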

Topological Patterns: How Agents Coordinate

Orchestrator-Executor is the bread and butter of production systems. One agent routes tasks to specialist agents. Each specialist does one thing well. The orchestrator handles the complexity. This scales because you can improve individual specialists without touching the routing logic.

The failure mode: the orchestrator becomes a bottleneck. If it's deciding every action, you're back to a single point of failure. The solution is clear routing rules. The orchestrator should route, not think. Push decision-making into the specialist agents.
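"Route, not think" can be as plain as a rule table with no model call in the routing path. In this sketch the specialist agents and the keyword rules are hypothetical placeholders.

```python
from typing import Callable

Agent = Callable[[str], str]

# Hypothetical specialists; in practice each would wrap its own LLM call.
def billing_agent(task: str) -> str:
    return f"billing handled: {task}"

def tech_agent(task: str) -> str:
    return f"tech handled: {task}"

def fallback_agent(task: str) -> str:
    return f"escalated to a human: {task}"

# Explicit routing rules, checked in order. No reasoning happens here.
ROUTES: list[tuple[tuple[str, ...], Agent]] = [
    (("invoice", "refund"), billing_agent),
    (("error", "crash"), tech_agent),
]

def route(task: str) -> Agent:
    lowered = task.lower()
    for keywords, agent in ROUTES:
        if any(k in lowered for k in keywords):
            return agent
    return fallback_agent

def orchestrate(task: str) -> str:
    return route(task)(task)
```

Because routing is deterministic, a specialist can be swapped or improved without touching `ROUTES`, and a misroute is easy to reproduce.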

Sequential Chain patterns pass outputs from one agent to the next. Research agent gathers information, summarization agent condenses it, writing agent produces the final output. Simple to reason about. Fails catastrophically if any agent in the chain produces bad output - garbage in, garbage out, amplified at each step.

Add validation between chain steps. Each agent verifies its input before processing. This catches errors early instead of propagating them through the entire chain. Costs tokens but saves you from shipping broken outputs.
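A minimal sketch of input validation between chain steps, using the research, summarize, write chain from above. The three agents are stubs and the checks are assumptions chosen for illustration.

```python
from typing import Callable

class ChainError(Exception):
    """Raised when a stage rejects its input."""

# Stub agents; each would be an LLM call in a real chain.
def research(p: dict) -> dict:
    known = {"agents": ["fact A", "fact B"]}
    return {"facts": known.get(p["topic"], [])}

def summarize(p: dict) -> dict:
    return {"summary": "; ".join(p["facts"])}

def write(p: dict) -> dict:
    return {"text": f"Report: {p['summary']}"}

# (stage name, input check, agent)
Stage = tuple[str, Callable[[dict], bool], Callable[[dict], dict]]
STAGES: list[Stage] = [
    ("research", lambda p: bool(p.get("topic")), research),
    ("summarize", lambda p: bool(p.get("facts")), summarize),
    ("write", lambda p: bool(p.get("summary")), write),
]

def run_chain(payload: dict, stages: list[Stage]) -> dict:
    for name, input_ok, agent in stages:
        # Each stage verifies its input before doing any work.
        if not input_ok(payload):
            raise ChainError(f"{name} rejected its input: {payload!r}")
        payload = agent(payload)
    return payload
```

With an unknown topic, `research` returns no facts and the chain stops at `summarize` instead of producing a confident report about nothing. Real validators would likely be richer than these truthiness checks, and an LLM-based check is what costs the extra tokens.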

Parallel Fan-Out for Speed

When tasks are independent, run them in parallel. One agent spawns multiple specialist agents, each working on a different subtask. They all report back to the coordinator, which synthesizes the results. With independent subtasks, total time approaches that of the slowest subtask rather than the sum of all of them. This is how you get 10x speed improvements in favorable cases: not better models, just better orchestration.

The catch: results need to be mergeable. If you're gathering information from five sources and they contradict each other, someone has to resolve conflicts. That someone is usually another agent, which means another LLM call. Budget for it.
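The fan-out and the merge check can be sketched with `asyncio.gather`. The `fetch` coroutine is a hypothetical stand-in for a specialist agent, with a sleep in place of real work.

```python
import asyncio

async def fetch(source: str, delay: float, answer: str) -> tuple[str, str]:
    """Stub specialist: pretends to work, then reports its answer."""
    await asyncio.sleep(delay)
    return source, answer

async def fan_out(jobs: list[tuple[str, float, str]]) -> dict:
    # Run all subtasks concurrently; gather returns results in input order.
    results = await asyncio.gather(*(fetch(*job) for job in jobs))
    answers = {answer for _, answer in results}
    return {
        "results": dict(results),
        # More than one distinct answer means someone must resolve it.
        "conflict": len(answers) > 1,
    }

if __name__ == "__main__":
    jobs = [("a", 0.01, "42"), ("b", 0.01, "42"), ("c", 0.01, "41")]
    print(asyncio.run(fan_out(jobs)))
```

When `conflict` is true, the coordinator needs a resolution step. If that step is another agent, it is the extra LLM call to budget for.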

Hierarchical Patterns for Complex Tasks

Hierarchical architectures are orchestrator-executor patterns nested inside each other. A top-level orchestrator breaks a project into phases. Each phase has its own orchestrator managing specialist agents. This scales to genuinely complex work, the kind that takes hours, not seconds.
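Structurally, the nesting is a tree in which an orchestrator's children are either workers or further orchestrators. The sketch below shows only that shape. The class, the phase names, and the workers are hypothetical, and real orchestrators would decompose tasks rather than pass the same string down.

```python
from dataclasses import dataclass, field
from typing import Callable, Union

Worker = Callable[[str], str]

@dataclass
class Orchestrator:
    name: str
    children: list[Union["Orchestrator", Worker]] = field(default_factory=list)

    def run(self, task: str) -> list[str]:
        out: list[str] = []
        for child in self.children:
            if isinstance(child, Orchestrator):
                out.extend(child.run(task))  # delegate to a nested orchestrator
            else:
                out.append(child(task))      # leaf: a specialist does the work
        return out

def make_worker(label: str) -> Worker:
    return lambda task: f"{label}({task})"

project = Orchestrator("project", [
    Orchestrator("design", [make_worker("spec"), make_worker("review")]),
    Orchestrator("build", [make_worker("code")]),
])
```

Every extra level is another place for a task to be misrouted or dropped.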

The guide's insight: hierarchical patterns are over-engineering for most use cases. They add complexity and failure points. Use them only when you've proven simpler patterns won't work. Start simple, add hierarchy when you have evidence you need it.

The Selection Matrix That Matters

The guide includes decision matrices for pattern selection. Task complexity on one axis, reliability requirements on the other. Low complexity + low reliability needs? Tool use pattern. High complexity + high reliability? Hierarchical with reflection.
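As a lookup, the matrix is just a table keyed by the two axes. The sketch below encodes only the two cells stated above. The other cells are deliberately left out rather than guessed, and the function name is hypothetical.

```python
from typing import Optional

# (task complexity, reliability requirement) -> pattern.
# Only the two cells named in this article are filled in.
MATRIX: dict[tuple[str, str], str] = {
    ("low", "low"): "tool use",
    ("high", "high"): "hierarchical with reflection",
}

def select_pattern(complexity: str, reliability: str) -> Optional[str]:
    """Returns the pattern for a cell, or None if the cell is not listed."""
    return MATRIX.get((complexity, reliability))
```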

This is what's been missing from agent tutorials. Not just "here's how to build this", but "here's when to build this instead of that". The patterns aren't good or bad. They're appropriate or inappropriate for your constraints.

For builders shipping AI products, this matters immediately. You can stop guessing which architecture to use and start selecting based on actual trade-offs. Speed vs accuracy. Simplicity vs capability. Cost vs reliability. The patterns give you a vocabulary for those decisions.

The difference between a prototype and production is knowing what breaks and how to prevent it. These patterns are the accumulated scar tissue of teams who shipped agents and learned the hard way. Now you don't have to.