Free ChatGPT isn't actually free. Every conversation you have costs OpenAI money - quite a lot of it, in fact. And as the service grows, those costs are becoming harder to ignore.

The Real Price of Convenience

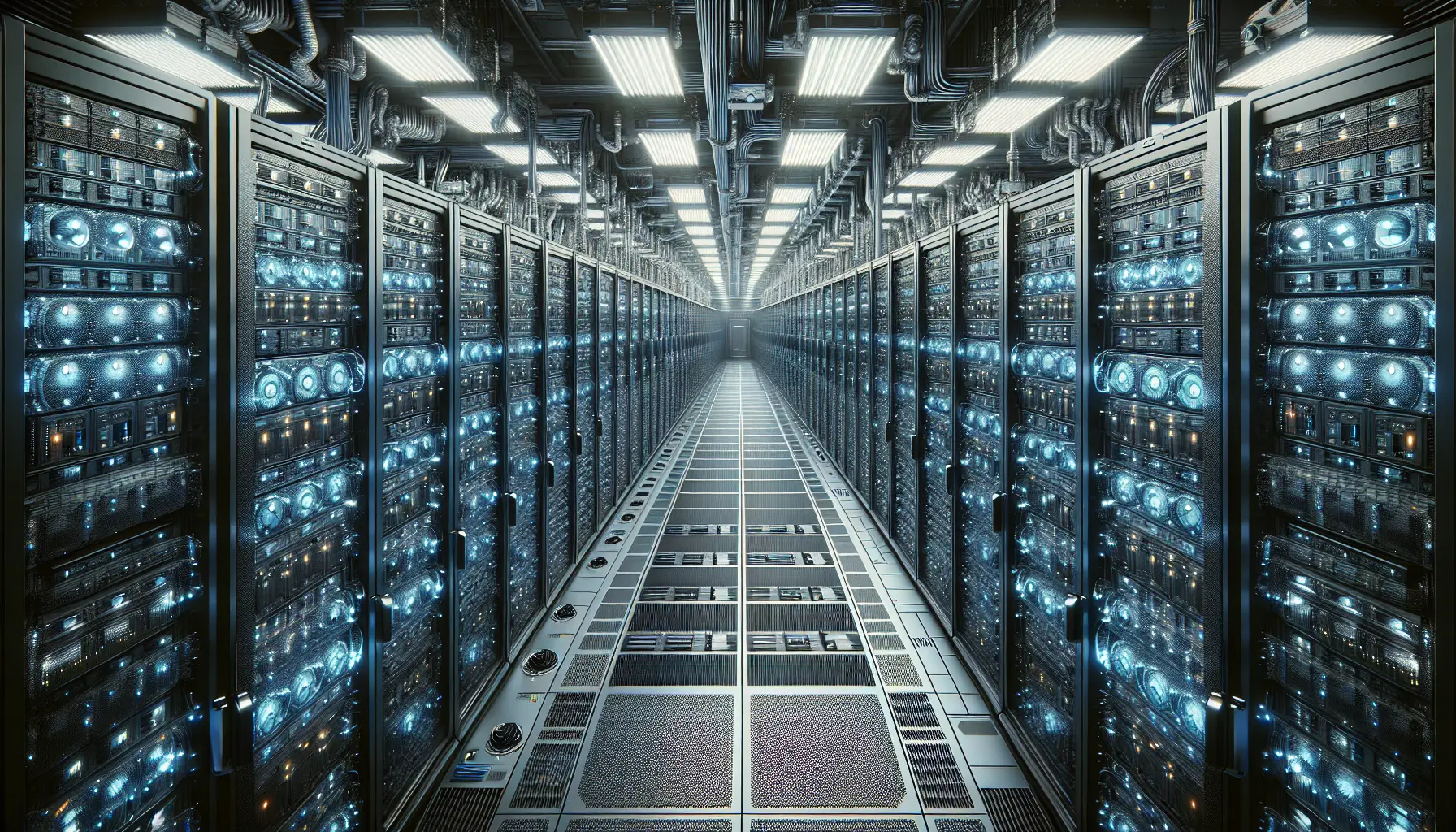

When you fire up ChatGPT and ask it to draft an email or explain quantum physics, you're tapping into a vast infrastructure of data centres, GPUs, and cooling systems running 24/7. OpenAI doesn't publish exact figures, but industry analysts estimate that each conversation costs the company anywhere from a few cents to several dollars, depending on length and complexity.

Multiply that by hundreds of millions of users globally, and you start to see the problem. According to TechRadar, OpenAI is now exploring multiple revenue strategies to keep the lights on - from advertising to new pricing tiers that sit between the current free and premium offerings.

The maths is brutal. Unlike traditional software, which costs roughly the same to serve one user or a million, the cost of running AI models scales more or less linearly with usage. More users means proportionally more compute, more electricity, more infrastructure. There's no magic efficiency curve that makes it cheaper at scale.
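To make that contrast concrete, here is a minimal sketch in Python. Every figure in it (the fixed cost, the queries per user, the cost per query) is a made-up placeholder rather than anything OpenAI has published, and the function names are purely illustrative. It compares a service whose costs are mostly fixed with one that pays for every query.

```python
def traditional_cost_per_user(users: int, fixed_cost: float = 10_000.0) -> float:
    """Mostly fixed monthly cost, spread across the user base (hypothetical figure)."""
    return fixed_cost / users


def ai_cost_per_user(
    users: int,
    fixed_cost: float = 10_000.0,   # hypothetical monthly overhead
    queries_per_user: int = 30,     # hypothetical usage per user per month
    cost_per_query: float = 0.02,   # hypothetical compute cost per query
) -> float:
    """Same fixed overhead, plus compute that is paid again on every query."""
    return fixed_cost / users + queries_per_user * cost_per_query


for users in (1_000, 100_000, 10_000_000):
    print(
        f"{users:>10,} users: "
        f"traditional ${traditional_cost_per_user(users):.4f}/user, "
        f"AI ${ai_cost_per_user(users):.4f}/user"
    )
```

Under these assumptions the traditional per-user cost keeps shrinking as the user base grows, while the AI per-user cost never falls below the per-query compute bill.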

Why This Matters Now

OpenAI built ChatGPT assuming they'd figure out the economics later. That 'later' has arrived. The company reportedly spends more on compute than it earns from subscriptions, even with millions of paying users. Something has to give.

The first obvious move is advertising - the Silicon Valley playbook for 'free' services that aren't really free. But AI conversations are different from search queries or social feeds. Where do you place an ad in the middle of someone asking for medical advice or debugging code? It's not impossible, but it's delicate.

More likely is what the industry calls 'tiering' - multiple subscription levels offering different capabilities. Free users might get access only during off-peak hours, or put up with slower response times. Mid-tier users get priority. Premium users get the full experience with no throttling. It's how cloud services have worked for years.
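One way such tiering could work under the hood is a priority queue, where requests from higher tiers are served first. The sketch below is purely illustrative: the tier names, the `TieredQueue` class and the example requests are assumptions, not a description of how OpenAI or any other provider actually schedules traffic.

```python
import heapq
import itertools

# Hypothetical tiers: a lower number is served first.
TIER_PRIORITY = {"premium": 0, "mid": 1, "free": 2}


class TieredQueue:
    """Serves pending requests by tier, first-come-first-served within a tier."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker that preserves arrival order

    def submit(self, tier, request):
        heapq.heappush(self._heap, (TIER_PRIORITY[tier], next(self._counter), request))

    def next_request(self):
        _, _, request = heapq.heappop(self._heap)
        return request

    def __len__(self):
        return len(self._heap)


queue = TieredQueue()
queue.submit("free", "draft an email")
queue.submit("premium", "debug this code")
queue.submit("mid", "summarise a report")
queue.submit("free", "explain quantum physics")

served = [queue.next_request() for _ in range(len(queue))]
print(served)
```

A strict ordering like this can leave free users waiting indefinitely when paid traffic is heavy, so a real system would probably add some form of fairness or time limit on top.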

The Bigger Picture

Here's what really matters: the economics of AI fundamentally don't work like traditional software. You can't just 'throw more servers at it' and watch the cost per user drop. Every query burns real energy and real compute in real time.

This affects more than just ChatGPT. Every company building AI features into their products is facing the same equation. The promise of AI everywhere runs straight into the reality of AI costs. Some applications will justify the expense - a medical diagnostic tool that saves lives is worth running, even if it's expensive. A chatbot that slightly improves customer service? Maybe not.

For builders and businesses, the lesson is clear: factor in ongoing operational costs from day one. That brilliant AI feature you're planning might cost more to run per month than your entire current infrastructure. And unlike traditional software where costs stabilise, AI costs scale with success.
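For a rough sense of what 'costs scale with success' means in a budget, the sketch below projects a monthly inference bill for a growing user base. The starting users, growth rate, usage and cost per query are all hypothetical inputs to be replaced with your own estimates, and the function name is just illustrative.

```python
def project_monthly_costs(starting_users, monthly_growth, queries_per_user, cost_per_query, months):
    """Return the estimated inference cost for each month, assuming steady compound growth."""
    costs = []
    users = float(starting_users)
    for _ in range(months):
        costs.append(users * queries_per_user * cost_per_query)
        users *= 1 + monthly_growth  # the user base compounds month on month
    return costs


# Hypothetical scenario: 5,000 users growing 20% a month,
# 40 queries per user per month, at one cent per query.
costs = project_monthly_costs(
    starting_users=5_000,
    monthly_growth=0.20,
    queries_per_user=40,
    cost_per_query=0.01,
    months=12,
)

for month, cost in enumerate(costs, start=1):
    print(f"Month {month:>2}: ${cost:,.0f}")
```

With these placeholder numbers the bill grows more than sevenfold within a year, purely because the product is succeeding.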

OpenAI's struggle isn't a failure - it's a preview. The free era of AI was never sustainable. What comes next will be more honest about costs, more creative about business models, and probably less generous with compute. That's not cynicism; it's physics.