A developer just finished building a production AI pipeline that processes Twitter/X data daily. It ran for months. Some parts worked brilliantly. Others failed in ways they didn't expect. Their writeup on Dev.to is worth reading because it's honest about both.

The Setup

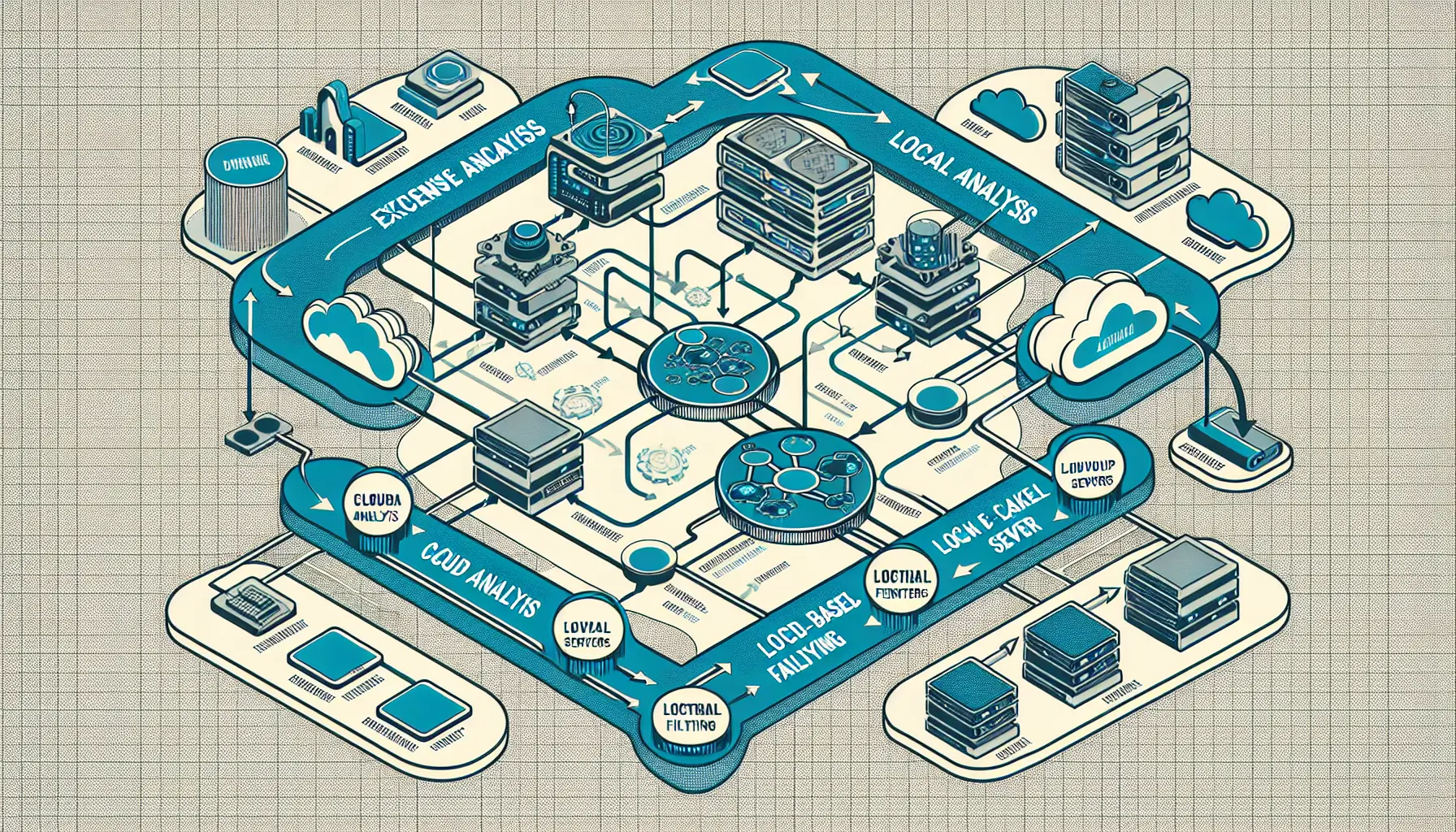

The project was straightforward in concept: pull daily Twitter/X content, run it through AI models for analysis and summarisation, publish the results. The architecture used both cloud-based models (Gemini) for heavy lifting and local models (Ollama) for simpler tasks. This hybrid approach turned out to be one of the smartest decisions.

Running everything through cloud APIs would have been expensive - potentially hundreds of pounds per month at scale. Running everything locally would have been slow and would have limited the sophistication of the analysis. The hybrid model let them route simple tasks (filtering, basic classification) to local models while sending complex analysis (nuanced summarisation, entity extraction) to Gemini.
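To make the routing idea concrete, here is a minimal sketch in Python. It is not the developer's code: the task names, the `Router` class and the stand-in backends are all assumptions for illustration. In a real pipeline the two callables would wrap an Ollama client and a Gemini client.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical task names; the writeup does not list its exact task types.
LOCAL_TASKS = {"filter", "classify"}
CLOUD_TASKS = {"summarise", "extract_entities"}


@dataclass
class Router:
    """Sends cheap tasks to a local model and expensive ones to a cloud model."""
    local_model: Callable[[str, str], str]  # would wrap a local (e.g. Ollama) call
    cloud_model: Callable[[str, str], str]  # would wrap a cloud (e.g. Gemini) call

    def run(self, task: str, text: str) -> str:
        if task in LOCAL_TASKS:
            return self.local_model(task, text)
        if task in CLOUD_TASKS:
            return self.cloud_model(task, text)
        raise ValueError(f"unknown task: {task}")


# Stand-in backends so the sketch runs without any network access.
def fake_local(task: str, text: str) -> str:
    return f"local:{task}"


def fake_cloud(task: str, text: str) -> str:
    return f"cloud:{task}"


router = Router(local_model=fake_local, cloud_model=fake_cloud)
print(router.run("classify", "some post"))   # handled locally
print(router.run("summarise", "some post"))  # sent to the cloud model
```

Keeping the routing table in one place means a task can be moved from cloud to local (or back) by editing a set rather than rewriting the pipeline.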

What Worked

Cost management through smart routing proved essential. By processing the bulk of content locally and only sending filtered, relevant data to paid APIs, monthly costs stayed under £20. That's sustainable - the difference between a side project that runs indefinitely and one that gets shut down when the credit card bill arrives.

The agentic architecture also delivered. Rather than a monolithic pipeline, they built separate agents for different tasks - one for data collection, another for filtering, another for analysis. When one component failed, the others kept running. When they needed to adjust how filtering worked, they could modify that agent without touching the rest of the system.
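The failure isolation described here can be sketched roughly as follows. This assumes each agent is an independent callable that reads its own input (for example, the previous run's stored output). The function and agent names are hypothetical and not taken from the writeup.

```python
from typing import Callable, Dict


def run_agents(agents: Dict[str, Callable[[], object]]) -> Dict[str, dict]:
    """Run every agent, recording failures instead of letting one stop the rest."""
    results: Dict[str, dict] = {}
    for name, agent in agents.items():
        try:
            results[name] = {"ok": True, "value": agent()}
        except Exception as exc:  # isolate the failure to this one agent
            results[name] = {"ok": False, "error": str(exc)}
    return results


# Hypothetical agents standing in for collection, filtering and analysis.
def collect() -> list:
    return ["post a", "post b"]


def filter_posts() -> list:
    raise RuntimeError("filter model unavailable")


def analyse() -> str:
    return "analysis of previously filtered posts"


report = run_agents({"collect": collect, "filter": filter_posts, "analyse": analyse})
for name, outcome in report.items():
    print(name, "ok" if outcome["ok"] else f"failed: {outcome['error']}")
```

Because each agent sits behind the same small interface, swapping the filtering logic means replacing one callable and leaving the others untouched.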

Local models (specifically Llama 3.2) handled routine classification surprisingly well. The writeup notes they were faster and more consistent than expected for simple yes/no decisions and basic categorisation. Not every task needs GPT-4.

What Didn't Work

Prompt engineering remains more art than science. A prompt that worked one day would produce different results the next as upstream models changed. The developer spent significant time building prompt templates that were robust across different types of content and edge cases. It's tedious, unglamorous work - but there's no shortcut.
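One way to make a template more robust is to normalise the input before it reaches the model and to parse the answer strictly. The sketch below is an assumption about what that might look like for a yes/no relevance check. The prompt wording, the character limit and the function names are illustrative and not the developer's actual templates.

```python
from string import Template
from typing import Optional

# Illustrative prompt; delimiters keep the post text separate from the instructions.
CLASSIFY_PROMPT = Template(
    "Answer with exactly one word, YES or NO.\n"
    "Is the following post relevant to $topic?\n"
    "Post:\n<<<\n$post\n>>>"
)

MAX_POST_CHARS = 1000  # arbitrary illustrative limit


def build_prompt(post: str, topic: str) -> str:
    """Normalise whitespace, reject empty input and cap length before templating."""
    cleaned = " ".join(post.split())
    if not cleaned:
        raise ValueError("empty post")
    return CLASSIFY_PROMPT.substitute(topic=topic, post=cleaned[:MAX_POST_CHARS])


def parse_answer(raw: str) -> Optional[bool]:
    """Return True/False for a clean YES/NO, or None so the caller can retry or skip."""
    word = raw.strip().strip(".!").upper()
    if word == "YES":
        return True
    if word == "NO":
        return False
    return None
```

Returning `None` for anything other than a clean answer makes drift visible: a rising share of unparseable replies is a signal that the prompt needs attention.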

Rate limiting and API quotas proved harder to predict than anticipated. Even with careful cost monitoring, unexpected spikes in data volume would hit limits they didn't know existed. The solution? Defensive architecture with retries, queues, and graceful degradation when services are unavailable.
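A minimal sketch of the retry and graceful-degradation part, assuming a client wrapper that raises a `RateLimitError` when a quota is hit. That exception, the function name and the default delays are all hypothetical. A real client library will have its own error types.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical error raised by a client wrapper when a quota is hit."""


def call_with_retry(
    fn: Callable[[], T],
    fallback: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry fn with exponential backoff and jitter, then degrade to fallback."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_attempts - 1:
                break  # out of attempts, fall through to the fallback
            # Double the wait each time and add jitter to avoid synchronised retries.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)
    return fallback()
```

The fallback could route the item to the local model, or push it onto a queue for the next run, so a quota hit degrades the output rather than stopping the pipeline.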

Data quality problems compounded downstream. Twitter/X content is messy - threads break, context disappears, spam proliferates. The AI models don't magically fix that. Building good filters and validation layers upfront saved enormous headaches later.
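As a rough illustration of an upfront validation layer, the sketch below drops empty posts, link-heavy posts and near-verbatim duplicates before anything reaches a model. The post shape (a dict with a `text` key) and the thresholds are assumptions, not details from the writeup.

```python
import re
from typing import Iterable, List

URL_RE = re.compile(r"https?://\S+")


def is_usable(post: dict, min_words: int = 3, max_links: int = 2) -> bool:
    """Reject empty posts, posts with too little text and link-heavy posts."""
    text = (post.get("text") or "").strip()
    if not text:
        return False
    links = URL_RE.findall(text)
    words = URL_RE.sub("", text).split()  # count words with links removed
    return len(words) >= min_words and len(links) <= max_links


def clean_batch(posts: Iterable[dict]) -> List[dict]:
    """Keep usable posts, dropping duplicates that differ only in case or spacing."""
    seen = set()
    kept = []
    for post in posts:
        if not is_usable(post):
            continue
        key = " ".join(post["text"].lower().split())
        if key in seen:
            continue
        seen.add(key)
        kept.append(post)
    return kept
```

Cheap checks like these also reduce cost, since every post rejected here is one that never reaches a paid API.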

Lessons for Builders

The biggest takeaway: start with the simplest thing that could possibly work, then add complexity only when you hit actual limitations. The developer's initial architecture was far more complex than needed. Simplifying it made the system more reliable, not less.

For non-developers or those new to AI pipelines, this writeup demonstrates something crucial - you don't need a PhD in machine learning to build production AI systems. But you do need to think carefully about costs, failure modes, and data quality from day one. The engineering matters as much as the AI.

The hybrid approach - mixing cloud and local models - is likely the pattern that makes sense for most applications. Pure cloud is expensive. Pure local is limited. Thoughtfully combining them gives you flexibility and keeps costs manageable.

The Honest Bit

What makes this writeup valuable is the willingness to show what failed. Most case studies present polished success stories. This one shows the messy reality - the unexpected costs, the prompt adjustments, the rate limits, the data quality issues. That's useful.

If you're building anything with AI right now, particularly production pipelines that run continuously, this retrospective offers a realistic view of what to expect. The technology works, but it requires careful engineering and constant adjustment. There's no 'set it and forget it' with agentic AI systems - at least not yet.