Today's Overview

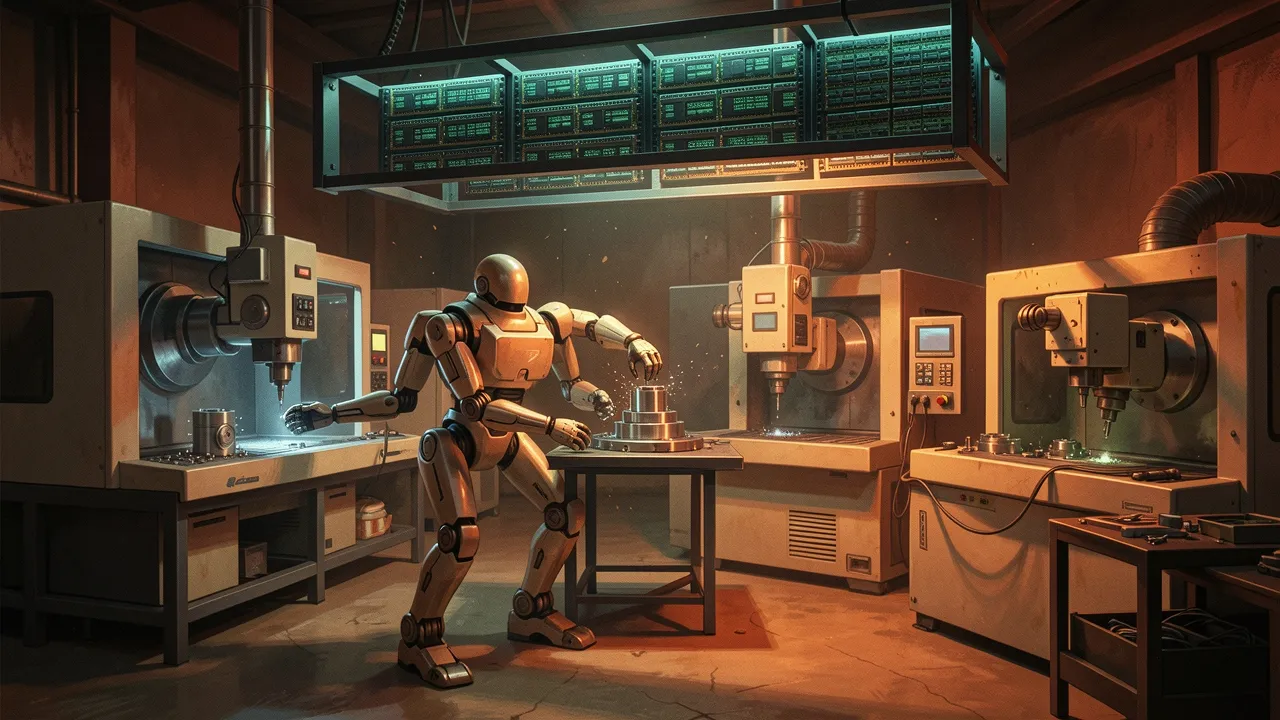

Schaeffler plans to deploy 1,000 humanoid robots across its factories by 2032, and this isn't vapourware. The company completed a successful pilot with Hexagon's AEON robots, which used their wheel-based legs and sensor suite to handle multi-machine manufacturing tasks: loading, unloading, and inspecting parts. What separates this announcement from the endless hype cycle is concrete: pilot data, a supply partnership for custom actuators, and a deployment timeline. This is what happens when robotics moves from research to operations.

The inference inflection is real, and it's changing the hardware story

Forget training. The conversation around AI compute has fundamentally shifted. OpenAI's Sam Altman said it plainly: "We have to become an AI inference company now." That shift rewires the economics of AI infrastructure. Inference, the act of running a model to produce output, now consumes vastly more compute than training ever did. Jensen Huang's GTC keynote put a number on it: compute demand for inference has increased roughly 10,000 times in the last two years. CPU compute, long starved of investment by budget-constrained cloud providers, is suddenly critical again, since the orchestration and tool calls around each model invocation run on CPUs. Long-context reasoning, tool calls, and agentic loops all burn tokens, and tokens are what you pay for.
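To make "agentic loops burn tokens" concrete, here is a minimal Python sketch of how billed tokens grow in a tool-calling loop. It assumes a hypothetical model in which every step re-sends the full conversation so far, the model writes a fixed number of output tokens, and each tool result is appended to the context. Real APIs may discount repeated prefixes through prompt caching, which this ignores, and all numbers are illustrative rather than measured.

```python
from dataclasses import dataclass


@dataclass
class LoopCost:
    input_tokens: int   # tokens the model reads, summed over all steps
    output_tokens: int  # tokens the model generates, summed over all steps


def agent_loop_cost(prompt_tokens: int, steps: int,
                    output_per_step: int, tool_result_per_step: int) -> LoopCost:
    """Estimate token usage for a hypothetical agent loop that re-sends its full context each step."""
    context = prompt_tokens
    input_total = 0
    output_total = 0
    for _ in range(steps):
        input_total += context            # the whole context is re-read on every step
        output_total += output_per_step   # the model's reply or tool call
        context += output_per_step + tool_result_per_step  # reply and tool result join the context
    return LoopCost(input_total, output_total)


single = agent_loop_cost(prompt_tokens=2000, steps=1, output_per_step=200, tool_result_per_step=800)
loop = agent_loop_cost(prompt_tokens=2000, steps=10, output_per_step=200, tool_result_per_step=800)
print(single)  # LoopCost(input_tokens=2000, output_tokens=200)
print(loop)    # LoopCost(input_tokens=65000, output_tokens=2000)
```

With these assumed sizes, ten steps multiply output tokens by 10 but input tokens by 32.5, because the re-read context grows linearly per step and its cumulative cost grows quadratically with the number of steps.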

This has cascading effects. Prefill/decode disaggregation, which runs prompt processing and token generation on separate pools of hardware, is now standard practice. vLLM achieved 230 tokens/second on DeepSeek V3.2 using Blackwell GPUs. Amazon's bet on Trainium, its in-house accelerator that it now pitches for inference as well as training, is paying off. Intel sees CPU refresh demand returning after two years of drought. The infrastructure market is reshaping itself around a simple truth: more inference capacity translates directly into shipping faster, serving more users, and growing revenue. This isn't abstract economics; it's a constraint every startup building on AI is hitting right now.
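Prefill (reading the whole prompt in one compute-heavy pass to build the KV cache) and decode (generating one token at a time, limited mostly by memory bandwidth) stress hardware differently, which is why serving stacks split them. The Python sketch below is a single-threaded toy simulation of that split: the `PrefillWorker` and `DecodeWorker` classes, the string "KV cache", and the placeholder tokens are hypothetical stand-ins, not vLLM's API. In a real deployment the two pools run concurrently on separate GPUs and the hand-off is a KV-cache transfer over the interconnect.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Request:
    rid: int
    prompt: list[str]
    max_new_tokens: int
    kv_cache: list[str] = field(default_factory=list)  # stand-in for per-layer key/value tensors
    output: list[str] = field(default_factory=list)


class PrefillWorker:
    """Processes the whole prompt in one pass and builds the request's KV cache."""

    def run(self, req: Request) -> Request:
        req.kv_cache = [f"kv({tok})" for tok in req.prompt]
        return req


class DecodeWorker:
    """Emits one token per step for a request, extending the KV cache it received."""

    def step(self, req: Request) -> bool:
        tok = f"t{len(req.output)}"  # placeholder for sampling the next token from the model
        req.output.append(tok)
        req.kv_cache.append(f"kv({tok})")
        return len(req.output) >= req.max_new_tokens  # True when the request is finished


def serve(reqs: list[Request], prefill: PrefillWorker, decode: DecodeWorker) -> list[Request]:
    """Toy scheduler: each tick admits one prefill and advances every decoding request by one token."""
    prefill_queue = deque(reqs)
    decoding: list[Request] = []
    finished: list[Request] = []
    while prefill_queue or decoding:
        if prefill_queue:
            # Hand-off point: in a disaggregated system this is a KV-cache transfer between GPU pools.
            decoding.append(prefill.run(prefill_queue.popleft()))
        still_running: list[Request] = []
        for req in decoding:
            if decode.step(req):
                finished.append(req)
            else:
                still_running.append(req)
        decoding = still_running
    return finished


done = serve([Request(0, ["a", "b", "c"], 2), Request(1, ["d"], 3)], PrefillWorker(), DecodeWorker())
for req in done:
    print(req.rid, req.output, len(req.kv_cache))
```

The point the toy isolates is the hand-off: once prefill finishes, the request carries its KV cache to the decode side, so in a real system a burst of long prompts on the prefill pool need not stall token generation for requests that are already decoding.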

Agents are becoming platforms. Coding agents especially.

OpenAI's Codex has shifted from a coding tool to a general work surface. Its product evolution is instructive: persistent context, integrations (Supabase, Figma), an app-server mode, and team rollout. Cursor launched an SDK that lets you embed its runtime in CI/CD pipelines and custom applications. VS Code shipped semantic indexing and cross-repo search. The throughline is clear: the bottleneck is no longer model intelligence but harness quality, memory management, tool orchestration, and execution reliability. n8n built an MCP server that generates working workflows from natural-language prompts, then tests and iterates on them autonomously. You describe what you want; Claude or ChatGPT builds it, validates it, runs it, and fixes it. No copy-paste, no context-switching between tools.
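The describe, build, validate, fix cycle in the n8n example is, at its core, a retry loop with feedback. A minimal Python sketch follows, assuming hypothetical `generate` (a stand-in for the model call) and `validate` callables; it is not n8n's or MCP's actual interface, and the "workflow" is a toy dict of nodes.

```python
from typing import Any, Callable, Optional

Workflow = dict[str, Any]
Generate = Callable[[str, list[str]], Workflow]  # (spec, feedback from last attempt) -> candidate
Validate = Callable[[Workflow], list[str]]       # candidate -> list of error messages


def build_with_repair(spec: str, generate: Generate, validate: Validate,
                      max_attempts: int = 3) -> tuple[Optional[Workflow], list[list[str]]]:
    """Generate a candidate, validate it, and feed errors back until it passes or attempts run out."""
    feedback: list[str] = []
    history: list[list[str]] = []
    for _ in range(max_attempts):
        candidate = generate(spec, feedback)
        errors = validate(candidate)
        history.append(errors)
        if not errors:
            return candidate, history
        feedback = errors  # the next generation sees exactly what went wrong
    return None, history


def toy_generate(spec: str, feedback: list[str]) -> Workflow:
    """Hypothetical stand-in for a model call: forgets the trigger node unless told it is missing."""
    nodes: dict[str, Any] = {"fetch_rows": {"next": "send_email"}, "send_email": {"next": None}}
    if any("trigger" in error for error in feedback):
        nodes = {"trigger": {"next": "fetch_rows"}, **nodes}
    return {"spec": spec, "nodes": nodes}


def toy_validate(workflow: Workflow) -> list[str]:
    """Hypothetical structural checks a workflow engine might run before executing anything."""
    nodes = workflow["nodes"]
    errors = []
    if "trigger" not in nodes:
        errors.append("missing trigger node")
    for name, node in nodes.items():
        if node["next"] is not None and node["next"] not in nodes:
            errors.append(f"{name} points to unknown node {node['next']}")
    return errors


workflow, history = build_with_repair("email me new spreadsheet rows", toy_generate, toy_validate)
print(history)  # [['missing trigger node'], []]
```

Keeping the per-attempt error list in `history` is a cheap form of observability: it records why each attempt failed, not just whether the loop eventually succeeded.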

This matters for builders because it suggests the next layer of productivity gains will come not from bigger models but from smarter systems around them. The companies that win will be the ones that solve state management, tool binding, error recovery, and observability at scale. Google's Gemini Live API is now integrated into Agora's real-time voice infrastructure, letting you build voice agents with sub-second latency and tool calls wired to physical hardware (the demo showed a humanoid robot responding to voice commands). This is the kind of applied work that compounds: each improvement to the harness makes agents more reliable, which makes them useful for harder problems, which justifies more investment.
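Tool binding, error recovery, and observability can be illustrated in a few lines. The Python sketch below assumes a hypothetical `ToolRegistry` that maps tool names to functions, retries failed calls with exponential backoff, and logs every attempt to a trace; the `move_arm` tool only echoes the robot demo and does not talk to real hardware or to the Gemini Live or Agora APIs.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolRegistry:
    """Hypothetical tool harness: name-based binding, retries with backoff, and a trace log."""
    max_retries: int = 3
    base_delay: float = 0.1  # seconds before the first retry; doubled after each failure
    tools: dict[str, Callable[..., Any]] = field(default_factory=dict)
    trace: list[dict[str, Any]] = field(default_factory=list)  # one record per attempt

    def register(self, name: str) -> Callable[[Callable[..., Any]], Callable[..., Any]]:
        """Decorator that binds a Python function to a tool name the agent can call."""
        def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
            self.tools[name] = fn
            return fn
        return decorator

    def call(self, name: str, **kwargs: Any) -> Any:
        if name not in self.tools:
            raise KeyError(f"unknown tool: {name}")
        attempts = max(1, self.max_retries)
        delay = self.base_delay
        for attempt in range(1, attempts + 1):
            try:
                result = self.tools[name](**kwargs)
            except Exception as exc:  # sketch: treats every error as retryable
                self.trace.append({"tool": name, "attempt": attempt, "ok": False, "error": repr(exc)})
                if attempt == attempts:
                    raise  # out of retries: surface the last error to the caller
                time.sleep(delay)
                delay *= 2
            else:
                self.trace.append({"tool": name, "attempt": attempt, "ok": True})
                return result


registry = ToolRegistry(base_delay=0.0)  # no sleeping in the demo
attempts_seen = {"count": 0}


@registry.register("move_arm")
def move_arm(angle: float) -> str:
    """Hypothetical actuator tool that times out twice before succeeding."""
    attempts_seen["count"] += 1
    if attempts_seen["count"] < 3:
        raise TimeoutError("actuator did not acknowledge")
    return f"arm at {angle} degrees"


print(registry.call("move_arm", angle=30.0))  # arm at 30.0 degrees
print([record["ok"] for record in registry.trace])  # [False, False, True]
```

Retrying on every exception keeps the sketch short; a production harness would retry only errors known to be transient (timeouts, rate limits) and fail fast on others, such as invalid arguments.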

The robotics and inference stories intersect here. A humanoid robot in a factory needs to reason about what it's looking at, make decisions, and execute tasks, all in real time. That means several inference loops per second. You need both the physical platform and the computational infrastructure. Schaeffler and Hexagon aren't just deploying robots; they're solving the full stack: actuators, sensors, AI, and operational data loops. That's the pattern worth watching across the entire industry right now.