Agents Now Deploy Code. Validation Gets Smarter. The Trial Continues.

Today's Overview

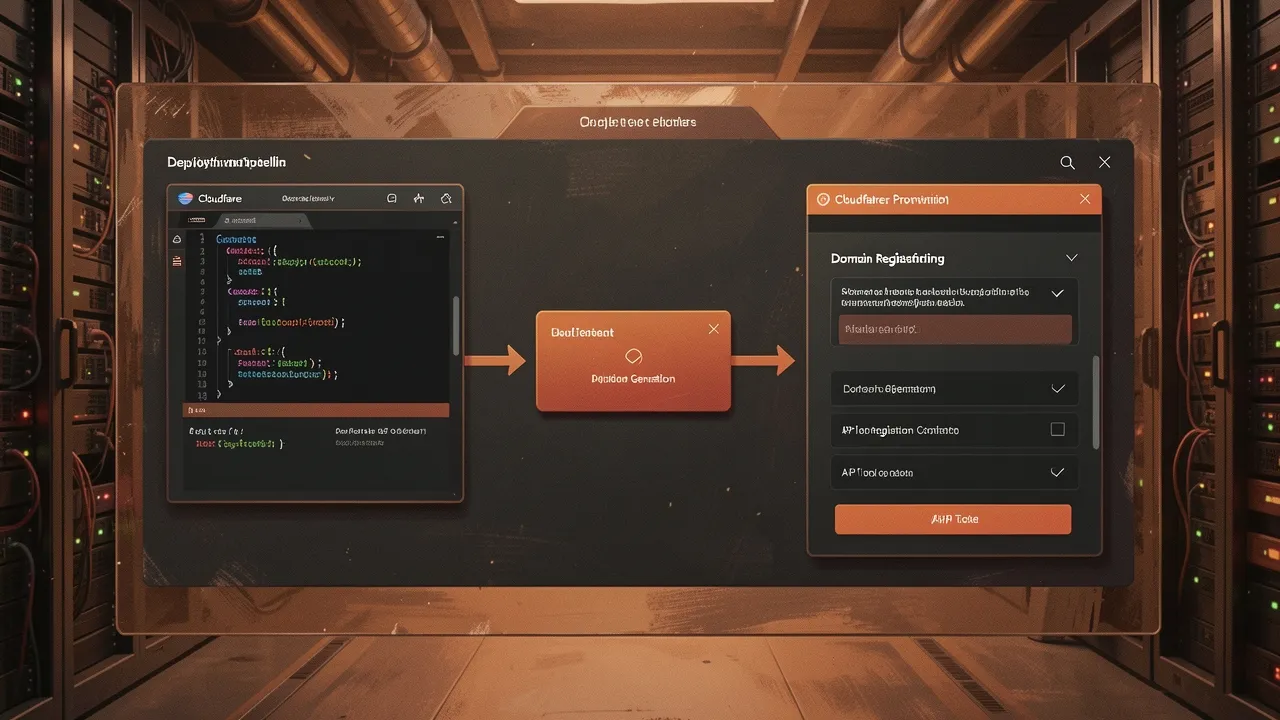

If you've been watching agent capability creep, yesterday's announcement from Cloudflare crossed a real threshold: AI agents can now create Cloudflare accounts, buy domains, and deploy code to production without a human performing any of the steps. Built in partnership with Stripe, the system lets agents handle everything from account creation through API key generation to domain registration. Humans still gate permission: they have to approve the terms of service and set a budget cap. After that, there's no dashboard login, no copy-pasting tokens, no entering credit card details. The agent just builds and ships. What matters isn't the convenience; it's that this standardises a protocol other platforms can adopt. Any service with signed-in users can now let agents provision infrastructure on their behalf. That's the infrastructure story for agentic systems.
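To make the human gate concrete, here is a minimal Python sketch of a budget-capped grant. Every name in it (`AgentGrant`, `authorize`, the amounts in cents) is hypothetical and chosen for illustration. It is not Cloudflare's or Stripe's API, only one way the "approve terms, set a cap, then step back" pattern could be modelled.

```python
from dataclasses import dataclass, field


@dataclass
class AgentGrant:
    """Hypothetical record of what a human approved for an agent."""
    terms_accepted: bool                      # human approved the terms of service
    budget_cap_cents: int                     # maximum total spend the human allowed
    spent_cents: int = 0                      # running total spent by the agent
    log: list = field(default_factory=list)   # audit trail of every request

    def authorize(self, description: str, cost_cents: int) -> bool:
        """Approve a purchase only if terms were accepted and the cap still holds."""
        within_cap = 0 <= cost_cents <= self.budget_cap_cents - self.spent_cents
        approved = self.terms_accepted and within_cap
        if approved:
            self.spent_cents += cost_cents
        self.log.append((description, cost_cents, approved))
        return approved


# Illustrative run: the human sets a 50.00 cap, then the agent acts alone.
grant = AgentGrant(terms_accepted=True, budget_cap_cents=5_000)
domain_ok = grant.authorize("register a domain", 1_200)
hosting_ok = grant.authorize("one month of hosting", 2_500)
over_cap = grant.authorize("a second, pricier domain", 2_000)

print(domain_ok, hosting_ok, over_cap, grant.spent_cents)
```

The third request is refused because it would push total spend past the cap, which is the only point where the human's earlier decision still constrains the agent.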

Validation Moves Into the Lab

Away from deployment, someone's thinking harder about what agents should validate. MIT researchers unveiled WRING, a debiasing technique for vision-language models that avoids the "Whac-A-Mole dilemma," the problem where fixing one bias accidentally amplifies others. The technique rotates coordinates in a model's embedding space so it can no longer distinguish between demographic groups on a specific concept, while leaving other relationships intact. It's post-processing, so it works on pre-trained models without retraining. The immediate application is dermatology AI that classifies skin lesions accurately across all skin tones. But the pattern matters more than the problem domain. This is what responsible scaling looks like: not faster models, but models that don't fail certain populations.
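The description above is high level, so the sketch below is not the WRING algorithm. It swaps in a much simpler linear idea, removing the single direction that separates two group means, purely to show what "post-processing embeddings without retraining" can look like. The toy vectors and function names are invented, and this baseline makes no claim to preserve other relationships the way WRING is said to.

```python
import math


def mean(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def group_direction(group_a, group_b):
    """Unit vector pointing from the mean of group_b to the mean of group_a."""
    diff = [x - y for x, y in zip(mean(group_a), mean(group_b))]
    norm = math.sqrt(dot(diff, diff))
    return [x / norm for x in diff]


def remove_direction(vector, direction):
    """Subtract the component of `vector` that lies along `direction`."""
    scale = dot(vector, direction)
    return [x - scale * d for x, d in zip(vector, direction)]


def gap_along(group_a, group_b, direction):
    """How far apart the two group means are along `direction`."""
    diff = [x - y for x, y in zip(mean(group_a), mean(group_b))]
    return dot(diff, direction)


# Toy 3-D "embeddings" for two groups (made-up numbers).
group_a = [[1.0, 0.2, 0.5], [1.2, 0.1, 0.4]]
group_b = [[-1.0, 0.3, 0.5], [-0.8, 0.0, 0.6]]

direction = group_direction(group_a, group_b)
cleaned_a = [remove_direction(v, direction) for v in group_a]
cleaned_b = [remove_direction(v, direction) for v in group_b]

gap_before = gap_along(group_a, group_b, direction)
gap_after = gap_along(cleaned_a, cleaned_b, direction)
print(round(gap_before, 3), abs(gap_after) < 1e-9)
```

After the projection the two group means no longer differ along the removed direction, and the original model weights are never touched.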

Separately, a Google Cloud Next concept explored agentic AI validating mission-critical systems, specifically a simulated kidney dialysis machine. The idea: instead of humans running each test protocol, an agent observes telemetry, injects faults, verifies safety responses, and reasons through whether the system's interlocks worked. It's still theoretical, but it hints at where validation infrastructure goes when human time is the scarce resource.
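The loop described here (inject a fault, read telemetry, check the safety response) can be sketched in a few lines of Python. Everything below is a toy under stated assumptions: `SimulatedMachine`, its pressure limit, and the single interlock are hypothetical, not a model of a real dialysis device, and the agent's "reasoning" is replaced by a fixed rule.

```python
from dataclasses import dataclass


@dataclass
class SimulatedMachine:
    """Toy stand-in for a device under test; not a real dialysis model."""
    pressure_limit: float = 300.0   # hypothetical interlock threshold
    pressure: float = 120.0         # current simulated pressure reading
    pump_running: bool = True

    def inject_fault(self, pressure: float) -> None:
        """Force the pressure reading to a chosen value."""
        self.pressure = pressure

    def step(self) -> None:
        """One control cycle: the interlock stops the pump above the limit."""
        if self.pressure > self.pressure_limit:
            self.pump_running = False

    def telemetry(self) -> dict:
        return {"pressure": self.pressure, "pump_running": self.pump_running}


def run_fault_test(fault_pressure: float) -> dict:
    """Inject one fault, observe telemetry, and check the safety response."""
    machine = SimulatedMachine()
    machine.inject_fault(fault_pressure)
    machine.step()
    reading = machine.telemetry()
    # Expected behaviour: the pump must stop if and only if the limit is exceeded.
    should_stop = fault_pressure > machine.pressure_limit
    passed = reading["pump_running"] == (not should_stop)
    return {"fault_pressure": fault_pressure, "passed": passed, **reading}


results = [run_fault_test(p) for p in (150.0, 299.0, 350.0)]
all_passed = all(r["passed"] for r in results)
print(all_passed, [r["pump_running"] for r in results])
```

In a real harness the expected behaviour would come from a specification written independently of the device logic; here the two share one rule only to keep the example short.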

The Valuation and the Trial

On the financing side, Anthropic could raise $50 billion at a $900 billion valuation, according to sources. Multiple pre-emptive offers have landed in the $850-900 billion range. Meanwhile, the Musk-OpenAI trial entered its third day with cross-examination heating up; Musk accused lawyers of trying to "trick" him while defending his position that OpenAI abandoned its non-profit mission. Microsoft, for its part, announced a $900 million charge for a voluntary retirement program. About 7% of US employees are eligible: those whose age and years of service add up to 70 or more. The company is burning record capital on data centers (over $40 billion next quarter) while reshaping its workforce for "pace and agility."

The pattern across all three stories is the same: AI systems are moving from answering questions to making decisions that touch infrastructure, medicine, and money. The constraint is shifting from capability to governance. The question is no longer "can we build this" but "can we build it right."