There's a brilliant post on DEV.to about over-engineering. A developer built a RAG pipeline - retrieval-augmented generation, the architecture that lets AI models access specific knowledge - with a separate vector database for embeddings. It was technically correct. It followed best practices. And it created a data-sync nightmare that nearly killed the project.

So they deleted it. Moved everything into Postgres with pgvector. Problem solved.

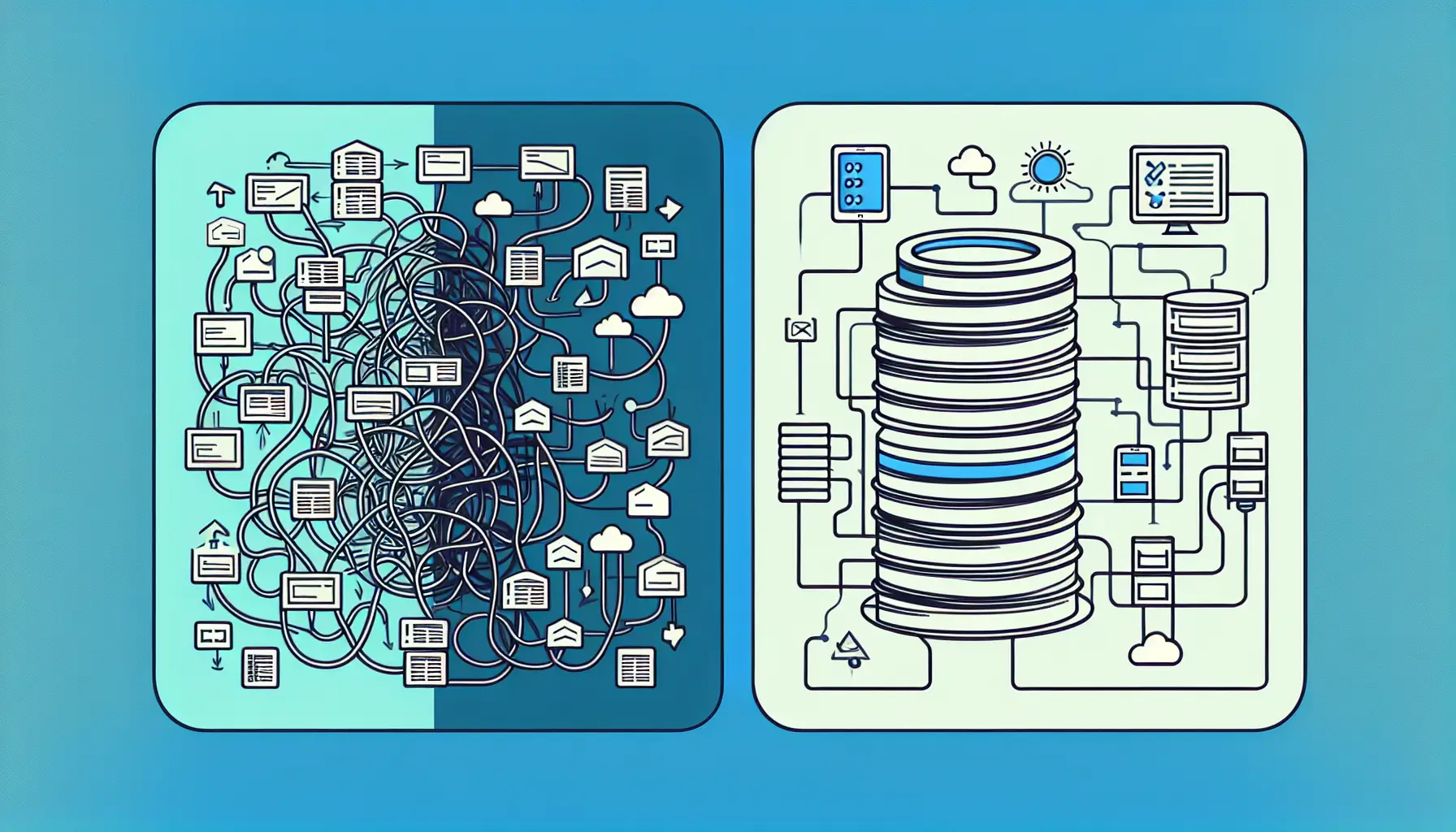

The original architecture

The setup made sense on paper: application database in Postgres, vector embeddings in a purpose-built vector database like Pinecone or Weaviate, microservice handling the AI logic, API layer coordinating everything. Clean separation of concerns. Scalable. Modern.

In practice: data living in two places, sync jobs trying to keep them consistent, edge cases where embeddings referenced deleted records, deployment complexity, debugging across services, and costs mounting faster than expected. The architecture was correct. It just wasn't useful.

The Postgres solution

Postgres with pgvector does vector similarity search directly in your existing database. Same transactions, same backup strategy, same operational knowledge. One source of truth. When you update a record, the embedding updates atomically. No sync jobs. No eventual consistency nightmares.

For most applications - and this is crucial - Postgres performance is fine. Vector search on hundreds of thousands of records with pgvector is fast enough. You don't need microsecond query times for a typical RAG application. You need correctness, consistency, and simplicity.

The cost savings were significant too. One database to run and maintain instead of two. No separate vector database subscription. Simpler infrastructure means smaller bills and fewer things to break at 2am.

The lesson about complexity

This story matters because it's a pattern. AI development encourages architectural complexity. Every tutorial shows microservices. Every vendor pitch includes their specialised infrastructure. The default assumption is that AI workloads need special treatment.

Sometimes they do. If you're Anthropic or OpenAI, you need custom infrastructure. If you're building a consumer app with 100,000 users doing semantic search over their personal documents, you probably don't. Postgres works. It's boring. It's reliable. It's one less thing to learn and maintain.

The question to ask: what problem does this complexity solve? If the answer is "best practices" or "scalability we might need someday", you're probably over-engineering. If the answer is "this specific performance requirement we measured", then maybe the complexity is worth it.

When to choose simple

Start simple. Prove the concept works. Measure where the bottlenecks actually are - not where you think they'll be. Then add complexity only where it solves a measured problem.

For RAG applications specifically: try pgvector first. If you hit genuine performance or scale issues, you'll know exactly what they are and can choose a solution that addresses them. But most applications never hit those issues. They struggle with data consistency, operational complexity, and costs instead.

The developer who wrote this post saved their project by deleting code. That's a rare and valuable skill - knowing when to simplify rather than add. In an industry that rewards complexity and sophistication, choosing the boring solution takes courage. But boring solutions ship. They stay running. They get maintained by the next developer who inherits the codebase.

Sometimes the best architecture decision is the one that lets you focus on building the thing people actually need, rather than the infrastructure underneath it.