A robot walked seven blind people through a building this week and narrated the journey as it went. Not with beeps or pre-recorded instructions, but with full sentences, generated in real time, describing what it could see.

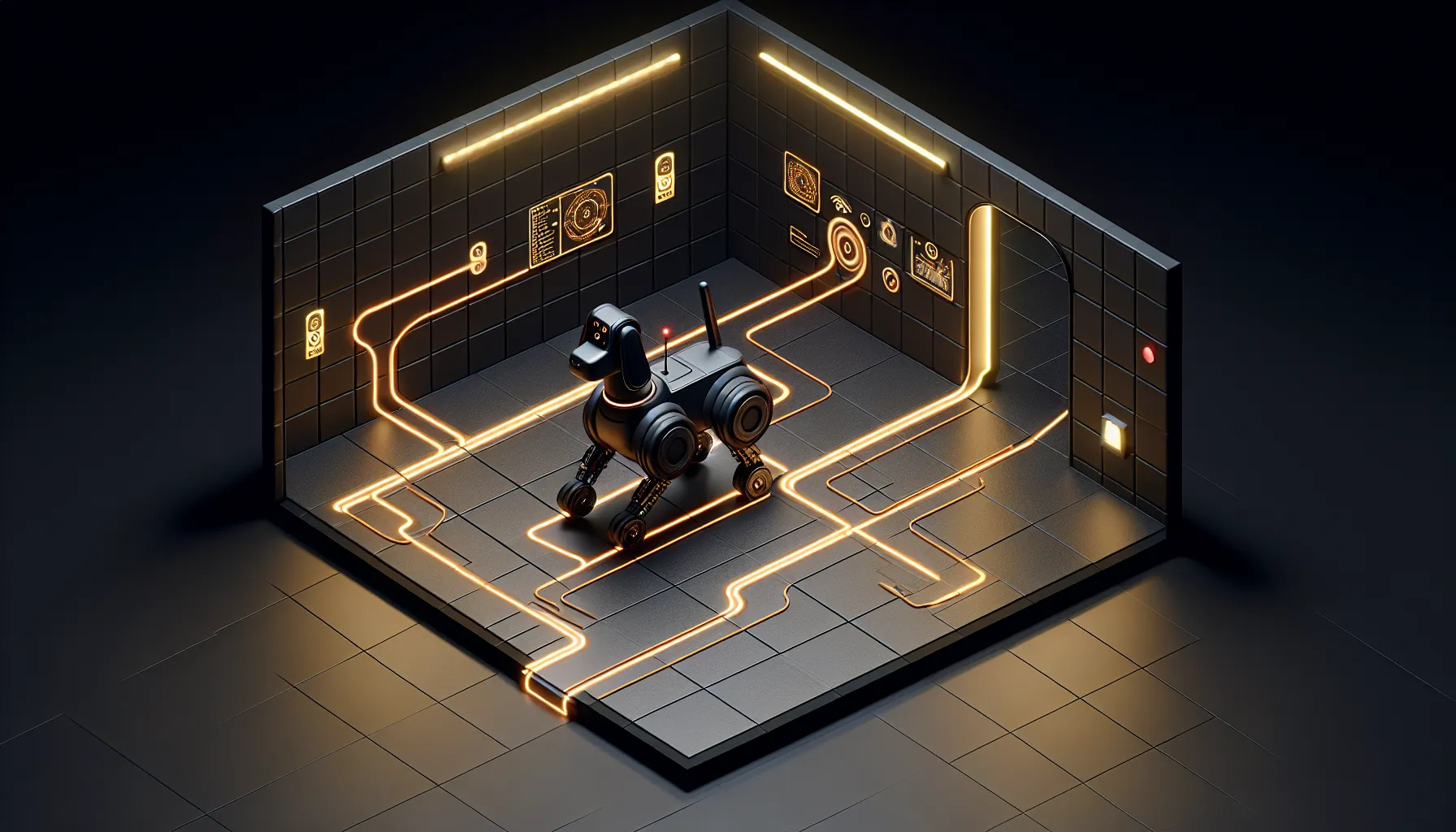

Researchers at Binghamton University built a robotic guide dog that uses large language models (LLMs) to communicate with visually impaired users. The system doesn't just navigate: it explains what it's doing and why, adjusting its commentary based on what the user needs to know.

The Real-Time Narration Problem

Guide dogs are brilliant navigators, but they can't tell you what's ahead. A human guide can say "there's a bin on your left, door coming up on the right" - contextual detail that helps someone build a mental map. Traditional robotic guides haven't managed this. They beep when there's an obstacle. They stop when the path is blocked. But they don't explain.

The Binghamton team used LLMs to bridge that gap. The robot processes visual data from its cameras, identifies objects and obstacles, then generates natural language descriptions of the environment. "We're approaching a corridor. There's a doorway three metres ahead on the left." Real-time narration, adjusted to the user's walking speed and the complexity of the route.
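The article doesn't describe the robot's internals. To illustrate only the final step, turning one detected object into a sentence like the one above, here is a minimal template-based sketch. The `Detection` fields, the 15-degree left/right cut-off and the sentence template are all hypothetical. The Binghamton system uses an LLM for this step, not fixed templates, and an LLM is what lets it vary phrasing and context in ways a template can't.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    # Hypothetical perception output: object label, distance in metres,
    # and bearing in degrees (negative = left of heading, positive = right).
    label: str
    distance_m: float
    bearing_deg: float


def direction_phrase(bearing_deg: float) -> str:
    # Coarse bucketing into three directions; the 15-degree cut-off is an assumption.
    if bearing_deg < -15:
        return "ahead on the left"
    if bearing_deg > 15:
        return "ahead on the right"
    return "straight ahead"


def describe(det: Detection) -> str:
    # Round to whole metres, as a person would say it aloud, and never say "0 metres".
    metres = max(1, round(det.distance_m))
    unit = "metre" if metres == 1 else "metres"
    article = "an" if det.label[0].lower() in "aeiou" else "a"
    return f"There's {article} {det.label} {metres} {unit} {direction_phrase(det.bearing_deg)}."


print(describe(Detection("doorway", 3.2, -40.0)))
```

Running it prints "There's a doorway 3 metres ahead on the left." A template like this can only ever say the same thing the same way. Choosing what to say and how to phrase it for a particular user is the part the researchers hand to the LLM.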

Seven blind participants tested the system by navigating a multi-room environment. The study compared three modes: real-time narration only, route planning only (a description of the path given before the journey), and both combined. Participants overwhelmingly preferred the combination: knowing the overall route and also getting live updates as they moved.

What Actually Worked

The interesting part isn't that the robot can talk; it's when it chooses to. Too much narration becomes noise. Too little leaves gaps that make people anxious. The LLM has to decide what's relevant: a chair slightly off to the side doesn't need mentioning, but a sudden change in floor surface does.
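In the study, the LLM makes this call. As a much simpler stand-in that shows the shape of the trade-off, here is a hypothetical rule-based filter. It is explicitly not the researchers' method. Each kind of observation gets a priority score, and only observations at or above a user-chosen verbosity threshold are spoken. The categories, scores and verbosity levels are invented for illustration and do not come from the study.

```python
from dataclasses import dataclass

# Hypothetical priority per observation type: higher means more worth saying.
PRIORITY = {
    "floor_change": 3,      # e.g. carpet to tile, or a step down
    "obstacle_in_path": 3,
    "door": 2,
    "turn": 2,
    "object_to_side": 1,    # e.g. a chair slightly off the path
}

# Hypothetical user setting: the minimum priority that gets announced.
VERBOSITY = {"minimal": 3, "normal": 2, "detailed": 1}


@dataclass
class Observation:
    kind: str   # one of the PRIORITY keys; unknown kinds score 0
    text: str   # the sentence that would be spoken


def select_announcements(observations: list[Observation], verbosity: str) -> list[str]:
    # Keep the original order, dropping anything below the user's threshold.
    threshold = VERBOSITY[verbosity]
    return [o.text for o in observations if PRIORITY.get(o.kind, 0) >= threshold]


scene = [
    Observation("object_to_side", "Chair on your right."),
    Observation("floor_change", "Floor changes to tile."),
    Observation("door", "Door ahead on the left."),
]
print(select_announcements(scene, "minimal"))
```

With `"minimal"` only the floor change is announced. `"detailed"` announces all three. A fixed table like this can't weigh context, such as whether the chair is actually drifting into the user's path. That flexibility is the reason for handing the decision to a language model instead.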

Participants reported feeling more confident with the combined approach. The route planning gave them a sense of control: they knew where they were going. The real-time narration filled in the details that matter when you're actually moving through a space. "The door is opening now" tells you something "there's a door ahead" doesn't.

This addresses a real problem. Traditional guide dogs take 18-24 months to train and cost upwards of £50,000. They're incredible, but there aren't enough of them. Most visually impaired people who need a guide dog don't have one. A robotic alternative, even one that only works indoors, could fill part of that gap.

The Limits of Indoor Navigation

The system was tested only in controlled indoor environments. Multi-room buildings, yes; unpredictable outdoor spaces, not yet. Weather, uneven pavements and cyclists appearing from side streets are all harder problems than navigating a corridor.

There's also the trust question. A guide dog is a living creature you bond with. A robot is a machine that could malfunction. The participants in this study were testing in a research environment, not relying on the system for daily independence. Real-world deployment means the robot has to be failsafe, not just functional.

But the core insight is sound: LLMs are good at contextual communication, and that's exactly what's missing from assistive robotics. The model doesn't just describe what it sees; it prioritises, filters, and adjusts based on what the user needs to hear. That's a different kind of interface.

What Happens Next

The researchers are refining the system based on feedback. Participants wanted more control over the level of detail: some preferred minimal updates, others wanted constant narration. The LLM can handle that variation, but the system needs better user settings to expose it.

There's also the question of outdoor capability. Indoor navigation is a controlled problem. Outdoor navigation is not. But if the system works reliably indoors, it would be immediately useful in office buildings, shopping centres, hospitals, and anywhere else with complex indoor layouts.

The bigger shift is this: robotics plus LLMs changes what assistive technology can do. Not just automation, but communication. Not just doing the task, but explaining it as it happens. That's the bit that makes this feel like a step change rather than an incremental improvement.

For more on the research, see the full report from The Robot Report.