A developer called Jenavus posted their monthly LLM API bill: $1,200. Then they built a proxy that routes requests based on complexity, sending simple tasks to cheap models and complex ones to premium models. New monthly bill: $480. Same quality. 60% savings. One line of code changed in their application.

The tool is called TokenRouter, and the concept is straightforward: not every request needs GPT-4. Most chatbot queries, customer service responses, and content generation tasks can run on smaller, faster, cheaper models without anyone noticing. But routing intelligently between models is fiddly work - until someone builds middleware that does it automatically.

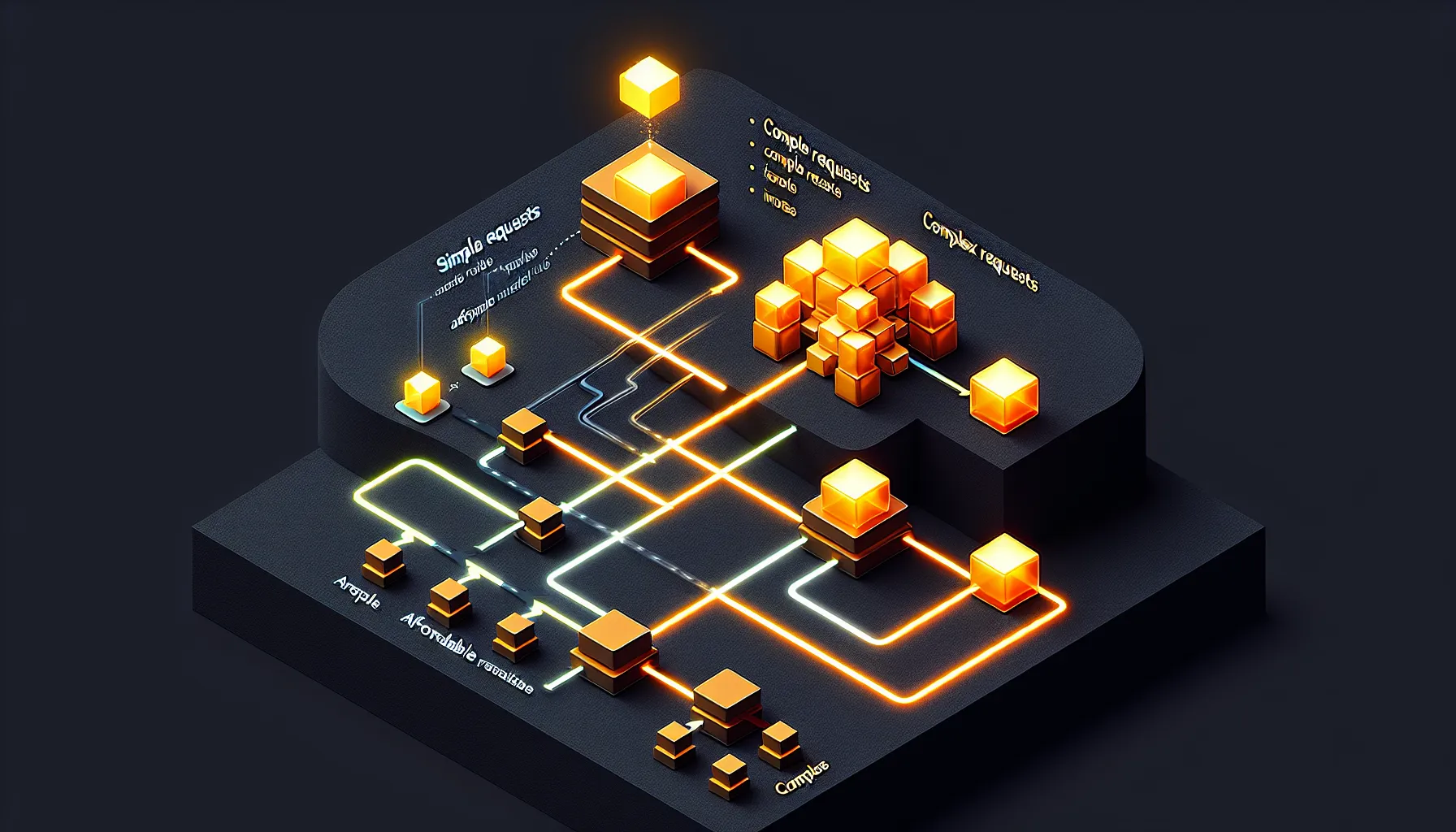

How Smart Routing Works

TokenRouter sits between your application and the model APIs. When a request comes in, it analyses the prompt and classifies it by complexity. Simple query? Route to GPT-3.5 Turbo or Claude Haiku. Complex reasoning task? Send it to GPT-4 or Claude Opus. Multi-step analysis? Maybe Gemini Pro.
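
As a rough illustration of the classify-then-route step, the sketch below swaps the lightweight classifier model for a keyword-and-length heuristic. The tier names, marker words, word-count threshold, and model identifiers are all assumptions made for the example, not TokenRouter's actual logic.

```python
from dataclasses import dataclass

# Hypothetical tier-to-model mapping; the model names are placeholders.
MODEL_BY_TIER = {
    "simple": "gpt-3.5-turbo",
    "complex": "gpt-4",
}

# Hypothetical phrases that hint at multi-step reasoning.
REASONING_MARKERS = ("step by step", "prove", "analyse", "analyze", "compare", "debug")


@dataclass
class RoutingDecision:
    tier: str
    model: str


def classify(prompt: str, long_prompt_words: int = 200) -> str:
    """Crude stand-in for a classifier model: returns "simple" or "complex"."""
    text = prompt.lower()
    if any(marker in text for marker in REASONING_MARKERS):
        return "complex"
    if len(text.split()) > long_prompt_words:
        return "complex"
    return "simple"


def route(prompt: str) -> RoutingDecision:
    """Pick a model for the prompt based on its complexity tier."""
    tier = classify(prompt)
    return RoutingDecision(tier=tier, model=MODEL_BY_TIER[tier])
```

A real router would replace `classify` with a trained model, but the shape stays the same: one cheap decision up front, then a lookup.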

The classification happens in milliseconds using a lightweight model that costs almost nothing to run. The savings come from the fact that premium models cost 10-30x more per token than cheaper alternatives. If you can route even 60% of your traffic to cheaper models, the maths changes fast.
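
To make that maths concrete, here is a small sketch of the blended cost. It assumes a simplified model: cost scales with token volume, the classifier and any retries cost nothing, and the only inputs are the share of volume rerouted and the price gap. The function names are made up for the example.

```python
def blended_cost_ratio(cheap_fraction: float, price_multiple: float) -> float:
    """Cost relative to sending everything to the premium model.

    cheap_fraction: share of token volume routed to the cheaper model (0..1).
    price_multiple: how many times more the premium model costs per token.
    """
    if not 0.0 <= cheap_fraction <= 1.0:
        raise ValueError("cheap_fraction must be between 0 and 1")
    if price_multiple <= 0:
        raise ValueError("price_multiple must be positive")
    premium_part = 1.0 - cheap_fraction
    cheap_part = cheap_fraction / price_multiple
    return premium_part + cheap_part


def savings(cheap_fraction: float, price_multiple: float) -> float:
    """Fraction of the all-premium bill that routing saves."""
    return 1.0 - blended_cost_ratio(cheap_fraction, price_multiple)


for multiple in (10, 30):
    print(f"{multiple}x gap, 60% rerouted: {savings(0.6, multiple):.0%} saved")
```

Under these assumptions, rerouting 60% of volume saves 54% at a 10x gap and 58% at a 30x gap. A full 60% cut in the bill would take rerouting roughly 62-67% of token volume.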

Jenavus's numbers show the impact. They were running everything through premium models because that's the safe default - you know it'll work. But safe defaults are expensive. TokenRouter gave them intelligent routing without having to manually categorise every request type or maintain complex logic themselves.

The "one line of code" claim is slightly marketing-speak - you still need to configure routing rules and set up the proxy - but the core point holds. Once it's running, your application doesn't change. You just point your API calls at the proxy instead of directly at OpenAI or Anthropic, and it handles the rest.
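
The "point your calls at the proxy" step might look like the sketch below, which builds an OpenAI-style chat request without sending it. It assumes the proxy exposes an OpenAI-compatible endpoint. The `LLM_BASE_URL` variable name and the `localhost:8080` address are hypothetical, not documented TokenRouter settings.

```python
import json
import os
import urllib.request

# Direct upstream base URL. The proxy address used below is a hypothetical
# local endpoint chosen for illustration.
DEFAULT_BASE_URL = "https://api.openai.com/v1"
BASE_URL = os.environ.get("LLM_BASE_URL", DEFAULT_BASE_URL)


def build_chat_request(prompt: str, model: str, api_key: str,
                       base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Same payload and headers either way; only the host the request goes to changes.
direct = build_chat_request("Hello", "gpt-4", "sk-placeholder",
                            base_url=DEFAULT_BASE_URL)
proxied = build_chat_request("Hello", "gpt-4", "sk-placeholder",
                             base_url="http://localhost:8080/v1")
```

Keeping the base URL in configuration rather than in code is what makes the switch, and any later rollback, a one-line change.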

Why This Matters Now

LLM costs are the hidden operational expense that's catching everyone by surprise. You build a prototype, costs are negligible. You launch to users, suddenly you're spending four figures monthly. You scale, and that bill becomes five or six figures before you've worked out how to optimise.

Most teams respond by trying to cache responses, reduce context windows, or batch requests. Those help, but they're marginal gains. Intelligent model routing is structural - it changes the cost profile of your entire system without requiring you to rethink your architecture.

The timing matters because model pricing is diverging. Premium models are getting better but not significantly cheaper. Budget models are getting dramatically cheaper AND substantially better. GPT-3.5 Turbo is now absurdly cheap for what it can do. Claude Haiku is fast and capable for most tasks. The gap between "good enough" and "best possible" is narrowing in quality but widening in price.

That creates an arbitrage opportunity. If you can correctly identify which requests need premium models and which don't, you capture most of the value at a fraction of the cost. TokenRouter automates that arbitrage.

The Practical Constraints

Smart routing isn't a free lunch. The classification step adds latency - usually 50-150ms - which matters for real-time applications. And the routing decisions are probabilistic, which means occasionally a complex request gets sent to a cheap model and returns a subpar response.

Jenavus mentions monitoring and fallback logic in their implementation. If a cheap model fails or returns low-confidence output, TokenRouter retries with a premium model. That safety net costs tokens but prevents bad user experiences.
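
A minimal sketch of that retry-on-fallback pattern follows. The model calls are passed in as plain functions so the example runs without any API. The `confidence` score and the 0.7 threshold are assumptions, since the post does not say how low-confidence output is detected.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class ModelReply:
    text: str
    confidence: float  # hypothetical score in [0, 1]; real APIs may not return one


# A caller is any function that takes a prompt and returns a ModelReply.
ModelCaller = Callable[[str], ModelReply]


def complete_with_fallback(prompt: str, cheap: ModelCaller, premium: ModelCaller,
                           min_confidence: float = 0.7) -> Tuple[ModelReply, str]:
    """Try the cheap model first; escalate on an error or a low-confidence reply.

    Returns the reply and which tier produced it ("cheap" or "premium").
    """
    try:
        reply: Optional[ModelReply] = cheap(prompt)
    except Exception:
        reply = None  # treat any failure of the cheap call as a reason to escalate
    if reply is not None and reply.confidence >= min_confidence:
        return reply, "cheap"
    # The escalated request is billed on top of the cheap attempt.
    return premium(prompt), "premium"
```

Logging the returned tier for each request gives you the escalation rate, which is the number to watch when adjusting routing rules.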

There's also a cold-start problem. The router needs data to learn your usage patterns. Initially it is conservative, routing more traffic to premium models than necessary. Over time, as it learns which requests are genuinely complex, the savings increase. Jenavus's 60% reduction came after a few weeks of tuning.

None of this makes it less useful. It just means you need to implement it thoughtfully, monitor the results, and adjust routing rules based on what you're seeing. Like most infrastructure improvements, the gains come from iterative refinement, not plug-and-play magic.

What This Signals

Intelligent model routing is becoming standard infrastructure, not a clever hack. The same way CDNs became standard for web delivery or load balancers became standard for high-traffic applications, proxy-based model routing is heading toward "table stakes" territory for production LLM applications.

That's good news for builders. It means the tooling is maturing. The early days of LLM applications required you to solve every infrastructure problem yourself. Now, open-source projects and lightweight services are handling the common patterns - routing, caching, rate limiting, cost management - so you can focus on building the actual product.

TokenRouter is one example. There are others emerging. LiteLLM for unified API interfaces. RouteLLM for model selection. Helicone for observability and caching. The middleware layer is getting built, and that's what makes the technology genuinely usable at scale.

For anyone running LLM-powered features in production, the takeaway is simple: if you're sending every request to the same premium model, you're probably overpaying. Intelligent routing is cheap to implement and, once tuned, can pay for itself quickly. Jenavus's 60% savings are a single data point, but given the pricing gap they look closer to typical than exceptional. The only question is whether you're leaving that money on the table.