NVIDIA just made a significant move in robotics - not with a single breakthrough product, but with something potentially more valuable: standardised infrastructure. At CES, they released a suite of open physical AI models and frameworks designed to help companies build autonomous systems faster and more safely. This isn't just about making robots smarter. It's about making robot development less fragmented.

The Omniverse Foundation

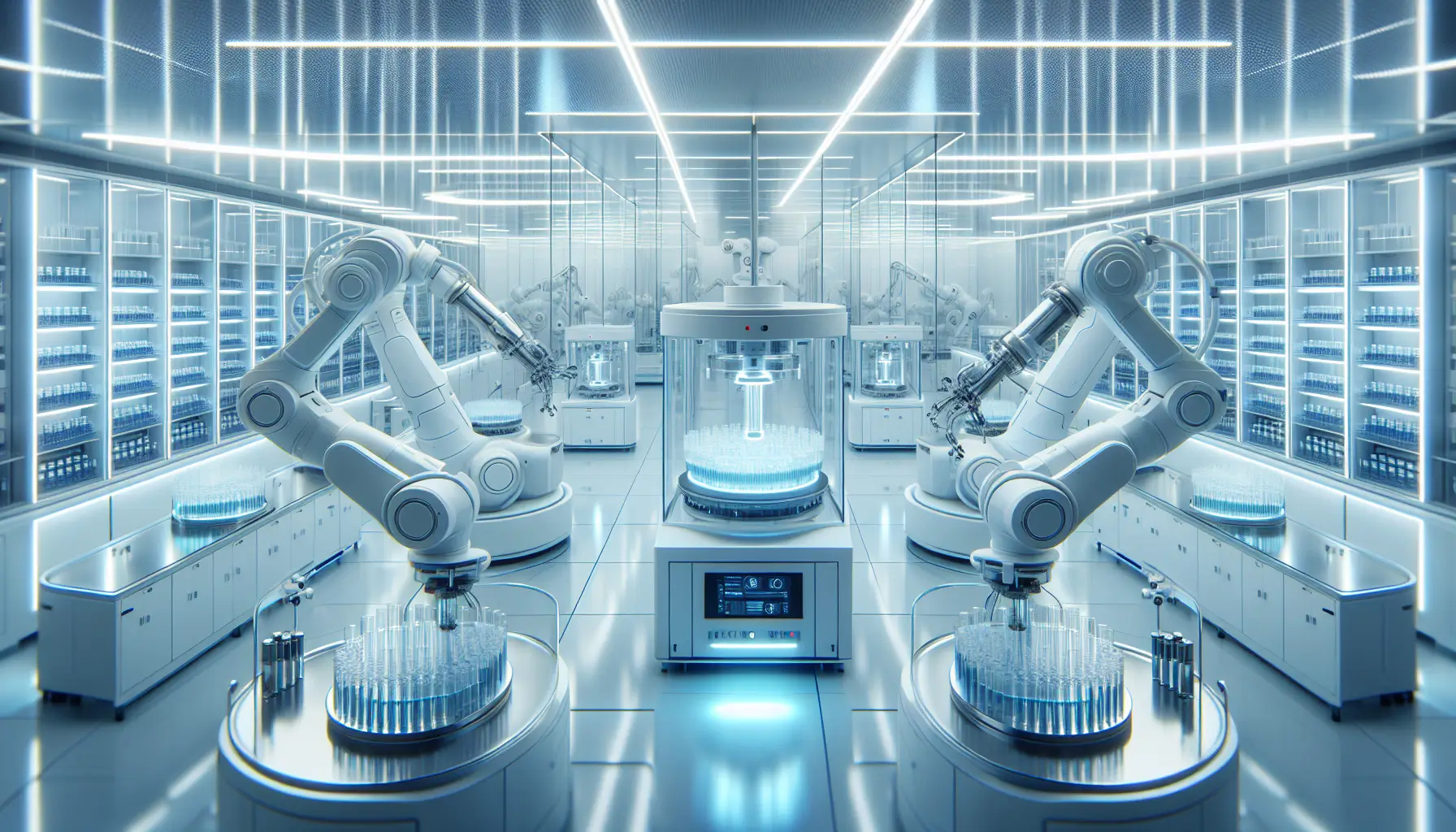

The tools centre on NVIDIA Omniverse, a simulation platform that lets developers test robots in virtual environments before deploying them in the real world. Think of it as a physics-accurate sandbox where you can run thousands of scenarios without risking expensive hardware or human safety. Companies like Caterpillar, LEM Surgical, and NEURA Robotics are already using these frameworks to train autonomous vehicles, surgical systems, and industrial robots.

What makes this interesting is the emphasis on open models. Instead of proprietary black boxes, NVIDIA is providing shared frameworks that different companies can build on. This approach could accelerate development across the industry - when everyone speaks the same technical language, integration becomes simpler and safer.

From Simulation to Edge Deployment

The release spans three key areas: simulation, where robots learn in virtual environments; deployment, where those learned behaviours transfer to real hardware; and edge computing, where robots process data locally rather than relying on cloud connectivity. That last piece matters more than it sounds. A surgical robot or autonomous vehicle can't afford a slow network round trip or a dropped connection in the middle of a decision. Edge AI keeps decisions fast and reliable.
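To make the latency point concrete, here is a minimal back-of-the-envelope sketch in Python. It assumes a robot whose control loop must produce one decision per cycle, and checks whether inference plus any network round trip fits inside that cycle. The function name and every number below are hypothetical, chosen for illustration only; none of them come from NVIDIA's release or from any real system.

```python
def fits_budget(control_hz: float, inference_ms: float, network_rtt_ms: float = 0.0) -> bool:
    """Return True if one decision completes within a single control period."""
    period_ms = 1000.0 / control_hz  # time available per decision, in milliseconds
    return inference_ms + network_rtt_ms <= period_ms


# Hypothetical figures, for illustration only.
CONTROL_HZ = 100.0  # a 100 Hz loop leaves 10 ms per decision

# On-device inference: slower model execution, but no network hop.
edge_ok = fits_budget(CONTROL_HZ, inference_ms=6.0)

# Cloud inference: faster model execution, plus an assumed 40 ms round trip.
cloud_ok = fits_budget(CONTROL_HZ, inference_ms=2.0, network_rtt_ms=40.0)

print(f"edge fits budget:  {edge_ok}")
print(f"cloud fits budget: {cloud_ok}")
```

Under these assumed numbers the on-device path fits the 10 ms cycle and the cloud path does not, even though the cloud model itself runs faster. Real budgets depend on the hardware, the model, and the network, so treat this as a way to frame the question rather than a measurement.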

LEM Surgical's adoption is particularly telling. Surgical robotics demands precision, safety, and regulatory compliance - exactly the kind of high-stakes environment where standardised tools and rigorous simulation matter most. If these frameworks hold up in surgery, they'll likely work for less critical applications too.

What This Means for Builders

For developers and engineers, this release does something practical: it lowers the barrier to entry for robotics projects. Building autonomous systems has traditionally required deep expertise in hardware, simulation, and AI - often with tooling built from scratch. NVIDIA's bet is that by providing robust, open infrastructure, more teams can focus on solving specific problems rather than reinventing foundational tools.

The risk, of course, is that open frameworks become the new standard - and NVIDIA's hardware becomes the default choice for running them. But if the trade-off is faster, safer robots reaching real-world deployment, that might be a reasonable bargain.

Worth watching: how quickly adoption spreads beyond early partners, and whether these tools genuinely reduce development time or just shift complexity elsewhere. Standardisation is valuable, but only if it actually makes building easier.