Twenty-five percent more packages moving through the same warehouse, at least in simulation. No new robots, no new infrastructure - just a system that learned to think ahead.

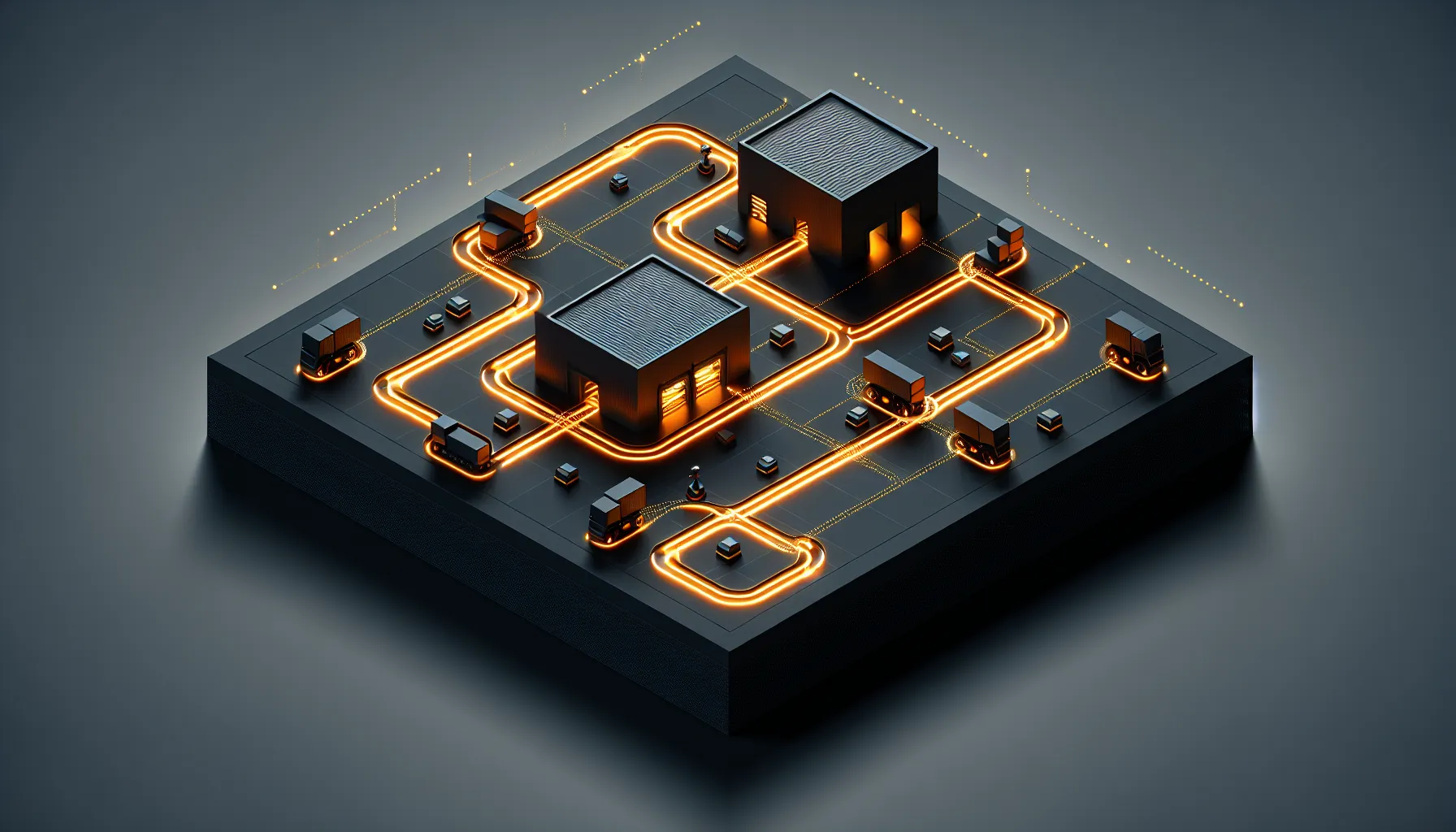

MIT researchers built a hybrid reinforcement learning system that tackles the coordination problem in warehouse robotics. Not the picking problem, not the routing problem - the much harder problem of which robot gets priority when paths cross. Their system predicts congestion before it happens and makes split-second decisions about who waits and who goes.

The Problem Nobody Talks About

Warehouse robots are brilliant at following paths. They're terrible at negotiating with each other when those paths intersect. Traditional algorithms use fixed rules - first come, first served, or priority based on task type. Both create the same outcome: robots sitting in queues whilst the robots behind them queue too.

The MIT approach treats priority assignment as a learning problem. Their deep reinforcement learning system watches how traffic flows, notices which decisions create bottlenecks, and learns which robot should yield at each intersection. It's not following rules. It's predicting consequences.
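To make "priority assignment as a learning problem" concrete, here is a minimal sketch. It is not the MIT system: that one uses deep reinforcement learning, whereas this swaps in a one-step tabular value update so it fits on a page. The state (queue lengths in two lanes), the action names, and the reward (negative number of robots left waiting) are all assumptions made for illustration.

```python
import random

# Hypothetical action names: which lane gets right of way at the intersection.
ACTIONS = ("lane_a_first", "lane_b_first")


def reward(state, action):
    """Toy reward (an assumption): every robot in the yielding lane waits one step."""
    queue_a, queue_b = state
    waiting = queue_b if action == "lane_a_first" else queue_a
    return -float(waiting)


def train(episodes=5000, alpha=0.1, epsilon=0.2, max_queue=4, seed=0):
    """Learn a value for each (state, action) pair from trial and error."""
    rng = random.Random(seed)
    q = {}
    for _ in range(episodes):
        # Sample a random traffic situation: queue length in each lane.
        state = (rng.randint(1, max_queue), rng.randint(1, max_queue))
        values = q.setdefault(state, {a: 0.0 for a in ACTIONS})
        # Epsilon-greedy: mostly exploit the best known action, sometimes explore.
        if rng.random() < epsilon:
            action = rng.choice(ACTIONS)
        else:
            action = max(ACTIONS, key=values.get)
        # One-step update: nudge the estimate towards the observed reward.
        values[action] += alpha * (reward(state, action) - values[action])
    return q


def policy(q, state):
    """Pick the action with the highest learned value for this state."""
    values = q.get(state)
    if values is None:
        return ACTIONS[0]
    return max(ACTIONS, key=values.get)


q_table = train()
print(policy(q_table, (4, 1)))  # the longer queue in lane A should win priority
print(policy(q_table, (1, 4)))  # and the reverse when lane B is longer
```

Nobody wrote a rule saying "the longer queue goes first". The policy picks that up from the reward alone, which is the idea the paragraph above describes, in miniature.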

The 25% throughput gain comes from something deceptively simple: the system learned that sometimes the best decision is counter-intuitive. Let the slower robot go first if it prevents three faster robots from queuing later. Delay one pickup to keep the main corridor clear for the next five minutes.
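The arithmetic behind "let the slower robot go first" can be sketched with a hand-written cost model. This is an illustration, not the learned policy: the `Robot` fields, the crossing times, and the assumption that a yielding robot blocks everyone queued behind it for the full wait are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Robot:
    name: str
    crossing_time: float  # seconds needed to clear the intersection
    followers: int        # robots queued behind this one in the same lane


def delay_if_yields(yielder, other):
    """Robot-seconds lost if `yielder` waits while `other` crosses.

    Assumption: the yielder and everyone queued behind it are held up
    for exactly as long as `other` takes to cross.
    """
    return other.crossing_time * (1 + yielder.followers)


def choose_first(a, b):
    """Give priority to whichever robot causes less total delay by going first."""
    cost_a_first = delay_if_yields(b, a)  # b yields to a
    cost_b_first = delay_if_yields(a, b)  # a yields to b
    return a if cost_a_first <= cost_b_first else b


slow = Robot("slow", crossing_time=3.0, followers=3)
fast = Robot("fast", crossing_time=1.0, followers=0)

print(delay_if_yields(fast, slow))     # slow goes first: 3.0 robot-seconds lost
print(delay_if_yields(slow, fast))     # fast goes first: 4.0 robot-seconds lost
print(choose_first(slow, fast).name)   # slow
```

With these made-up numbers, holding the slow robot for one second costs four robot-seconds because three others are stuck behind it. Letting it go first costs only three.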

Why This Matters Beyond Warehouses

This is coordination intelligence, not just warehouse optimisation. Any system where multiple autonomous agents share physical space hits the same problem - self-driving cars at intersections, drones in shared airspace, hospital delivery robots in corridors.

The hybrid approach is what makes it work. A purely learned policy offers no guarantees in rare edge cases it never saw in training. Purely rule-based algorithms can't adapt to changing traffic patterns. MIT's system uses reinforcement learning to make the priority calls, but constrains those decisions within safety rules that never get overridden.
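One common way to combine a learned policy with hard rules is to filter the options first and let the learned scores choose only among what is left. The sketch below assumes that structure. The source does not say how the MIT system enforces its constraints, and the function name, the scores, and the "exit cell must be free" rule are hypothetical.

```python
from typing import Callable, Optional, Sequence


def choose_with_safety(
    candidates: Sequence[str],
    learned_score: Callable[[str], float],
    is_safe: Callable[[str], bool],
) -> Optional[str]:
    """Return the highest-scoring candidate that passes the hard safety rule.

    The safety check runs first and cannot be outvoted by a high score.
    Returns None when no candidate is safe, meaning every robot holds.
    """
    allowed = [c for c in candidates if is_safe(c)]
    if not allowed:
        return None
    return max(allowed, key=learned_score)


# Hypothetical learned priority scores for three robots at one intersection.
scores = {"r1": 0.9, "r2": 0.7, "r3": 0.2}
# Hypothetical hard rule: never release a robot whose exit cell is occupied.
exit_blocked = {"r1"}

winner = choose_with_safety(
    candidates=list(scores),
    learned_score=scores.get,
    is_safe=lambda robot: robot not in exit_blocked,
)
print(winner)  # r2: r1 scored higher but fails the safety rule
```

The design choice is the ordering: the learned component only ever ranks options the rules have already cleared, so a bad prediction can cost time but cannot break a constraint.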

What's fascinating is how little the system needs to learn. It doesn't need to understand the entire warehouse operation. It just needs to recognise the pattern: this intersection configuration, at this time, with these robot trajectories, predicts congestion in thirty seconds. Make a different priority call now, prevent the jam later.
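The "these trajectories predict congestion in thirty seconds" idea can be approximated without any learning by projecting planned paths forward and counting overlaps. The sketch below does exactly that. It is a hand-written stand-in for the learned predictor, and the trajectory format (timestamped cells on a shared time grid), the cell names, and the capacity of one robot per cell are assumptions.

```python
from collections import Counter


def predict_congestion(trajectories, horizon_s=30, capacity=1):
    """Return (time, cell) pairs where planned occupancy exceeds capacity.

    trajectories: {robot_id: [(t_seconds, cell), ...]} on a shared time grid.
    Only waypoints within the next `horizon_s` seconds are considered.
    """
    load = Counter()
    for plan in trajectories.values():
        # Use a set so one robot never counts twice for the same slot.
        slots = {(t, cell) for t, cell in plan if 0 <= t <= horizon_s}
        load.update(slots)
    return sorted(slot for slot, count in load.items() if count > capacity)


# Hypothetical plans: r1 and r2 both expect to be in cell X7 at t = 20 s.
plans = {
    "r1": [(0, "A1"), (10, "A2"), (20, "X7"), (30, "B1")],
    "r2": [(0, "C3"), (10, "C2"), (20, "X7"), (30, "D1")],
    "r3": [(0, "E1"), (40, "X7")],  # beyond the horizon, so ignored
}

print(predict_congestion(plans))  # [(20, 'X7')]
```

A learned predictor would generalise from patterns rather than match exact timestamps, but the input and output have the same shape: current trajectories in, a short list of trouble spots out, early enough to change a priority call.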

The Real-World Test

Twenty-five percent better throughput in simulation is promising. The question is whether it holds in physical deployment, where robots don't move in perfectly predictable lines and sensors occasionally glitch.

The MIT team tested their system against traditional decentralised algorithms and centralised control systems. Decentralised approaches hit congestion fast because no robot has the full picture. Centralised systems work until the coordinator becomes the bottleneck. The hybrid model sidesteps both problems - local decision-making informed by pattern learning across the whole system.

For warehouse operators, this changes the ROI calculation. The cost isn't new hardware. It's the compute to run the learning system and the time to train it on your specific layout. But 25% more throughput from your existing robot fleet, if the simulated gain holds up on the floor? That pays back fast.

The broader implication is about intelligence placement. You don't need smarter robots. You need smarter coordination. The physical agents can stay relatively simple if the system managing their interactions gets more sophisticated. That's a different architecture from the one most robotics companies are building towards.

This is the pattern worth watching: reinforcement learning not to replace human planning, but to handle the micro-decisions that humans can't process fast enough. Let the algorithm decide who yields at intersection seven. Let the human decide whether to add a third picking zone.