Today's Overview

A curious reversal is happening in engineering: as AI gets better at writing code, the bottleneck has shifted from writing the code to specifying it. The engineers winning right now aren't the fastest typists; they're the ones who can write specifications so clear that an AI system has nothing to guess at. One engineer spent five years shipping commerce platforms before realizing the expensive problems were never missing semicolons. They were assumptions that looked like requirements, requirements that shifted between meetings, and behaviors that "everyone knew" except the people building the system.

Writing specs that machines can execute

When specifications are ambiguous, every engineer invents their own version of the truth, and every stakeholder assumes the old rules still apply. That same ambiguity confuses machines too. The engineers who learned to translate ambiguity into specification (what goes in, what comes out, what happens at the edges) just had their value compounded. They're shipping faster than ever. The ones still fighting assumptions are shipping the same problems, just with a faster typing assistant.
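To make "what goes in, what comes out, what happens at the edges" concrete, here is a minimal sketch of a specification written as an executable contract. Everything in it is hypothetical and chosen only for illustration: the `DiscountSpec` and `apply_discount` names, the 50% cap, and the half-up rounding rule do not come from any real commerce platform.

```python
from dataclasses import dataclass
from decimal import Decimal, ROUND_HALF_UP


@dataclass(frozen=True)
class DiscountSpec:
    """Hypothetical contract for a checkout discount (illustrative only)."""
    max_percent: Decimal = Decimal("50")  # edge: larger discounts are rejected
    cent: Decimal = Decimal("0.01")       # output is always rounded to cents


def apply_discount(price: Decimal, percent: Decimal,
                   spec: DiscountSpec = DiscountSpec()) -> Decimal:
    # What goes in: a non-negative price and a percent within the allowed range.
    if price < 0:
        raise ValueError("price must be non-negative")
    if not Decimal("0") <= percent <= spec.max_percent:
        raise ValueError(f"percent must be between 0 and {spec.max_percent}")
    # What comes out: the reduced price, rounded half-up to the nearest cent.
    reduced = price * (Decimal("100") - percent) / Decimal("100")
    return reduced.quantize(spec.cent, rounding=ROUND_HALF_UP)


print(apply_discount(Decimal("19.99"), Decimal("10")))  # 17.99
```

The point is less the arithmetic than the fact that every decision a reader might otherwise guess at (the cap, the rounding, the negative-price case) is written down where both a colleague and a code assistant can see it.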

This matters because it reveals what AI is actually bad at. It's brilliant at implementation. It's terrible at the work engineers always pretended was easy: sitting in a meeting with stakeholders, asking the follow-up question that reveals what nobody voiced, pushing back when requirements contradict what another team shipped. That's the real moat now. Not how fast you code. How clearly you think.

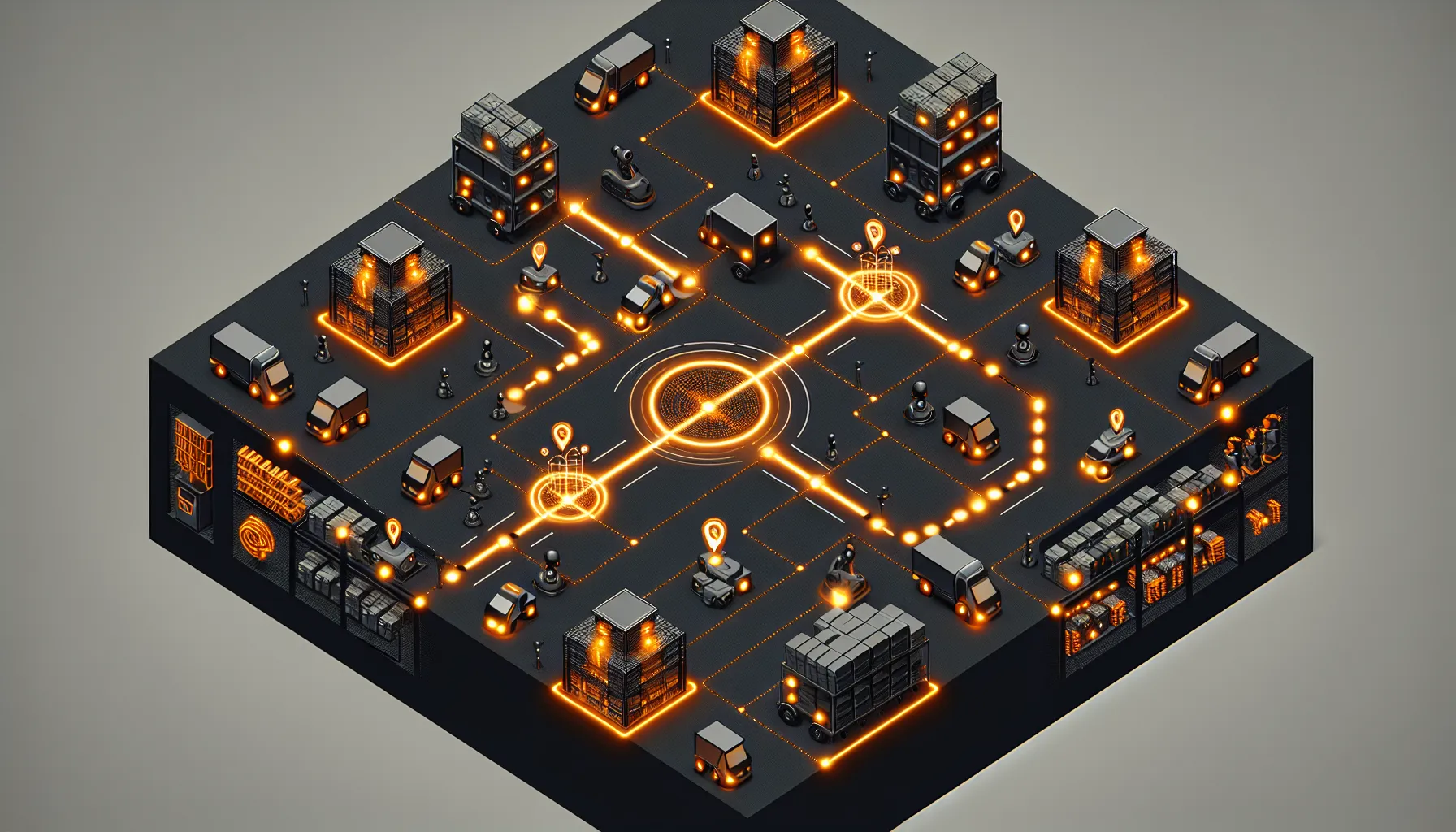

Physical systems learn what humans miss

In warehouses, MIT researchers trained a system to keep hundreds of robots moving smoothly by learning which robots should get priority at each moment. The hybrid approach, in which deep reinforcement learning decides priorities and a fast classical algorithm then routes each robot, achieved 25% better throughput than traditional methods. The system learns to see congestion patterns that human-designed algorithms never accounted for. In simulations that mirrored real warehouse layouts, it rerouted robots in advance to avoid bottlenecks entirely. Even small improvements matter: a 2% gain in throughput in a giant warehouse compounds into millions in recovered efficiency.
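The two-stage shape of that approach (decide priorities, then route each robot with a classical planner) can be sketched in a few lines. This is not the MIT system: `stand_in_priority` is a hand-written placeholder where a learned policy would go, the planner is a plain breadth-first search over (cell, time) states, and the 4-by-3 grid and robot names are made up for the example.

```python
from collections import deque


def plan_path(start, goal, width, height, busy_cells, busy_moves, horizon):
    """Classical step: breadth-first search over (cell, time) states."""
    queue = deque([[start]])
    seen = {(start, 0)}
    while queue:
        path = queue.popleft()
        cell, t = path[-1], len(path) - 1
        # Accept the goal only if no earlier-planned robot needs that cell later.
        if cell == goal and all((goal, k) not in busy_cells
                                for k in range(t, horizon + 1)):
            return path
        if t == horizon:
            continue
        x, y = cell
        for nxt in ((x, y), (x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if not (0 <= nxt[0] < width and 0 <= nxt[1] < height):
                continue
            if (nxt, t + 1) in seen or (nxt, t + 1) in busy_cells:
                continue  # already explored, or another robot will be there
            if (nxt, cell, t) in busy_moves:
                continue  # would swap places head-on with another robot
            seen.add((nxt, t + 1))
            queue.append(path + [nxt])
    return None  # no conflict-free path within the horizon


def stand_in_priority(robot):
    """Placeholder for a learned policy (assumption): longer trips go first."""
    (sx, sy), (gx, gy) = robot["start"], robot["goal"]
    return abs(sx - gx) + abs(sy - gy)


def plan_all(robots, width, height, horizon=30):
    busy_cells, busy_moves, plans = set(), set(), {}
    # Stage 1: order robots by priority. Stage 2: route them one at a time.
    for robot in sorted(robots, key=stand_in_priority, reverse=True):
        path = plan_path(robot["start"], robot["goal"], width, height,
                         busy_cells, busy_moves, horizon)
        plans[robot["name"]] = path
        if path is None:
            continue
        # Reserve the path, then keep the robot parked at its goal.
        padded = path + [path[-1]] * (horizon + 1 - len(path))
        for t, cell in enumerate(padded):
            busy_cells.add((cell, t))
            if t + 1 < len(padded):
                busy_moves.add((cell, padded[t + 1], t))
    return plans


robots = [
    {"name": "A", "start": (0, 1), "goal": (3, 1)},
    {"name": "B", "start": (3, 1), "goal": (0, 1)},
    {"name": "C", "start": (1, 0), "goal": (1, 2)},
]
plans = plan_all(robots, width=4, height=3)
for name, path in plans.items():
    print(name, path)
```

In this toy layout, robots A and B face each other in the same corridor, so whichever is planned second has to detour. Changing the priority order changes who detours, which is the lever a learned prioritizer would be pulling.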

Separately, a developer benchmarked a custom ROS 2 localization filter against the standard package on real-world data. The custom system achieved 5.5 meters absolute trajectory error. The standard package achieved 23.4 meters, more than four times worse. The difference wasn't hardware or tuning tricks. It was real-time bias estimation. The standard system trusts every GPS fix equally and uses fixed noise values. The custom system continuously estimates IMU bias and adapts its noise model, so it knows when a measurement doesn't fit and how much to trust it. That's the kind of precision that matters when a robot is trying to navigate terrain it's never seen before.
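A toy version of those two ideas, estimating a sensor bias online and trusting a measurement less when it does not fit, can be written as a two-state Kalman filter in one dimension. This is a sketch under stated assumptions, not the developer's filter and not any ROS 2 API: the `BiasAwareFilter` class, the noise values, the chi-square gate of 6.63, and the rule for inflating measurement noise are all illustrative choices.

```python
class BiasAwareFilter:
    """1-D position filter that also estimates a velocity-sensor bias."""

    def __init__(self, r=1.0, q_pos=1e-4, q_bias=1e-6, gate=6.63):
        self.pos, self.bias = 0.0, 0.0
        # Covariance entries for the state [position, bias].
        self.p_pp, self.p_pb, self.p_bb = 1.0, 0.0, 1.0
        self.r, self.q_pos, self.q_bias, self.gate = r, q_pos, q_bias, gate

    def predict(self, measured_velocity, dt):
        # Dead-reckon with the bias-corrected velocity.
        self.pos += (measured_velocity - self.bias) * dt
        # P = F P F^T + Q with F = [[1, -dt], [0, 1]].
        self.p_pp += -2 * dt * self.p_pb + dt * dt * self.p_bb + self.q_pos
        self.p_pb -= dt * self.p_bb
        self.p_bb += self.q_bias

    def update(self, gps_position):
        innovation = gps_position - self.pos
        # Normalized innovation squared: how surprising is this fix?
        nis = innovation ** 2 / (self.p_pp + self.r)
        # Assumed adaptation rule: inflate noise for fixes beyond the gate.
        r_eff = self.r * max(1.0, nis / self.gate)
        s = self.p_pp + r_eff
        k_pos, k_bias = self.p_pp / s, self.p_pb / s
        self.pos += k_pos * innovation
        self.bias += k_bias * innovation
        # P = (I - K H) P with H = [1, 0].
        self.p_bb -= k_bias * self.p_pb
        self.p_pb *= 1 - k_pos
        self.p_pp *= 1 - k_pos
        return r_eff


def demo(steps=200, true_velocity=1.0, true_bias=0.5, outlier_at=100):
    """Simulated run: biased velocity sensor plus one bad GPS fix."""
    f = BiasAwareFilter()
    true_pos = 0.0
    for k in range(steps):
        true_pos += true_velocity
        f.predict(true_velocity + true_bias, dt=1.0)
        gps = true_pos + (50.0 if k == outlier_at else 0.0)
        f.update(gps)
    return f, true_pos


f, true_pos = demo()
print(f"estimated bias: {f.bias:.3f}, position error: {f.pos - true_pos:.3f}")
```

A filter with fixed noise values would apply its normal gain to the 50-meter outlier and jump toward it. Here the inflated noise shrinks the gain for that one fix, while the bias estimate keeps converging on the simulated 0.5 offset.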

These systems share something: they're built on clear contracts between what goes in and what should come out. The specification is the foundation. The implementation, whether it's code, training, or calibration, is what makes that specification real.