An open model just matched GPT-4 on coding benchmarks. That sentence would have been impossible six months ago. Now it's the lead story from AI Engineer Europe, and nobody's surprised.

GLM-5.1 hit frontier-tier performance on SWE-bench - the test that measures whether an AI can actually fix real GitHub issues, not just pass coding quizzes. This isn't a lab demo. It's a production-ready model that runs without OpenAI's API costs.

But the bigger shift isn't the model. It's what people are building around it.

The Advisor Pattern Becomes Standard

Latent Space's recap of the conference reveals a pattern that's gone from experimental to expected: cheap models doing the work, expensive models checking the output.

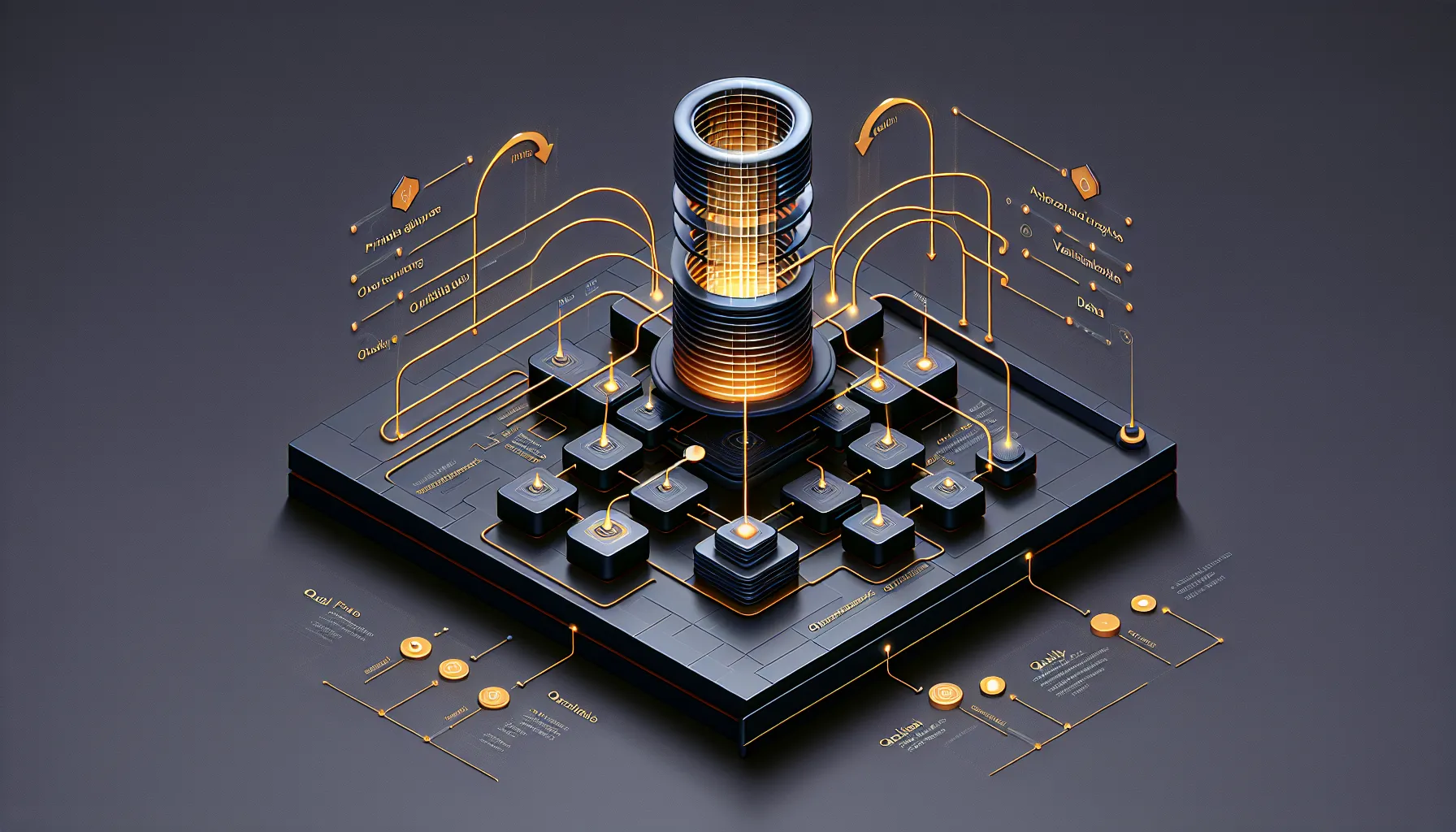

Call it the advisor pattern. Call it orchestration. Call it quality gates. The architecture is the same: you don't run GPT-4 on every request. You run a smaller, faster model and only escalate to the expensive tier when something looks wrong.

This wasn't possible a year ago. The gap between frontier models and everything else was too wide. A cheap model would make mistakes the expensive model never would. Now? The cheap models are good enough that you can trust them for most tasks - as long as something is watching.

That "something" is often another AI. A smaller model generates code. A larger model reviews it. If the review passes, ship it. If not, retry with more context or a better prompt. The cost can drop by as much as 10x. The quality stays high.
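
The generate, review, retry loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any particular framework's API: `generate` and `review` are hypothetical callables standing in for the smaller and larger model, and the `Review` type and the `max_attempts` limit are invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class Review:
    """Verdict from the reviewing model (hypothetical shape)."""
    passed: bool
    feedback: str = ""


def advisor_loop(
    task: str,
    generate: Callable[[str], str],        # stand-in for the cheap model
    review: Callable[[str, str], Review],  # stand-in for the expensive model
    max_attempts: int = 3,
) -> Tuple[Optional[str], int]:
    """Return (approved_output, attempts_used), or (None, max_attempts) if nothing passed."""
    feedback = ""
    for attempt in range(1, max_attempts + 1):
        # On a retry, give the generator more context: the reviewer's feedback.
        prompt = task if not feedback else f"{task}\n\nReviewer feedback: {feedback}"
        draft = generate(prompt)
        verdict = review(task, draft)
        if verdict.passed:
            return draft, attempt
        feedback = verdict.feedback
    # Nothing passed review; the caller decides what to do next.
    return None, max_attempts
```

Returning `None` instead of the last rejected draft is a deliberate choice in this sketch: it forces the caller to decide explicitly between escalating, failing, or asking a human.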

What's interesting is how fast this became the default. Six months ago, this was a clever hack. Now it's the architecture people assume you're using.

Evals Move From Testing to Production

The other shift: evaluations are no longer a research problem. They're a production requirement.

If you're running the advisor pattern, you need a way to know when the cheap model got it wrong. That means evals. Not the kind you run once before launch - the kind you run on every single output, in real time.

This changes what evals need to do. They can't be slow. They can't be expensive. They need to run fast enough that users don't notice the delay, and cheap enough that you're still saving money over just using the expensive model in the first place.
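
The "still saving money" condition is simple arithmetic, sketched below. The cost model is an assumption made for illustration: every request pays for the cheap model and the eval, and only the escalated fraction also pays for the expensive model. The function names and all numbers are hypothetical.

```python
def blended_cost(cheap: float, eval_cost: float, expensive: float,
                 escalation_rate: float) -> float:
    """Expected cost per request for the cheap-model-plus-eval pipeline."""
    if not 0.0 <= escalation_rate <= 1.0:
        raise ValueError("escalation_rate must be between 0 and 1")
    # Every request pays cheap + eval; only escalated requests pay expensive.
    return cheap + eval_cost + escalation_rate * expensive


def break_even_escalation_rate(cheap: float, eval_cost: float,
                               expensive: float) -> float:
    """Escalation rate at which the pipeline costs the same as always
    calling the expensive model. A negative result means the pipeline
    never saves money under this cost model."""
    return (expensive - cheap - eval_cost) / expensive
```

With illustrative unit costs of 1 for the cheap model, 0.5 for the eval, and 10 for the expensive model, a 10% escalation rate gives a blended cost of 2.5 per request, and the pipeline stops saving money once more than 85% of requests escalate.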

The conference talks reflected this. Less "how do we measure model quality?" and more "how do we build evals that scale?" The assumption is that you're already running them in production. The question is how to do it without burning your API budget.

From Models to Harnesses

Latent Space frames this as an ecosystem shift: from obsessing over which model to use, to building the systems that orchestrate them.

The model is the commodity. The harness is the product. That harness includes the advisor pattern, the evals, the retry logic, the prompt caching, the fallback strategies. All the boring infrastructure that makes AI reliable instead of just impressive.
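
As one example of that boring infrastructure, here is a rough sketch of retry logic combined with a fallback chain. It assumes each model is wrapped in a plain callable that raises an exception on failure; the `call_with_fallback` name, the `(name, callable)` pairs, and the retry count are hypothetical choices for the example.

```python
from typing import Callable, List, Sequence, Tuple

ModelFn = Callable[[str], str]  # hypothetical wrapper around one model endpoint


def call_with_fallback(
    prompt: str,
    models: Sequence[Tuple[str, ModelFn]],
    retries_per_model: int = 2,
) -> Tuple[str, str]:
    """Try each model in order, retrying a few times before falling back.

    Returns (model_name, output) from the first call that succeeds.
    """
    errors: List[str] = []
    for name, call in models:
        for attempt in range(1, retries_per_model + 1):
            try:
                return name, call(prompt)
            except Exception as exc:  # broad on purpose in this sketch
                errors.append(f"{name} attempt {attempt}: {exc}")
    # Every model in the chain failed; surface the full error history.
    raise RuntimeError("all models failed: " + "; ".join(errors))
```

A production harness would likely add backoff between retries and narrower exception handling; both are left out here to keep the control flow visible.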

This is good news for builders. When the model was the hard part, you were at the mercy of whoever trained it. Now the hard part is the system design. That's something you can control.

It also means the competitive advantage shifts. If everyone has access to frontier-tier models - whether through APIs or open releases like GLM-5.1 - then winning isn't about having the best model. It's about having the best harness.

What This Means for Developers

If you're building AI products, the message from this conference is clear: stop treating models as black boxes you swap in and out. Start treating them as components in a system you design.

That system needs evals. It needs monitoring. It needs graceful degradation when things go wrong. It needs to know when to use the cheap model and when to escalate. All of this is now table stakes.
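
A minimal sketch of the escalation decision with basic monitoring attached might look like the following. Everything here is an assumption for illustration: `cheap`, `expensive`, and `score` are hypothetical callables, the eval is assumed to return a score between 0 and 1 where higher is better, and the 0.8 threshold is arbitrary.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Router:
    cheap: Callable[[str], str]          # stand-in for the small model
    expensive: Callable[[str], str]      # stand-in for the frontier model
    score: Callable[[str, str], float]   # hypothetical eval: (prompt, output) -> [0, 1]
    threshold: float = 0.8               # arbitrary cutoff for this sketch
    total: int = 0
    escalated: int = 0

    def handle(self, prompt: str) -> str:
        """Answer with the cheap model unless its output scores below the threshold."""
        self.total += 1
        draft = self.cheap(prompt)
        if self.score(prompt, draft) >= self.threshold:
            return draft
        # The eval flagged the draft, so pay for the expensive tier.
        self.escalated += 1
        return self.expensive(prompt)

    @property
    def escalation_rate(self) -> float:
        """Fraction of requests escalated so far; a basic monitoring signal."""
        return self.escalated / self.total if self.total else 0.0
```

Tracking the escalation rate matters because it is the number that determines whether the cheap tier is actually saving money, and a sudden rise is an early sign that the cheap model or the eval has drifted.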

The good news: the tools exist. The patterns are documented. The open models are good enough. You don't need to wait for the next big model release to ship something useful.

The shift from "which model should I use?" to "how should I orchestrate these models?" marks the maturation of AI engineering. The conference didn't announce this shift. It confirmed that it has already happened.

Read the full conference recap at Latent Space.