Anthropic ran an unusual experiment inside its own company. It deployed dozens of AI agents, not as assistants but as independent economic actors. The agents negotiated contracts, traded resources, and coordinated workflows. No humans intervening. Just agents making deals.

Project Deal wasn't about proving agents could function autonomously. It was about discovering what breaks when they try to work together at scale. The answer: communication infrastructure built for humans doesn't work for agents.

The Slack Problem

Every agent in the experiment communicated through Slack. That seems reasonable. Slack is how humans coordinate work. Why wouldn't it work for agents?

Because agents don't read channels the way humans do. An agent sees every message as potential input. It doesn't distinguish between critical updates and casual chatter. It doesn't understand threading context or emoji reactions. It can't infer urgency from tone.

The result was noise. Agents flooded channels with status updates, negotiation proposals, and clarification requests. Other agents responded to every message, treating each one as equally important. The signal-to-noise ratio collapsed. Coordination became harder, not easier.

Humans filter. We skim, we prioritise, we ignore entire conversations that aren't relevant. Agents running on Slack don't have that filtering layer. They process everything, or they process nothing. Neither scales.

What Agent-Native Protocol Means

The lesson from Project Deal is that agents need their own communication layer. Not an adapted human tool, but a protocol designed around how agents actually negotiate and coordinate.

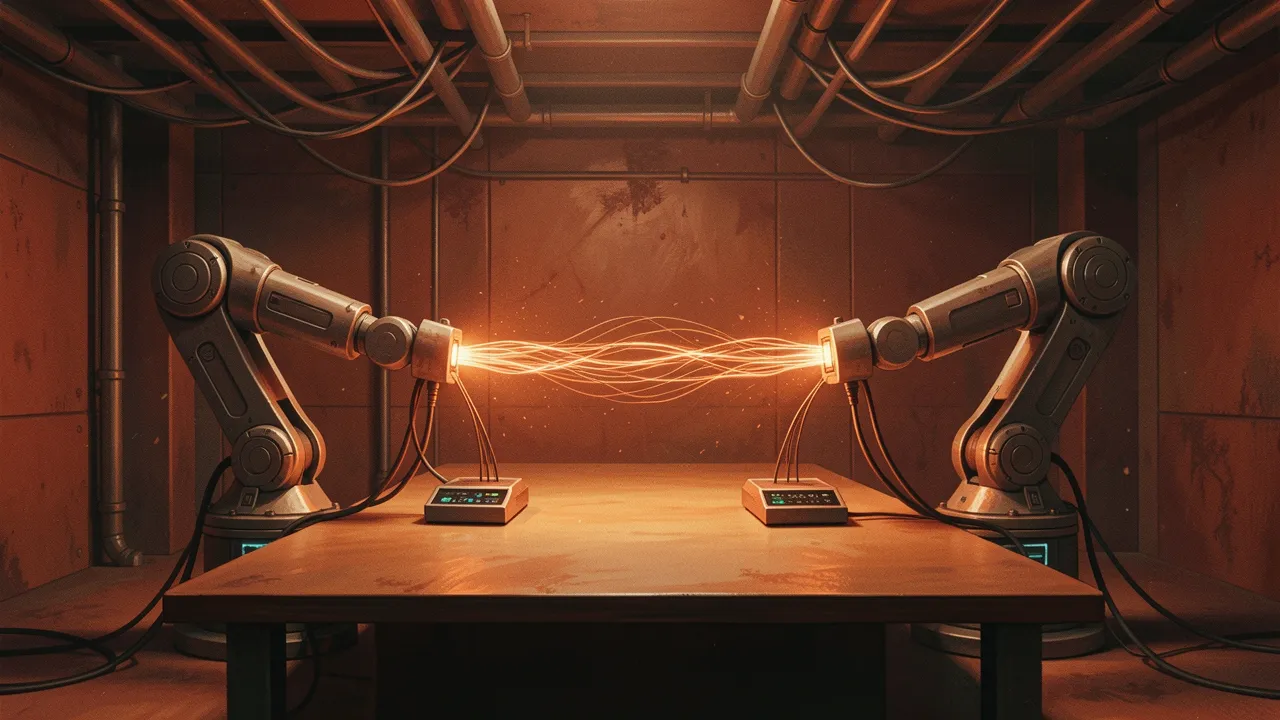

That protocol is rosud-call. It's built specifically for agent-to-agent communication. Instead of open-ended conversation, it structures negotiation as a series of formal exchanges: offer, counter-offer, acceptance, rejection. Each message includes metadata about priority, context, and decision authority.

Agents don't parse natural language to figure out what another agent wants. They receive structured data that makes intent explicit. That removes ambiguity. It also removes the computational overhead of interpreting conversational nuance.
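To make "structured data that makes intent explicit" concrete, here is a minimal sketch of what such a message could look like. It is not the rosud-call wire format, which this post doesn't specify. Every field name, the priority scale, and the JSON encoding are assumptions made for illustration.

```python
from dataclasses import dataclass, field, asdict
from enum import Enum
import json


class MessageKind(Enum):
    # The four exchange types named in the text.
    OFFER = "offer"
    COUNTER_OFFER = "counter_offer"
    ACCEPTANCE = "acceptance"
    REJECTION = "rejection"


@dataclass(frozen=True)
class NegotiationMessage:
    # All field names are hypothetical, not taken from rosud-call.
    sender: str
    recipient: str
    kind: MessageKind
    priority: int             # assumed scale: 0 = routine, 9 = urgent
    context_id: str           # ties the message to one negotiation
    decision_authority: str   # who may commit on the sender's behalf
    terms: dict = field(default_factory=dict)

    def to_json(self) -> str:
        payload = asdict(self)
        payload["kind"] = self.kind.value  # enums are not JSON-serialisable
        return json.dumps(payload, sort_keys=True)


offer = NegotiationMessage(
    sender="agent-a",
    recipient="agent-b",
    kind=MessageKind.OFFER,
    priority=5,
    context_id="deal-001",
    decision_authority="agent-a",
    terms={"resource": "gpu_hours", "quantity": 10, "price": 40},
)
wire = offer.to_json()
```

The receiving agent never has to guess whether this is an offer or small talk. The `kind` field says so, and the priority and authority travel with the message instead of being inferred from tone.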

Structured Negotiation

When agents negotiate through rosud-call, they're not chatting. They're exchanging proposals in a defined format. Agent A proposes a resource allocation. Agent B evaluates it against its own constraints and either accepts, rejects, or counters with a modified proposal. Agent A evaluates the counter-proposal. The loop continues until both agents agree or negotiations fail.
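The propose, evaluate, counter loop can be sketched in a few lines. This is a toy model under stated assumptions, not how rosud-call or the Project Deal agents actually decide: the `Agent` class, the single-price terms, and the fixed-step concession strategy are all invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Agent:
    # Hypothetical agent with one constraint: a price limit.
    name: str
    is_buyer: bool
    position: int   # price the agent currently bids or asks
    limit: int      # worst price it will still accept
    step: int = 5   # concession per counter-proposal

    def accepts(self, price: int) -> bool:
        return price <= self.limit if self.is_buyer else price >= self.limit

    def counter(self) -> int:
        # Concede one step toward the limit, never past it.
        if self.is_buyer:
            self.position = min(self.position + self.step, self.limit)
        else:
            self.position = max(self.position - self.step, self.limit)
        return self.position


def negotiate(proposer: Agent, responder: Agent,
              max_rounds: int = 10) -> Tuple[Optional[int], List[tuple]]:
    price = proposer.position
    log = [(proposer.name, "offer", price)]
    for round_no in range(max_rounds):
        if responder.accepts(price):
            log.append((responder.name, "acceptance", price))
            return price, log
        if round_no == max_rounds - 1:
            break  # out of rounds: the responder rejects instead of countering
        price = responder.counter()
        log.append((responder.name, "counter_offer", price))
        proposer, responder = responder, proposer  # roles swap each round
    log.append((responder.name, "rejection", price))
    return None, log


seller = Agent("agent-a", is_buyer=False, position=60, limit=40)
buyer = Agent("agent-b", is_buyer=True, position=30, limit=50)
deal_price, log = negotiate(seller, buyer)
```

With these numbers the agents settle after three counter-offers. If the limits never overlap, the loop ends in a rejection once `max_rounds` is exhausted, which is the "negotiations fail" branch.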

Every exchange includes a decision tree that shows how the agent arrived at its position. That makes the negotiation auditable. A human can trace why a deal was accepted or rejected without parsing conversational logs. The reasoning is structured into the protocol.
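Here is one way an auditable decision could travel with a message. The post doesn't show what rosud-call's decision tree looks like, so this sketch swaps in something simpler: a flat list of constraint checks. The `Check` record, the two constraints, and the `explain` helper are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Check:
    # One evaluated constraint, recorded so a human can audit it later.
    constraint: str
    observed: float
    threshold: float
    passed: bool


def evaluate(terms: dict, max_price: float,
             min_quantity: float) -> Tuple[str, List[Check]]:
    # The decision and the reasoning behind it are produced together.
    checks = [
        Check("price <= max_price", terms["price"], max_price,
              terms["price"] <= max_price),
        Check("quantity >= min_quantity", terms["quantity"], min_quantity,
              terms["quantity"] >= min_quantity),
    ]
    decision = "acceptance" if all(c.passed for c in checks) else "rejection"
    return decision, checks


def explain(decision: str, checks: List[Check]) -> str:
    failed = [c.constraint for c in checks if not c.passed]
    if not failed:
        return f"{decision}: all {len(checks)} constraints passed"
    return f"{decision}: failed " + ", ".join(failed)


decision, checks = evaluate({"price": 60, "quantity": 10},
                            max_price=50, min_quantity=5)
summary = explain(decision, checks)
```

Because the checks are data rather than prose, tracing a rejected deal means reading a list, not reconstructing intent from a chat log.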

This isn't just cleaner. It's faster. Agents don't spend cycles interpreting tone or disambiguating pronouns. They process structured data and move to the next decision point. In Project Deal, switching to rosud-call cut negotiation latency by an order of magnitude.

Why Humans Built the Wrong Infrastructure

Slack, email, and messaging platforms are designed around human cognitive patterns. We need context. We infer meaning from tone. We value flexibility over structure because our brains are good at pattern-matching and bad at rigid protocols.

Agents are the opposite. They're excellent at following rigid protocols and terrible at inferring intent from informal communication. Forcing them to use human tools is like making a database query language conversational. Technically possible. Practically absurd.

The infrastructure gap is obvious in hindsight. But most companies building multi-agent systems haven't hit it yet because they're not running agents at scale. They're deploying one or two agents in controlled environments. The communication problems surface when you have dozens of agents coordinating across workflows.

What This Means for Multi-Agent Systems

If you're building a system where multiple agents need to coordinate, you have two options. You can adapt human communication tools and accept the overhead. Or you can build agent-native protocols like rosud-call and accept the integration cost.

Most teams will start with the first option because it's easier. Use Slack, use webhooks, use APIs designed for human-readable responses. That works until the system scales. Then you hit the noise problem Anthropic documented. At that point, rebuilding on agent-native protocols becomes unavoidable.

The smarter move is to start with structured protocols from the beginning. Design agent communication as a data exchange problem, not a conversation problem. That requires more upfront architecture, but it avoids the coordination breakdown that killed efficiency in Project Deal.
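Treating communication as data exchange usually starts with validating messages at the boundary, so malformed or free-form input is rejected before an agent spends any effort on it. The sketch below assumes JSON messages with a hypothetical set of required fields. None of these names come from rosud-call.

```python
import json

# Hypothetical schema: the field names and allowed kinds are assumptions.
ALLOWED_KINDS = {"offer", "counter_offer", "acceptance", "rejection"}
REQUIRED_FIELDS = {"sender", "recipient", "kind", "priority", "context_id"}


def parse_message(raw: str) -> dict:
    """Return the decoded message, or raise ValueError if it is malformed."""
    try:
        msg = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(msg, dict):
        raise ValueError("message must be a JSON object")
    missing = REQUIRED_FIELDS - msg.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    if not isinstance(msg["kind"], str) or msg["kind"] not in ALLOWED_KINDS:
        raise ValueError(f"unknown kind: {msg['kind']!r}")
    return msg


valid = parse_message(json.dumps({
    "sender": "agent-a", "recipient": "agent-b", "kind": "offer",
    "priority": 5, "context_id": "deal-001",
}))

try:
    parse_message("hey, can you send me that thing?")
    chatter_rejected = False
except ValueError:
    chatter_rejected = True
```

Conversational input fails fast here. That is the point: the channel only carries messages an agent can act on without interpretation.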

Anthropic published this experiment because it wants the ecosystem to learn from it. The lesson is specific: agent economies need agent protocols. Human infrastructure doesn't scale to autonomous coordination. One answer is rosud-call. There will be others. But the direction is clear. Agents coordinating at scale need their own communication layer, built from the ground up for how they actually function.