Today's Overview

Saturday afternoon, and there's something interesting happening at the intersection of physical automation, AI employment, and how we actually build software. Not the flashy breakthroughs, but the practical realities that matter when you're trying to deploy things that work in the real world.

Retail robots are finally earning their safety credentials

Simbe's Tally shelf-scanning robot has just achieved UL 3300 certification - a big deal that most people will gloss over. This standard essentially says: yes, this thing can safely operate in a public space with humans around. Tally passed over 40 safety tests covering real-time detection, protective braking, and reliable performance on actual retail flooring. No simulation gymnastics. Real stores. Real people walking past. For retailers deploying autonomous systems at scale, this certification is the difference between "interesting prototype" and "legally defensible asset." It's the unglamorous work of building trust that actually matters.

AI engineering might be the last job left standing

Meanwhile, the AI jobs conversation is heating up. Latent Space is making a serious argument that "AI Engineer will be the last job" - and they're not joking around anymore. The reasoning goes like this: software engineering already accounts for 50% of Claude's use cases. With coding agents improving rapidly, the argument is that this share keeps climbing toward 80%, 90%, and beyond. But here's the twist - because AI engineers are the ones building the tools that automate everything else, theirs is the role that stays in demand the longest. Every other profession gets optimized away first. It's Jevons Paradox, but with careers: the more efficient the tools become, the more demand there is for the people who build them.

The practical takeaway? If you're thinking about your role in an AI-augmented world, being the person who understands how to orchestrate these systems - how to build agents, define their constraints, deploy them safely - is genuinely different from being in a field that's already 70% automatable. It's not hype. It's structural.

Code that lives long requires structure

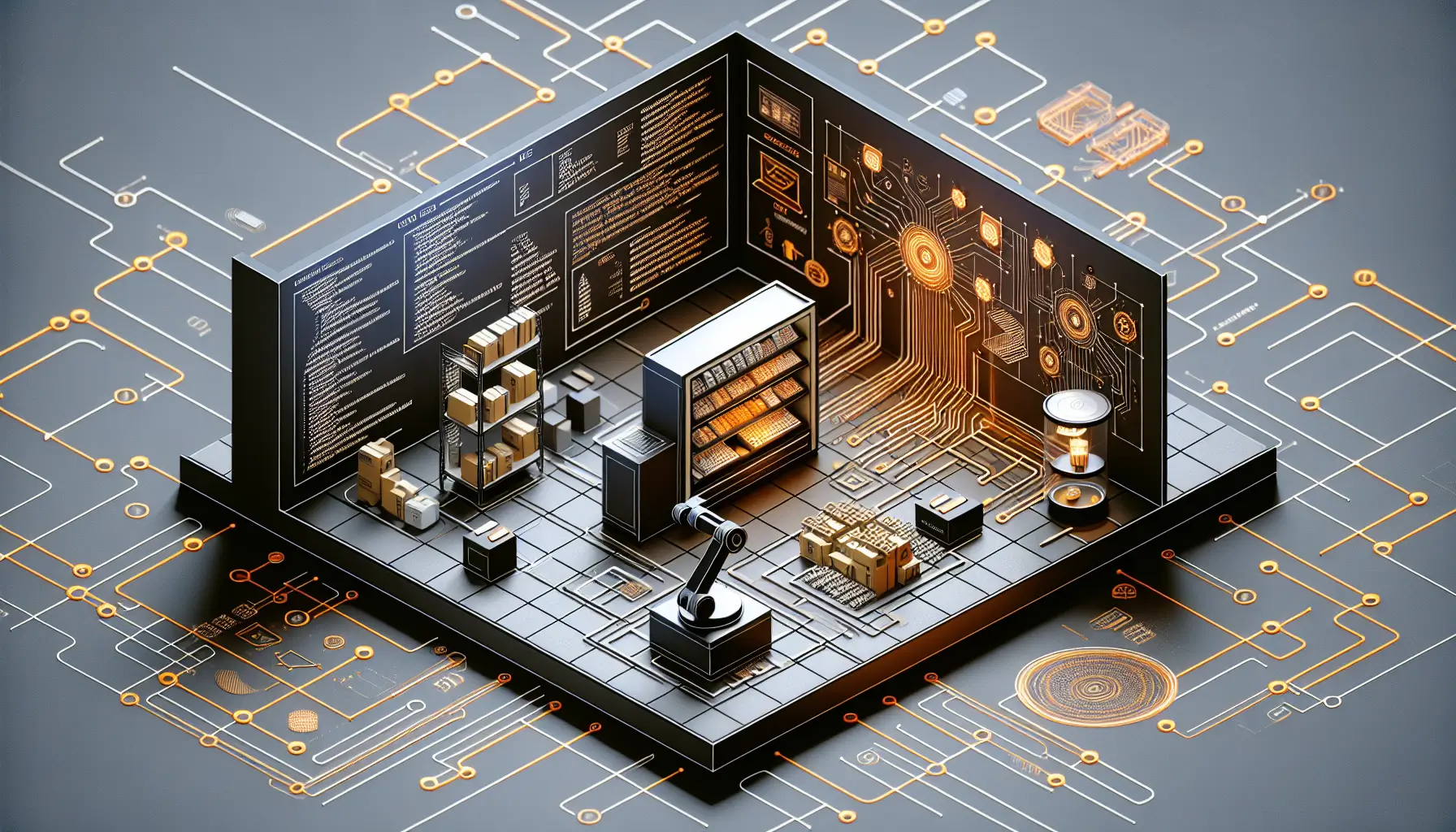

On the building side, there's a growing clarity about why AI-generated code becomes unmaintainable so quickly. Unstructured generation - where an AI writes everything as one monolithic block - couples components so tightly that things break the moment you need to change anything. Structured generation, by contrast, decomposes the work into independent components with explicit, one-directional dependencies. The code lasts. One approach builds things that work for a sprint. The other builds things that survive.
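To make "independent components with explicit, one-directional dependencies" concrete, here is a minimal Python sketch. Every name in it (`PriceSource`, `StaticPrices`, `order_total`) is hypothetical and chosen purely for illustration; it is one way the idea could look, not the output of any particular tool.

```python
from dataclasses import dataclass
from typing import Protocol


class PriceSource(Protocol):
    """Lower layer's interface (hypothetical). It knows nothing about orders."""

    def price_of(self, sku: str) -> int: ...


@dataclass(frozen=True)
class StaticPrices:
    """One interchangeable implementation; prices are in cents."""

    table: dict[str, int]

    def price_of(self, sku: str) -> int:
        return self.table[sku]


def order_total(items: dict[str, int], prices: PriceSource) -> int:
    """Upper layer: depends on PriceSource, never the other way around."""
    return sum(prices.price_of(sku) * qty for sku, qty in items.items())


prices = StaticPrices({"apple": 50, "bread": 200})
total = order_total({"apple": 4, "bread": 1}, prices)
```

Because the dependency points one way only, the pricing component can be replaced (say, by one that calls a service) without touching `order_total`, which is the property the unstructured version loses.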

These aren't separate stories. They're connected. Retail automation works because engineers designed safety protocols into every layer. AI employment shifts because the engineering toolchain itself is what's driving the automation. And your code survives because you've thought about its architecture before you asked an AI to build it. Structure, at every level, is what separates the deployable from the discardable.