When AI Agents Hit the Hard Limits of Production

Today's Overview

Three specific cloud operations proved too risky to automate. An AI agent removed a security group rule that looked unused, until a partner's traffic failed through a VPC peering connection the agent couldn't see. Another disabled cross-region replication on an S3 bucket to save $1,200 a month; that bucket turned out to be the disaster-recovery copy of a compliance archive. The third responded to a cost spike by recommending instance right-sizing, missing that the spike came from a deployment that had intentionally changed the architecture.

The pattern matters more than the failures. In each case, the agent had all the local data it needed. The missing piece was global context: dependency topology across accounts, recovery requirements, concurrent deployment timing. Adding more enrichment doesn't fix this because the context lives in other systems or other teams' heads. The structural fix isn't more data; it's stopping the agent from executing these three classes of change unilaterally. Read-and-propose replaces read-write: the agent drafts the change and a human reviews the formatted proposal in seconds. Trust scores and capability tiers on MCP tools become the enforcement layer.
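A minimal sketch of the read-and-propose gate, under stated assumptions: the tier names, the action-class labels, and the `dispatch` function are all hypothetical and not taken from any MCP specification or real tool. It only illustrates the idea that certain classes of change are routed to a human as proposals regardless of how much the agent is otherwise trusted.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    """Hypothetical capability tiers for an agent's tool access."""
    READ = 1      # may inspect resources only
    PROPOSE = 2   # may draft changes for human review
    EXECUTE = 3   # may apply low-risk changes directly


# Hypothetical labels for the three classes of change described above.
# These are never executed unilaterally, whatever the agent's tier.
PROPOSE_ONLY = {
    "security_group_rule_removal",
    "replication_disable",
    "instance_rightsizing",
}


@dataclass
class Change:
    action_class: str
    target: str
    summary: str


@dataclass
class Decision:
    executed: bool
    message: str


def dispatch(change: Change, agent_tier: Tier) -> Decision:
    """Decide whether a change is denied, proposed for review, or executed."""
    if agent_tier is Tier.READ:
        return Decision(False, f"DENIED: read-only agent cannot change {change.target}")
    if change.action_class in PROPOSE_ONLY or agent_tier is Tier.PROPOSE:
        # The change is formatted for a human reviewer instead of being applied.
        return Decision(False, f"PROPOSAL for review: {change.summary} on {change.target}")
    return Decision(True, f"EXECUTED: {change.summary} on {change.target}")


if __name__ == "__main__":
    change = Change("replication_disable", "archive-bucket", "disable cross-region replication")
    print(dispatch(change, Tier.EXECUTE).message)
```

The design choice is that the gate keys on the class of action rather than on the agent's confidence, since in all three failures the agent had no way to know what it was missing.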

How to Make Cloud Chargeback Actually Work

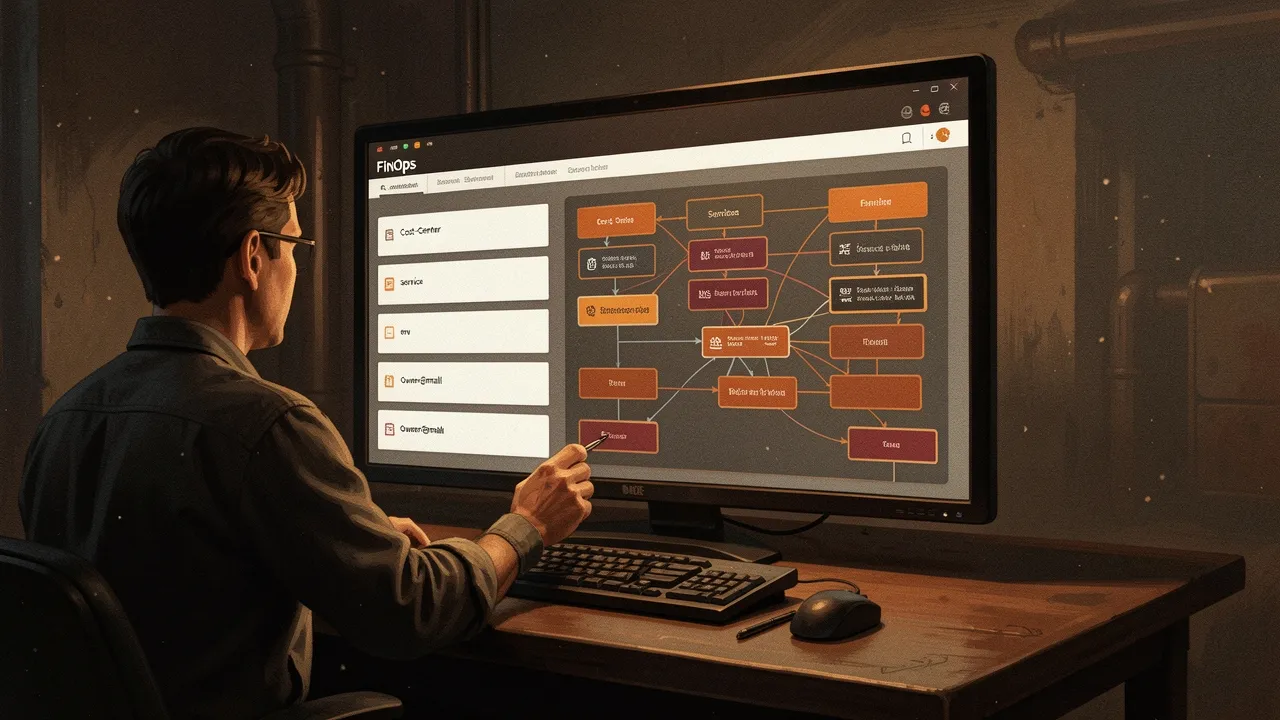

Most organisations hit the same wall: a 200-tag taxonomy for cloud resources. Finance wants cost-center. Engineering adds team, service, environment. Security adds compliance-tier. Procurement adds customer. By year four, the spreadsheet is aspirational fiction: chargeback accuracy sits at 70-80%, with 12% of spend in "unattributed." Tag governance doesn't help because engineers don't know what "revenue-stream" means for a CI runner.

The fix is to stop trying to tag perfectly. Instead, absorb tag variance at the cost-record stage. Four fields matter: cost_center, service, env, owner_email. Engineers tag what they remember. A lookup table, kept as a YAML file owned by the FinOps team, converts whatever tag soup the resource has into these four canonical fields. When the tag taxonomy changes, only the YAML file changes. Chargeback accuracy jumps from 70-80% to 92-96% in six weeks. The unattributed bucket drops from 12% to under 5%. The 200-tag spreadsheet stops being the source of truth and becomes one input among several. This is the pattern that matters: policy lives where it's owned and maintained, not where it's enforced.
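The normalisation step might look something like the sketch below. Everything beyond the four canonical field names is an assumption: the alias lists, the value mappings, and the `normalise` function are illustrative, and the dictionaries stand in for whatever the FinOps-owned YAML file would contain once parsed.

```python
from typing import Dict, Optional

CANONICAL_FIELDS = ("cost_center", "service", "env", "owner_email")

# Stand-in for the parsed lookup file (hypothetical contents).
# Each canonical field maps to the raw tag keys accepted for it, in priority order.
TAG_ALIASES = {
    "cost_center": ["cost-center", "CostCenter", "cc"],
    "service": ["service", "app", "Application"],
    "env": ["env", "environment", "stage"],
    "owner_email": ["owner-email", "owner", "contact"],
}

# Optional per-field value clean-up, also hypothetical.
VALUE_ALIASES = {
    "env": {"production": "prod", "prd": "prod", "development": "dev"},
}


def normalise(raw_tags: Dict[str, str]) -> Dict[str, Optional[str]]:
    """Map a resource's raw tags onto the four canonical cost-record fields."""
    lowered = {key.lower(): value.strip() for key, value in raw_tags.items()}
    record: Dict[str, Optional[str]] = {}
    for field in CANONICAL_FIELDS:
        value = None
        for alias in TAG_ALIASES[field]:
            candidate = lowered.get(alias.lower())
            if candidate:  # skip missing or empty tags
                value = candidate
                break
        if value is not None:
            value = VALUE_ALIASES.get(field, {}).get(value.lower(), value)
        record[field] = value
    return record


if __name__ == "__main__":
    tags = {"CostCenter": "1234", "Environment": "Production", "app": "ci-runner"}
    print(normalise(tags))
```

Fields that cannot be resolved come back as `None`, so a downstream report can count them toward the unattributed bucket rather than guessing an owner.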

Building in Codebases, Not Playgrounds

Claude's guidance on large codebases cuts through the hype about AI coding. The real work isn't the model; it's the context window, the file selection, the incremental iteration. When you're working in 50,000 lines of production code, you can't paste the whole thing. You have to think like a human developer: what's the minimal surface area needed to understand the change? What existing patterns does this codebase follow? The agent that understands codebase structure outperforms the agent that just writes code.

This week shows the shape of mature AI integration: agents work best when they're constrained by structure. Cloud operations work when the blast radius is bounded and reversibility is high. Chargeback works when policy is centralised and agents are given clean inputs. Coding works when the agent understands the system it's changing. The pattern across all three is the same: specificity beats capability.