Today's Overview

The way we're building voice agents just shifted. Text-to-speech models used to be a separate problem from language models: you'd generate text, then convert it to audio as an afterthought. That's no longer how it works. Mistral and others have converged on the same architecture: autoregressive transformers that generate audio tokens one frame at a time, just as LLMs generate text tokens. Samuel Humeau from Mistral shows why this happened and what it actually enables: voice cloning from a few seconds of reference audio, streaming agents that start responding before the full output finishes generating, and systems that feel responsive rather than delayed.
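To make the "one frame at a time" idea concrete, here is a minimal sketch of an autoregressive sampling loop over audio tokens. It is not Mistral's implementation. The `toy_logits` function is a hypothetical stand-in for a transformer forward pass, `VOCAB_SIZE` is an invented codebook size, and the sketch emits a single token per frame, a simplification assumed here to keep it short. What it does show is the shape of the loop: reference-audio tokens serve as the prompt, and each new token is yielded as soon as it is sampled, which is what lets a streaming agent start speaking before generation finishes.

```python
import math
import random
from typing import Iterator, List

VOCAB_SIZE = 16  # hypothetical audio codebook size, chosen only for illustration


def toy_logits(history: List[int]) -> List[float]:
    """Stand-in for a transformer forward pass (assumption, not a real model).

    Scores each candidate token by its distance from the previous token,
    so nearby tokens are more likely.
    """
    last = history[-1] if history else 0
    return [-float(abs(tok - last)) for tok in range(VOCAB_SIZE)]


def sample(logits: List[float], rng: random.Random, temperature: float = 1.0) -> int:
    """Draw one token from the softmax distribution implied by the logits."""
    weights = [math.exp(l / temperature) for l in logits]
    return rng.choices(range(len(weights)), weights=weights, k=1)[0]


def stream_audio_tokens(
    reference: List[int], num_frames: int, seed: int = 0
) -> Iterator[int]:
    """Yield audio tokens one frame at a time, conditioned on all earlier tokens."""
    rng = random.Random(seed)
    history = list(reference)  # reference-audio tokens act as the prompt
    for _ in range(num_frames):
        token = sample(toy_logits(history), rng)
        history.append(token)  # the new token conditions every later frame
        yield token  # a decoder could turn this into audio immediately


reference_tokens = [3, 4, 5]  # hypothetical tokens from a short reference clip
frames = list(stream_audio_tokens(reference_tokens, num_frames=10))
```

Because `stream_audio_tokens` is a generator, a caller can pull the first frame with `next(...)` and hand it to an audio decoder while later frames are still being sampled.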

The practical catch is one that keeps coming up: latency. Cascaded systems (speech-to-text, LLM, text-to-speech) inherit 500 ms to 4 seconds of delay, while humans respond in roughly 200 ms. Full-duplex models like Moshi solve the ping-pong problem (the model can listen and talk simultaneously), but they don't solve the usefulness problem. The real blocker isn't architecture anymore. It's cost. TTS at scale burns through budgets. That's why the voice agent moment keeps not arriving, even when the technology works. ElevenLabs shows a simpler path: wrap existing chat agents in a voice layer without rewriting the underlying logic. A few lines add turn-taking, interruption detection, and speech-to-text. That's how voice stops being a research problem and becomes something builders actually ship.
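The latency gap is easy to see as arithmetic: in a cascade, each stage waits for the previous one, so the delays add. The sketch below sums a hypothetical per-stage budget. The individual stage numbers are invented for illustration and chosen only so that the totals land on the 500 ms and 4 second endpoints quoted above. They are not measurements of any real system.

```python
from typing import Dict

HUMAN_TURN_GAP_MS = 200  # rough human response time cited in the text


def cascade_latency_ms(stages: Dict[str, int]) -> int:
    """Stages run back to back, so end-to-end delay is the sum of the stages."""
    return sum(stages.values())


def overshoot_ms(stages: Dict[str, int]) -> int:
    """How far the pipeline exceeds the human turn-taking gap."""
    return cascade_latency_ms(stages) - HUMAN_TURN_GAP_MS


# Hypothetical per-stage budgets (assumptions, not benchmarks).
best_case = {"speech_to_text": 150, "llm_first_token": 250, "text_to_speech": 100}
worst_case = {"speech_to_text": 800, "llm_first_token": 2200, "text_to_speech": 1000}

best_total = cascade_latency_ms(best_case)
worst_total = cascade_latency_ms(worst_case)
best_overshoot = overshoot_ms(best_case)
```

Even under the optimistic budget, the cascade lands well past the human gap, which is why streaming each stage matters more than shaving a few milliseconds off any single one.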

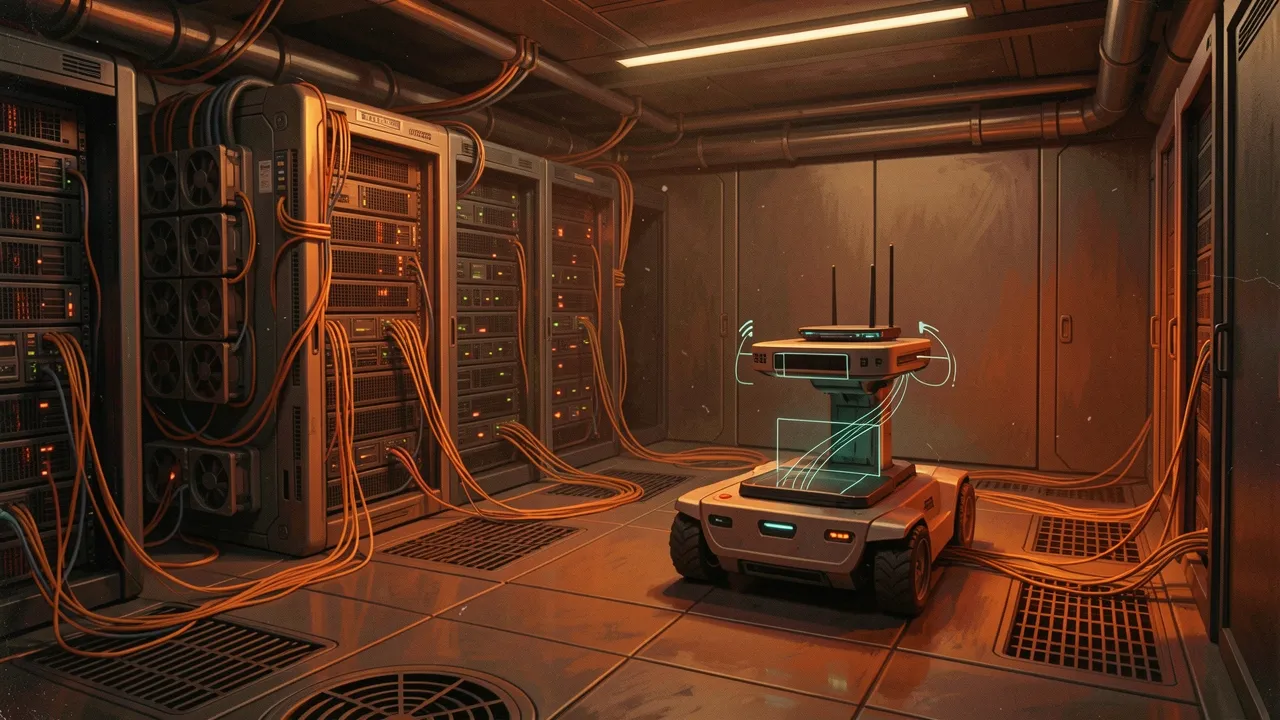

When Robots Can't Trust Their Wi-Fi

Meanwhile, on the robotics side, something quieter but more urgent is happening. Mobile robots running over unreliable Wi-Fi don't just lose packets; they execute "ghost commands." An old motion directive arrives late, after the robot's state has already changed, and gets executed anyway. A ROS developer built ros2_kinematic_guard to solve this. Instead of relying on heartbeat timeouts (which only detect silence), the guard watches a short local window: previous and current commands, previous and current odometry. It computes a kinematic consistency score. When that score spikes, meaning the command no longer makes sense relative to the robot's actual state, the system brakes, flushes the poisoned command window, and waits for a fresh synchronized pair before continuing. On a 20 Hz guard loop, intervention happens in around 50 ms, roughly one loop period. The repo includes a pressure test you can run without hardware. This matters because Wi-Fi collapses are getting more common, not less, and nobody's talking about the motion safety layer between the network and the controller.
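The guard logic described above can be sketched without ROS or hardware. This is not the code from ros2_kinematic_guard. The scoring formula, the threshold, the scale constants, and every name here (`Sample`, `KinematicGuard`, `max_skew`) are assumptions made for illustration, and the sketch assumes both commands and odometry carry timestamps. It only mirrors the behavior the text describes: keep a window of previous and current samples, score it, brake and flush on a spike, then wait for a synchronized pair.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Sample:
    stamp: float    # seconds
    linear: float   # m/s
    angular: float  # rad/s


STOP = (0.0, 0.0)


class KinematicGuard:
    def __init__(self, threshold: float = 1.0, max_skew: float = 0.05,
                 lin_scale: float = 0.5, ang_scale: float = 1.0) -> None:
        self.threshold = threshold  # hypothetical score that triggers braking
        self.max_skew = max_skew    # max command/odometry stamp gap, in seconds
        self.lin_scale = lin_scale
        self.ang_scale = ang_scale
        self.braking = False
        self._flush()

    def _flush(self) -> None:
        """Drop the whole window so a poisoned sample cannot be reused."""
        self.prev_cmd: Optional[Sample] = None
        self.cur_cmd: Optional[Sample] = None
        self.prev_odom: Optional[Sample] = None
        self.cur_odom: Optional[Sample] = None

    def score(self) -> float:
        """Hypothetical consistency score: stamp skew plus trend mismatch."""
        if self.cur_cmd is None or self.cur_odom is None:
            return 0.0
        total = abs(self.cur_cmd.stamp - self.cur_odom.stamp) / self.max_skew
        if self.prev_cmd is not None and self.prev_odom is not None:
            d_lin = ((self.cur_cmd.linear - self.prev_cmd.linear)
                     - (self.cur_odom.linear - self.prev_odom.linear))
            d_ang = ((self.cur_cmd.angular - self.prev_cmd.angular)
                     - (self.cur_odom.angular - self.prev_odom.angular))
            total += abs(d_lin) / self.lin_scale + abs(d_ang) / self.ang_scale
        return total

    def step(self, cmd: Sample, odom: Sample) -> Tuple[float, float]:
        """Run one guard tick and return the (linear, angular) command to apply."""
        if self.braking:
            if abs(cmd.stamp - odom.stamp) > self.max_skew:
                return STOP       # still waiting for a synchronized pair
            self.braking = False  # fresh pair: re-seed the empty window below
        self.prev_cmd, self.cur_cmd = self.cur_cmd, cmd
        self.prev_odom, self.cur_odom = self.cur_odom, odom
        if self.score() > self.threshold:
            self.braking = True
            self._flush()
            return STOP
        return (cmd.linear, cmd.angular)


# Simulated 20 Hz ticks: two healthy, two with a late "ghost" command, one fresh.
guard = KinematicGuard()
ticks = [
    (Sample(0.00, 0.2, 0.0), Sample(0.00, 0.2, 0.0)),
    (Sample(0.05, 0.2, 0.0), Sample(0.05, 0.2, 0.0)),
    (Sample(-0.30, -0.4, 0.0), Sample(0.10, 0.2, 0.0)),  # stale command arrives
    (Sample(-0.25, -0.4, 0.0), Sample(0.15, 0.0, 0.0)),  # still stale
    (Sample(0.20, 0.2, 0.0), Sample(0.20, 0.0, 0.0)),    # synchronized again
]
outputs = [guard.step(cmd, odom) for cmd, odom in ticks]
```

In this simulation the guard passes the first two commands, outputs a stop for both stale ticks, and resumes on the fifth tick once command and odometry stamps line up again. A heartbeat timeout would not have caught the third tick, because a message did arrive. It was simply the wrong one.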

Anthropic's Visible Hand

Anthropic's infrastructure bets are now impossible to miss. The company just anchored itself to SpaceX's Colossus One compute cluster, doubled Claude Code rate limits as a direct result, committed to spending $200 billion on Google Cloud chips, and is apparently fundraising at a near-trillion-dollar valuation. What makes this worth watching isn't the numbers. It's the pattern. Anthropic is no longer betting on being the best model company. It's betting on being the company that can actually run the models at scale. That's a different game. It means hiring changes (more people who build compute factories, fewer pure researchers), it means tolerating cash burn to lock in capacity before the next acceleration, and it means becoming a different kind of company than the one that started with constitutional AI. The Manhattan Project model of AI development, built on genius researchers and elegant algorithms, is giving way to something closer to infrastructure wars. That shift deserves attention because it changes what "winning" means in AI over the next three years.