Today's Overview

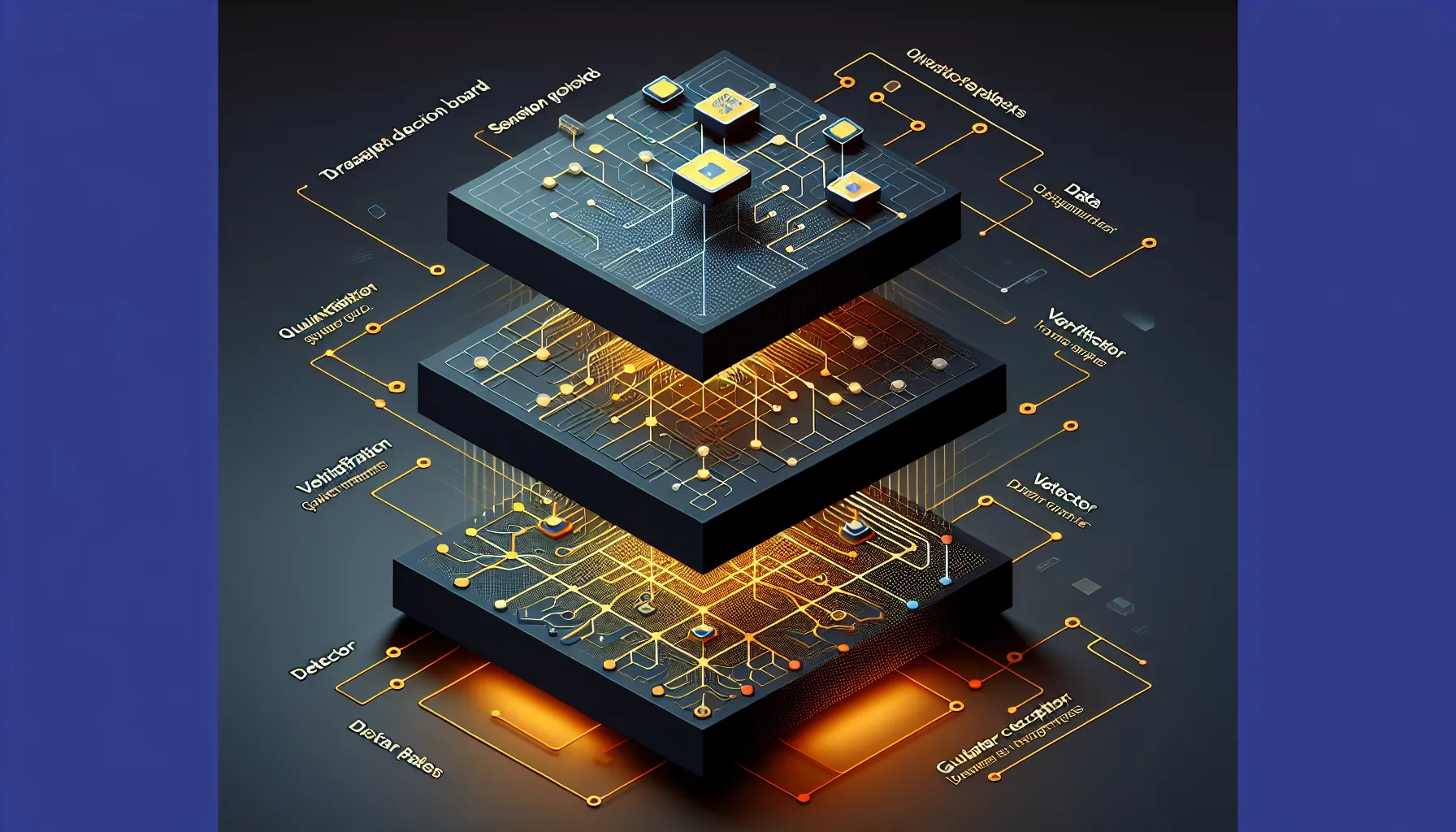

Three distinct stories emerged this morning that all point toward the same underlying shift: the tech world is moving from black-box systems toward verifiable, layered architectures where you can see what's happening and understand why.

The first story is about AI agents becoming readable. A developer built an AI agent that stores its entire decision-making process in Notion, not as a log written after the fact but as the actual operating environment. Before the agent takes a risky action, it writes a proposal to a Notion database with the status "pending_approval". Users can read the reasoning, edit the decision, and approve or reject it directly in Notion. The agent waits. This is a small project, but it's structurally important: it inverts the trust model. Instead of "trust the AI black box," it says "verify the AI's work in a tool you already use." The tech stack (React, Node.js, Anthropic Claude, Notion MCP) is deliberately accessible. No custom platforms. No proprietary audit logs.
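The approval gate described above can be sketched in a few lines. This is a minimal illustration, not the project's actual code: `ProposalStore` is a hypothetical in-memory stand-in for the Notion database, and `executeIfApproved` is an assumed name for the agent-side check. Only the "pending_approval" status comes from the story itself.

```typescript
// Hypothetical sketch: an in-memory stand-in for the Notion database.
// A real agent would read and write these rows through the Notion MCP tools.
type Status = "pending_approval" | "approved" | "rejected";

interface Proposal {
  id: number;
  action: string;    // what the agent wants to do
  reasoning: string; // why, in plain language for the reviewer
  status: Status;
}

class ProposalStore {
  private rows = new Map<number, Proposal>();
  private nextId = 1;

  // Agent side: every risky action starts life as a pending proposal.
  create(action: string, reasoning: string): Proposal {
    const p: Proposal = { id: this.nextId++, action, reasoning, status: "pending_approval" };
    this.rows.set(p.id, p);
    return p;
  }

  get(id: number): Proposal {
    const p = this.rows.get(id);
    if (!p) throw new Error("unknown proposal " + id);
    return p;
  }

  // Human side: approve or reject, optionally editing the action first.
  review(id: number, status: "approved" | "rejected", editedAction?: string): void {
    const p = this.get(id);
    p.status = status;
    if (editedAction !== undefined) p.action = editedAction;
  }
}

// Agent side: run the action only once a human has approved it.
// Returns null while the proposal is pending or if it was rejected.
function executeIfApproved(
  store: ProposalStore,
  id: number,
  run: (action: string) => string
): string | null {
  const p = store.get(id);
  if (p.status !== "approved") return null;
  return run(p.action);
}
```

The design choice worth noticing is that the agent never holds the decision. It re-reads the row before acting, so an edit made by the reviewer is what actually runs.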

The second story is about replacing a fundamental web protocol. WebTransport, presented at FOSDEM 2026, challenges WebSocket's 14-year reign. The problem isn't that WebSocket is broken; it's that applications like live financial data, cloud gaming, and collaborative editing need lower latency and better handling of network switching (think: moving from WiFi to mobile). WebTransport sits on top of QUIC, the same underlying protocol that powers HTTP/3, which means it inherits QUIC's built-in congestion control and faster connection establishment. For builders, this matters because migration isn't a rewrite. The two protocols coexist: you can test WebTransport on a subset of traffic while keeping WebSocket as the fallback.
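One way to run WebTransport on a subset of traffic is a deterministic percentage rollout with WebSocket as the default. The sketch below is an assumption about how that could look, not something shown in the talk: `bucket`, `chooseTransport`, `connect`, and the `rolloutPercent` parameter are hypothetical names, and the URLs passed in would be your own endpoints. The `WebTransport` constructor and its `ready` promise are the standard browser API.

```typescript
type TransportKind = "webtransport" | "websocket";

// Hash a client id into a stable bucket in [0, 100), so the same client
// always lands on the same side of the rollout.
function bucket(clientId: string): number {
  let h = 0;
  for (let i = 0; i < clientId.length; i++) {
    h = (h * 31 + clientId.charCodeAt(i)) >>> 0; // keep it an unsigned 32-bit value
  }
  return h % 100;
}

// Feature detection: WebTransport is not available in every runtime.
function webTransportSupported(): boolean {
  return typeof (globalThis as { WebTransport?: unknown }).WebTransport === "function";
}

// Pick WebTransport only for supported clients inside the rollout slice.
function chooseTransport(
  clientId: string,
  rolloutPercent: number,
  supported: boolean
): TransportKind {
  if (!supported) return "websocket";
  return bucket(clientId) < rolloutPercent ? "webtransport" : "websocket";
}

// Try the chosen transport; fall back to WebSocket if the session fails to open.
async function connect(
  kind: TransportKind,
  wtUrl: string,
  wsUrl: string
): Promise<{ kind: TransportKind; conn: unknown }> {
  if (kind === "webtransport") {
    try {
      const WT = (globalThis as any).WebTransport;
      const transport = new WT(wtUrl);
      await transport.ready; // resolves once the session is established
      return { kind, conn: transport };
    } catch (err) {
      // Session could not be established: use the fallback below.
    }
  }
  const WS = (globalThis as any).WebSocket;
  return { kind: "websocket", conn: new WS(wsUrl) };
}
```

A caller might start with `chooseTransport(userId, 5, webTransportSupported())` and raise the percentage as latency and error metrics from the WebTransport slice hold up.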

The third story is quantum research continuing in the background. It shows that error correction, which has been theoretical for years, is finally encountering real decoder constraints. Three new papers from arXiv tackle the practical gap: how do you measure the error-correction threshold of a quantum computer when different decoder algorithms give different results? The methodological rigor here, running identical experiments with unified reporting, is the unglamorous work that actually makes quantum engineering possible.

What ties these together is that they're all pushing against systems that worked but concealed their inner workings. Agents that you can only trust after deployment. Protocols optimized for a use case that no longer matches reality. Quantum systems measured without accounting for the measurement tool itself. The pattern isn't glamorous. It's engineering: make the invisible visible, measure what you claim, iterate on evidence.