Today's Overview

Three stories from different corners of the tech landscape are worth reading together this week. Medtronic just won FDA clearance for its Stealth AXiS spinal surgery system, Anthropic shipped Claude Sonnet 4.6 with some genuinely useful improvements, and Ben Thompson published a piece arguing that AI is making thin clients (dumb devices connected to powerful servers) dominant again. These aren't the same story, but they're connected in ways that matter for how we build and deploy technology.

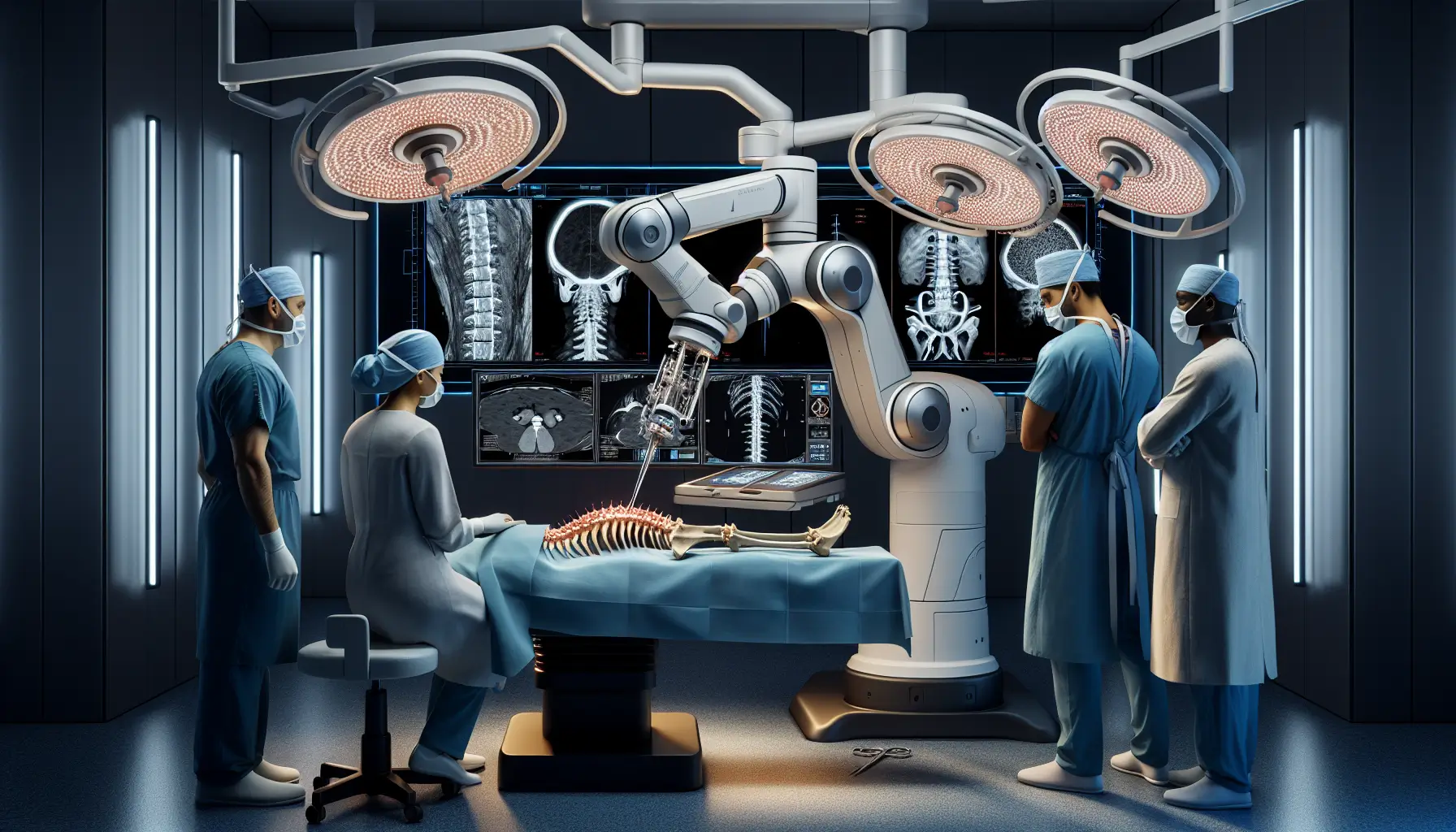

The robotics moment is getting real

Stealth AXiS is instructive because it's not hype. It's a platform that brings planning, navigation, and actual robotic execution into one system for spine surgery. The key bit: LiveAlign segmental tracking lets surgeons see what's happening to the patient's spine in real time during the procedure, without repeated imaging. This solves a genuine problem. Spine surgery is complex, anatomy moves, and surgeons have traditionally had to interrupt their workflow to check where things actually stand. The system is modular by design, so hospitals can start with what they need today and expand as their surgical workflows demand it. This is how you introduce complex tech into hospitals.

The International Federation of Robotics released a position paper this month that captures the broader mood: AI is moving robots out of labs and into the real world, and the multi-trillion-dollar potential is drawing everyone from Amazon to Tesla to governments like China's. What matters most is that robots are learning to see, navigate, and manipulate through deep learning, reinforcement learning, and now generative AI that can turn natural language instructions into executable code. The barrier between research and deployment is thinning.

The model upgrades are good, but the infrastructure shift is bigger

Sonnet 4.6 is a clean upgrade over 4.5: better coding, better long-context reasoning, and measurably better computer use (that's AI directly controlling your mouse and keyboard to get things done). It now matches Opus 4.6 on some benchmarks, though it uses more tokens to get there, which means your cost for a given task might be higher than you expect. That's a tradeoff worth understanding.

But what's more significant is what Ben Thompson argued: AI is making thin clients inevitable again. When your interface is just a text box and your device doesn't matter (phone, laptop, glasses, whatever), all the compute happens in data centres. You don't need a powerful PC. You don't need a capable phone. You just need a connection. This has profound implications. It's already affecting the memory chip market, with AI hoovering up supply that used to go to consumer devices. PlayStation might be delayed because memory is getting expensive and scarce. That's not a minor detail. It tells you where the real investment is flowing.
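To make the Sonnet 4.6 token tradeoff concrete, here is a minimal sketch of the arithmetic. Every number in it is a made-up placeholder, not a real price or a measured token count, and the names `ModelProfile`, `cost_per_task`, and `break_even_token_ratio` are hypothetical helpers invented for this illustration. The point is only that a lower per-token price stops being a saving once the cheaper model needs proportionally more tokens to finish the same task.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical pricing and usage profile; all values are placeholders."""
    name: str
    usd_per_million_output_tokens: float  # assumed price, not a real rate
    output_tokens_per_task: int           # assumed usage, not a measurement


def cost_per_task(model: ModelProfile) -> float:
    """Dollar cost of one task: price per token times tokens used."""
    return model.usd_per_million_output_tokens * model.output_tokens_per_task / 1_000_000


def break_even_token_ratio(cheaper: ModelProfile, pricier: ModelProfile) -> float:
    """How many times more tokens the cheaper model can use before it costs the same."""
    return pricier.usd_per_million_output_tokens / cheaper.usd_per_million_output_tokens


# Placeholder profiles: model_a is half the price per token but uses 2.5x the tokens.
model_a = ModelProfile("model_a", usd_per_million_output_tokens=10.0, output_tokens_per_task=30_000)
model_b = ModelProfile("model_b", usd_per_million_output_tokens=20.0, output_tokens_per_task=12_000)

actual_ratio = model_a.output_tokens_per_task / model_b.output_tokens_per_task
limit_ratio = break_even_token_ratio(model_a, model_b)

print(f"{model_a.name}: ${cost_per_task(model_a):.2f} per task")
print(f"{model_b.name}: ${cost_per_task(model_b):.2f} per task")
print(f"token ratio {actual_ratio:.1f} vs break-even {limit_ratio:.1f}")
```

With these placeholder values the model that is cheaper per token ends up more expensive per task, because its token ratio exceeds the break-even ratio. Swap in your own measured token counts and current prices to see which side of the line a given workload falls on.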

For builders working on agents and AI systems, this infrastructure reality is worth thinking through. The models keep getting better, the context windows keep getting larger, and the natural home for all of that is large data centres with specialised chips and enormous amounts of memory. Local inference is possible but consistently trails on performance and capability. The path dependency is building faster than alternatives can catch up.

What connects these threads

In robotics, we're seeing physical AI move from concept to deployment - systems that can see, reason, and act in the real world. In infrastructure, we're seeing the economic logic favour centralised compute. And in models, we're seeing steady growth in the capabilities that make that centralised compute worthwhile. The effect is that whoever can build and operate systems at scale - whether that's surgical robots, data centres, or the models running inside them - is winning. It's a good time to pay attention to how these pieces are fitting together.