Today's Overview

There's something quietly important happening in three corners of the tech world this afternoon. We've got robotics teams wrestling with how to keep humans safe around machines. We've got open source maintainers raising genuine concerns about AI's appetite for their work. And we've got engineers figuring out how to search through millions of vectors without melting their infrastructure.

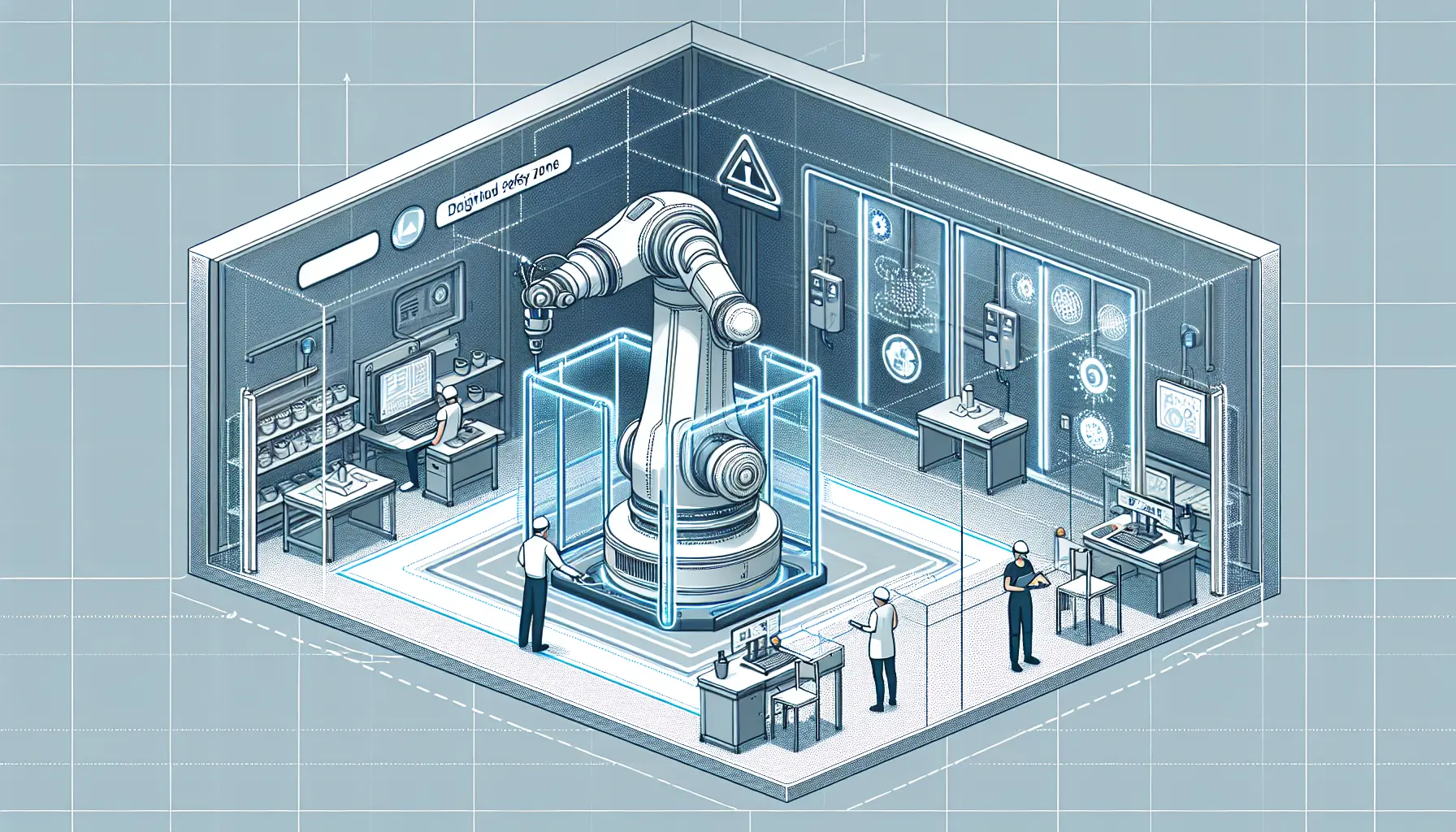

The Real Work of Robot Safety

When you read about collaborative robots, the headlines usually focus on what they can do - pick, place, inspect, transport. What's less discussed is the harder problem: making humans feel genuinely safe working alongside them. Safe cobot workspace design isn't a checkbox exercise. It's about multi-layered safety systems, intuitive interfaces, and ergonomics that don't punish workers for eight hours a day. The companies getting this right - like Toyota, whose Universal Robots (UR) cobots are paired with safety scanners that trigger slowdowns - aren't cutting corners. They're building trust, which is the actual currency of workplace automation.

There's also thoughtful work happening in ROS 2 robotics. A fault manager you can add to a robot with just three lines of code might sound trivial, but it's solving a real problem: diagnostics that vanish as soon as a component stops reporting the error. This one persists fault history with timestamps and occurrence counts. Details like that matter when you're debugging production systems.
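To make the idea of persisted fault history concrete, here is a minimal sketch in plain Python. It is not the ROS 2 package's API: `FaultRecord`, `FaultHistory`, and the fault codes are hypothetical names chosen for illustration. The only point it demonstrates is that a cleared fault stays in the record with its timestamps and occurrence count.

```python
import time
from dataclasses import dataclass


@dataclass
class FaultRecord:
    """One fault code and its history (hypothetical structure, for illustration)."""
    code: str
    first_seen: float
    last_seen: float
    count: int = 1
    active: bool = True


class FaultHistory:
    """Keeps fault records after the fault clears instead of dropping them."""

    def __init__(self, clock=time.time):
        self._clock = clock      # injectable so callers can control time
        self._records = {}       # fault code -> FaultRecord

    def report(self, code):
        """Record one occurrence of a fault, creating or updating its record."""
        now = self._clock()
        rec = self._records.get(code)
        if rec is None:
            rec = FaultRecord(code=code, first_seen=now, last_seen=now)
            self._records[code] = rec
        else:
            rec.count += 1
            rec.last_seen = now
            rec.active = True
        return rec

    def clear(self, code):
        """Mark a fault as inactive; the record itself is kept."""
        rec = self._records.get(code)
        if rec is not None:
            rec.active = False

    def history(self):
        """Return every record, active or not, ordered by first occurrence."""
        return sorted(self._records.values(), key=lambda r: r.first_seen)
```

A real system would also write these records to disk so they survive a restart; that part is left out here to keep the sketch short.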

Open Source Under Pressure

Jeff Geerling's piece on AI destroying open source has struck a nerve because it's not hyperbole - it's a real structural problem. When AI training vacuums up decades of accumulated knowledge from open source projects without contributing back, it creates a perverse incentive. Maintainers see their freely given work used to train systems that replace them. The concern isn't theoretical; it's about the sustainability of the entire ecosystem that underpins modern software.

Making Vector Search Work at Scale

If you're building anything with semantic search, FAISS and approximate nearest neighbor search are where practicality meets performance. Brute force vector comparison breaks down fast - comparing every query against millions of vectors adds up to seconds of latency. The clever bit is trading tiny accuracy losses for massive speed gains. HNSW builds graph shortcuts through vector space. IVF clusters vectors first, then searches only promising clusters. The result: you can search millions of embeddings in milliseconds instead of seconds.
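The IVF idea is easier to see in a toy version. The sketch below uses only the Python standard library and is not FAISS's implementation: `ToyIVF`, `n_lists`, and `n_probe` are illustrative names (FAISS exposes the same idea through `IndexIVFFlat` with `nlist` and `nprobe`). It clusters the vectors with a few rounds of k-means, then scans only the clusters whose centroids are closest to the query.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def brute_force(vectors, query, k):
    """Exact search: compare the query against every vector."""
    order = sorted(range(len(vectors)), key=lambda i: sq_dist(vectors[i], query))
    return order[:k]


class ToyIVF:
    """Toy inverted-file index: k-means clusters plus per-cluster id lists."""

    def __init__(self, vectors, n_lists, n_iters=10, seed=0):
        self.vectors = vectors
        rng = random.Random(seed)
        # Start from n_lists randomly chosen vectors as centroids.
        self.centroids = [list(v) for v in rng.sample(vectors, n_lists)]
        for _ in range(n_iters):
            self._assign()
            self._update()
        self._assign()  # final assignment against the final centroids

    def _assign(self):
        """Put each vector id into the list of its nearest centroid."""
        self.lists = [[] for _ in self.centroids]
        for i, v in enumerate(self.vectors):
            j = min(range(len(self.centroids)),
                    key=lambda c: sq_dist(self.centroids[c], v))
            self.lists[j].append(i)

    def _update(self):
        """Move each centroid to the mean of its members (skip empty lists)."""
        for j, members in enumerate(self.lists):
            if members:
                dim = len(self.centroids[j])
                self.centroids[j] = [
                    sum(self.vectors[i][d] for i in members) / len(members)
                    for d in range(dim)
                ]

    def search(self, query, k, n_probe=1):
        """Scan only the n_probe clusters whose centroids are nearest the query."""
        probe = sorted(range(len(self.centroids)),
                       key=lambda c: sq_dist(self.centroids[c], query))[:n_probe]
        candidates = [i for j in probe for i in self.lists[j]]
        candidates.sort(key=lambda i: sq_dist(self.vectors[i], query))
        return candidates[:k]
```

With `n_probe` equal to `n_lists` the search visits every vector and matches brute force exactly; lowering `n_probe` scans fewer vectors and can miss true neighbours that sit in an unvisited cluster. That knob is the accuracy-for-speed trade in miniature.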

None of this is new science, but it's increasingly essential infrastructure as more systems rely on semantic search and retrieval-augmented generation. Understanding these trade-offs between recall, latency, and memory isn't academic - it's the difference between a product that feels responsive and one that feels broken.
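Recall is the easiest of those three to pin down with a number. A minimal sketch, assuming you already have the ids returned by an approximate search and the ids from an exact (brute force) search for the same query; `recall_at_k` is an illustrative helper, not a library function.

```python
def recall_at_k(approx_ids, exact_ids, k):
    """Fraction of the true top-k neighbours that the approximate search returned."""
    if k <= 0:
        raise ValueError("k must be positive")
    truth = set(exact_ids[:k])
    found = set(approx_ids[:k])
    if not truth:
        return 0.0
    return len(truth & found) / len(truth)
```

Averaging this over a sample of real queries, while varying the index settings, gives a concrete recall-versus-latency curve to tune against.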