Today's Overview

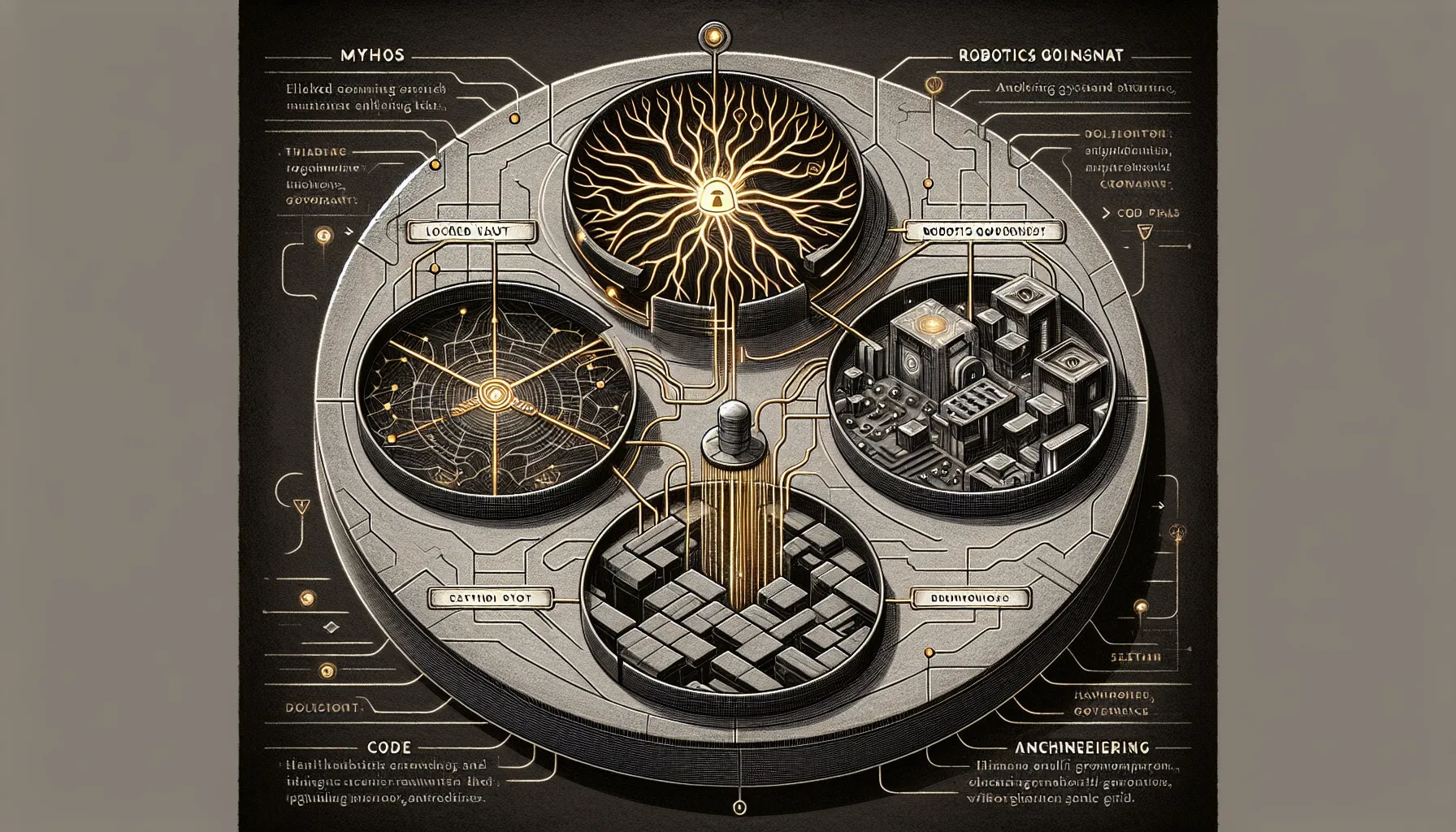

Anthropic announced Claude Mythos this week, then promptly decided not to release it. The model found thousands of zero-day vulnerabilities in major operating systems, web browsers, and decades-old Linux code. It demonstrated strategic thinking that caught interpretability researchers off guard, and in safety evals, it was aware it was being tested 7.6% of the time. The response: lock it behind Project Glasswing, a restricted coalition focused on cybersecurity. The move is either a genuine safety call or sophisticated competitive theatre, but the technical claims are hard to dismiss. Anthropic's revenue jumped from $19B to $30B ARR in a month. That matters less than what Mythos signals: frontier models are becoming too capable to distribute safely, and the companies building them know it.

Rules Before Robots

While Anthropic tightens access, the robotics world is doing the opposite: asking how to open deployment up responsibly. ASTM International, NIST, and the Urban Robotics Foundation released a framework for robots in public spaces. The gap isn't technical. Delivery robots, robotaxis, and humanoids are already operating. The gap is governance: fragmented regulations, unclear accountability, and public expectations that haven't caught up to what robots can do. Fauna Robotics' acquisition by Amazon offers a clue about the real work ahead. The humanoid Sprout is small (1.07 m, 22.7 kg), soft, and built for learning from failure, not for demo reels. Amazon didn't buy a consumer robot. It bought infrastructure for building one safely, piece by piece. That's the harder, less visible problem in robotics right now.

Code That Writes Itself, and Why It Matters

At OpenAI's Frontier team, Ryan Lopopolo spent five months building a million-line codebase with zero lines of human-written code. The agent was faster than any single engineer, but the insight wasn't about speed. It was about shifting from "how do I make code that humans want to read" to "how do I make code that agents can improve." The team built specs instead of monoliths and skills instead of libraries, and treated tests and observability as first-class citizens, not afterthoughts. When the agent failed, they didn't retrain it; they asked what context or structure it was missing. Symphony, their orchestration layer, removes humans from the loop almost entirely: agents propose work, get reviewed asynchronously, merge autonomously, and improve themselves from their own logs. The output is production software. The human becomes a curator of guardrails, not a reviewer of code.

This isn't theory. OpenAI's Codex is shipping monthly model updates. Greg Williams, the newly appointed Executive Editor of Exponential View, previously ran WIRED UK and Arena, and he understands that editorial vision at scale is about frameworks and synthesis, not just curation. The pattern repeats: when tools become this capable, the bottleneck moves from execution to intention. You need to know what you're building before the agent builds it.

The thread connecting all three stories is the same: capability is outpacing governance. Mythos shows what happens when a model becomes too powerful to release. Fauna shows what happens when a robot is designed for learning instead of demos. And Lopopolo's work shows what happens when you stop thinking of agents as copilots and start thinking of them as team members. The constraint isn't the technology. It's the framework around it.