Today's Overview

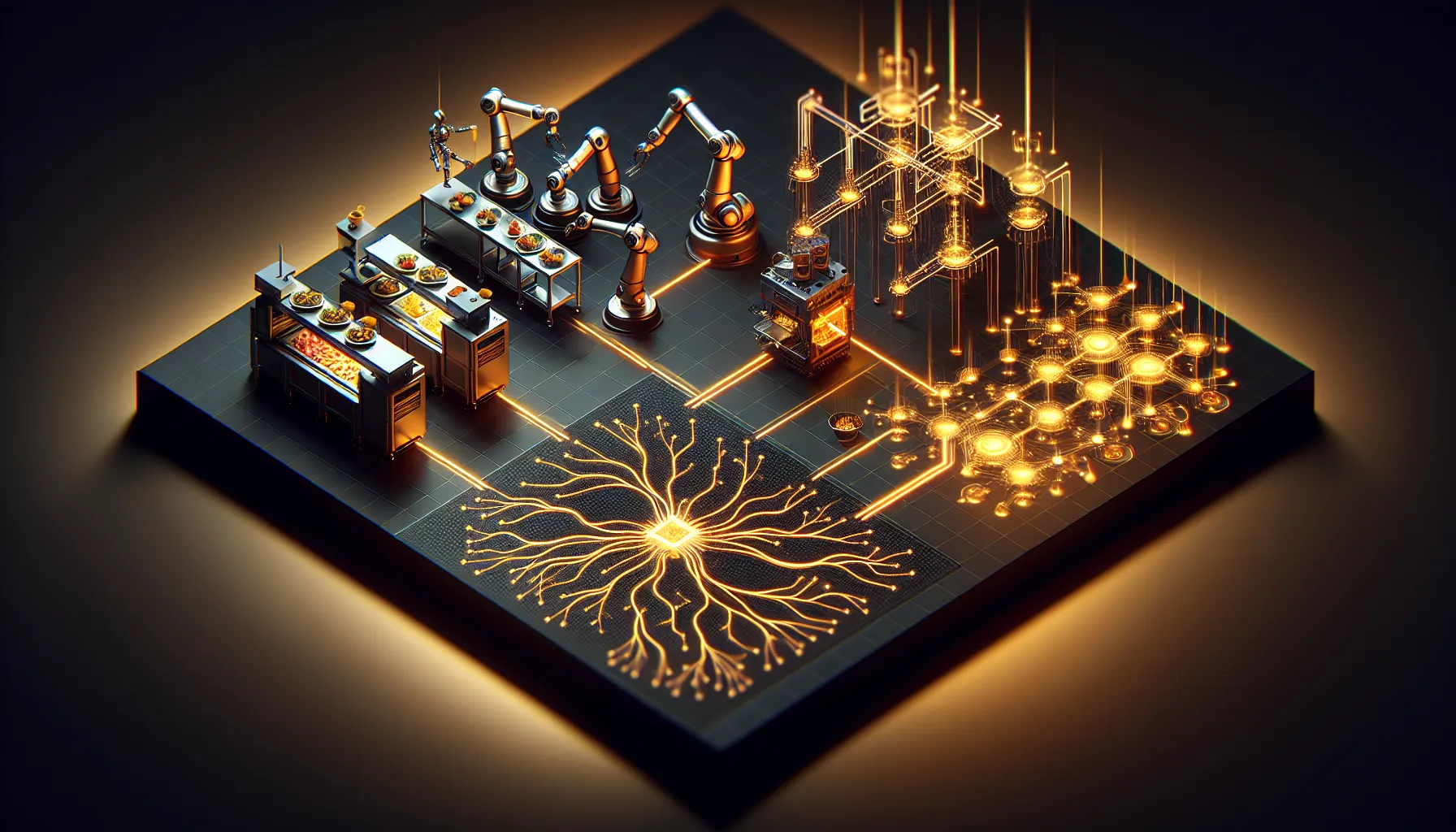

Chef Robotics just passed a milestone that speaks to something fundamental: physical AI is moving from lab demos to real production. Their robots have now prepared 100 million meals across facilities in the US, Canada, and Europe. That's not marketing speak; it's real-world data from customers like Amy's Kitchen. The company doubled its cumulative servings in less than a year, and the flywheel is visible: more deployments generate more real-world training data, which improves models, which enables new customers and use cases.

What matters here isn't just the scale. It's the approach. Chef deliberately started with high-volume, low-complexity tasks (portioning, assembly) rather than trying to automate full kitchens. Food ingredients are deformable and variable, so simulation doesn't work. Instead, they built their training entirely on production data from actual customer sites. That's a different engineering philosophy from what we see in most AI companies. And it's working: they've created what they claim is the largest real-world food-manipulation dataset in the world.

The Coding Model Arms Race Accelerates

On the software side, Claude Opus 4.7 landed this week with benchmark jumps that surprised even people expecting an update. It scores 64.3% on SWE-Bench Pro (roughly +11 points over 4.6), and Artificial Analysis ranked it #1 on GDPval-AA with an implied 60% head-to-head win rate against GPT-5.4. Cursor reported an internal benchmark jump from 58% to 70%. But here's the catch: the new tokenizer means the same input can require up to 35% more tokens, though Anthropic claims overall token efficiency is still up 50% versus its predecessors.
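To see what the tokenizer change means for budgeting, here is a minimal sketch that pads an existing token count by the reported worst case of 35%. The function names and the idea of a fixed per-request budget are illustrative assumptions, not anything from Anthropic's tooling; the only number taken from the report is the 35% ceiling.

```python
# Worst-case token planning for a tokenizer change.
# Assumption: the "up to 35% more tokens" figure is treated as a hard upper
# bound on input growth. Real inflation will vary by content.

def worst_case_tokens(baseline_tokens: int, inflation_pct: int = 35) -> int:
    """Return the baseline count inflated by inflation_pct, rounded up.

    Integer arithmetic avoids floating-point rounding surprises.
    """
    if baseline_tokens < 0:
        raise ValueError("baseline_tokens must be non-negative")
    # Ceiling division: -(-a // b) rounds a / b up for positive b.
    return -(-baseline_tokens * (100 + inflation_pct) // 100)


def fits_budget(baseline_tokens: int, budget_tokens: int) -> bool:
    """True if the prompt still fits the budget under worst-case inflation."""
    return worst_case_tokens(baseline_tokens) <= budget_tokens


if __name__ == "__main__":
    # A prompt that measured 1,000 tokens under the old tokenizer.
    print(worst_case_tokens(1000))   # 1350
    print(fits_budget(1000, 1200))   # False
```

If a prompt sits close to a context or cost limit today, this kind of check suggests re-measuring it with the new tokenizer before switching over.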

The practical shift matters more than the benchmarks. Users report better instruction-following, stronger self-verification, and a shift in how you should actually use the model: less micromanagement, more delegation. Claude Code now defaults to an "xhigh" reasoning effort. Vision support jumped dramatically: images up to 2,576 pixels on the long edge (3.75 megapixels), versus roughly 960 pixels before. For workflows heavy on screenshots and dense diagrams, that's a real change in capability.
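For screenshot-heavy pipelines, a rough sketch of how you might pre-size images against the reported 2,576-pixel long-edge limit. The helper name is hypothetical, and whether the API downsizes larger images on its own is not something this report covers, so treat this as client-side planning only.

```python
# Compute target dimensions so the long edge stays within a pixel limit.
# Assumption: 2576 is the long-edge ceiling quoted above; the helper itself
# is illustrative and does not call any real imaging or model API.

def fit_long_edge(width: int, height: int, max_long_edge: int = 2576) -> tuple[int, int]:
    """Scale (width, height) down proportionally if the long edge is too big.

    Images already within the limit are returned unchanged.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height
    # Multiply before dividing to keep the arithmetic as exact as possible.
    new_width = max(1, round(width * max_long_edge / long_edge))
    new_height = max(1, round(height * max_long_edge / long_edge))
    return new_width, new_height


if __name__ == "__main__":
    print(fit_long_edge(5152, 2896))  # (2576, 1448)
    print(fit_long_edge(800, 600))    # (800, 600)
```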

Infrastructure for Agents Is Emerging (and Breaking)

Underneath the model updates, there's a quieter shift happening: agentic AI is pushing toward standardisation, but hitting reliability walls. Amazon is doubling down on the Model Context Protocol (MCP) for connecting agents to external tools. A new protocol called AAIP proposes standard identity and commerce mechanisms for agent-to-agent interactions. But a new arXiv paper warns that small numerical differences in LLMs can cause unpredictable behaviour, a serious problem when agents make consequential decisions. Builders need to assume variance in outputs and plan testing strategies accordingly. WebXSkill, meanwhile, shows promise for teaching agents new skills by combining natural language guidance with executable code, a practical step past brittle browser automation.
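One simple way to "assume variance" is to run the same prompt several times and measure how often the agent agrees with itself. The sketch below does that with a stand-in function. `fake_agent` and its canned answers are hypothetical placeholders for a real model call, and the approach is a generic consistency check, not the method from the arXiv paper.

```python
# Repeated-run consistency check for a non-deterministic agent.
# Assumption: the agent is any callable taking a prompt and returning a string.
from collections import Counter
from itertools import cycle
from typing import Callable


def agreement_rate(agent: Callable[[str], str], prompt: str, runs: int = 10) -> float:
    """Call the agent `runs` times and return the share of the most common output."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    outputs = [agent(prompt) for _ in range(runs)]
    _, top_count = Counter(outputs).most_common(1)[0]
    return top_count / runs


# Hypothetical stand-in for a model call: cycles through canned decisions
# to simulate an agent that occasionally flips its answer.
_canned = cycle(["approve", "approve", "reject", "approve"])


def fake_agent(prompt: str) -> str:
    return next(_canned)


if __name__ == "__main__":
    rate = agreement_rate(fake_agent, "Should this refund be approved?", runs=4)
    print(f"agreement: {rate:.2f}")  # agreement: 0.75
```

A test suite could fail any consequential decision whose agreement rate falls below a threshold you choose, rather than asserting on a single run.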

The pattern emerging is clear: robotics is proving physical AI can scale in constrained domains (food, surgery), while coding models race toward autonomy, and both are revealing the gap between benchmarks and production reality. The builders paying attention aren't just picking the best model; they're understanding the tradeoffs and building systems that account for instability, cost variance, and the fact that delegating to an agent is fundamentally different from using a pair programmer.
