Today's Overview

Something shifted this week. After months of watching AI agents confined to chat windows and tightly controlled workflows, we're seeing a fundamental rethink of where and how agents actually work. Claude Cowork's launch on desktop, Anthropic's partnership vision, and agricultural robotics moving from prototypes to living labs all point to the same insight: agents don't need to be constrained by the systems we built for humans.

Agentic platforms are not workflow automation

There's real confusion in the market right now about what "agentic" means. A workflow engine with an LLM step bolted on isn't an agentic system. An agentic platform is fundamentally different: it gives software a goal and access to tools, then lets it reason about how to proceed at runtime. The distinction matters because it changes how you build, deploy, and think about safety. Workflow automation is optimized for predefined paths. Agentic platforms are optimized for runtime judgment: the kind of ambiguous, context-dependent work that humans spend most of their time on.

This has practical consequences, because teams building in this space are deciding right now which kind of system to invest in. The framework is simple: if the path is known, use workflow automation. If the system has to interpret context, choose tools dynamically, and adapt when the first path doesn't work, you need an agentic platform. Most production systems will use both. But conflating the two has already sunk millions into projects that never shipped.
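To make the distinction concrete, here is a minimal sketch in Python. Everything in it is hypothetical and for illustration only: the tool names (`read_local`, `fetch_remote`, `ocr_scan`), the dictionary-based state, and especially `choose_tool`, a simple rule that stands in for the model's runtime judgment. In a real agentic platform that decision would come from an LLM, not a hard-coded preference list. The workflow follows one predefined path and stops when it fails; the agent loop picks a tool at runtime, checks whether the goal is met, and tries another path when the first one doesn't work.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical tools. Each tries to retrieve the document text and returns None on failure.
Tool = Callable[[dict], Optional[str]]

def read_local(state: dict) -> Optional[str]:
    return state["files"].get(state["doc_id"])

def fetch_remote(state: dict) -> Optional[str]:
    return state["remote"].get(state["doc_id"])

def ocr_scan(state: dict) -> Optional[str]:
    return state["scans"].get(state["doc_id"])

TOOLS: Dict[str, Tool] = {
    "read_local": read_local,
    "fetch_remote": fetch_remote,
    "ocr_scan": ocr_scan,
}

def run_workflow(state: dict) -> Optional[str]:
    """Workflow automation: the path is fixed at design time, with no fallback."""
    return read_local(state)

def choose_tool(state: dict, tried: List[str]) -> Optional[str]:
    """Stand-in for runtime judgment (an LLM call in a real agent).
    Assumption: a simple rule that reads context (offline or not)
    and skips tools that have already been tried."""
    preference = ["read_local", "fetch_remote", "ocr_scan"]
    if state.get("offline"):
        preference.remove("fetch_remote")  # context rules this tool out
    for name in preference:
        if name not in tried:
            return name
    return None  # nothing left to try

def run_agent(state: dict, max_steps: int = 5) -> Optional[str]:
    """Agent loop: choose a tool, act, check the goal, adapt on failure."""
    tried: List[str] = []
    for _ in range(max_steps):  # step budget bounds the loop
        name = choose_tool(state, tried)
        if name is None:
            return None
        tried.append(name)
        result = TOOLS[name](state)
        state["log"].append((name, result is not None))
        if result is not None:  # goal check: we have the document text
            return result
    return None

if __name__ == "__main__":
    state = {
        "doc_id": "inv-42",
        "files": {},
        "remote": {},
        "scans": {"inv-42": "Invoice 42: $120 due"},
        "offline": True,
        "log": [],
    }
    print(run_workflow(state))  # None: the fixed path fails and stops
    print(run_agent(state))     # Invoice 42: $120 due
    print(state["log"])         # [('read_local', False), ('ocr_scan', True)]
```

The interesting parts are the loop, the tool registry, the step budget, and the goal check; swapping the rule in `choose_tool` for a model call is what turns this toy into something agentic in the article's sense. The step budget is also where safety thinking starts to differ from workflows: you bound what the agent may try rather than enumerate what it will do.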

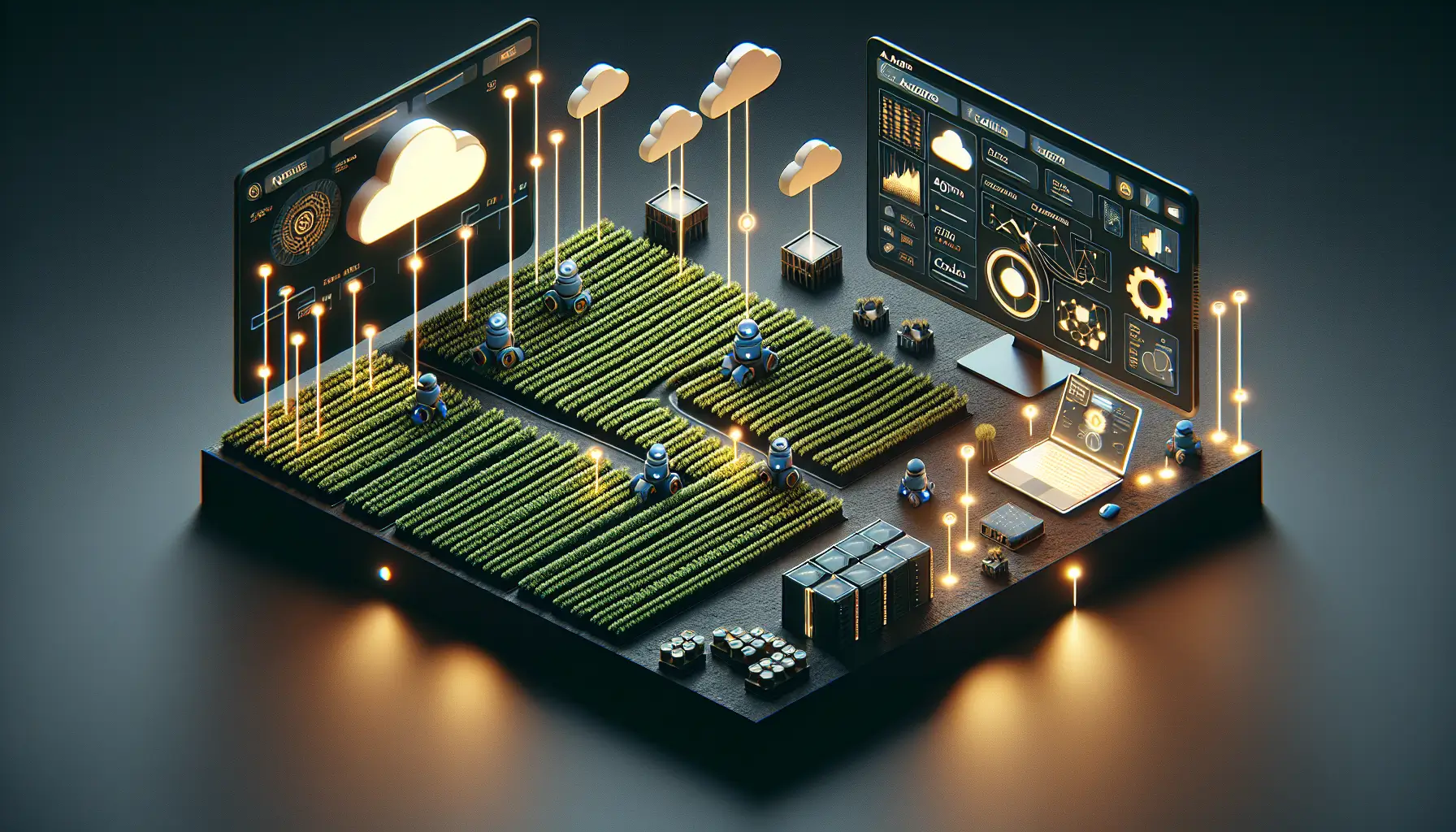

Physical AI is getting infrastructure

In agriculture, we're watching a real-world test of how agentic robotics scales. Reservoir Farms opened its doors this week with 12 startups in residence, each tackling a piece of the farming problem: weeding with electricity (BHF Robotics), precision irrigation (Lumo), and embodied AI pruning (Beagle Technology). What's interesting isn't any single robot. It's that the infrastructure now exists: access to test fields, mentorship, and partnerships with John Deere and UC ANR. The "living lab" model compresses years of iteration into weeks. These startups aren't waiting for perfect robots. They're building them against real crop data, real soil, and real failure modes.

Meanwhile, Nebius and NVIDIA announced they've solved robotics' "three-computer problem," the infrastructure fragmentation that wastes 40% of engineering time. They've integrated NVIDIA's Physical AI Data Factory Blueprint into Nebius's cloud platform. The practical effect: teams can now move from simulation to real robot deployment without stitching together incompatible systems. RoboForce cut its pipeline setup time by 70%. Gains like that are how robotics innovation actually accelerates.

What ties these threads together is this: agents work best when they're placed where the work happens. Desktop agents for knowledge work. Simulated agents in the cloud before they touch real systems. Embodied agents in fields making decisions about water and electricity. The abstraction that matters isn't the agent anymore. It's the infrastructure that lets agents reason, act, and improve across these different environments.