Most developers building RAG systems focus on the wrong problem. They obsess over which language model to use, which vector database to deploy, which embedding model performs best. Then they wonder why retrieval quality is mediocre.

The bottleneck sits earlier, at ingestion. This guide from Kreuzberg makes the point clearly: ingestion quality determines everything downstream. Feed garbage into your vector database, and no amount of clever retrieval will fix it.

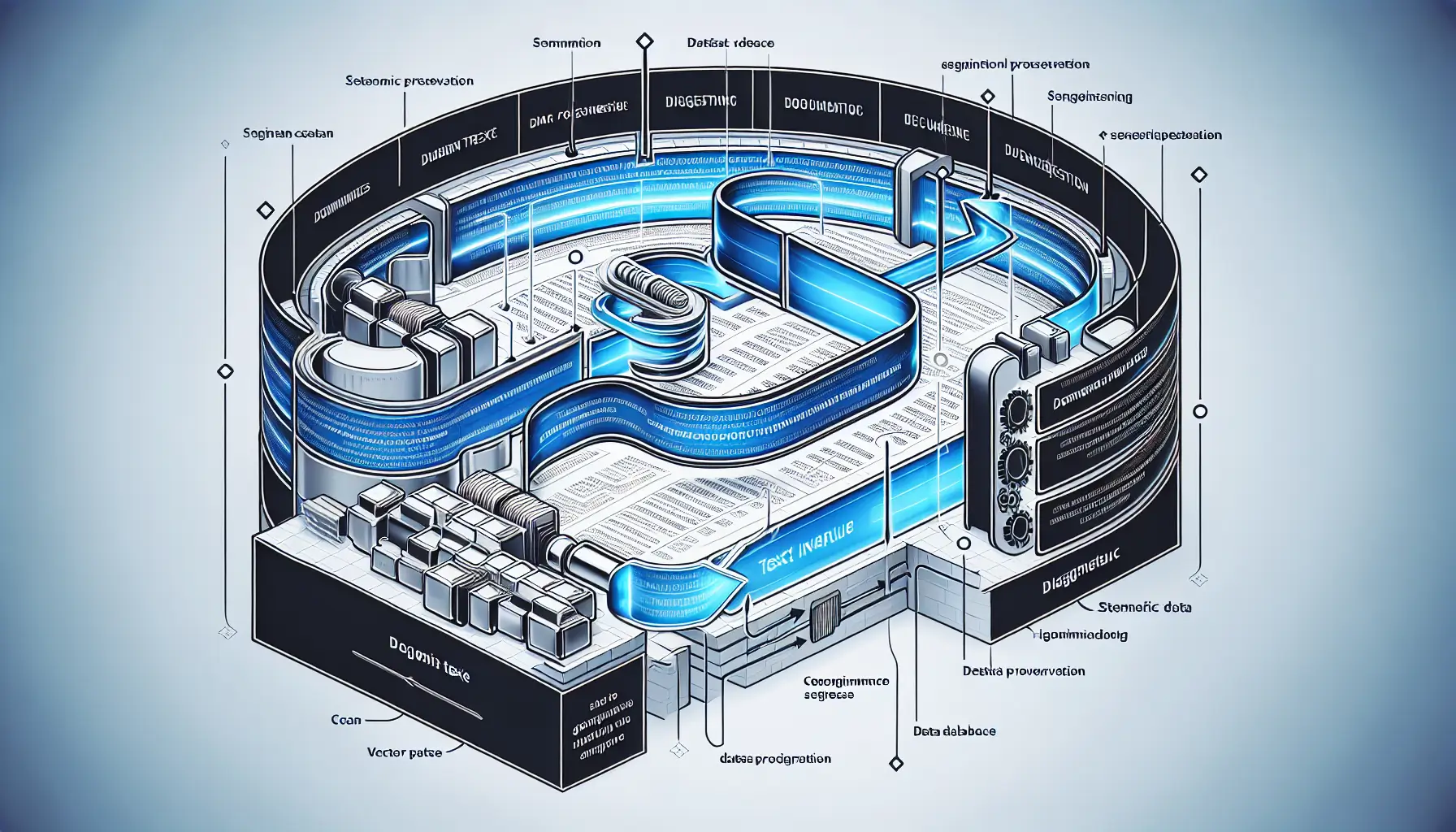

What Ingestion Actually Means

RAG - Retrieval-Augmented Generation - sounds simple. Load documents, split them into chunks, embed the chunks, store them in a vector database, retrieve relevant chunks when someone asks a question. The theory fits in one sentence.

The practice is messier. How do you split a document? By paragraph? By sentence? Fixed character count? Each approach creates different problems. Split too small, and you lose context. Split too large, and you dilute semantic meaning. Split carelessly, and you break ideas mid-thought.
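
To make the trade-off concrete, here is a minimal sketch comparing a fixed character count with a paragraph split. The function names and the sample text are invented for illustration and are not taken from the guide.

```python
def split_fixed(text: str, size: int) -> list[str]:
    """Cut text every `size` characters, ignoring word and sentence boundaries."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def split_paragraphs(text: str) -> list[str]:
    """Split on blank lines so each chunk is one whole paragraph."""
    return [p.strip() for p in text.split("\n\n") if p.strip()]


# Hypothetical two-paragraph document.
sample = (
    "Vector search finds nearby embeddings.\n\n"
    "Chunk boundaries decide what each embedding represents."
)

fixed_chunks = split_fixed(sample, 30)
paragraph_chunks = split_paragraphs(sample)

print(fixed_chunks[0])      # first fixed-size chunk
print(paragraph_chunks[0])  # first paragraph chunk
```

With a size of 30, the first fixed chunk stops partway through the word "embeddings", which is the "break ideas mid-thought" failure in miniature. The paragraph split keeps each sentence whole, at the cost of uneven chunk lengths.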

The guide walks through semantic chunking - preserving meaning across splits. Instead of cutting text at arbitrary boundaries, you identify natural breaks in the content. Think section headers, topic shifts, logical transitions. It's more complex than counting characters, but the retrieval quality difference is substantial.
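
One simple way to approximate this is to split on section headers. The sketch below assumes the input uses markdown-style `#` headers, which is an assumption about the data rather than something the guide specifies. It handles only the "section headers" case and does not detect topic shifts within a section.

```python
import re

# Assumption: sections are marked with markdown-style headers ("# Title").
HEADER = re.compile(r"^#{1,6}\s+(.*)$")


def split_by_headers(markdown: str) -> list[dict]:
    """Group lines under the most recent header so each chunk is one section."""
    chunks = []
    title, lines = "", []
    for line in markdown.splitlines():
        match = HEADER.match(line)
        if match:
            # A new header closes the previous section, if it had body text.
            body = "\n".join(lines).strip()
            if body:
                chunks.append({"title": title, "text": body})
            title, lines = match.group(1).strip(), []
        else:
            lines.append(line)
    # Flush the final section.
    body = "\n".join(lines).strip()
    if body:
        chunks.append({"title": title, "text": body})
    return chunks


# Hypothetical input document.
doc = "# Setup\nInstall the service.\n\n# Usage\nSend a query.\nRead the answer."
sections = split_by_headers(doc)
```

Keeping the header as a `title` field alongside the text means the section name can travel with the chunk as metadata, so a retrieved chunk still says where it came from.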

The Database Integration Reality

Vector databases are sold on the promise of semantic search. You embed your chunks, embed your query, find the closest matches in vector space. In theory, it just works.
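
Stripped of infrastructure, the core operation looks roughly like this. The three-dimensional vectors are hand-written stand-ins for real embeddings, and the chunk texts are made up. A real system would get its vectors from an embedding model and use an index instead of a full scan.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of vector lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, index, k=2):
    """Score every stored chunk against the query and return the k best."""
    scored = [(cosine(query_vec, vec), text) for text, vec in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Toy "index": (chunk text, placeholder embedding).
index = [
    ("How to configure connection pooling", [0.9, 0.1, 0.0]),
    ("Chunking strategies for long PDFs", [0.1, 0.9, 0.2]),
    ("Office lunch menu", [0.0, 0.1, 0.9]),
]

query = [0.2, 0.8, 0.1]  # placeholder embedding of the user's question
results = top_k(query, index, k=2)
```

Here the chunking entry ranks first because its vector points in nearly the same direction as the query. A vector database does the same comparison, with an approximate index so it does not have to score every row.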

In practice, you're managing embedding models, configuring similarity metrics, tuning retrieval parameters, and dealing with the fact that semantic similarity doesn't always mean "actually relevant to the user's question".
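
The choice of similarity metric is a good example of a setting that quietly changes results. In this small sketch, with invented two-dimensional vectors, dot product and cosine similarity pick different winners for the same query because dot product rewards vector length as well as direction.

```python
import math


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def cosine(a: list[float], b: list[float]) -> float:
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))


query = [1.0, 0.0]

# Hypothetical stored vectors: one aligned with the query, one longer but off-axis.
candidates = {
    "short_but_aligned": [1.0, 0.0],
    "long_but_off_axis": [3.0, 3.0],
}

by_dot = max(candidates, key=lambda name: dot(query, candidates[name]))
by_cosine = max(candidates, key=lambda name: cosine(query, candidates[name]))

print(by_dot)     # long_but_off_axis
print(by_cosine)  # short_but_aligned
```

If the embedding model produces normalized vectors, the two metrics rank results identically. If it does not, the metric configured in the database needs to match the one the model was designed for.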

The guide covers the integration with pgvector and Qdrant, showing both the setup and the gotchas. Port configurations, connection pooling, index optimization. The boring infrastructure work that determines whether your system handles ten queries or ten thousand.
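
As a rough idea of what the pgvector side involves, the sketch below holds the SQL as Python strings. It is not taken from the guide: the table name `chunks`, the column names, and the 384-dimension setting are all assumptions, and the HNSW index type requires a pgvector version that supports it. The `%(name)s` placeholders follow the parameter style used by psycopg.

```python
EMBEDDING_DIM = 384  # assumption: must match the embedding model's output size

# Hypothetical schema: one row per chunk, with an HNSW index for cosine distance.
SCHEMA_SQL = f"""
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS chunks (
    id bigserial PRIMARY KEY,
    content text NOT NULL,
    embedding vector({EMBEDDING_DIM}) NOT NULL
);
CREATE INDEX IF NOT EXISTS chunks_embedding_idx
    ON chunks USING hnsw (embedding vector_cosine_ops);
"""

# `<=>` is pgvector's cosine distance operator; smaller means more similar.
SEARCH_SQL = """
SELECT content
FROM chunks
ORDER BY embedding <=> %(query)s::vector
LIMIT %(k)s;
"""


def to_pgvector_literal(vec: list[float]) -> str:
    """Format a Python list as pgvector's text form, e.g. '[0.1,0.2,0.3]'."""
    if len(vec) != EMBEDDING_DIM:
        raise ValueError(f"expected {EMBEDDING_DIM} dimensions, got {len(vec)}")
    return "[" + ",".join(repr(float(x)) for x in vec) + "]"
```

The dimension check is the kind of boring guard that pays off later. Swapping embedding models without updating the column definition is an easy mistake, and failing in application code gives a clearer error than a failed insert.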

Why Production Differs from Prototypes

Building a demo RAG system takes an afternoon. You load a few documents, run some embeddings, get decent-looking results. Shipping a production system that stays reliable under load is a different problem entirely.

Document format variations break parsers. PDFs with weird encodings, Word documents with embedded images, HTML with inconsistent structure. Your prototype worked because you tested it on clean, well-formatted text. Production data is never that cooperative.
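
One cheap defense is a quality gate between parsing and chunking. The sketch below is a hypothetical check, with thresholds picked arbitrarily for illustration. It flags extracted text that is nearly empty or full of replacement and control characters, both common signs of a parser that choked on a file.

```python
def extraction_problems(
    text: str, min_chars: int = 50, max_bad_ratio: float = 0.05
) -> list[str]:
    """Return a list of problem labels for extracted text; empty means it passed."""
    problems = []
    stripped = text.strip()

    # Scanned PDFs and failed parses often yield little or no text.
    if len(stripped) < min_chars:
        problems.append("too_short")

    if stripped:
        # Count U+FFFD replacement characters and non-printable characters,
        # ignoring ordinary whitespace such as newlines and tabs.
        bad = sum(
            1
            for ch in stripped
            if ch == "\ufffd" or (not ch.isprintable() and ch not in "\n\t\r")
        )
        if bad / len(stripped) > max_bad_ratio:
            problems.append("garbled")

    return problems


clean = "Retrieval quality depends on the text that reaches the index. " * 2
garbled = "\ufffd" * 40 + "readable tail of the page"

print(extraction_problems(clean))    # []
print(extraction_problems(garbled))  # ['garbled']
print(extraction_problems(""))       # ['too_short']
```

Documents that fail the gate can be routed to a fallback parser or a review queue instead of being chunked and embedded as-is.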

The guide's emphasis on ingestion quality reflects this reality. You can patch around mediocre retrieval with better prompts or more sophisticated ranking. You can't patch around broken ingestion. If your chunks don't make sense, nothing downstream will save you.

The Tooling Landscape

LangChain appears throughout the guide as the orchestration layer. It's become something like the Rails of LLM applications - opinionated, sometimes over-engineered, but solving enough common problems that most teams default to it.

Whether LangChain is the right choice for your project depends on complexity. For straightforward RAG systems, it might be overkill. For anything involving multiple data sources, complex retrieval logic, or production monitoring, the abstractions start earning their keep.

Kreuzberg positions itself as a knowledge integration platform. The guide is partly documentation, partly a demonstration of their approach. That's worth noting when evaluating the technical recommendations: they're not neutral observations, they come from a team building tools in this space.

What Matters Here

The core message stands regardless of tooling choices. RAG system quality is determined early in the pipeline. Get ingestion right - proper chunking, clean parsing, semantic preservation - and you have a foundation to build on. Ignore it, and you'll spend months debugging retrieval issues that stem from broken input data.

For anyone building a RAG system, particularly in production, this guide provides a practical checklist. Not a silver bullet, not a complete solution, but a clear breakdown of the parts that matter most and the mistakes that cost you later.