Your customer service bot is slow because it's doing everything one step at a time. Sequential LLM calls - check the knowledge base, then verify the customer account, then draft a response - take roughly 12 seconds. Parallel execution drops that to 6.5 seconds. Same work, roughly half the time.

This isn't theoretical optimisation. It's a practical architecture shift that makes conversational AI feel responsive instead of sluggish. A new guide on DEV.to walks through the implementation using LangGraph, complete with production-ready code and state management patterns.

The Problem with Sequential Calls

Most chatbot architectures work like a queue. User sends a message. Bot calls the LLM to understand intent. Waits for response. Calls a database to fetch context. Waits again. Calls the LLM to generate a reply. Waits. Each step blocks the next one.

That's fine for simple queries, but customer service involves multiple data sources. You need order history AND account status AND knowledge base articles. If each lookup takes 2-3 seconds and you're doing them sequentially, the three lookups alone add 6-9 seconds. Put the LLM calls on top and you've built a delay of 10 seconds or more into every interaction.

Users tend to perceive anything over about 3 seconds as slow. By 10 seconds, many have assumed the system is broken. In a sequential architecture, latency is the sum of every step, so each extra data source pushes complex queries further from a fast response.
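The arithmetic is easy to sketch. The step names and latencies below are hypothetical, chosen only to reproduce the 12-second and 6.5-second figures quoted earlier, not measured from any real system. The point is that sequential latency is a sum, while parallel latency is the slowest lookup plus whatever has to wait for it.

```python
# Hypothetical per-step latencies in seconds (illustrative, not measured).
lookups = {
    "knowledge_base": 3.0,
    "account_status": 3.0,
    "order_history": 2.5,
}
draft_reply = 3.5  # the final LLM call needs all lookups, so it cannot overlap them

# Sequential: every step waits for the one before it.
sequential = sum(lookups.values()) + draft_reply

# Parallel: the lookups overlap, so only the slowest one counts.
parallel = max(lookups.values()) + draft_reply

print(f"sequential: {sequential:.1f}s")
print(f"parallel:   {parallel:.1f}s")
```

Note that the drafting step stays on the critical path in both cases. Parallelism only helps with the steps that don't depend on each other.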

How Parallel Sub-Agents Work

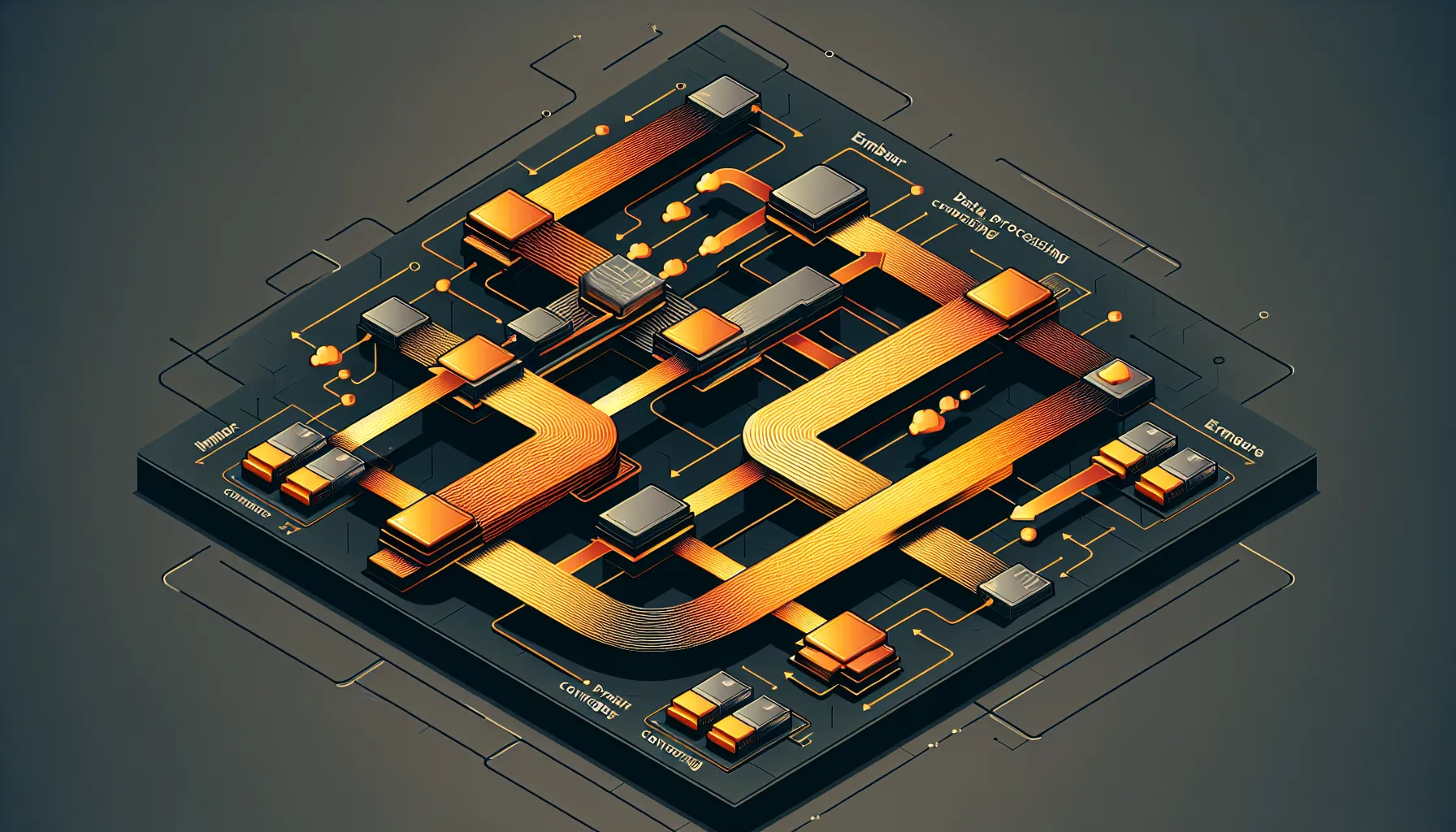

The solution is parallel sub-agent architecture. Instead of one agent doing tasks in sequence, you spawn multiple agents that work simultaneously. One checks the knowledge base while another pulls customer data and a third verifies permissions. The total wait is set by the slowest of them, not by the sum of all three.
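As a minimal sketch of the idea, here is the same fan-out written with Python's standard `asyncio` rather than LangGraph. The three sub-agent functions and their return values are hypothetical stand-ins; `asyncio.sleep` takes the place of real LLM or database calls.

```python
import asyncio


async def check_knowledge_base(query: str) -> dict:
    await asyncio.sleep(0.03)  # stand-in for a real search or LLM call
    return {"articles": [f"How to: {query}"]}


async def fetch_customer(customer_id: str) -> dict:
    await asyncio.sleep(0.02)  # stand-in for a database lookup
    return {"customer": {"id": customer_id, "status": "active"}}


async def verify_permissions(customer_id: str) -> dict:
    await asyncio.sleep(0.01)  # stand-in for a permissions service
    return {"can_refund": True}


async def gather_context(query: str, customer_id: str) -> list:
    # All three coroutines start immediately and run concurrently.
    # gather() returns results in the order the calls were passed in,
    # regardless of which one finishes first.
    results = await asyncio.gather(
        check_knowledge_base(query),
        fetch_customer(customer_id),
        verify_permissions(customer_id),
    )
    return list(results)


context = asyncio.run(gather_context("reset password", "c-42"))
print(context)
```

The wall-clock time here is close to the slowest call (0.03s), not the 0.06s the three would take back to back.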

LangGraph makes this manageable. The framework handles running sub-agents concurrently, collecting their results, and managing state without the usual race condition headaches. The guide includes code for:

Concurrent sub-agent dispatch - launching multiple LLM calls at once without blocking

State aggregation - collecting results from parallel processes safely

Error handling - what happens when one sub-agent fails but others succeed

Evaluation patterns - testing that parallelisation doesn't break logic
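To make the error-handling item concrete, here is one possible approach in plain `asyncio`. It is not the guide's code: the agent names and the simulated timeout are hypothetical. With `return_exceptions=True`, `asyncio.gather` hands failures back as values instead of raising, so one broken sub-agent doesn't throw away the others' work.

```python
import asyncio


async def knowledge_base_agent(query: str) -> list:
    await asyncio.sleep(0.01)
    return ["Refund policy: 30 days"]


async def account_agent(customer_id: str) -> dict:
    await asyncio.sleep(0.01)
    # Simulated failure of a downstream service (hypothetical).
    raise TimeoutError("account service did not respond")


async def run_sub_agents(query: str, customer_id: str):
    names = ["knowledge_base", "account"]
    # return_exceptions=True: exceptions come back in the result list
    # in place of a return value, rather than propagating.
    outcomes = await asyncio.gather(
        knowledge_base_agent(query),
        account_agent(customer_id),
        return_exceptions=True,
    )
    succeeded, failed = {}, {}
    for name, outcome in zip(names, outcomes):
        if isinstance(outcome, BaseException):
            failed[name] = repr(outcome)
        else:
            succeeded[name] = outcome
    return succeeded, failed


succeeded, failed = asyncio.run(run_sub_agents("refund", "c-42"))
print("ok:", succeeded)
print("failed:", failed)
```

What the bot does with a partial result is a product decision: answer from the knowledge base alone, retry the failed agent, or hand off to a human.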

The State Management Pitfall

The tricky bit isn't making calls in parallel - it's managing state when multiple processes are writing results simultaneously. If two sub-agents update the same state object at the same time, you get corruption or lost data.

The guide covers this with concrete patterns: isolated state containers for each sub-agent, merge strategies for combining results, and validation steps that catch conflicts before they break the conversation flow. This is production-grade thinking, not just a proof of concept.
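A rough sketch of those three ideas, assuming nothing about the guide's actual implementation: each hypothetical sub-agent returns its own dictionary (isolated state), a merge function combines them after all have finished, and any key written with two different values is reported as a conflict instead of being silently overwritten. The "first writer wins" rule is just one possible merge strategy.

```python
import asyncio


async def orders_agent(customer_id: str) -> dict:
    await asyncio.sleep(0.01)
    # Each agent builds and returns its own dict; nothing is shared.
    return {"orders": ["A-100", "A-101"], "customer_tier": "gold"}


async def account_agent(customer_id: str) -> dict:
    await asyncio.sleep(0.01)
    return {"account_status": "active", "customer_tier": "silver"}


def merge_states(partials: dict) -> tuple:
    """Merge per-agent results; record keys that disagree."""
    merged, conflicts = {}, []
    for agent_name, partial in partials.items():
        for key, value in partial.items():
            if key in merged and merged[key] != value:
                # Keep the first value seen and flag the disagreement.
                conflicts.append((key, agent_name))
                continue
            merged[key] = value
    return merged, conflicts


async def collect(customer_id: str):
    orders, account = await asyncio.gather(
        orders_agent(customer_id), account_agent(customer_id)
    )
    # Merging happens once, after every agent has finished.
    return merge_states({"orders": orders, "account": account})


merged, conflicts = asyncio.run(collect("c-42"))
print(merged)
print("conflicts:", conflicts)
```

Because the merge runs in one place after the fan-in, there is no moment when two writers touch the same object.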

Real-World Impact

Cutting response time in half changes user behaviour. People tolerate a 6-second wait. They abandon a 12-second one. For customer service automation, that's the difference between deflecting tickets and frustrating users into calling support.

This applies beyond customer service. Any AI system that needs to gather context from multiple sources - research tools, data analysis bots, workflow automation - benefits from parallel architecture. The pattern is transferable.

For teams building on LLM APIs, this is a practical next step once basic chatbot functionality works. You don't need to rebuild everything - the guide shows how to refactor sequential code into parallel execution incrementally.
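One way to approach an incremental refactor, sketched here with hypothetical lookup functions in plain `asyncio`: leave the individual steps untouched, change only the orchestration, and keep the sequential version around long enough to check that both produce the same context.

```python
import asyncio


async def lookup_orders(customer_id: str) -> dict:
    await asyncio.sleep(0.01)
    return {"open_orders": 2}


async def lookup_account(customer_id: str) -> dict:
    await asyncio.sleep(0.01)
    return {"account_status": "active"}


async def lookup_articles(customer_id: str) -> dict:
    await asyncio.sleep(0.01)
    return {"articles": ["Tracking a delivery"]}


async def build_context_sequential(customer_id: str) -> dict:
    # Original shape: each await blocks the next step.
    context = {}
    context.update(await lookup_orders(customer_id))
    context.update(await lookup_account(customer_id))
    context.update(await lookup_articles(customer_id))
    return context


async def build_context_parallel(customer_id: str) -> dict:
    # Refactored shape: same step functions, dispatched together.
    parts = await asyncio.gather(
        lookup_orders(customer_id),
        lookup_account(customer_id),
        lookup_articles(customer_id),
    )
    context = {}
    for part in parts:
        context.update(part)
    return context


async def check_equivalence(customer_id: str):
    seq = await build_context_sequential(customer_id)
    par = await build_context_parallel(customer_id)
    return seq == par, par


same, context = asyncio.run(check_equivalence("c-42"))
print("same result:", same)
```

This only works as shown when the lookups are independent of each other. A step that needs another step's output has to stay downstream of it.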

Why This Matters Now

LLM latency isn't improving fast enough to solve this with better models. API response times are what they are. If each call takes as long as it takes, the most direct way to get faster is to stop waiting for one thing to finish before starting the next.

As AI systems get more complex - more tools, more context sources, more verification steps - sequential execution becomes a bottleneck. Parallel architecture isn't optional for production systems at scale. It's the baseline.

The code examples in the guide are worth studying even if you're not using LangGraph. The patterns - isolated state, concurrent dispatch, merge strategies - apply to any framework. It's a mental model shift from procedural to parallel thinking.

If your bot feels slow and you're not running sub-agents in parallel, that's your answer. Same functionality, half the latency, better user experience. The architecture change pays for itself in the first week.