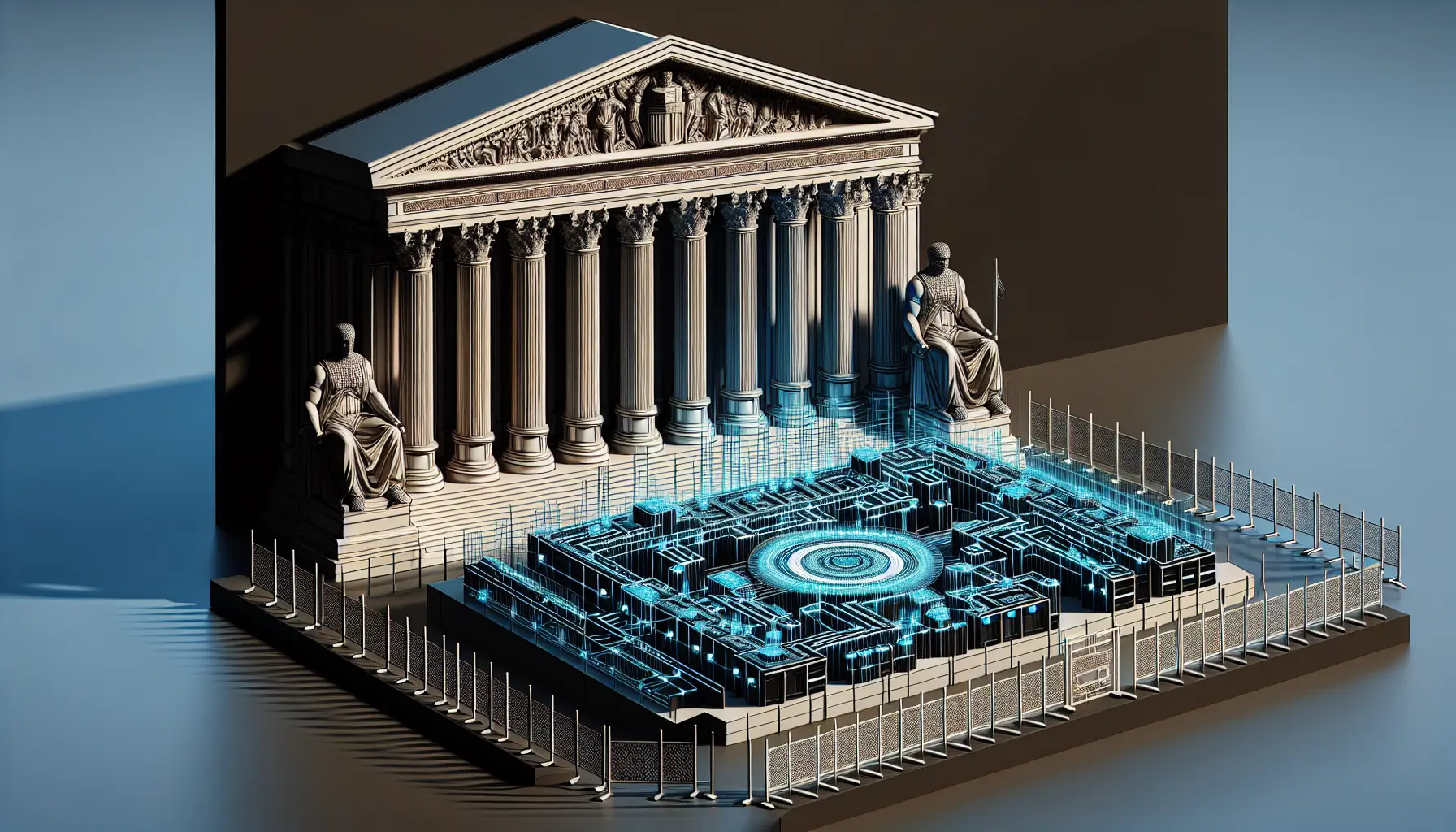

Anthropic - the AI safety company co-founded by former OpenAI researchers - has been designated a supply-chain risk by the Pentagon. The company refused to remove safety guardrails from its models during negotiations over military use, and now faces being locked out of US government systems entirely.

This isn't a cybersecurity vulnerability or a data breach. This is about refusing to compromise on safety principles, even when the customer is the Department of Defense.

What Actually Happened

According to TechCrunch, negotiations between Anthropic and the Pentagon broke down over the company's constitutional AI framework - a set of ethical principles, trained into the model, that govern how Claude responds to certain prompts. The military wanted flexibility. Anthropic said no.

The Trump administration's response was swift: designate the company as a supply-chain risk and order federal agencies to stop using Claude entirely. It's a classification typically reserved for foreign adversaries or companies with serious security flaws. Using it against a US-based AI safety company is unprecedented.

Here's what makes this significant. Anthropic isn't some fringe startup with radical politics. They're deeply embedded in AI infrastructure - their models power tools used across government, enterprise, and research. Amazon has invested billions. They're one of the most technically rigorous AI labs operating today.

And they just walked away from one of the most lucrative customers in the world because the ask conflicted with their founding principles.

The Safety Guardrails in Question

Constitutional AI isn't a marketing term. It's a training method in which a written set of principles shapes the model's behaviour during fine-tuning, including the reinforcement learning phase. Claude doesn't just refuse harmful requests because of a filter sitting on top - the refusal is part of the model's learned behaviour.

In simpler terms: imagine teaching a child right from wrong so deeply that they don't just follow rules because they're watching, but because the principles are part of how they think. That's what Anthropic built. And the Pentagon wanted the option to override it.
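To make the "filter sitting on top" idea concrete, here is a toy Python sketch. It is an illustration only, not Anthropic's code or how Claude works: `base_model`, `with_filter`, and the one-entry `BLOCKED_TERMS` list are hypothetical stand-ins invented for this example.

```python
from typing import Callable

# Hypothetical blocklist for illustration; real filters are far more involved.
BLOCKED_TERMS = {"build a weapon"}


def base_model(prompt: str) -> str:
    """Stand-in for a model with no safety training: it answers anything."""
    return f"answer to: {prompt}"


def with_filter(model: Callable[[str], str]) -> Callable[[str], str]:
    """Wrap a model in an external filter that sits on top of it."""
    def guarded(prompt: str) -> str:
        # The safety check lives outside the model, in this wrapper.
        if any(term in prompt.lower() for term in BLOCKED_TERMS):
            return "refused"
        return model(prompt)
    return guarded


filtered_model = with_filter(base_model)

print(filtered_model("How do I build a weapon?"))  # the wrapper refuses
print(base_model("How do I build a weapon?"))      # without the wrapper, it answers
```

With a bolt-on filter, the safety behaviour is a separate component, and removing it is as simple as calling `base_model` directly. When refusals are learned during training, there is no equivalent wrapper to take away, which is why an "override" is a much harder request than it sounds.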

The military's position isn't entirely unreasonable. Defence applications have edge cases where standard safety frameworks might be too restrictive. If you're analysing threat intelligence or running simulations, you need a model that can engage with scenarios most commercial systems would refuse.

But Anthropic's position is equally defensible: once you build a backdoor, you can't control who walks through it. A model that can be instructed to ignore its safety training in one context can be manipulated to ignore it in others. Removing guardrails selectively, for one customer or one use case only, is a hard technical problem.

What This Means for AI Governance

This isn't just a contract dispute. It's a precedent-setting moment for how governments interact with AI companies that prioritise safety over compliance.

If refusing to weaken safety mechanisms can get you classified as a supply-chain risk, what does that mean for other AI labs? Do you build principles into your models knowing it might lock you out of government work? Or do you design flexibility from the start, knowing it creates vulnerabilities?

The timing is also revealing. We're in an era where AI regulation is still being written, and the balance between innovation, safety, and national security is far from settled. This decision signals that safety-first approaches may not align with government priorities - at least not under the current administration.

For business owners and developers watching this unfold, the implications are immediate. If you're building on Claude or planning to integrate Anthropic's API into government-facing tools, you now have a compliance problem. If you're evaluating AI vendors, you have a new risk factor to consider: will this company compromise on safety if pressured?

And if you're thinking about AI governance within your own organisation, this case study is instructive. Principles are easy to state. They're much harder to defend when the cost is this high.

Anthropic just showed us what it looks like to hold the line. Whether that's admirable or reckless depends entirely on where you stand on the question of AI safety - and how much you trust powerful systems to police themselves.