Rafał Gron spent months optimising vector embeddings for ecommerce search. Cosine similarity scores looked good. Semantic relevance improved. But the search still failed on queries like "cheapest laptop under $500" or "shoes but not sneakers".

The problem wasn't the embeddings. It was that embeddings can't parse constraints.

What Embeddings Miss

Vector search excels at semantic similarity. Ask for "comfortable running shoes" and it'll find products described with related concepts: cushioning, support, athletic footwear. But ask for "running shoes under £100, not Nike" and vector search breaks down. Embeddings don't understand price caps. They don't process exclusions. To an embedding model, "under £100" and "not Nike" are just more words that nudge the query vector; they never act as hard filters, so a £120 pair of Nikes can still be the closest match. Embeddings approximate meaning, but they can't extract structured intent.
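To make the failure concrete, here is a minimal sketch of similarity-only ranking. The three products and their three-dimensional "embeddings" are invented for illustration (real embeddings come from a model and have hundreds of dimensions), and `query_vec` is a hypothetical stand-in for what an embedding model might produce for "running shoes under £100, not Nike".

```python
import math
from dataclasses import dataclass


@dataclass
class Product:
    name: str
    brand: str
    price_gbp: float
    embedding: list[float]  # hypothetical toy vector, not a real model embedding


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of the norms."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


CATALOGUE = [
    Product("Nike Pegasus", "Nike", 120.0, [0.9, 0.8, 0.1]),
    Product("Asics Gel-Excite", "Asics", 85.0, [0.8, 0.7, 0.2]),
    Product("Leather loafers", "Clarks", 70.0, [0.1, 0.2, 0.9]),
]

# Assumed embedding of "running shoes under £100, not Nike": the constraint
# words barely move the vector, so it still points at "running shoes".
query_vec = [0.9, 0.8, 0.15]

# Similarity-only ranking: price and brand play no part in the ordering.
ranked = sorted(CATALOGUE, key=lambda p: cosine(query_vec, p.embedding), reverse=True)
for p in ranked:
    print(f"{p.name:<18} £{p.price_gbp:>6.2f}  sim={cosine(query_vec, p.embedding):.3f}")
```

Because the ranking only looks at vector proximity, nothing stops the £120 Nike shoe from coming first, even though it breaks both of the user's constraints.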

Gron's solution: add an LLM parser before the vector search runs. The LLM reads the query, extracts constraints (price range, brand exclusions, sort preferences), and passes clean parameters to the search layer. The vector embeddings then do what they're good at - finding semantically relevant products - but within the boundaries the user actually specified.
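A minimal sketch of the parsing step's contract, assuming the LLM has been prompted to reply with JSON in a fixed shape. The `SearchConstraints` fields, the `parse_llm_output` helper and the sample replies are all hypothetical, and the LLM call itself is left out; the point is that the model's output gets validated into typed parameters before anything reaches the search layer.

```python
import json
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SearchConstraints:
    semantic_query: str                  # the text left over for vector search
    max_price: Optional[float] = None
    min_price: Optional[float] = None
    exclude_brands: list[str] = field(default_factory=list)
    sort_by: Optional[str] = None        # hypothetical values, e.g. "price_asc"


ALLOWED_SORTS = {None, "relevance", "price_asc", "price_desc"}


def _opt_float(value) -> Optional[float]:
    return float(value) if value is not None else None


def parse_llm_output(raw: str) -> SearchConstraints:
    """Validate the JSON an LLM was asked to return (the schema is an assumption)."""
    data = json.loads(raw)
    c = SearchConstraints(
        semantic_query=str(data["semantic_query"]),
        max_price=_opt_float(data.get("max_price")),
        min_price=_opt_float(data.get("min_price")),
        exclude_brands=[str(b) for b in (data.get("exclude_brands") or [])],
        sort_by=data.get("sort_by"),
    )
    # Reject replies the search layer can't honour instead of guessing.
    if c.sort_by not in ALLOWED_SORTS:
        raise ValueError(f"unsupported sort: {c.sort_by!r}")
    if c.min_price is not None and c.max_price is not None and c.min_price > c.max_price:
        raise ValueError("min_price exceeds max_price")
    return c


# What an LLM *might* return for the two example queries.
shoes = parse_llm_output(
    '{"semantic_query": "running shoes", "max_price": 100, "exclude_brands": ["Nike"]}'
)
laptop = parse_llm_output(
    '{"semantic_query": "laptop", "max_price": 500, "sort_by": "price_asc"}'
)
print(shoes)
print(laptop)
```

Validating at this boundary means a malformed or nonsensical reply fails loudly rather than silently widening the search.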

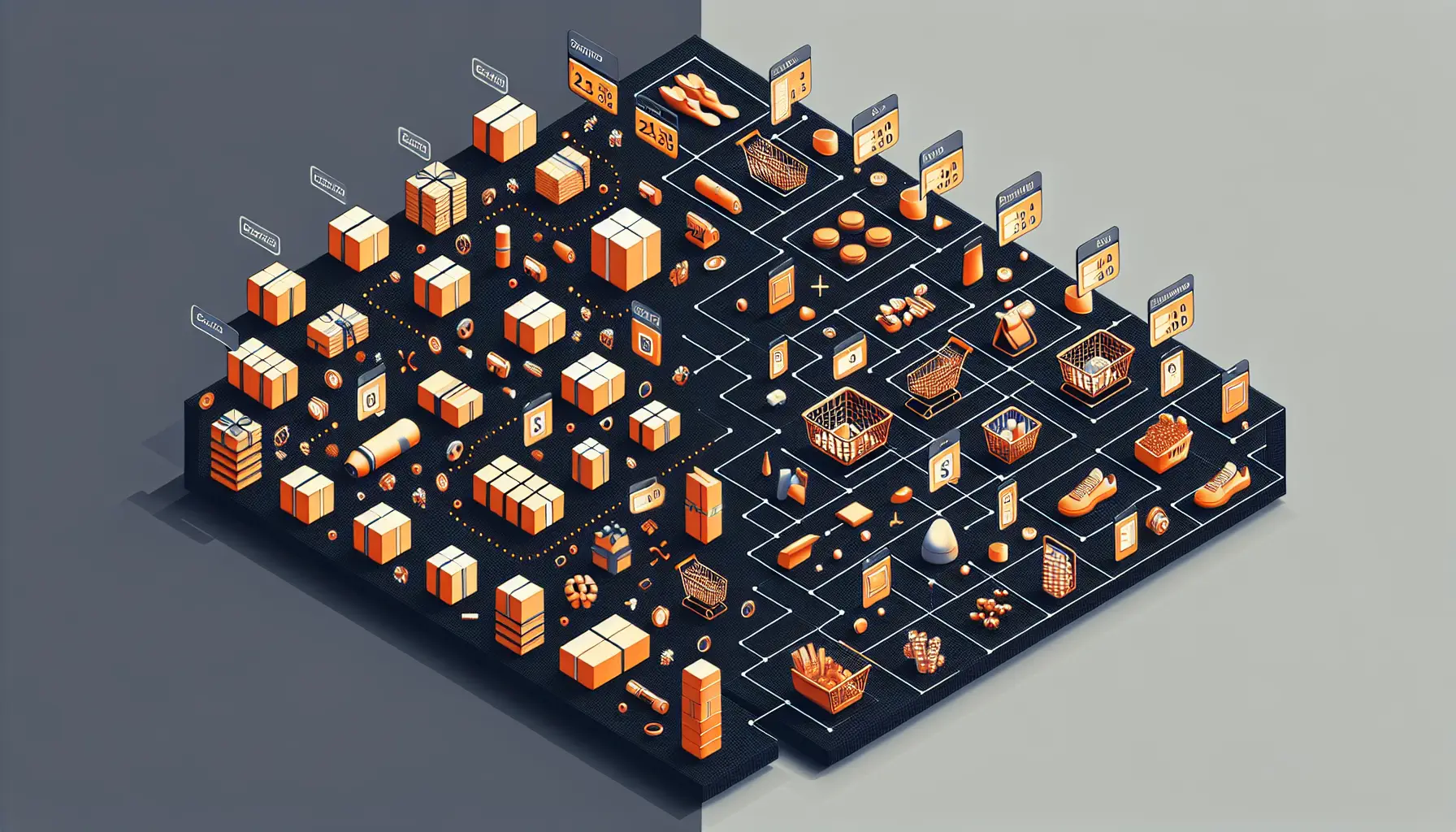

The Architecture That Works

Here's how it flows: the user's query hits the LLM parser first. The parser identifies structured constraints and converts the natural language into searchable parameters. Those parameters filter the product database. Then vector search runs on the filtered set, ranking by semantic relevance.
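The parse, filter, then rank flow can be sketched end to end. This is an illustration under stated assumptions, not Gron's implementation: the catalogue and toy vectors are invented, `SearchConstraints` is a hypothetical stand-in for the parser's output, and the filtering happens in plain Python, whereas a production system would more likely push it into the database or vector store's own metadata filtering.

```python
import math
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Product:
    name: str
    brand: str
    price: float
    embedding: list[float]  # toy vectors; a real system would use model embeddings


@dataclass
class SearchConstraints:
    # Hypothetical shape of the LLM parser's output
    max_price: Optional[float] = None
    min_price: Optional[float] = None
    exclude_brands: list[str] = field(default_factory=list)
    sort_by: Optional[str] = None  # "price_asc", "price_desc", or None for relevance


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def passes(p: Product, c: SearchConstraints) -> bool:
    """Hard constraints: a product that fails any of them is never ranked."""
    if c.max_price is not None and p.price > c.max_price:
        return False
    if c.min_price is not None and p.price < c.min_price:
        return False
    return p.brand.lower() not in {b.lower() for b in c.exclude_brands}


def search(catalogue: list[Product], c: SearchConstraints,
           query_vec: list[float], k: int = 10) -> list[Product]:
    # 1. Structured filtering with the parameters the parser extracted
    candidates = [p for p in catalogue if passes(p, c)]
    # 2. Semantic ranking, only over the filtered set
    ranked = sorted(candidates, key=lambda p: cosine(query_vec, p.embedding), reverse=True)
    top = ranked[:k]
    # 3. An explicit sort preference reorders the most relevant results
    if c.sort_by == "price_asc":
        top.sort(key=lambda p: p.price)
    elif c.sort_by == "price_desc":
        top.sort(key=lambda p: p.price, reverse=True)
    return top


CATALOGUE = [
    Product("Nike Pegasus", "Nike", 120.0, [0.9, 0.8, 0.1]),
    Product("Brooks Ghost", "Brooks", 95.0, [0.85, 0.75, 0.1]),
    Product("Asics Gel-Excite", "Asics", 85.0, [0.8, 0.7, 0.2]),
    Product("Leather loafers", "Clarks", 70.0, [0.1, 0.2, 0.9]),
]

# Assumed embedding of the parser's leftover semantic text, "running shoes"
query_vec = [0.9, 0.8, 0.15]

by_relevance = search(
    CATALOGUE, SearchConstraints(max_price=100, exclude_brands=["Nike"]), query_vec, k=2
)
by_price = search(
    CATALOGUE,
    SearchConstraints(max_price=100, exclude_brands=["Nike"], sort_by="price_asc"),
    query_vec,
    k=2,
)
print([p.name for p in by_relevance])
print([p.name for p in by_price])
```

Applying the price sort to the top-k relevant results, rather than to every candidate that passed the filters, is one design choice among several: it stops a query like "cheapest laptop" from surfacing the cheapest item that merely fits the budget but isn't a laptop.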

The results changed dramatically. Queries with price constraints now return products in the right range. Exclusions actually exclude. Sort preferences get respected. More importantly, high-intent buyers convert better - because they're seeing products that match their actual criteria, not just semantic neighbours.

Why This Matters Beyond Ecommerce

The insight here applies anywhere you're mixing semantic search with structured constraints. Customer support systems need to filter by account status before searching help articles. Document retrieval needs to respect access permissions and date ranges. Job search needs to parse salary requirements and location preferences before matching skills semantically.

Vector search has become the default approach to "AI-powered search", and in many cases it works brilliantly. But it's not enough when users have specific constraints. Embeddings approximate meaning. They don't parse logic.

Gron's architecture shows the value of layering approaches instead of replacing them. The LLM doesn't do the search - it interprets intent. The vector embeddings don't parse constraints - they find relevance. Each component handles what it's actually good at, and the combination solves problems neither could handle alone.

For anyone building search systems right now, this is worth studying. Vector search isn't the complete answer. It's one part of a system that needs constraint parsing, filtering logic, and semantic matching working together. The semantic layer finds relevance. The structured layer respects reality.

High-intent buyers don't want approximate matches. They want what they asked for.