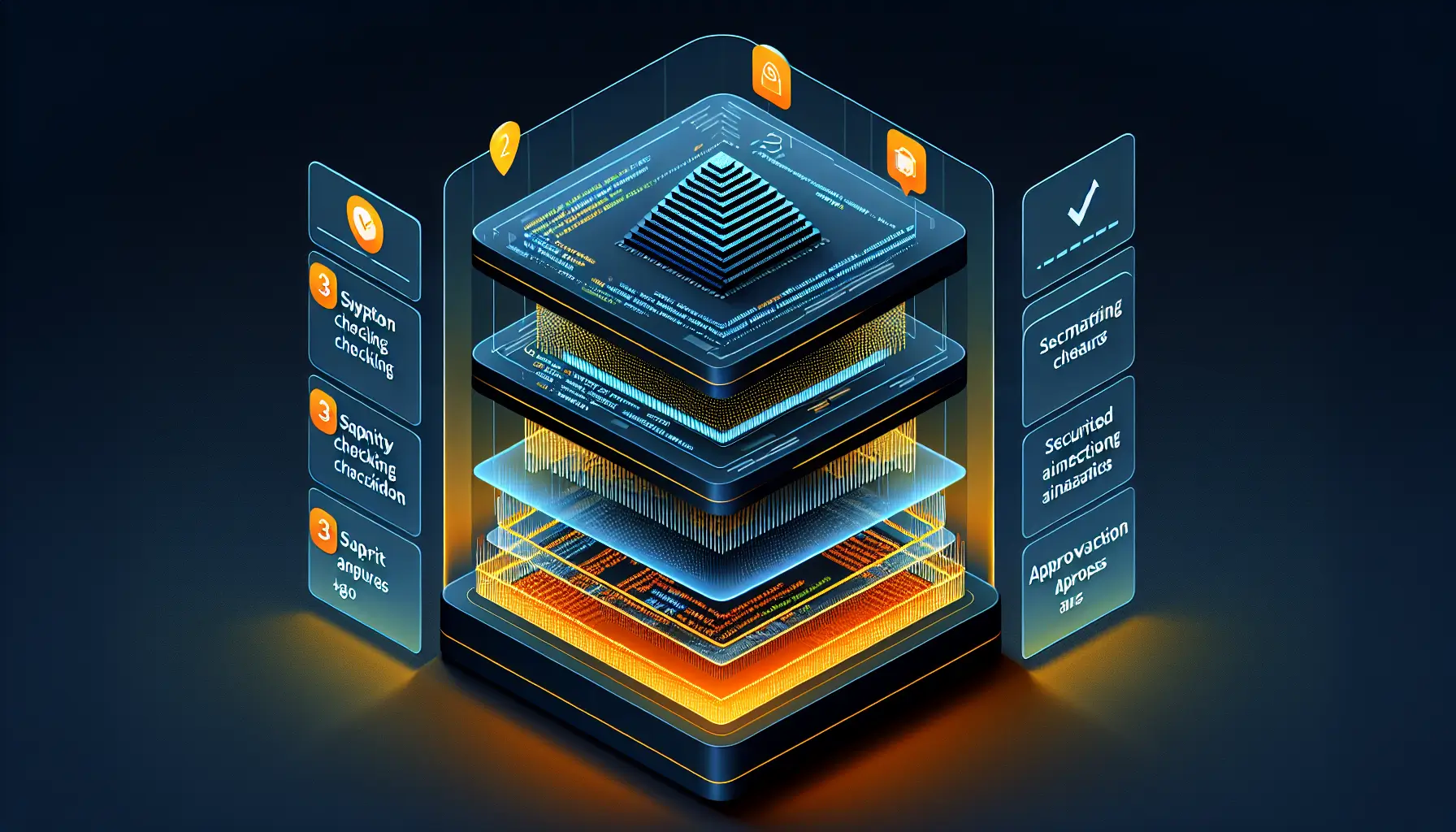

Code review automation isn't new. What's new is that the stack has matured enough for the return on investment to be hard to argue with: between 5x and 11x, according to recent implementation data. But only if you build it properly, in three distinct layers.

Most teams either do nothing (expensive manual reviews catching basic issues) or jump straight to AI-powered review tools (expensive false positives, context misses, and developer frustration). The middle path - the one that actually works - is a layered approach where each level catches different classes of issues.

Layer one: linting and formatting

This is the foundation. Tools like ESLint for JavaScript or Pylint for Python catch syntactic issues, enforce style consistency, and flag common mistakes before code ever reaches a human reviewer. Prettier handles formatting automatically.

Why this matters: roughly 30% of code review comments are about style and formatting. That's wasted human time on issues a machine can fix instantly. Automate this layer completely - run it on pre-commit hooks, fail CI builds if standards aren't met, and never let humans debate whitespace again.

Implementation is straightforward. Pick your standards (Airbnb, Google, whatever fits your team), configure the tools, integrate with your CI pipeline, and enforce it. This should take a week maximum and saves hours every single week thereafter.
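As a rough illustration of the pre-commit step, here is a minimal sketch of a hook script. It assumes ESLint and Prettier are available through `npx` and Pylint is on the PATH. The `CHECKS` mapping and the function names are hypothetical choices for this example, not a standard layout.

```python
"""Hypothetical pre-commit hook: lint only the files staged for commit."""
import subprocess
from pathlib import Path

# Assumed mapping from file extension to the check commands to run.
# Adjust to whatever tools and standards your team has chosen.
CHECKS = {
    ".js": [["npx", "eslint"], ["npx", "prettier", "--check"]],
    ".ts": [["npx", "eslint"], ["npx", "prettier", "--check"]],
    ".py": [["pylint"]],
}


def staged_files() -> list[str]:
    """Return paths that are added, copied, or modified in the git index."""
    result = subprocess.run(
        ["git", "diff", "--cached", "--name-only", "--diff-filter=ACM"],
        capture_output=True, text=True, check=True,
    )
    return [line for line in result.stdout.splitlines() if line]


def build_commands(paths: list[str]) -> list[list[str]]:
    """Group staged paths by tool so each tool runs once over its files."""
    grouped: dict[tuple[str, ...], list[str]] = {}
    for path in paths:
        for base in CHECKS.get(Path(path).suffix, []):
            grouped.setdefault(tuple(base), []).append(path)
    return [list(base) + files for base, files in grouped.items()]


def main() -> int:
    """Run every check; return 1 if any tool reports a problem."""
    failed = False
    for command in build_commands(staged_files()):
        # A non-zero exit code from any tool should block the commit.
        if subprocess.run(command).returncode != 0:
            failed = True
    return 1 if failed else 0
```

To use something like this as a real hook, the file would end with `sys.exit(main())` and be saved as an executable `.git/hooks/pre-commit`. The same `main()` can be called from a CI job so that local and pipeline checks stay identical.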

Layer two: static analysis and security scanning

Once the syntactic layer is automated, move to semantic analysis. Tools like SonarQube scan for code smells, complexity issues, duplicated logic, and security vulnerabilities. This catches another 20-30% of review feedback - things like SQL injection risks, unused variables, overly complex functions, and common anti-patterns.

The key here is tuning. Out of the box, these tools are noisy. You'll get false positives. You'll get warnings about things that don't matter for your codebase. The implementation strategy is to start permissive, tune rules iteratively, and gradually tighten standards as the team adapts.
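One way to picture "start permissive, then tighten" is a CI gate whose blocking threshold moves over time. The sketch below is illustrative Python, not SonarQube's API: the `Finding` type, the severity names, and the phase thresholds are all assumptions made for the example.

```python
from dataclasses import dataclass

# Assumed severity scale, lowest to highest.
SEVERITY_ORDER = ["info", "minor", "major", "critical", "blocker"]

# Hypothetical rollout phases: the minimum severity that fails the build.
PHASE_THRESHOLDS = {
    "permissive": "blocker",  # only the worst findings block a merge
    "tuned": "critical",
    "strict": "major",
}


@dataclass(frozen=True)
class Finding:
    rule: str
    severity: str


def blocking_findings(findings: list[Finding], phase: str) -> list[Finding]:
    """Return the findings severe enough to fail the build in this phase."""
    threshold = SEVERITY_ORDER.index(PHASE_THRESHOLDS[phase])
    return [f for f in findings if SEVERITY_ORDER.index(f.severity) >= threshold]


# Made-up findings for illustration only.
findings = [
    Finding("sql-injection", "blocker"),
    Finding("cognitive-complexity", "major"),
    Finding("unused-variable", "minor"),
]
for phase in PHASE_THRESHOLDS:
    blocked = [f.rule for f in blocking_findings(findings, phase)]
    print(f"{phase}: {blocked}")
```

With this sample data, only the injection finding blocks a merge in the permissive and tuned phases, and the complexity finding starts blocking once the team moves to strict. Everything below the threshold can still be reported as advisory, so developers see it without being stopped by it.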

SonarQube's strength is its enterprise-grade security scanning. It flags vulnerable code patterns such as injection flaws, and its quality gates can be wired into deployment pipelines to block risky code from reaching production. Checking dependencies for outdated packages or known CVEs is usually handled by a dependency scanner running alongside it, depending on the edition and plugins you use. For teams handling sensitive data, this layer isn't optional - it's compliance.

Layer three: AI-powered semantic review

This is where tools like Graphite Reviewer, CodeRabbit, or GitHub Copilot's review features come in. They analyse context, suggest architectural improvements, spot logic errors, and comment on code readability. When tuned properly, they catch issues that static analysis misses - things like "this function works but could be refactored for clarity" or "this edge case isn't handled".

The challenge with AI review tools is managing expectations. They will produce false positives. They will miss nuance. They will sometimes suggest refactors that make things worse. The trick is treating them as junior reviewers - useful for catching obvious issues, but not a replacement for human judgment on architecture or design decisions.

Graphite's approach is particularly practical: their tool comments inline like a human reviewer, integrates with pull request workflows, and learns from feedback. When a developer marks a suggestion as unhelpful, the system adjusts. Over time, the noise decreases and the signal improves.

Implementation strategy: crawl, walk, run

The guide recommends a phased rollout, and this is critical. Don't implement all three layers simultaneously - you'll overwhelm the team and burn trust in automation. Start with linting and formatting. Let the team adapt. Then add static analysis with permissive rules. Tune it. Only then introduce AI-powered review.

Each phase should run for 2-4 weeks before adding the next layer. This gives developers time to adjust workflows, learn what the tools catch, and build confidence that automation is helping, not hindering. Rushed rollouts create backlash and abandoned tools.

The ROI calculations are straightforward: if each developer spends 10 hours per week on code review, and automation handles 50% of that workload, you've saved 5 hours per developer weekly. Over roughly 48 working weeks that is 240 hours, or 30 eight-hour days. At a fully-loaded developer cost of £400-500 per day, that's roughly £12,000-15,000 annually per developer. The tools cost a fraction of that.
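The arithmetic can be checked with a short script. The 48 working weeks and the 8-hour day are assumptions, chosen because they reproduce the annual figure quoted above; swap in your own numbers to see how sensitive the result is.

```python
# Inputs taken from the paragraph above.
REVIEW_HOURS_PER_WEEK = 10   # per developer
AUTOMATED_SHARE = 0.5        # fraction of review work automation handles
DAY_RATE_GBP = (400, 500)    # fully loaded cost per developer day

# Assumptions made for this sketch.
WORKING_WEEKS_PER_YEAR = 48
HOURS_PER_DAY = 8


def annual_saving_gbp(day_rate: float) -> float:
    """Yearly saving per developer at a given fully loaded day rate."""
    hours_saved_per_week = REVIEW_HOURS_PER_WEEK * AUTOMATED_SHARE
    days_saved_per_year = hours_saved_per_week * WORKING_WEEKS_PER_YEAR / HOURS_PER_DAY
    return days_saved_per_year * day_rate


low, high = (annual_saving_gbp(rate) for rate in DAY_RATE_GBP)
print(f"GBP {low:,.0f} to {high:,.0f} per developer per year")
# prints "GBP 12,000 to 15,000 per developer per year"
```

Note that the result scales linearly with every input, so a team that automates only 25% of its review workload should expect half the saving.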

What about false positives?

This is the implementation killer. If your automated review system floods pull requests with irrelevant comments, developers will ignore it - or worse, disable it entirely. The solution is iterative tuning and clear feedback loops.

For static analysis, start with high-confidence rules only. Disable any rule where more than 10% of its findings turn out to be false positives. Gradually enable stricter rules as the codebase improves. For AI review, mark false positives explicitly and check whether the tool learns from that feedback. If it doesn't, consider alternatives.
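Applying the 10% cut-off requires tracking how developers triage each finding. A minimal sketch, assuming a hypothetical triage log of `(rule_id, was_false_positive)` pairs; the rule names, the sample data, and the `MIN_SAMPLES` guard are all illustrative assumptions.

```python
from collections import Counter

FALSE_POSITIVE_LIMIT = 0.10  # the 10% cut-off suggested above
MIN_SAMPLES = 20             # assumed: don't judge a rule on too few findings


def rules_to_disable(triage_log: list[tuple[str, bool]]) -> list[str]:
    """Return rule IDs whose observed false-positive rate exceeds the limit.

    Each log entry is (rule_id, was_false_positive), as recorded by
    developers when they triage a finding.
    """
    totals: Counter[str] = Counter()
    false_positives: Counter[str] = Counter()
    for rule_id, was_false_positive in triage_log:
        totals[rule_id] += 1
        if was_false_positive:
            false_positives[rule_id] += 1

    return sorted(
        rule_id
        for rule_id, total in totals.items()
        if total >= MIN_SAMPLES
        and false_positives[rule_id] / total > FALSE_POSITIVE_LIMIT
    )


# Made-up triage data for illustration only.
triage_log = (
    [("no-magic-numbers", True)] * 6 + [("no-magic-numbers", False)] * 14
    + [("sql-injection", True)] * 1 + [("sql-injection", False)] * 29
    + [("todo-comment", True)] * 3 + [("todo-comment", False)] * 2
)
print(rules_to_disable(triage_log))  # prints ['no-magic-numbers']
```

In this sample, `no-magic-numbers` is flagged because 6 of its 20 findings were false positives, `sql-injection` stays enabled at 1 in 30, and `todo-comment` is left alone because five findings are too few to judge. The minimum-sample guard is a design choice: without it, a single bad finding on a rarely triggered rule would be enough to switch the rule off.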

The goal isn't zero false positives - it's a signal-to-noise ratio that makes engagement worthwhile. If 70% of automated comments are valuable, developers will read them. If it's 30%, they won't.

The real benefit: faster feedback loops

The ROI calculations focus on time saved, but the real benefit is speed. Automated review runs immediately on commit. Developers get feedback in minutes, not hours or days. That tightens the learning loop and catches issues when context is still fresh in their heads.

For senior developers, it means less time reviewing junior code for basic issues. For junior developers, it means faster learning - they see patterns and mistakes flagged instantly, without waiting for a human review cycle. That's valuable for team growth, not just efficiency.

Code review automation is finally mature enough to implement widely. The three-layer stack works. The ROI is real. The tools exist. The question isn't whether to do it - it's whether you're willing to invest the 6-8 weeks required to roll it out properly.