Most teams building AI agents are solving the wrong problem. They're burning GPU cycles retraining models when the faster path to improvement sits in a config file.

A new framework from LangChain breaks AI agent learning into three distinct layers - and understanding which layer to optimise changes everything about how you build systems that compound over time.

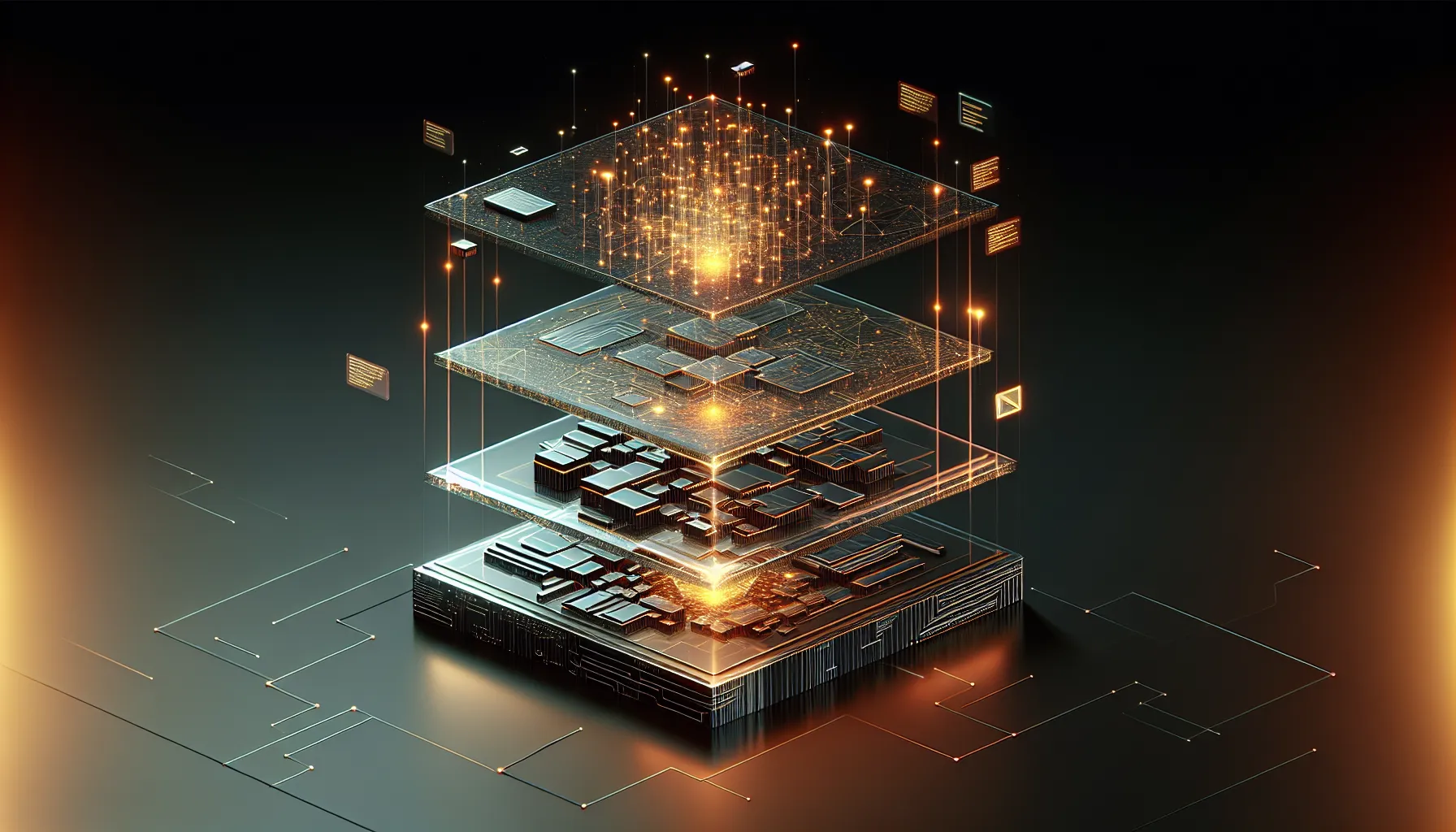

The Three Layers of Agent Learning

Think of an AI agent as a three-part system. At the base sits the model layer - the foundational LLM itself. Above that lives the harness layer - the code that connects the model to tools, APIs, and workflows. At the top sits the context layer - instructions, skills, and memory that guide behaviour.
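To make the split concrete, here's a minimal sketch of the three layers in Python. Every name in it (`Context`, `Agent`, `build_prompt`, `echo_model`) is made up for illustration and isn't taken from LangChain or any other library; the "model" is a stand-in function rather than a real LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Context:
    """Context layer: instructions, skills, and memory (hypothetical shape)."""
    instructions: str = ""
    skills: Dict[str, str] = field(default_factory=dict)
    memory: List[str] = field(default_factory=list)


@dataclass
class Agent:
    model: Callable[[str], str]  # model layer: any prompt -> text function
    context: Context             # context layer: plain data, cheap to edit

    def build_prompt(self, task: str) -> str:
        """Harness layer: the code that decides what the model actually sees."""
        parts = [self.context.instructions]
        parts += [f"Skill {name}: {body}" for name, body in self.context.skills.items()]
        parts += [f"Memory: {item}" for item in self.context.memory]
        parts.append(f"Task: {task}")
        # Skip empty sections so a blank instruction string leaves no gap.
        return "\n".join(part for part in parts if part)

    def run(self, task: str) -> str:
        return self.model(self.build_prompt(task))


def echo_model(prompt: str) -> str:
    """Stand-in for a real LLM call; it simply returns the prompt it was given."""
    return prompt


agent = Agent(model=echo_model, context=Context(instructions="Be concise."))
agent.context.memory.append("User prefers UK spelling")  # a context-layer update
output = agent.run("Summarise the invoice")
```

Notice that the last three lines changed the agent's behaviour without touching `echo_model` or `build_prompt`. Only the data in `Context` moved.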

Here's what matters: these layers improve at completely different speeds and costs.

Retraining a model costs thousands in compute and weeks in calendar time. Updating the harness means changing code - faster than retraining, but it still takes engineering time and testing. Updating context? That's editing a prompt, adding a skill to a library, or logging a new memory. Hours, not weeks. Pounds, not thousands.

Yet most teams default to the slowest, most expensive layer first. They see an agent fail and think "we need a better model" when the real answer might be "we need clearer instructions" or "we need to remember what worked last time."

Why Context Compounds Fastest

The context layer is where agents get genuinely better at YOUR specific work. A model knows general patterns. Context knows that your finance team needs invoices formatted a specific way, or that customer support requests from enterprise clients get priority routing, or that code reviews should check for your company's naming conventions.

This layer improves through use. Every successful task can become a logged skill. Every mistake can update instructions. Every interaction can add to memory - what worked, what didn't, what this user prefers.
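One way that loop might look in practice, sketched under some loud assumptions: the context lives in a JSON file, successes become skills, and mistakes become new instruction lines. The file layout and the function names (`new_context`, `record_outcome`, `load_context`, `save_context`) are hypothetical, not part of any framework.

```python
import json
from pathlib import Path


def new_context() -> dict:
    """Empty context store (hypothetical layout)."""
    return {"instructions": [], "skills": {}, "memory": []}


def load_context(path: str) -> dict:
    """Read the context from a JSON file, or start fresh if it doesn't exist."""
    file = Path(path)
    return json.loads(file.read_text()) if file.exists() else new_context()


def save_context(path: str, ctx: dict) -> None:
    """Write the context back to disk as readable JSON."""
    Path(path).write_text(json.dumps(ctx, indent=2))


def record_outcome(ctx: dict, task: str, succeeded: bool, note: str) -> dict:
    """Fold one finished task back into the context layer."""
    if succeeded:
        # A successful task becomes a reusable skill, keyed by task name.
        ctx["skills"][task] = note
    else:
        # A mistake becomes an extra instruction for next time.
        ctx["instructions"].append(note)
    # Either way, remember what happened.
    ctx["memory"].append({"task": task, "succeeded": succeeded, "note": note})
    return ctx


ctx = new_context()
record_outcome(ctx, "format invoice", True, "Use the finance team's invoice layout")
record_outcome(ctx, "route ticket", False, "Enterprise client requests get priority routing")
```

A real system would also want review and pruning before anything lands in the instructions, but the shape is the point: each update is a small data write, not a training run.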

The beautiful bit: context updates don't require retraining. You're not changing what the model knows - you're changing what it pays attention to. That's the difference between teaching someone everything about plumbing versus handing them a checklist for your specific building.

The Practical Shift

For developers building agent systems, this reframes the entire optimisation strategy. Start with context. When an agent fails, ask: could better instructions have prevented this? Could a logged skill from a previous success have helped? Could memory of past interactions have changed the approach?

Only when you've exhausted context improvements do you move down to the harness layer - changing how the agent connects to tools, refining the workflow logic, adding new integrations. The model layer is last-resort territory. You retrain when the fundamental capabilities aren't there, not when the agent just needs better guidance.
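That ordering can be written down as a tiny triage helper. This is an illustrative sketch only: the `FailureReview` fields are hypothetical answers a person (or a review step) fills in after a failure, and the function just encodes "cheapest layer first".

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    CONTEXT = "context"
    HARNESS = "harness"
    MODEL = "model"


@dataclass
class FailureReview:
    """Answers to the post-failure questions (hypothetical field names)."""
    better_instructions_would_help: bool = False
    logged_skill_would_help: bool = False
    memory_would_help: bool = False
    harness_change_would_help: bool = False


def cheapest_layer_to_fix(review: FailureReview) -> Layer:
    """Pick the cheapest layer that could plausibly have prevented the failure."""
    if (
        review.better_instructions_would_help
        or review.logged_skill_would_help
        or review.memory_would_help
    ):
        return Layer.CONTEXT
    if review.harness_change_would_help:
        return Layer.HARNESS
    # Nothing cheaper applies, so the capability gap is in the model itself.
    return Layer.MODEL


# Context wins even when a harness change would also help.
choice = cheapest_layer_to_fix(
    FailureReview(memory_would_help=True, harness_change_would_help=True)
)
```

The helper is deliberately dumb. Its value is in forcing the three context questions to be answered before anyone reaches for the harness or the training budget.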

This matters for small teams especially. You cannot afford to retrain models every time behaviour needs adjustment. But you absolutely can afford to maintain a growing library of skills, a well-organised memory system, and increasingly precise instructions. That's compound learning that doesn't explode your budget.

What This Changes

The teams building agents that actually get better over time aren't the ones with the biggest training budgets. They're the ones obsessively maintaining their context layer - logging what works, pruning what doesn't, systematically building institutional knowledge into the system.

It's less sexy than fine-tuning a custom model. But it's faster, cheaper, and for most real-world applications, it's where the actual improvement lives. The model gives you potential. Context gives you performance.

Think of it like hiring. You don't retrain a person's entire brain when they make a mistake. You give them feedback, update their instructions, and build on what they already know. Agents work the same way. When you stop treating every problem as if it needs a new foundation and start building on the context layer, systems start compounding.

The interesting bit isn't that this framework exists. It's that so few teams are optimising at the right layer. Understanding where learning actually happens changes how you build, how you budget, and how fast your agents improve. Most of the time, the answer isn't a better model. It's better context.