The hype train loves a shiny model launch. GPT-5, Claude Opus 4, Gemini Ultra 2.0 - each one promises to be smarter, faster, more capable. And yet, if you're building AI into a real product, you've probably noticed something: the model is rarely your problem.

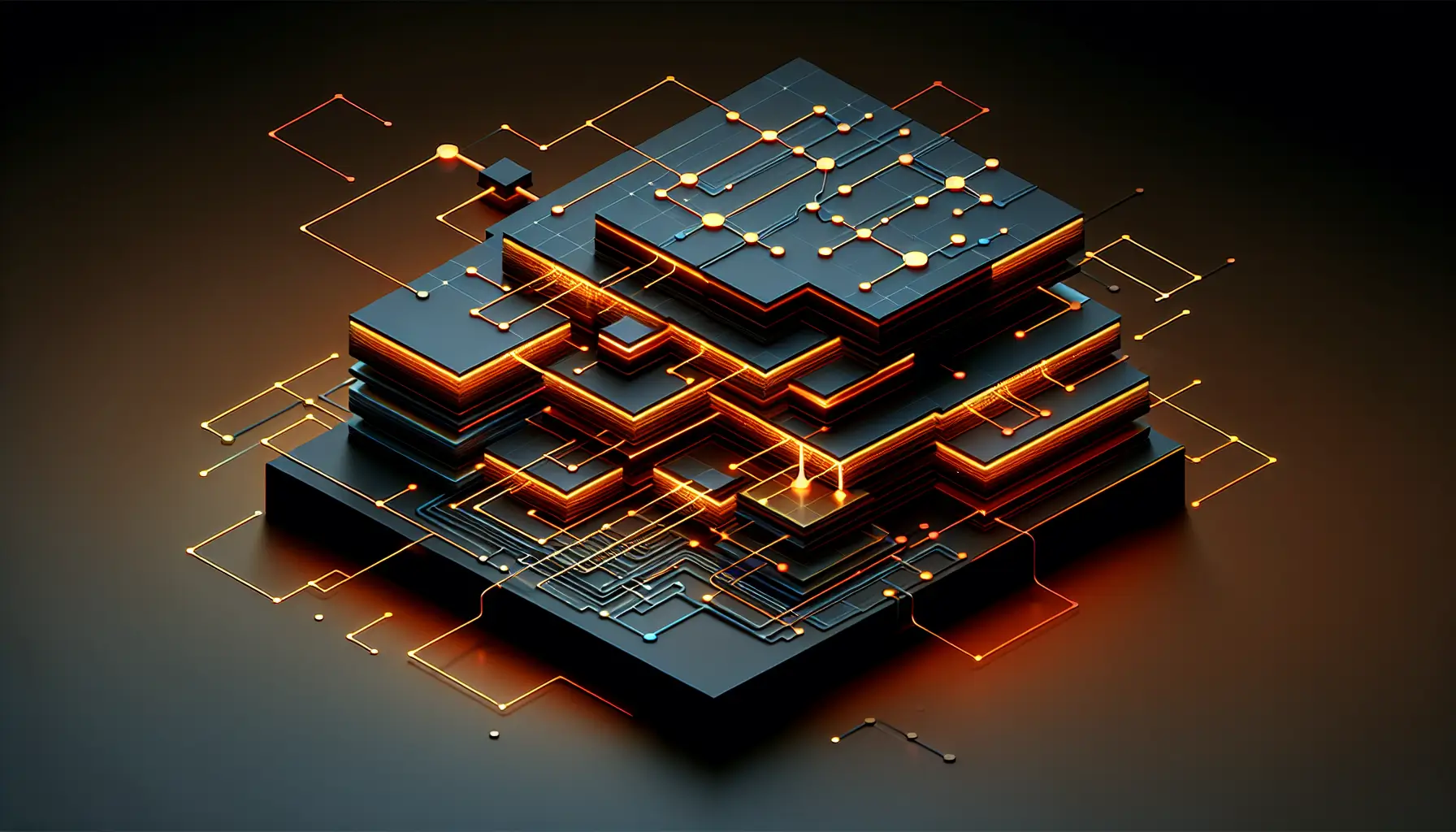

The actual challenge? Everything that sits between the model and your user. The layer nobody photographs for launch videos. The infrastructure that makes AI actually work in production.

The Middleware Moment

There's a pattern emerging across AI development teams. The initial excitement of plugging in an LLM API gives way to a sobering realisation: you need orchestration to route requests intelligently. You need observability to understand what's actually happening. You need guardrails to prevent the model from saying something catastrophically wrong. You need caching because every API call costs money. And you need evaluation frameworks to know if any of this is actually working.

This layer - middleware - is where the real engineering lives. And among developers building in production, it's increasingly seen as the place where infrastructure value is concentrating.

Why? Because models are commoditising faster than anyone expected. GPT-4 launched with a moat. Eighteen months later, you can get comparable performance from half a dozen providers, often cheaper and faster. The differentiation isn't in the model anymore. It's in how you deploy it.

What Middleware Actually Does

Think of middleware as the operating system for AI applications. Your model is the processor - powerful but useless without the software layer that manages it.

Orchestration decides which model gets which request. Maybe your cheap, fast model handles simple queries while your expensive, capable model tackles complex reasoning. Middleware routes intelligently, balancing cost and quality in real time.
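To make the routing idea concrete, here is a minimal sketch in Python. The model names, prices, word-count threshold and keyword list are all invented for illustration; a production router would more likely use a classifier or signals from your own traffic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    cost_per_1k_tokens: float  # illustrative figure, not a real price


# Hypothetical tiers; substitute your providers' real models and pricing.
CHEAP = ModelTier("small-fast-model", 0.0005)
CAPABLE = ModelTier("large-reasoning-model", 0.01)

# Crude, assumed signals that a query needs multi-step reasoning.
REASONING_HINTS = ("why", "compare", "explain", "step by step", "analyse")


def choose_model(query: str, max_simple_words: int = 30) -> ModelTier:
    """Send short queries without reasoning hints to the cheap tier, the rest to the capable tier."""
    text = query.lower()
    is_long = len(text.split()) > max_simple_words
    needs_reasoning = any(hint in text for hint in REASONING_HINTS)
    return CAPABLE if (is_long or needs_reasoning) else CHEAP
```

Calling `choose_model("What are your opening hours?")` picks the cheap tier, while a request to compare two documents goes to the capable one. The heuristic is deliberately naive; the point is that the routing decision lives in your code, not in the model.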

Observability is your sanity. In production, models fail in weird ways. They hallucinate. They refuse to answer. They produce subtly wrong outputs that look plausible. Middleware captures logs, tracks performance, flags anomalies. Without it, you're debugging blind.
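A rough sketch of what that capture step might look like, using only Python's standard library. The `observed_call` wrapper, the refusal phrases and the record fields are assumptions for illustration, and `model_fn` stands in for whatever client call you actually make.

```python
import logging
import time
from typing import Callable

logger = logging.getLogger("llm_middleware")

# Assumed heuristic phrases; tune these against your own refusal examples.
REFUSAL_MARKERS = ("i can't", "i cannot", "i'm unable")


def observed_call(model_fn: Callable[[str], str], prompt: str) -> dict:
    """Call the model, time it, flag obvious anomalies, and log one structured record."""
    start = time.perf_counter()
    output = model_fn(prompt)
    latency_ms = (time.perf_counter() - start) * 1000

    flags = []
    if not output.strip():
        flags.append("empty_output")
    if any(marker in output.lower() for marker in REFUSAL_MARKERS):
        flags.append("possible_refusal")

    record = {
        "prompt_chars": len(prompt),
        "output_chars": len(output),
        "latency_ms": round(latency_ms, 2),
        "flags": flags,
        "output": output,
    }
    logger.info("llm_call %s", record)
    return record
```

This only catches the loud failures: empty outputs and likely refusals. Subtly wrong answers need the evaluation layer, but you can't evaluate what you never logged.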

Guardrails stop catastrophic failures before they reach users. Content filters catch unsafe outputs. Fact-checking layers validate responses. Fallback logic handles errors gracefully. This isn't an optional refinement - it's the difference between a demo and a product.
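As a sketch of the filter-plus-fallback pattern, assuming a simple blocklist and a fixed fallback message (both placeholders; real content filters are usually dedicated classifiers or moderation services):

```python
from typing import Callable

# Placeholder blocklist and message, invented for this example.
BLOCKED_TERMS = ("password:", "ssn:")
FALLBACK_MESSAGE = "Sorry, I can't help with that right now."


def guarded_call(model_fn: Callable[[str], str], prompt: str) -> str:
    """Return the model output only if the call succeeds and passes the content filter."""
    try:
        output = model_fn(prompt)
    except Exception:
        # Provider errors and timeouts degrade to a safe message instead of a stack trace.
        return FALLBACK_MESSAGE

    if any(term in output.lower() for term in BLOCKED_TERMS):
        return FALLBACK_MESSAGE
    return output
```

The user sees either a vetted answer or a polite fallback, never a raw exception or a filtered-out response. In practice you would also log which branch fired so the failure shows up in your monitoring.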

Caching saves money. If five users ask the same question, why call the API five times? Middleware stores responses, serves duplicates instantly, and can cut costs by as much as 40-60%, depending on how often queries repeat.
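Here is a minimal exact-match cache to illustrate the idea. The `ResponseCache` class is hypothetical, keeps everything in memory, and has no expiry; a real deployment would more likely use a shared store with a time-to-live, and possibly semantic matching rather than exact keys.

```python
import hashlib
from typing import Callable


class ResponseCache:
    """In-memory cache keyed on a hash of the normalised prompt (illustrative only)."""

    def __init__(self, model_fn: Callable[[str], str]) -> None:
        self._model_fn = model_fn
        self._store: dict = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prompt: str) -> str:
        # Collapse case and whitespace so trivially different prompts share an entry.
        normalised = " ".join(prompt.lower().split())
        return hashlib.sha256(normalised.encode("utf-8")).hexdigest()

    def get(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = self._model_fn(prompt)  # only pay for the API call on a miss
        self._store[key] = response
        return response
```

With this in place, five identical questions cost one API call and four cache hits. The `hits` and `misses` counters give you the hit rate, which is the number that determines how much you actually save.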

Evaluation tells you if your system is getting better or worse. Middleware tracks accuracy, relevance, user satisfaction. It runs automated tests. It compares model versions. It gives you the data to actually improve.
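A bare-bones version of the "compare model versions" step might look like the sketch below. The substring check is a stand-in scoring rule chosen for simplicity, and the function names are invented; real evaluation usually combines several metrics, including human or model-graded judgements.

```python
from typing import Callable, List, Tuple

# (prompt, substring the answer is expected to contain)
EvalCase = Tuple[str, str]


def pass_rate(model_fn: Callable[[str], str], cases: List[EvalCase]) -> float:
    """Fraction of cases whose output contains the expected substring (case-insensitive)."""
    if not cases:
        raise ValueError("need at least one evaluation case")
    passed = sum(
        1 for prompt, expected in cases
        if expected.lower() in model_fn(prompt).lower()
    )
    return passed / len(cases)


def compare_versions(
    baseline_fn: Callable[[str], str],
    candidate_fn: Callable[[str], str],
    cases: List[EvalCase],
) -> dict:
    """Score two model versions on the same fixed cases and flag a regression."""
    baseline = pass_rate(baseline_fn, cases)
    candidate = pass_rate(candidate_fn, cases)
    return {"baseline": baseline, "candidate": candidate, "regressed": candidate < baseline}
```

Run this on a fixed set of cases before every model or prompt change, and a drop in the candidate's score becomes a failing check rather than a customer complaint.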

Why Developers Resist This

Here's the uncomfortable truth: middleware feels boring. It's not the exciting part of AI development. Nobody dreams of building caching layers. The dopamine hit comes from watching a model generate brilliant text, not from implementing retry logic.

But this is exactly why middleware matters. The teams that treat it as first-class architecture - not a bolt-on refinement - ship reliable products. The teams that skip it end up with demos that break in production.

There's also a skill gap. Many developers jumping into AI come from web development or data science. They know models. They know APIs. But middleware requires distributed systems thinking, cost optimisation, reliability engineering. It's a different skill set.

The Business Reality

For business owners watching AI developments, here's what this means practically: if you're evaluating AI tools or hiring developers, ask about the middleware. Anyone can wire up an API call to GPT-4. The companies that will survive are the ones building robust infrastructure around it.

Look for teams talking about error handling, cost per query, monitoring dashboards, automated testing. Those are the signals of production-ready thinking.

And if you're building AI products yourself? Budget time and resources for middleware from day one. It's not glamorous. It won't make a good demo video. But it's the difference between a prototype and a business.

The middleware layer is where AI engineering grows up. It's where the easy part ends and the real work begins. And increasingly, it's where the competitive advantage lives.