Standard clustering treats every data point as independent. Hidden Markov Models know that's wrong.

A new tutorial demonstrates this by applying HMMs to stock market data. The result? One-fifth as many regime switches as K-Means clustering, because HMMs respect something K-Means ignores: time.

The difference isn't subtle. K-Means sees a volatile day and immediately flags a regime change. HMMs see the same day, check the recent history, and recognise it as noise within an existing regime. One approach has memory. The other doesn't.

Why K-Means Fails on Time Series

K-Means is a workhorse algorithm. You give it data points, tell it how many clusters you want, and it groups similar points together. It's fast, simple, and works beautifully - until your data has a temporal dimension.

Stock markets don't jump randomly between states. A bull market doesn't become a bear market and flip back in a single day. Market regimes persist. They have momentum. A volatile day during a bull run is still part of the bull run.

K-Means can't see this. It looks at each day's features - volatility, volume, price movement - and assigns it to a cluster based purely on those features. No context. No memory. If today looks like a bear market day, K-Means puts it in the bear market cluster, even if yesterday and the day before were clearly bull market days.
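To see what "no memory" means in practice, here is a minimal sketch of the K-Means assignment step in plain Python. The single volatility feature, the centroid values, and the helper name `assign_to_nearest` are assumptions made for illustration, not the tutorial's data or code.

```python
def assign_to_nearest(points, centroids):
    """K-Means assignment step: each point gets the index of its closest
    centroid (squared Euclidean distance). The order of the points plays
    no role in the result."""
    labels = []
    for p in points:
        dists = [sum((a - b) ** 2 for a, b in zip(p, c)) for c in centroids]
        labels.append(dists.index(min(dists)))
    return labels

# Hypothetical 1-D feature (daily volatility, in %) and fixed centroids.
days = [(0.8,), (0.9,), (2.6,), (1.0,), (0.7,)]
centroids = [(1.0,), (2.5,)]  # 0 = calm, 1 = turbulent (assumed labels)

labels = assign_to_nearest(days, centroids)
print(labels)  # [0, 0, 1, 0, 0]
```

The one volatile day gets its own regime, and reversing or shuffling the days would only reorder the labels. Nothing about a day's neighbours enters the decision.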

The result? Noisy, unstable regime classifications that flip constantly. Useless for decision-making.

How HMMs Add Memory

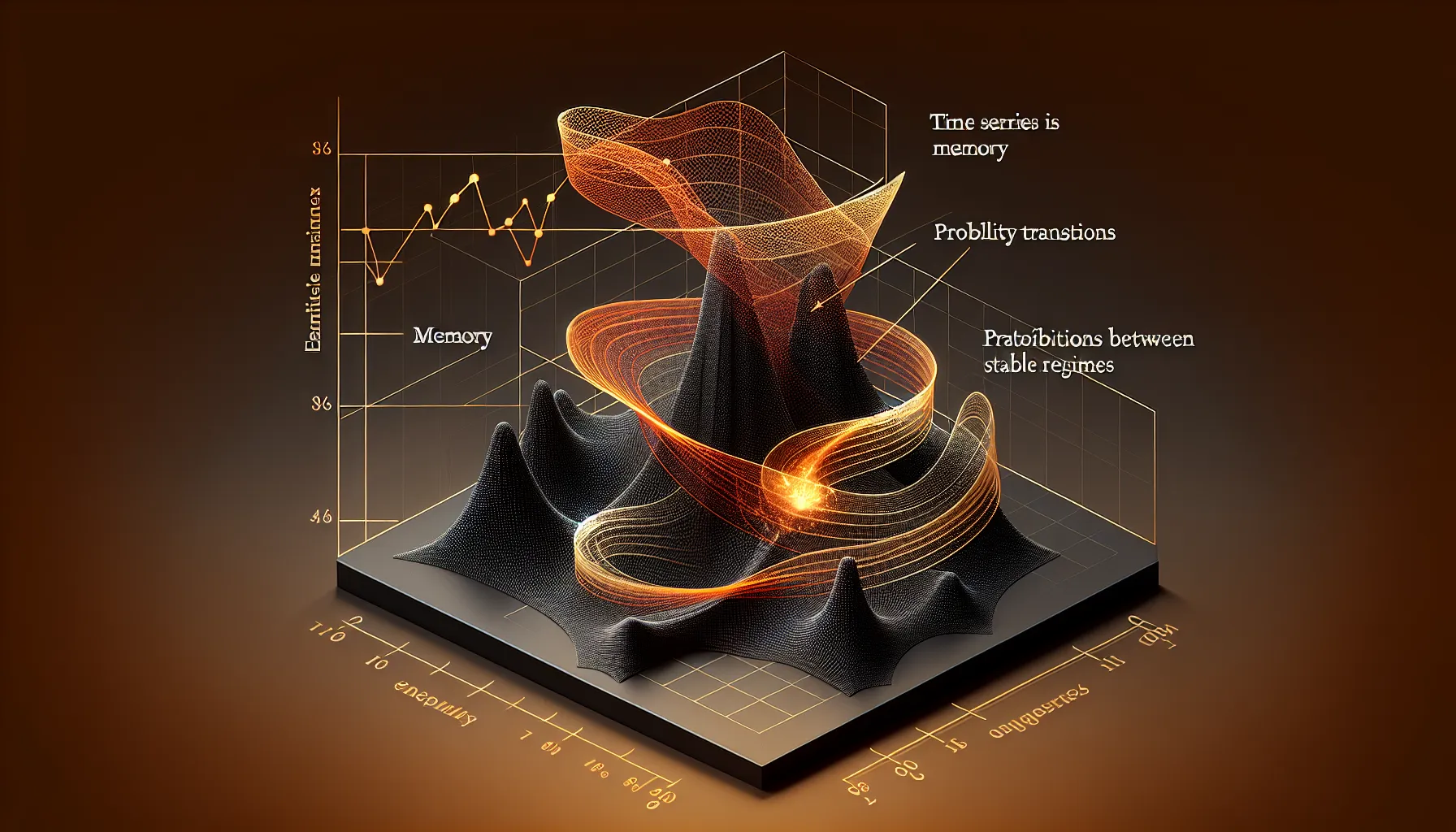

Hidden Markov Models solve this with a simple addition: a transition matrix. This matrix encodes the probability of moving from one state to another. If you're in a bull market today, there's maybe a 95% chance you're still in a bull market tomorrow, and a 5% chance you've switched to a bear market.
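A rough sketch of such a matrix, using the 95%/5% bull row from the example above. The bear row and the helper `expected_duration` are hypothetical additions. The helper relies on a standard fact: if a state keeps itself with probability p, the time spent in it is geometrically distributed with mean 1 / (1 - p).

```python
# Illustrative two-state transition matrix.
# Row = today's state, column = tomorrow's state; each row sums to 1.
STATES = ["bull", "bear"]
TRANSITION = [
    [0.95, 0.05],  # bull -> bull, bull -> bear (from the example above)
    [0.10, 0.90],  # bear -> bull, bear -> bear (hypothetical values)
]

def expected_duration(stay_prob):
    """Expected number of consecutive days in a state whose
    self-transition probability is stay_prob (geometric distribution)."""
    return 1.0 / (1.0 - stay_prob)

for i, name in enumerate(STATES):
    print(name, round(expected_duration(TRANSITION[i][i]), 1))
```

With these numbers a bull regime lasts about 20 days on average and a bear regime about 10, which is the "stickiness" the matrix encodes.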

These transition probabilities act as inertia. The model doesn't ignore today's data, but it weights it against recent history. A single volatile day won't flip the regime classification unless it's part of a sustained pattern.
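The inertia can be worked through in one predict-then-update step. The sketch below is an assumption-laden toy: the transition values, the likelihoods, and the function name `posterior_after_one_day` are all invented for illustration.

```python
def posterior_after_one_day(prev_belief, transition, likelihood):
    """One predict-then-update step of an HMM filter.

    prev_belief[i]   : P(state i yesterday)
    transition[i][j] : P(state j today | state i yesterday)
    likelihood[j]    : P(today's observation | state j)
    """
    n = len(prev_belief)
    # Predict: push yesterday's belief through the transition matrix.
    predicted = [sum(prev_belief[i] * transition[i][j] for i in range(n))
                 for j in range(n)]
    # Update: weight by how well each state explains today's data.
    unnorm = [predicted[j] * likelihood[j] for j in range(n)]
    total = sum(unnorm)
    return [u / total for u in unnorm]

# Hypothetical numbers: yesterday was certainly "bull" (index 0);
# today's volatile observation is 3x more likely under "bear" (index 1).
TRANS = [[0.95, 0.05], [0.10, 0.90]]
VOLATILE_DAY = [0.1, 0.3]
belief = posterior_after_one_day([1.0, 0.0], TRANS, VOLATILE_DAY)
print(belief)  # bull stays the more probable state
```

A memoryless rule would pick bear here, since 0.3 > 0.1. The filter still puts roughly 86% on bull, because a bull-to-bear switch is unlikely on any given day. With these toy numbers it takes three such days in a row before bear becomes the more probable state.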

The tutorial implements two core HMM algorithms from scratch: the Forward algorithm, which computes the probability of each state given the observations so far, and the Viterbi algorithm, which finds the single most likely sequence of states. Both respect temporal dependency. Both deliver stable classifications.
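For orientation, here is one way the two algorithms can be written in plain Python. This is a sketch under stated assumptions, not the tutorial's code: the function signatures, the per-step rescaling in `forward`, the log-space scoring in `viterbi`, and all toy numbers (`START`, `TRANS`, `EMIT`) are choices made for this example.

```python
import math

def forward(start, trans, emit_probs):
    """Scaled Forward algorithm.

    start[i]         : P(state i at t=0)
    trans[i][j]      : P(state j at t+1 | state i at t)
    emit_probs[t][i] : P(observation at t | state i)
    Returns (filtered, log_likelihood); filtered[t][i] is
    P(state i at t | observations up to t).
    """
    n = len(start)
    filtered, log_likelihood, prev = [], 0.0, None
    for t, e in enumerate(emit_probs):
        if t == 0:
            alpha = [start[i] * e[i] for i in range(n)]
        else:
            alpha = [sum(prev[i] * trans[i][j] for i in range(n)) * e[j]
                     for j in range(n)]
        scale = sum(alpha)               # rescale each step to avoid underflow
        prev = [a / scale for a in alpha]
        filtered.append(prev)
        log_likelihood += math.log(scale)
    return filtered, log_likelihood

def viterbi(start, trans, emit_probs):
    """Most likely state sequence, scored in log space."""
    n = len(start)
    log = lambda p: math.log(p) if p > 0 else float("-inf")
    score = [log(start[i]) + log(emit_probs[0][i]) for i in range(n)]
    backptr = []
    for e in emit_probs[1:]:
        new_score, ptr = [], []
        for j in range(n):
            # Best predecessor for state j at this step.
            best_i = max(range(n), key=lambda i: score[i] + log(trans[i][j]))
            ptr.append(best_i)
            new_score.append(score[best_i] + log(trans[best_i][j]) + log(e[j]))
        score = new_score
        backptr.append(ptr)
    # Backtrack from the best final state.
    state = max(range(n), key=lambda i: score[i])
    path = [state]
    for ptr in reversed(backptr):
        state = ptr[state]
        path.append(state)
    path.reverse()
    return path

# Toy inputs (all hypothetical): state 0 = bull, state 1 = bear.
START = [0.5, 0.5]
TRANS = [[0.95, 0.05], [0.10, 0.90]]
EMIT = [[0.3, 0.1], [0.3, 0.1], [0.1, 0.3], [0.3, 0.1], [0.3, 0.1]]

memoryless = [max(range(2), key=lambda i: e[i]) for e in EMIT]
print(memoryless)                    # [0, 0, 1, 0, 0]
print(viterbi(START, TRANS, EMIT))   # [0, 0, 0, 0, 0]
```

On this toy sequence the memoryless rule flags the third day as bear, while Viterbi keeps the whole run in bull: two regime switches would cost more probability than the one bear-like observation gains.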

The 5x Reduction in Regime Switches

On the same stock market dataset, K-Means flagged regime changes constantly. The HMM flagged one-fifth as many. That's not because the HMM is less sensitive - it's because most of the K-Means switches were false positives: noise misinterpreted as signal.
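A comparison like this comes down to counting label changes. The sketch below assumes a "switch" means two consecutive days with different labels; the tutorial's exact counting rule is not stated here, and the two label sequences are made up for illustration rather than taken from its results.

```python
def count_switches(labels):
    """Number of positions where the label differs from the previous one."""
    return sum(1 for prev, cur in zip(labels, labels[1:]) if prev != cur)

# Made-up label sequences for illustration only (not the tutorial's data).
noisy = [0, 0, 1, 0, 0, 1, 1, 0, 1, 1, 1, 0]
smooth = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1]

print(count_switches(noisy), count_switches(smooth))  # 6 1
```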

For anyone trading on regime detection, this matters enormously. False signals are expensive. Every time your model says "the market has changed" and you rebalance your portfolio, you pay transaction costs. If your clustering algorithm is crying wolf five times more often than necessary, those costs compound fast.

The HMM's stability comes from its transition matrix. It learns, from historical data, how sticky each state is. Bull markets tend to persist. Bear markets tend to persist. Transitions happen, but not every day. The model bakes that knowledge into its predictions.

Where Else This Applies

Stock markets are just one use case. HMMs work anywhere you have time-series data with underlying states that change slowly.

Speech recognition was an early application - phonemes don't flip randomly, they follow patterns. Weather modelling uses HMMs because weather systems have persistence. Medical diagnosis benefits from them because patient health states evolve gradually, not chaotically.

The core insight is the same everywhere: clustering without memory is often wrong. If your data has a time dimension, and the states you're trying to detect have any kind of persistence, K-Means will give you noise. HMMs will give you signal.

Implementation from Scratch

The tutorial's strength is that it builds both algorithms - Forward and Viterbi - from first principles. No black-box library imports. You see exactly how the transition matrix enters each step, how the forward probabilities propagate, how Viterbi backtracks through the most likely state sequence.

This matters because HMMs are conceptually simple but easy to implement incorrectly. The matrix operations have to happen in the right order. The probability updates need numerical stability tricks, such as working in log space or rescaling at each step, to avoid underflow. Seeing it built step-by-step makes the method transparent.
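The underflow problem is easy to reproduce. In the sketch below, the probability 0.01 and the sequence length 1000 are arbitrary illustrative values, and `logsumexp` is a hand-written helper rather than a library call.

```python
import math

def logsumexp(values):
    """Stable log(sum(exp(v))) for a list of log-probabilities."""
    m = max(values)
    if m == float("-inf"):
        return m
    return m + math.log(sum(math.exp(v - m) for v in values))

# Multiplying 1000 probabilities of 0.01 underflows to exactly 0.0 ...
naive = 1.0
for _ in range(1000):
    naive *= 0.01

# ... while the same quantity stays finite as a sum of logs.
log_prob = 1000 * math.log(0.01)

print(naive)     # 0.0
print(log_prob)  # about -4605.17

# Adding two probabilities that are each far too small to represent directly:
print(logsumexp([log_prob, log_prob]))  # log_prob + log(2)
```

In log space, products along a path become sums, and the sums over states that the Forward algorithm needs can go through a helper like `logsumexp`.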

For anyone working with time-series classification, this is worth the hour it takes to work through. Not because you'll hand-roll HMMs in production - you'll use a library - but because understanding how the transition matrix works changes how you think about temporal data.

Memory isn't optional. It's the difference between signal and noise.