MiniMax's M2.5 model costs two cents per API call. Claude Opus 4.5 costs seven. Last week, the cheaper model scored 78.2% on SWE-bench Verified - one of the most demanding coding benchmarks in use - while Claude Opus 4.5 topped out at 73.4%. That shouldn't be possible.

The gap isn't explained by model architecture or parameter count. MiniMax M2.5 isn't secretly larger or more advanced. The difference is what the model sees before it writes code. While most AI coding assistants work from a narrow context window - the file you're editing, maybe a few related imports - the setup tested here feeds the model a complete map of the codebase first.

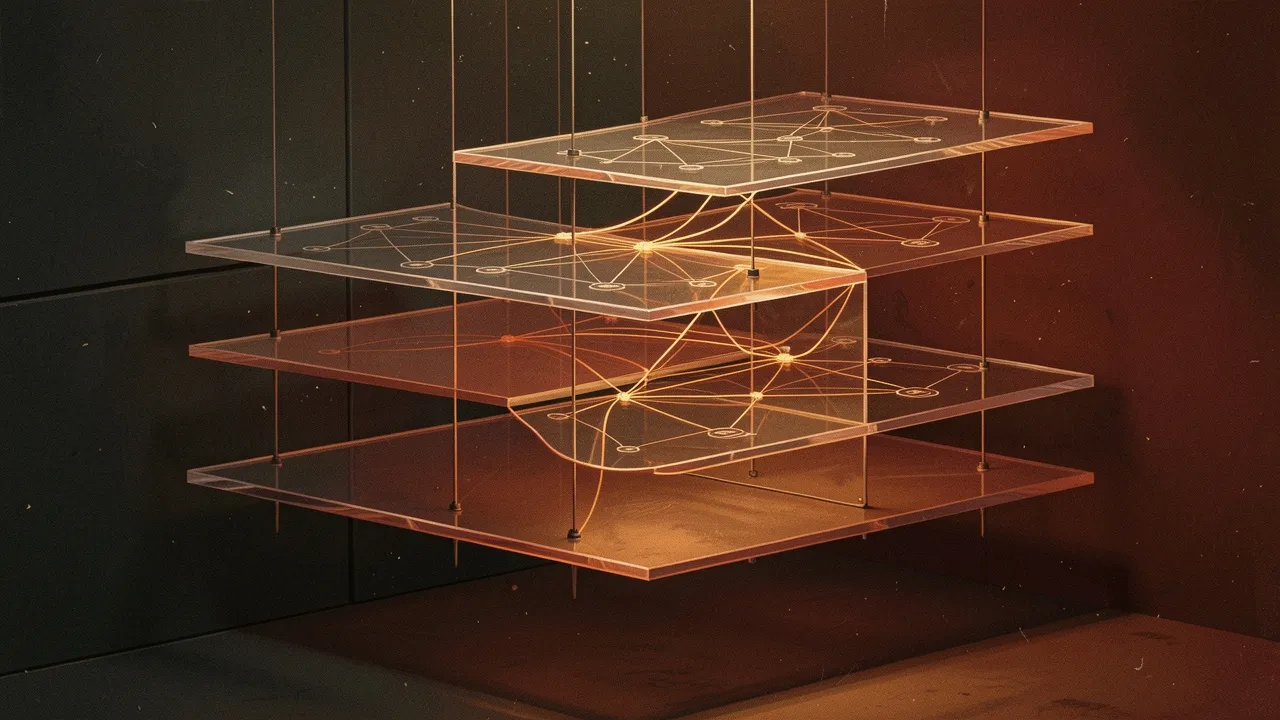

It's called architectural context, and it's delivered through Model Context Protocol (MCP). Before generating a single line of code, the system builds a graph of how the codebase fits together - which modules depend on which, where state flows, which functions call which. Then it hands that graph to the model alongside the task.
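
To make the idea concrete, here is a minimal sketch of the "build a graph, then hand it over with the task" step. It is not the implementation behind the benchmark result, which isn't described in detail here. It assumes a Python codebase and only tracks import edges; the function names, the toy `SOURCES` project, and the prompt layout are all hypothetical.

```python
import ast
from collections import defaultdict


def build_import_graph(sources: dict[str, str]) -> dict[str, set[str]]:
    """Map each module to the set of in-project modules it imports."""
    graph: dict[str, set[str]] = defaultdict(set)
    for module, code in sources.items():
        graph[module]  # make sure every module appears as a node
        for node in ast.walk(ast.parse(code)):
            if isinstance(node, ast.Import):
                targets = [alias.name for alias in node.names]
            elif isinstance(node, ast.ImportFrom) and node.module:
                targets = [node.module]
            else:
                continue
            for target in targets:
                if target in sources:  # ignore stdlib and third-party imports
                    graph[module].add(target)
    return dict(graph)


def render_context(graph: dict[str, set[str]], task: str) -> str:
    """Serialise the graph as plain text and append the task description."""
    lines = ["Module dependencies:"]
    for module in sorted(graph):
        deps = ", ".join(sorted(graph[module])) or "(none)"
        lines.append(f"- {module} -> {deps}")
    lines.append("")
    lines.append(f"Task: {task}")
    return "\n".join(lines)


# Hypothetical three-module project, given as source strings for illustration.
SOURCES = {
    "auth": "import db\nfrom session import Session\n",
    "session": "import db\nimport json\n",
    "db": "import sqlite3\n",
}

GRAPH = build_import_graph(SOURCES)
PROMPT = render_context(GRAPH, "Fix the token expiry check in auth")

if __name__ == "__main__":
    print(PROMPT)
```

A real system would go further than imports (call edges, data flow, ownership of state) and would expose the result through an MCP server rather than a prompt string, but the shape is the same: structure first, task second.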

What Changes With Full Context

SWE-bench isn't a toy benchmark. It's built from real GitHub issues - the kind where you need to understand how authentication flows through three layers of middleware before you touch a single decorator. Models trained on trillions of tokens still fail these tasks because they're working blind. They see the function. They don't see the system.

With architectural context, M2.5 knows where data comes from before it tries to transform it. It knows which error handlers are upstream. It knows what breaks if you rename a variable. The model isn't smarter - it's better informed. And that information is worth more than raw intelligence.
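
The "what breaks if you rename" question is a reachability query over a call graph. The sketch below is one simple way to answer it, assuming the call graph is already available as a dictionary from caller to callees. The function names in `CALL_GRAPH` are invented for illustration.

```python
from collections import deque


def affected_by(call_graph: dict[str, set[str]], changed: str) -> set[str]:
    """Return every function that directly or transitively calls `changed`."""
    # Invert the graph: callee -> set of direct callers.
    callers: dict[str, set[str]] = {}
    for caller, callees in call_graph.items():
        for callee in callees:
            callers.setdefault(callee, set()).add(caller)

    # Breadth-first walk upstream from the changed function.
    seen: set[str] = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for caller in callers.get(current, ()):
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)

    seen.discard(changed)  # a recursive function is not its own dependent
    return seen


# Hypothetical call graph: caller -> functions it calls.
CALL_GRAPH = {
    "handle_login": {"validate_token", "load_user"},
    "refresh_session": {"validate_token"},
    "validate_token": {"parse_claims"},
    "load_user": set(),
    "parse_claims": set(),
}

IMPACT = affected_by(CALL_GRAPH, "parse_claims")

if __name__ == "__main__":
    print(sorted(IMPACT))
```

Handing the model this impact set alongside the task is one way to give it the upstream view described above, instead of leaving it to guess from a single file.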

The result: a cost reduction of roughly 3.5x at the per-call prices quoted above, for equivalent or better output. For a team running thousands of API calls per day, that's the difference between an experiment and a production tool. For solo developers, it's the difference between using AI coding help occasionally and using it on every commit.
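
A quick back-of-the-envelope check shows what that means in practice. The per-call prices are the rounded figures quoted at the top of this piece, which give a ratio of 3.5; the daily call volume is a made-up number, so swap in your own.

```python
# Per-call prices in cents, as quoted in this article (rounded).
PRICE_CENTS = {"MiniMax M2.5": 2, "Claude Opus 4.5": 7}

# Hypothetical workload, not a figure from the article.
CALLS_PER_DAY = 5_000


def daily_cost_dollars(model: str, calls: int = CALLS_PER_DAY) -> float:
    """Daily spend in dollars for a given model at a flat per-call price."""
    return PRICE_CENTS[model] * calls / 100


RATIO = PRICE_CENTS["Claude Opus 4.5"] / PRICE_CENTS["MiniMax M2.5"]
DAILY_SAVING = daily_cost_dollars("Claude Opus 4.5") - daily_cost_dollars("MiniMax M2.5")

if __name__ == "__main__":
    for model in PRICE_CENTS:
        print(f"{model}: ${daily_cost_dollars(model):,.2f} per day")
    print(f"Price ratio: {RATIO:.1f}x, daily saving: ${DAILY_SAVING:,.2f}")
```

Real bills depend on token counts rather than a flat per-call price, and feeding in a codebase graph adds input tokens, so treat this as an upper bound on the saving rather than a forecast.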

The Broader Pattern

This isn't the first time better retrieval has beaten bigger models. Anthropic's research team has reported that targeted context injection can outperform longer context windows. Google's Gemini experiments suggest that structured knowledge graphs improve reasoning accuracy. The pattern holds: precision beats volume.

What makes this result significant is the benchmark itself. SWE-bench Verified is a human-validated subset of SWE-bench: annotators screened out tasks with underspecified issue descriptions or tests that would reject valid fixes. The remaining problems require actual code comprehension - understanding dependencies, spotting edge cases, maintaining consistency across files. These are the tasks where developers spend most of their time.

If a $0.02 model with good context beats a $0.07 model with narrow context on those tasks, the implication is clear: we've been optimising the wrong thing. Scaling model parameters has diminishing returns once you hit a competence threshold. Scaling context quality - what the model knows about the specific problem - keeps paying off well past that point.

What This Means for Builders

For developers already using AI coding tools, this shifts the question from "which model should I use?" to "what context am I giving it?" The best model with bad context loses to a decent model with full system awareness. That's liberating - you're not locked into the most expensive API to get the best results.

For companies building on AI coding assistance, the economics just changed. You can deliver better output at lower cost by investing in retrieval infrastructure instead of chasing the latest flagship model. The tooling to build these context graphs already exists - MCP is open, and the techniques generalise beyond coding tasks.

The harder question is whether this advantage holds as models continue to scale. Will GPT-5 or Claude 5 with narrow context beat MiniMax M2.5 with full context? Maybe. But if both have full context, the cheaper model can still win on value whenever the quality gap is smaller than the price gap. And right now, most implementations aren't even trying to provide that context.

There's a window here. The teams that figure out intelligent retrieval before the next model generation arrives will have tools that punch above their weight class. The rest will keep paying for compute they don't need.