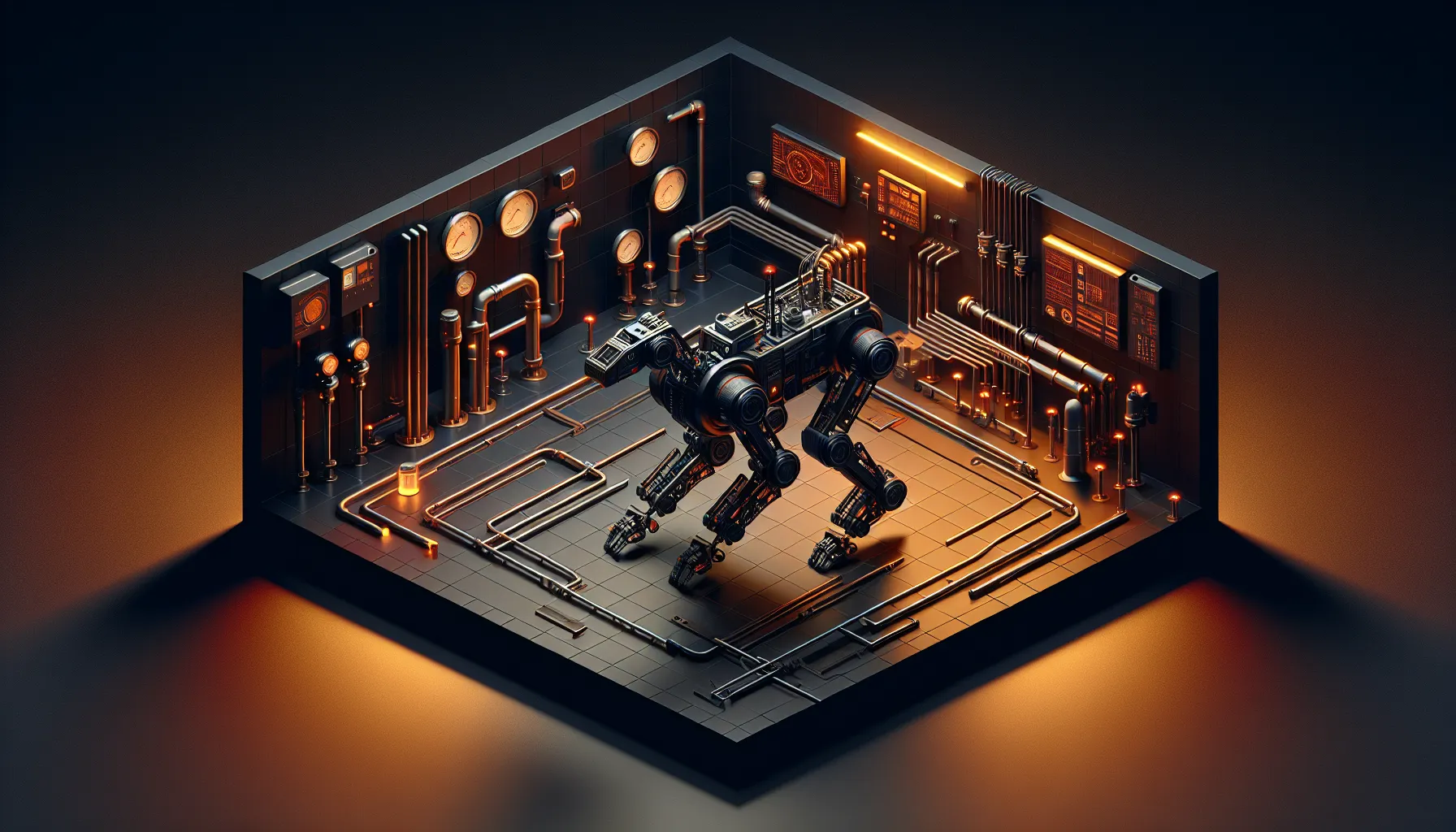

Boston Dynamics just gave Spot a brain upgrade that changes what industrial robots can actually do. The four-legged robot - the one you've seen doing backflips in viral videos - can now inspect factory floors, read instrument gauges, and flag safety violations without being explicitly programmed for each task.

The upgrade comes from integrating Google's Gemini Robotics-ER 1.6 into Spot's existing AIVI-Learning system. What that means in practice: Spot can now understand what it's looking at, not just see it.

From Seeing to Understanding

Previous versions of Spot could navigate environments and avoid obstacles. Impressive, but limited. The robot needed human operators to interpret what its cameras captured. If you wanted Spot to check whether a valve was open or closed, someone had to watch the footage and make the call.

Gemini Robotics-ER changes that equation. The model handles visual reasoning on-device during industrial inspections. Spot can now read analogue gauges - the kind with needles and numbers that humans squint at - and determine whether readings fall within safe parameters. It can spot safety violations like blocked exits or missing warning signs. It can even assess 5S compliance, the workplace organisation methodology (sort, set in order, shine, standardise, sustain) that keeps factories running smoothly.
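To make "read the gauge, then judge the reading" concrete, here is a minimal sketch of the second half of that job. It assumes some upstream vision step has already produced a needle angle, and it assumes the dial scale is linear. The `GaugeSpec` fields and both function names are hypothetical illustrations, not part of any Boston Dynamics or Google API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GaugeSpec:
    """Hypothetical description of one analogue gauge (all fields are assumptions)."""
    min_angle_deg: float  # needle angle at the bottom of the scale
    max_angle_deg: float  # needle angle at the top of the scale
    min_value: float      # reading printed at the bottom of the scale
    max_value: float      # reading printed at the top of the scale
    safe_low: float       # lowest acceptable reading
    safe_high: float      # highest acceptable reading


def angle_to_reading(spec: GaugeSpec, needle_angle_deg: float) -> float:
    """Map a needle angle to a reading by linear interpolation along the dial."""
    span = spec.max_angle_deg - spec.min_angle_deg
    fraction = (needle_angle_deg - spec.min_angle_deg) / span
    # Clamp so a slightly overshooting needle cannot produce an off-scale value.
    fraction = min(1.0, max(0.0, fraction))
    return spec.min_value + fraction * (spec.max_value - spec.min_value)


def is_within_safe_range(spec: GaugeSpec, reading: float) -> bool:
    """Return True when the reading sits inside the configured safe band."""
    return spec.safe_low <= reading <= spec.safe_high


# Example: a 0-10 bar pressure gauge whose needle sweeps 270 degrees.
pressure_gauge = GaugeSpec(
    min_angle_deg=-45.0, max_angle_deg=225.0,
    min_value=0.0, max_value=10.0,
    safe_low=2.0, safe_high=8.0,
)
reading = angle_to_reading(pressure_gauge, 90.0)
status = "ok" if is_within_safe_range(pressure_gauge, reading) else "out of range"
print(f"{reading:.1f} bar: {status}")
```

The hard part in practice is the step this sketch skips: finding the needle and the scale in an image taken at an angle, in poor light. That is the piece the article attributes to the model.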

The interesting bit isn't just what Spot can do now. It's that the robot gets better at these tasks over time through cloud-based continuous improvement. Each inspection feeds data back to refine the model. The robot that inspects your facility next month will be smarter than the one that walked through this week.

The Industrial Application

For factory managers and facility operators, this solves a real problem. Industrial inspections are tedious, repetitive, and critical. Miss a gauge reading trending out of spec and you've got downtime. Miss a safety violation and you've got liability. Human inspectors get tired, distracted, or simply miss things in sprawling facilities.
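A reading "trending out of spec" is easy to miss because each individual value still looks fine. As a rough illustration, the sketch below fits a straight line through the readings from consecutive inspection rounds and estimates how many more rounds remain before the upper limit is reached. The function name and the linear-trend assumption are ours, not something the article attributes to Spot.

```python
from typing import Optional, Sequence


def rounds_until_limit(readings: Sequence[float], upper_limit: float) -> Optional[float]:
    """Estimate how many more inspection rounds until a rising reading hits the limit.

    Fits a least-squares line through readings taken at evenly spaced rounds
    (an assumption) and extrapolates it. Returns None when there are too few
    readings or the trend is flat or falling.
    """
    n = len(readings)
    if n < 2:
        return None
    mean_x = (n - 1) / 2
    mean_y = sum(readings) / n
    covariance = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(readings))
    variance = sum((i - mean_x) ** 2 for i in range(n))
    slope = covariance / variance
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    # Round index at which the fitted line reaches the limit.
    crossing_round = (upper_limit - intercept) / slope
    # Measure from the most recent round; never report a negative count.
    return max(0.0, crossing_round - (n - 1))


# Four rounds, each still under the 8.0 limit, but climbing steadily.
print(rounds_until_limit([5.0, 5.5, 6.0, 6.5], upper_limit=8.0))
```

A consistent route helps here: readings taken at the same points on the same schedule are what make a simple trend line meaningful.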

Spot doesn't get tired. It follows the same route every time, checks the same points, applies the same standards. But unlike previous automation attempts, it can handle variation. A gauge partially obscured by shadow? Gemini's visual reasoning sorts it out. New equipment installed since the last inspection? The model adapts.

The cloud-based improvement loop is where this gets genuinely useful. Traditional industrial robots need reprogramming when environments change. New layout? New equipment? Someone has to update the robot's instructions. With continuous learning feeding back through the cloud, Spot's inspection capabilities evolve without manual intervention.

What This Means for Robotics

The broader pattern here matters. We're watching the gap close between what robots can sense and what they can understand. Computer vision has been good enough to see things for years. The missing piece was semantic understanding - knowing what those things mean and what to do about them.

Large language models, extended into visual reasoning, are filling that gap faster than most people expected. Gemini Robotics-ER is optimised specifically for robotic applications, with lower latency, better handling of industrial environments, and a design meant to run on the kind of hardware that can be bolted onto a mobile platform.

Boston Dynamics chose cloud-based continuous improvement rather than purely local processing. That's a trade-off. Cloud connectivity means inspection data leaves the robot, which some facilities won't accept for security or confidentiality reasons. But it also means every Spot benefits from the collective learning of the entire fleet. One robot encounters a new type of gauge in a chemical plant in Texas, and within days, every Spot in the network knows how to read it.

For developers and builders watching this space, the architecture is worth noting. Boston Dynamics didn't build its own vision model from scratch. It integrated Google's specialised robotics model into its existing platform. That's the emerging pattern across robotics - companies with hardware expertise partnering with AI labs that have model expertise. The integration layer is where the real engineering happens.
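One common way to build that kind of integration layer is to hide the model behind a narrow interface, so the inspection code never depends on a particular vendor. The sketch below shows the shape of the idea only. Every name in it (`VisionReasoner`, `InspectionRunner`, `Finding`, `CannedReasoner`) is hypothetical, and nothing here reflects the actual Spot or Gemini APIs.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Finding:
    """One answered inspection question (hypothetical record type)."""
    checkpoint: str
    question: str
    answer: str


class VisionReasoner(Protocol):
    """Anything that can answer a question about an image (assumed interface)."""

    def ask(self, image: bytes, question: str) -> str:
        ...


class InspectionRunner:
    """Platform-side code that depends only on the interface, not on a vendor."""

    def __init__(self, reasoner: VisionReasoner) -> None:
        self._reasoner = reasoner

    def inspect(self, checkpoint: str, image: bytes, question: str) -> Finding:
        answer = self._reasoner.ask(image, question)
        # Normalise whitespace so downstream rules see a clean string.
        return Finding(checkpoint, question, answer.strip())


class CannedReasoner:
    """Stand-in model that returns a fixed reply, useful for tests and demos."""

    def __init__(self, reply: str) -> None:
        self._reply = reply

    def ask(self, image: bytes, question: str) -> str:
        return self._reply


runner = InspectionRunner(CannedReasoner(" closed \n"))
finding = runner.inspect("valve-7", b"", "Is the valve open or closed?")
print(finding.checkpoint, "->", finding.answer)
```

With this split, swapping one model for another means writing a new class with an `ask` method, while routes, checkpoints, and reporting stay untouched.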

Industrial robotics has been stuck in structured environments for decades. Robots could operate only in carefully controlled spaces, following predetermined paths and handling known objects. What we're seeing now is the beginning of robots that can operate in the messy, variable, unpredictable environments where humans currently work. Spot reading gauges in a factory is just the visible application. The underlying capability - visual reasoning applied to real-world tasks - unlocks a much wider range of automation.

The question for facility managers isn't whether to automate inspections. It's how quickly they can afford to. The robots are ready. The models work. The gap between deployment and ROI is shrinking fast.