Enterprise AI agents have a trust problem. Companies want the productivity boost, but nobody wants to hand autonomous systems the keys to sensitive data without serious safeguards. Nvidia's answer is NemoClaw - an enterprise version of OpenClaw that treats security as infrastructure, not an afterthought.

This matters because the usual approach to AI agent security is bolting protection on later. Build the agent first, patch the holes second. NemoClaw flips that logic. Security is baked into the architecture from the start, which means enterprises can actually deploy these systems without lying awake at night wondering what their AI is accessing.

Why Enterprise AI Agents Need Different Security

The challenge with autonomous AI systems is not just what they can do - it's what they can reach. An agent that can access your CRM, your email system, your customer database, and your financial records has enormous potential to help. It also has enormous potential to leak, misuse, or mishandle sensitive information.

Traditional security models assume a human is making the decisions. AI agents operate differently. A single task can involve many micro-decisions, often touching several systems in sequence, and every one of those accesses is a point where something can go wrong. That creates a far larger attack surface than a single human login.

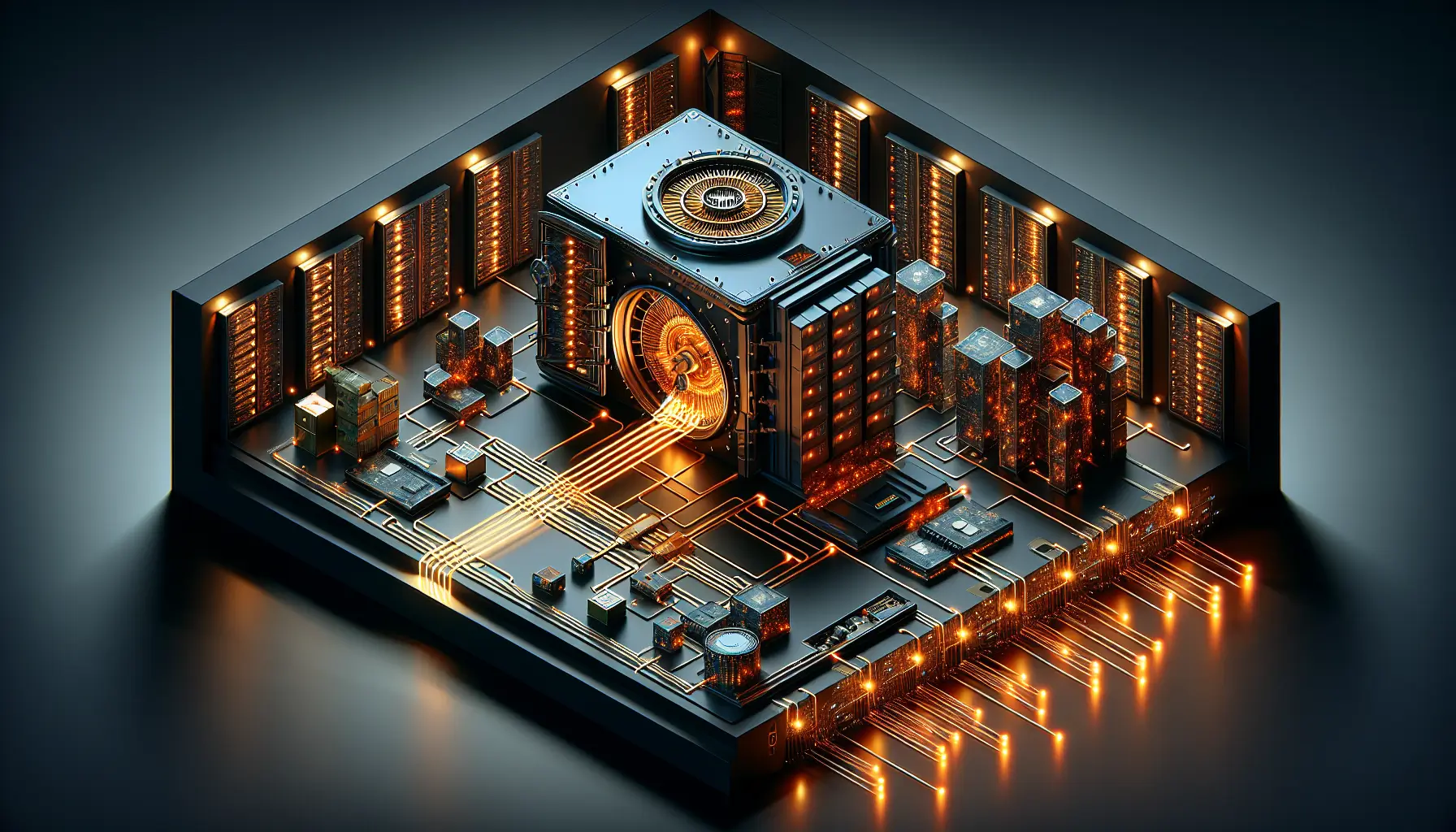

Nvidia's approach with NemoClaw addresses this by embedding security controls at the agent's core rather than wrapping them around the outside. Think of it as building a vault into a building's foundations instead of fitting a padlock to an ordinary door later. The protection is structural, not cosmetic.

What NemoClaw Actually Does

NemoClaw builds on OpenClaw's framework but adds enterprise-grade access controls, audit logging, and permission boundaries that make sense in regulated industries. An agent can execute tasks across systems, but it operates within defined rails. If it tries to access something outside its approved scope, the request fails - and the attempt gets logged.
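NemoClaw's actual interfaces aren't covered here, so what follows is only a minimal, hypothetical Python sketch of the general pattern described above. It shows a deny-by-default allow-list that is checked before every action, with every attempt written to an audit log, whether it succeeds or not. The names `PolicyGate`, `AccessRequest` and `grants`, and the resource strings, are illustrative assumptions. They are not NemoClaw or OpenClaw APIs.

```python
# Hypothetical sketch of scoped agent access with audit logging.
# None of these names come from NemoClaw or OpenClaw.
from collections.abc import Callable
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AccessRequest:
    agent_id: str
    resource: str  # illustrative resource name, e.g. "crm.contacts"
    action: str    # illustrative action, e.g. "read"


@dataclass
class PolicyGate:
    # Allow-list: agent_id -> set of permitted (resource, action) pairs.
    # Anything not listed is denied (deny by default).
    grants: dict[str, set[tuple[str, str]]]
    audit_log: list[dict] = field(default_factory=list)

    def check(self, req: AccessRequest) -> bool:
        allowed = (req.resource, req.action) in self.grants.get(req.agent_id, set())
        # Log every attempt, so denied requests also leave a trail.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": req.agent_id,
            "resource": req.resource,
            "action": req.action,
            "decision": "allow" if allowed else "deny",
        })
        return allowed

    def execute(self, req: AccessRequest, handler: Callable[[], object]) -> object:
        # The handler only runs if the request is inside the agent's approved scope.
        if not self.check(req):
            raise PermissionError(f"{req.agent_id} may not {req.action} {req.resource}")
        return handler()


gate = PolicyGate(grants={"support-agent": {("crm.contacts", "read")}})
gate.execute(AccessRequest("support-agent", "crm.contacts", "read"), lambda: "ok")
try:
    gate.execute(AccessRequest("support-agent", "finance.ledger", "read"), lambda: "ledger")
except PermissionError as exc:
    print(exc)  # support-agent may not read finance.ledger
print([e["decision"] for e in gate.audit_log])  # ['allow', 'deny']
```

The key design choice is that the check sits between the agent and the system it wants to reach. The agent never needs to "decide" to respect its boundaries, because an out-of-scope request simply fails, and the denial is recorded.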

This is not just about preventing malicious use. It's about preventing accidental misuse. An agent optimising for speed might pull data from a restricted source if no boundaries exist. With NemoClaw's architecture, those boundaries are hard-coded into the agent's decision-making process.

For businesses in finance, healthcare, or any sector handling personal data, this changes the calculus. The question shifts from "Can we trust this agent?" to "What specific tasks can this agent perform safely?" That is a much easier question to answer.

The Bigger Shift in AI Agent Design

What Nvidia is doing here signals a broader change in how the industry thinks about deploying AI systems at scale. The early wave of AI tools prioritised capability - what can this model do? The next wave prioritises deployment readiness - how do we put this into production without creating new risks?

Security-first design is not glamorous, but it is what separates experimental AI from enterprise AI. Companies will not deploy agents that feel like a gamble. They need systems that integrate into existing compliance frameworks, respect access controls, and provide clear audit trails when something goes wrong.

NemoClaw gives them that foundation. Whether it becomes the standard remains to be seen, but the approach - treating security as core infrastructure rather than optional protection - is the right direction.

The promise of AI agents has always been about removing friction from workflows. The challenge has been doing that without introducing new vulnerabilities. Nvidia's bet is that enterprises will choose agents they can control over agents that are simply powerful. In regulated industries, that is almost certainly the right call.