Most developers encounter network debugging backwards. You're troubleshooting a Docker container that can't reach an API. Or a load balancer that's dropping connections. Or a microservice that works locally but fails in production. You search Stack Overflow for "connection refused" and get answers involving subnet masks, routing tables, and MAC addresses - concepts that weren't in your bootcamp curriculum.

This guide fills that gap by explaining network devices through the lens of actual problems developers face when deploying applications. Not a bottom-up theoretical treatment. A practical map of which device handles what and why it matters when your API call times out.

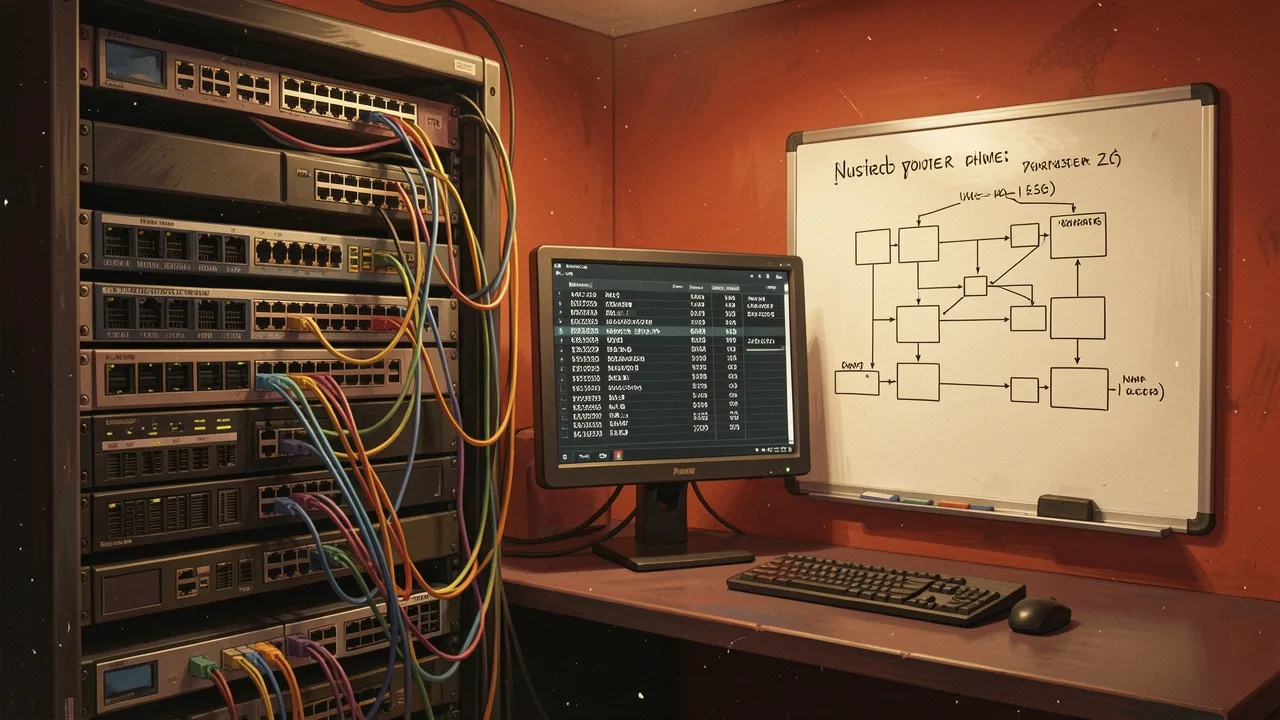

Hubs, Switches, and Why Your Docker Network Works Differently

The foundational concept is network layers - specifically, the difference between Layer 2 (data link) and Layer 3 (network). A hub operates at Layer 1, repeating everything it receives out of every other port. Inefficient but simple. A switch operates at Layer 2, learning which MAC addresses are on which ports and forwarding packets selectively. A router operates at Layer 3, making decisions based on IP addresses and subnet boundaries.

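This matters because a Docker bridge network behaves like a virtual switch. Containers on the same user-defined bridge can find each other by container name because Docker runs an embedded DNS server that resolves names to the network's private IP addresses. On the default bridge network, containers can reach each other by IP address only, unless you use the legacy --link option. Containers on different bridges can't communicate by default, because Docker isolates bridge networks from one another. That's a Layer 3 problem - traffic has to cross a network boundary, so you need routing rules or a container attached to both networks, not just switching.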

Understanding the distinction explains why certain fixes work. If containers can't talk across Docker networks, the fix is at the network boundary: attach the container to both networks with docker network connect, or route between them. If they can't talk on the same network, you're debugging name resolution or firewall rules - different layer, different tools.

MAC Address Tables and Why Switches Know Where to Send Packets

Switches maintain MAC address tables - mappings between MAC addresses and physical ports. When a packet arrives, the switch checks the destination MAC address against its table. If it knows which port leads to that address, it forwards the packet there. If it doesn't, it floods the packet out of every port except the one it arrived on.

This learning process is dynamic. Every time a device sends a packet, the switch records "this MAC address is reachable through this port". Over time, the table fills in, and flooding decreases. But the table has limits - both in size and in how long entries persist. If a device goes offline and reappears on a different port, the switch must relearn its location.
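The learn-then-forward-or-flood behaviour described above can be sketched as a toy model. This is an illustration, not how any particular switch is implemented: the `LearningSwitch` class, its `receive` method, and the 300-second aging value are assumptions chosen for the example, and real hardware also enforces a table size limit that is omitted here.

```python
import time


class LearningSwitch:
    """Toy model of a Layer 2 switch's MAC address table (hypothetical)."""

    def __init__(self, ports, max_age=300.0, clock=time.monotonic):
        self.ports = set(ports)
        self.max_age = max_age  # assumed aging time in seconds
        self.clock = clock      # injectable so aging can be simulated
        self.table = {}         # MAC address -> (port, last_seen)

    def receive(self, in_port, src_mac, dst_mac):
        """Learn the sender's port, then return the set of egress ports."""
        now = self.clock()
        # Learning: the source MAC is reachable through the ingress port.
        self.table[src_mac] = (in_port, now)

        entry = self.table.get(dst_mac)
        if entry is not None and now - entry[1] <= self.max_age:
            # Known destination: forward to one port. If that is the
            # ingress port, the result is empty and the frame is dropped.
            return {entry[0]} - {in_port}

        # Unknown or expired destination: discard any stale entry and
        # flood out of every port except the one the frame arrived on.
        self.table.pop(dst_mac, None)
        return self.ports - {in_port}


switch = LearningSwitch(ports=[1, 2, 3])
a, b = "aa:aa:aa:aa:aa:01", "aa:aa:aa:aa:aa:02"
print(sorted(switch.receive(1, a, b)))  # b unknown, flood: [2, 3]
print(sorted(switch.receive(2, b, a)))  # a was learned on port 1: [1]
print(sorted(switch.receive(1, a, b)))  # b was learned on port 2: [2]
```

Passing a fake `clock` makes it possible to advance time and watch an entry expire, which mirrors the relearning case: once an entry is older than `max_age`, traffic for that address is flooded again until the device sends something.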

For developers, this explains intermittent connectivity issues in environments where devices move between network segments - laptops reconnecting to WiFi, containers rescheduled to different hosts, virtual machines migrating between hypervisors. The switch's table might be stale, causing packets to go to the wrong port until the device sends traffic from its new location or the old entry ages out.

Subnet Masks, CIDR Notation, and Why IP Address Math Matters

Subnets divide IP address space into segments. The subnet mask determines which portion of an IP address identifies the network and which identifies the host. A /24 network (255.255.255.0) means the first 24 bits are the network portion, leaving 8 bits for host addresses - 256 possible addresses, minus network and broadcast, gives 254 usable hosts.
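The arithmetic above can be checked with Python's standard `ipaddress` module. This is a minimal sketch; the `subnet_summary` helper is a name invented for this example, and the "minus two" rule applies to conventional subnets (a /31 or /32 is a special case it does not handle properly).

```python
import ipaddress


def subnet_summary(cidr):
    """Return the basic numbers for an IPv4 subnet given in CIDR form."""
    net = ipaddress.ip_network(cidr)
    host_bits = net.max_prefixlen - net.prefixlen  # 32 - 24 = 8 for a /24
    total = 2 ** host_bits
    return {
        "netmask": str(net.netmask),
        "network": str(net.network_address),
        "broadcast": str(net.broadcast_address),
        "total_addresses": total,
        # Subtract the network and broadcast addresses.
        "usable_hosts": max(total - 2, 0),
    }


print(subnet_summary("10.0.1.0/24"))
```

For `10.0.1.0/24` this reports a netmask of 255.255.255.0, 256 total addresses, and 254 usable hosts, matching the figures in the text.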

CIDR notation (10.0.1.0/24) is shorthand for the same concept. The /24 means "the first 24 bits are fixed, the rest are variable". This determines routing behaviour. If two IP addresses share the same network portion, they're on the same subnet and can communicate via Layer 2 switching. If they differ, they need Layer 3 routing.
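The same-subnet test is just a comparison of network portions. A small sketch, again using the standard `ipaddress` module; `same_subnet` and `delivery_path` are hypothetical helper names, and the returned labels are only descriptive strings.

```python
import ipaddress


def same_subnet(addr_a, addr_b, prefix_len):
    """True if both addresses share the same network portion."""
    net_a = ipaddress.ip_interface(f"{addr_a}/{prefix_len}").network
    net_b = ipaddress.ip_interface(f"{addr_b}/{prefix_len}").network
    return net_a == net_b


def delivery_path(src, dst, prefix_len):
    """Describe whether traffic stays on the local segment or needs a router."""
    if same_subnet(src, dst, prefix_len):
        return "layer 2: deliver directly on the local segment"
    return "layer 3: hand off to a router"


print(delivery_path("10.0.1.10", "10.0.1.200", 24))  # same /24
print(delivery_path("10.0.1.10", "10.0.2.10", 24))   # different /24
```

Note that the answer depends on the prefix length: the two addresses in the second call are on different /24 networks but would be on the same /16.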

This matters when configuring cloud VPCs or container networks. If you assign a microservice an IP address outside your subnet's range, it typically can't communicate with other services even if firewalls are open. Its peers treat the address as off-subnet and hand the traffic to a router, and the routing table has no rule for how to reach it. The packet gets dropped before any application-layer logic runs.

Routing Decisions and Why Your API Call Hits the Wrong Server

Routers maintain routing tables - rules for where to send packets based on destination IP address. The table contains network prefixes and next-hop addresses. When a packet arrives, the router finds the most specific matching prefix and forwards the packet to that next hop.

"Most specific" matters. If you have routes for both 10.0.0.0/8 and 10.0.1.0/24, a packet destined for 10.0.1.50 matches both. The router chooses the /24 route because it's more specific - longer prefix, narrower match. This is how traffic gets directed to the right subnet even when multiple routes overlap.

For developers deploying to Kubernetes or multi-region cloud environments, this explains routing behaviour that looks arbitrary. If your service calls another service by IP and gets routed to an unexpected instance, check your routing tables. You might have overlapping CIDR blocks, or a more specific route pointing to an old deployment, or a default gateway override that's sending traffic to the wrong cluster.
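The "most specific prefix wins" rule is easy to reproduce when checking whether overlapping CIDR blocks explain a surprising route. The sketch below is a simplified model of a route lookup, not a real router's implementation; `longest_prefix_match`, the route list, and the next-hop addresses are all made up for illustration.

```python
import ipaddress


def longest_prefix_match(routes, destination):
    """Pick the route whose prefix contains the destination and is longest.

    routes is a list of (cidr_prefix, next_hop) pairs. Returns the winning
    (network, next_hop) pair, or None if no prefix matches.
    """
    dest = ipaddress.ip_address(destination)
    matches = []
    for prefix, next_hop in routes:
        network = ipaddress.ip_network(prefix)
        if dest in network:
            matches.append((network, next_hop))
    if not matches:
        return None
    # A longer prefix length means a narrower, more specific match.
    return max(matches, key=lambda match: match[0].prefixlen)


# Hypothetical routing table with overlapping prefixes.
routes = [
    ("0.0.0.0/0", "192.168.0.1"),   # default gateway
    ("10.0.0.0/8", "10.255.0.1"),
    ("10.0.1.0/24", "10.0.1.1"),
]

for dest in ("10.0.1.50", "10.2.3.4", "8.8.8.8"):
    network, next_hop = longest_prefix_match(routes, dest)
    print(f"{dest} -> {network} via {next_hop}")
```

With this table, 10.0.1.50 matches all three prefixes and takes the /24 route, 10.2.3.4 falls back to the /8, and 8.8.8.8 only matches the default route.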

Translating Theory to Debugging

The guide's strength is connecting these concepts to real troubleshooting workflows. If a container can't reach an external API, you check routing tables to confirm a path exists. If it can reach the API but gets no response, you check firewall rules and NAT configurations. If it works intermittently, you suspect MAC address table timeouts or ARP cache issues.

Each layer of the network stack has its own failure modes. Layer 2 failures look like intermittent connectivity on the same subnet. Layer 3 failures look like "no route to host" errors. Layer 4 failures look like connection timeouts or "connection refused" errors. Knowing which layer is failing determines which tools you reach for - tcpdump for packet inspection, traceroute for routing analysis, arp for MAC address resolution.
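From application code, the first clue about the failing layer is usually the exception a connection attempt raises. The sketch below turns that into a rough triage step. The mapping is a heuristic rather than a diagnosis (a timeout, for instance, can have causes at several layers), and `classify_failure` and `probe` are hypothetical helpers written for this example.

```python
import errno
import socket


def classify_failure(exc):
    """Map a socket-level exception to a rough hint about where to look."""
    # Order matters: these exception types are all subclasses of OSError.
    if isinstance(exc, socket.gaierror):
        return "dns: name did not resolve; check resolver and service names"
    if isinstance(exc, ConnectionRefusedError):
        return "layer 4: host reachable, but nothing accepted the connection"
    if isinstance(exc, (socket.timeout, TimeoutError)):
        return "layer 4: no reply before timeout; check firewall and NAT rules"
    if isinstance(exc, OSError) and exc.errno in (
        errno.EHOSTUNREACH,
        errno.ENETUNREACH,
    ):
        return "layer 3: no route to host or network; check routing tables"
    return "unclassified: " + repr(exc)


def probe(host, port, timeout=3.0):
    """Attempt a TCP connection and report success or a triage hint."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return "connected"
    except OSError as exc:
        return classify_failure(exc)


# Classifying a simulated "no route to host" error, without touching the network.
print(classify_failure(OSError(errno.EHOSTUNREACH, "No route to host")))
```

Calling `probe("some-service", 8080)` from inside a container gives a starting point for which tool to pick up next; the hostname and port there are placeholders.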

For developers who learned networking through trial and error rather than formal study, this creates a mental model for how packets move from application code to the wire and back. Not exhaustive, but sufficient to debug the majority of connectivity issues that arise when deploying applications to containerised or cloud infrastructure. The theory exists to support the practice, not the other way around.