Most large language models spend a surprising amount of their training time... waiting. Processors sit idle between tasks, and that downtime adds up. MIT researchers spotted this pattern and built something clever around it.

The method is called 'Taming the Long Tail', and it works by training smaller 'drafter' models during those idle moments. These drafters learn to predict what the larger model will output next. When a prediction is right, the system accepts it and skips ahead. When it is wrong, the main model's own output replaces it. The reported result: training speed roughly doubles with no loss of accuracy.

The Efficiency Problem Nobody Talks About

Training large language models is expensive. Not just in money, but in time and energy. The bigger the model, the longer it takes, and the more compute resources you need. Most efficiency work focuses on making the main model faster or smaller. MIT's approach is different - it doesn't touch the main model at all.

Instead, it looks at the gaps. During training, processors often wait for data to arrive, for synchronisation between nodes, or for other tasks to complete. These gaps are tiny individually, but across millions of training steps, they compound into significant wasted time.
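To make the compounding concrete, here is a minimal sketch of the arithmetic for a single synchronised step, where every worker has to wait for the slowest one. The function name `idle_fraction` and the timings are hypothetical, chosen purely for illustration, and are not measurements from the MIT work.

```python
from typing import List


def idle_fraction(busy_times: List[float]) -> float:
    """Fraction of total processor time spent waiting in one synchronised step.

    busy_times holds how long each worker was actually busy (in seconds).
    The step only ends when the slowest worker finishes.
    """
    step_time = max(busy_times)                      # everyone waits for the slowest
    total_time = step_time * len(busy_times)         # processor-seconds paid for
    idle_time = sum(step_time - t for t in busy_times)
    return idle_time / total_time


# Hypothetical timings: three workers finish quickly, one takes far longer.
example_step = [2.0, 2.5, 3.0, 10.0]
print(f"idle share of this step: {idle_fraction(example_step):.1%}")
```

With these made-up timings, a single slow worker leaves the group idle for more than half of the step.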

The MIT team asked a simple question: what if we used that idle time to train something useful?

How Drafter Models Work

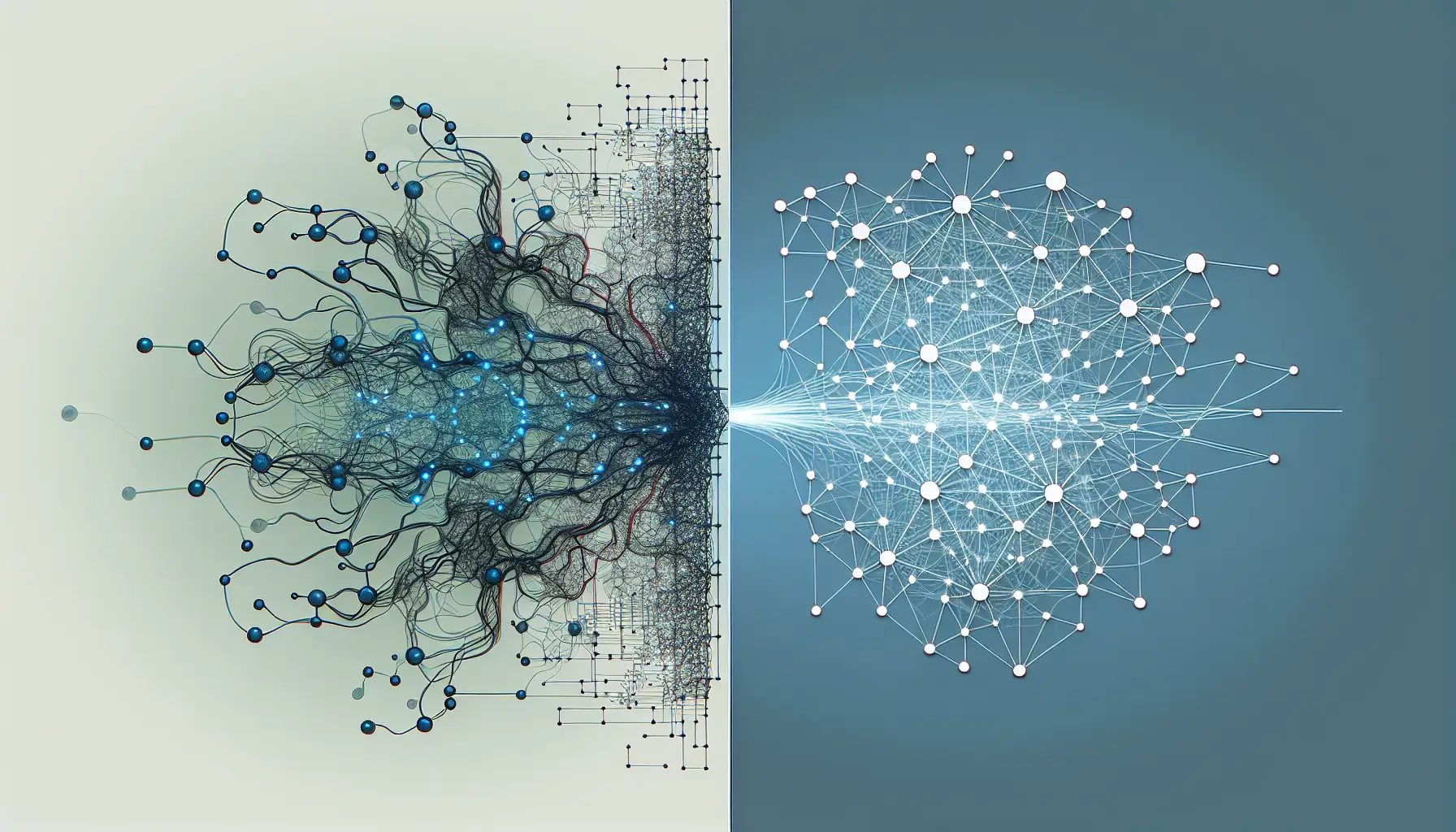

The drafter model is much smaller than the main model - think of it as a quick sketch versus a detailed painting. It is trained on processors that would otherwise sit idle, and its job is to guess the main model's next output ahead of time. If the guess matches what the main model would have produced, the system accepts it and moves on. If not, the main model's output is used instead, and the mismatch becomes a training signal for the drafter.

This creates a feedback loop. The drafter gets better at predicting the main model's behaviour over time, which means more of its predictions are accepted, which means more idle time is reclaimed.

The clever part is that this happens during training, not after. Traditional speculative decoding (where a small model drafts and a large model verifies) is used during inference - when the model is actually being used. MIT's method applies the same principle to training itself, which is where the real computational cost sits.
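The accept-or-correct loop can be sketched as greedy speculative decoding with toy models. This is an illustration of the general draft-and-verify idea, not the MIT implementation: `speculative_step`, `generate`, and the `Model` type are hypothetical names, and a real system would verify all drafted tokens in one batched forward pass rather than a Python loop.

```python
from typing import Callable, List, Tuple

# A "model" here is just a function from a token context to the next token.
Model = Callable[[List[int]], int]


def speculative_step(
    target: Model, drafter: Model, context: List[int], k: int
) -> Tuple[List[int], int]:
    """Draft k tokens, keep the prefix the target agrees with.

    Returns (new_tokens, number_of_drafted_tokens_accepted).
    """
    # 1. The small drafter proposes k tokens, one after another.
    draft: List[int] = []
    for _ in range(k):
        draft.append(drafter(context + draft))

    # 2. The target checks each proposal in order.
    accepted: List[int] = []
    for token in draft:
        expected = target(context + accepted)
        if token != expected:
            # First mismatch: use the target's own token and stop.
            accepted.append(expected)
            return accepted, len(accepted) - 1
        accepted.append(token)

    # 3. Every draft was right, so the target adds one more token itself.
    accepted.append(target(context + accepted))
    return accepted, k


def generate(
    target: Model, drafter: Model, prompt: List[int], n_tokens: int, k: int = 4
) -> List[int]:
    """Generate n_tokens after the prompt using draft-and-verify steps."""
    out = list(prompt)
    while len(out) - len(prompt) < n_tokens:
        new_tokens, _ = speculative_step(target, drafter, out, k)
        out.extend(new_tokens)
    return out[: len(prompt) + n_tokens]
```

Because every kept token is one the target model would have chosen itself, the output matches target-only decoding exactly. A better drafter only changes how many tokens each step yields.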

What This Means for Model Development

Doubling training speed has direct implications. For researchers, it means faster experimentation. For companies, it means lower costs. For the broader field, it means a training run that would have taken four weeks could finish in about two.

But there's something more interesting here. This method doesn't require changing model architectures or rewriting training algorithms. It works alongside existing methods, which means it should stack with other efficiency improvements. A research lab that's already using quantisation or mixed-precision training could, in principle, add this on top and see compounding gains.

It also opens up training possibilities for organisations with tighter budgets. If you can train a competitive model in half the time, the barrier to entry drops. That's not just good for innovation - it's good for competition.

The Accuracy Question

Speed improvements often come with a trade-off. Shortcuts that make training faster can cost accuracy, or at least demand careful tuning to avoid doing so. The MIT researchers report that their method preserves accuracy, which is significant.

The reason this works is that the drafter isn't making final decisions. It's proposing outputs, which the main model can reject. The main model remains the source of truth. This means the quality of the final model is unchanged - the drafter is simply helping it get there faster.

There's still a cost, of course. Training the drafter model itself requires compute, and verifying its predictions isn't free. But because the drafter is so much smaller, and because it runs during otherwise-idle time, the net gain is substantial.
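A rough way to reason about that trade-off is the standard back-of-envelope model used for speculative decoding. It assumes each drafted token is accepted independently with probability `alpha`, that `k` tokens are drafted per verification, and that one drafter step costs a fraction `c` of one main-model step. These symbols and the function names below are assumptions for illustration, not figures from the MIT work.

```python
def expected_tokens(alpha: float, k: int) -> float:
    """Expected tokens produced per verification pass.

    Counts the accepted drafts plus the one token the main model always
    contributes: 1 + alpha + alpha**2 + ... + alpha**k.
    """
    if alpha == 1.0:
        return float(k + 1)
    return (1 - alpha ** (k + 1)) / (1 - alpha)


def speedup(alpha: float, k: int, c: float) -> float:
    """Estimated speed-up over main-model-only generation.

    Each round costs k drafter steps (k * c) plus one main-model step (1),
    measured in units of one main-model step.
    """
    return expected_tokens(alpha, k) / (k * c + 1)


# Hypothetical settings: 4 drafted tokens, drafter at 5% of the main model's cost.
for alpha in (0.0, 0.5, 0.8):
    print(f"alpha={alpha:.1f}  estimated speed-up={speedup(alpha, 4, 0.05):.2f}x")
```

Under this model, a drafter that is never right (`alpha = 0`) makes things slower, since its cost is paid for nothing, while a cheap drafter with a high acceptance rate pushes the estimate well above 1. That is why a drafter that keeps improving matters.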

What's Next?

This isn't a finished product yet. It's a research method that has been shown to work in controlled conditions. The real test will be how it performs across different model architectures, training datasets, and hardware setups. Some models might see bigger gains than others, and some types of training tasks might be better suited to this approach.

But the core insight is sound. There's wasted time in training pipelines, and reclaiming it makes everything faster without making anything worse. That's the kind of efficiency improvement that compounds across the entire field.

If this method becomes standard practice, the next generation of models could arrive sooner, cost less, and be accessible to more teams. Not revolutionary - just sensible engineering. And sometimes, that's exactly what matters.