NVIDIA's GTC keynote wasn't just another product launch. Jensen Huang stood on stage and essentially declared that the age of physical AI has arrived - not as a concept, not as a research project, but as infrastructure you can actually deploy.

What caught my attention wasn't the hardware specs or the model benchmarks. It was the shift in language. NVIDIA stopped talking about enabling robotics and started talking about manufacturing intelligence. That's not a subtle difference.

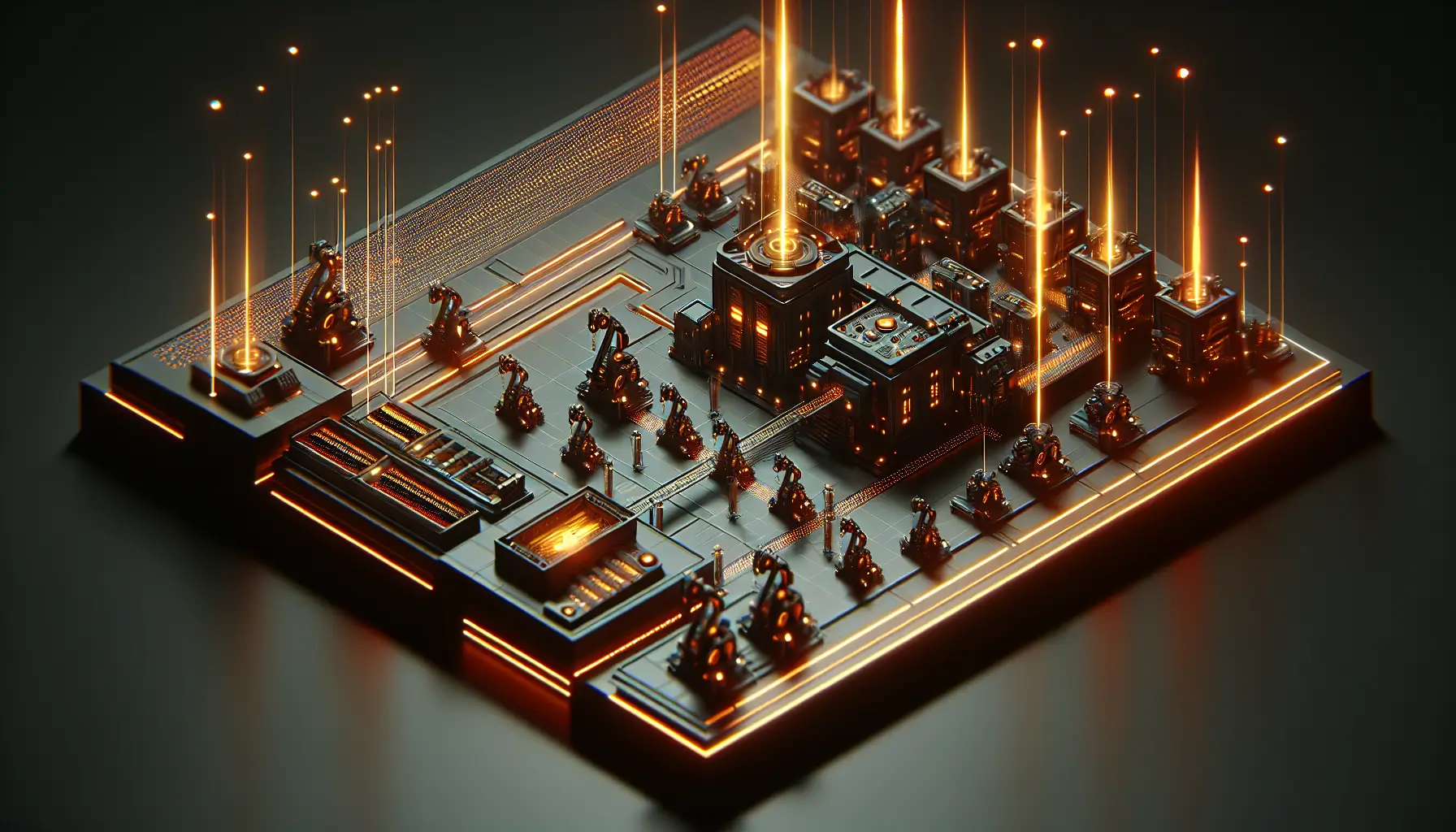

AI Factories - The New Infrastructure Layer

Huang introduced what NVIDIA calls "AI factories" - purpose-built facilities that generate intelligence the way power stations generate electricity. The analogy is deliberate. In the keynote, he framed accelerated computing not as a nice-to-have but as fundamental infrastructure for the next decade.

Here's what that means in practice: companies building autonomous systems - whether that's warehouse robots, agricultural machinery, or manufacturing lines - can now lease compute capacity designed specifically for training embodied AI. You don't need your own supercomputer. You rent training capacity the way you rent cloud storage.

The economics shift immediately. A logistics company experimenting with autonomous sorting doesn't need a research lab. It needs an API and a training dataset. NVIDIA just made that far easier to access.

Agentic AI Meets Physical Form

The second major thread was agentic AI: systems that don't just respond to commands but pursue goals autonomously. We've already seen this in software, with tools like Claude and ChatGPT working through tasks that span multiple steps. Huang's pitch is that the same capability is now ready for robots.

What does that actually look like? A robot that doesn't follow a pre-programmed path through a warehouse but adapts in real time to obstacles, changing priorities, and unexpected scenarios. It's the difference between a sophisticated automation script and a system that genuinely reasons about its environment.

I've been tracking this pattern: we've moved from "robots that repeat tasks" to "robots that learn tasks" to "robots that decide which tasks matter." That last leap is significant. And slightly unsettling if you're Luma.

Who This Actually Helps

Strip away the vision, and here's the practical reality: NVIDIA just made it cheaper and faster to prototype physical AI systems. That matters for anyone in logistics, agriculture, construction, or manufacturing who's been watching robotics from the sidelines wondering when it becomes accessible.

The barrier to entry dropped. You still need expertise - training models, defining safe operating parameters, integrating with existing systems - but you don't need a university partnership or venture capital to get started. That's new.

For developers and builders, the takeaway is clear: the tooling for embodied AI has matured fast. If you've been waiting for the right moment to experiment with autonomous systems, the infrastructure just caught up with the ambition.

The Bigger Picture

What I find most interesting is the timing. This keynote arrives just as the conversation around AI is shifting from "what can models do?" to "what can agents do in the real world?" NVIDIA isn't just selling GPUs. It's positioning itself as the platform for intelligence that moves, lifts, sorts, and navigates.

Huang didn't just unveil products. He described a future where intelligence is manufactured at scale, distributed like electricity, and embedded in physical systems we interact with daily. Whether that future arrives as smoothly as he suggests depends on a lot of variables - regulation, safety standards, public trust, and whether the technology actually delivers on the promise.

But the infrastructure is here. The tools are accessible. And the companies building the next generation of robotics just got a significant advantage.

For those of us watching this space, the question isn't whether physical AI is coming. It's how quickly it integrates into industries that haven't traditionally been tech-first - and what happens when intelligence becomes as foundational as compute already is.