Something important just happened in humanoid robotics. And for once, it's not about how fast the robots can run or how well they can mimic human movement. It's about whether they can actually move through the world without someone holding their hand.

At GTC 2026, LimX Dynamics demonstrated a humanoid robot navigating real-world environments autonomously. Not following a pre-programmed path. Not being remote-controlled. Actually perceiving the space around it in 3D, understanding what's solid and what's empty, and making decisions about where to step next.

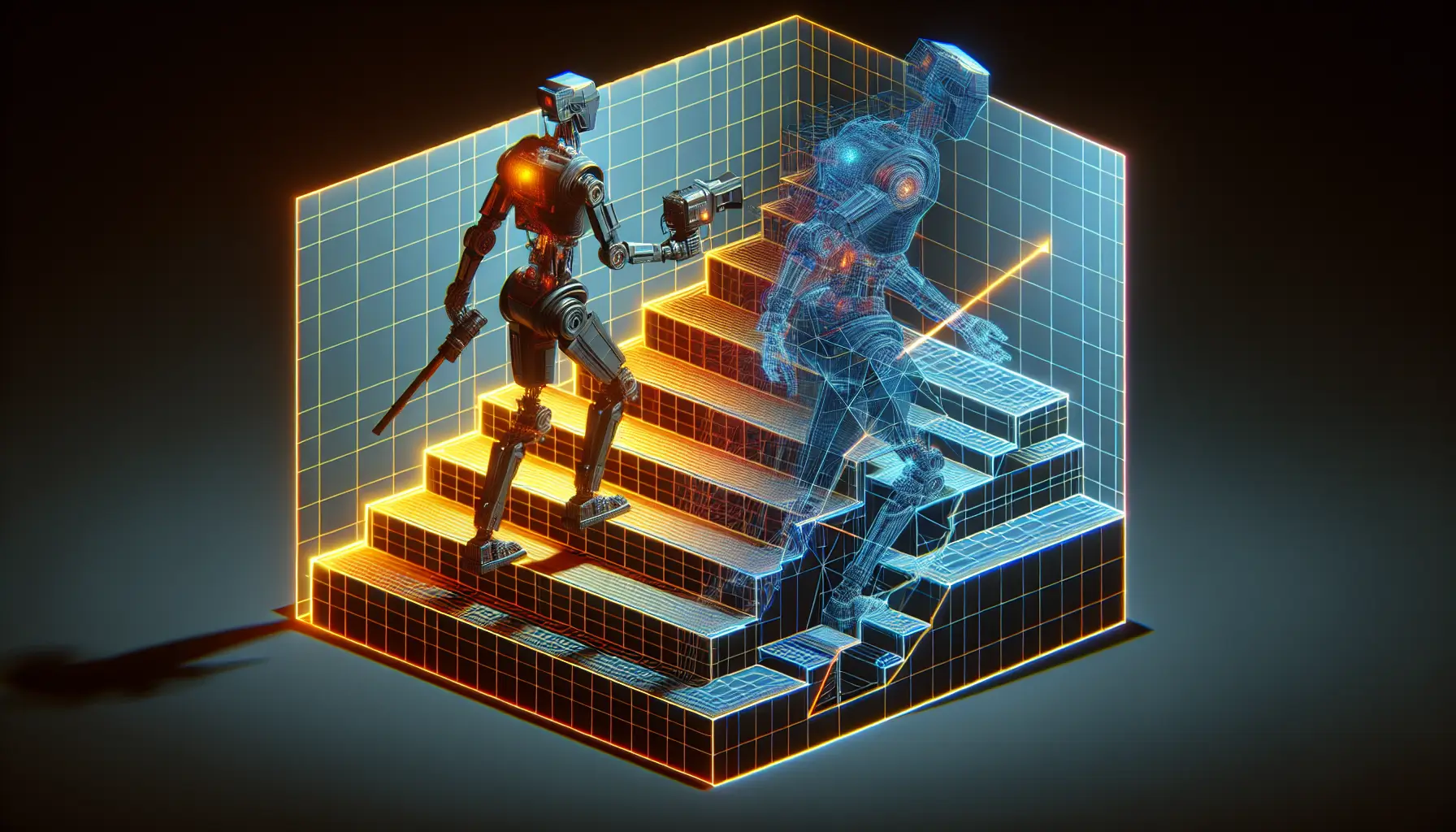

The system combines RealSense depth cameras with NVIDIA's cuVSLAM library to build a real-time map of the world as the robot moves through it. Think of it like giving the robot vision that understands depth - not just seeing a flat image, but knowing that the stairs ahead drop down half a metre, or that there's a gap in the floor it needs to step over.

Why Dense 3D Depth Perception Matters

Most of us take spatial awareness for granted. When you walk up a flight of stairs, your brain is constantly processing depth information - how far away is that step? How high is it? Is the surface stable? You do this without thinking.

For robots, this has been one of the hardest problems to solve. Early attempts at autonomous navigation relied either on perfectly mapped environments (like warehouse robots following magnetic strips) or on simple obstacle detection that could only handle flat floors.

Dense 3D depth perception means the robot is building a detailed, three-dimensional understanding of everything around it, in real time. It's not just detecting "something is there" - it's understanding the shape, distance, and orientation of that something. Stairs aren't just obstacles. They're navigable terrain with specific geometry.
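To make "dense" a bit more concrete, here is a minimal Python sketch of the standard pinhole back-projection that turns a depth image into 3D points, one per pixel. It is not LimX Dynamics' or NVIDIA's code. The function name `backproject`, the tiny 3x3 depth image, and the intrinsics (`fx`, `fy`, `cx`, `cy`) are all made up for illustration, and real cameras report these values through their own SDKs.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def backproject(depth: List[List[float]],
                fx: float, fy: float,
                cx: float, cy: float) -> List[Point]:
    """Convert a depth image (in metres) into 3D points in the camera frame.

    Pinhole model: X = (u - cx) * Z / fx, Y = (v - cy) * Z / fy, Z = depth.
    Pixels with depth <= 0 are treated as "no reading" and skipped.
    """
    points: List[Point] = []
    for v, row in enumerate(depth):          # v = pixel row
        for u, z in enumerate(row):          # u = pixel column
            if z <= 0:
                continue                     # missing depth measurement
            points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points


# Hypothetical 3x3 depth image: far surface on top, nearer surface at the bottom.
depth = [
    [2.0, 2.0, 2.0],
    [2.0, 2.0, 0.0],   # 0.0 marks a pixel with no depth reading
    [1.0, 1.0, 1.0],
]

# Assumed toy intrinsics: focal length of 1 pixel, principal point at the centre pixel.
points = backproject(depth, fx=1.0, fy=1.0, cx=1.0, cy=1.0)
```

Every valid pixel becomes a point with a position in space, which is what lets later stages reason about the shape of a stair rather than just flag "something is there".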

The practical impact is significant. A humanoid robot that can autonomously navigate stairs, uneven ground, and cluttered spaces starts to become useful in environments that weren't designed for robots. Offices. Homes. Construction sites. Anywhere humans move, basically.

What's Actually Happening Under the Hood

The RealSense cameras capture depth data - essentially measuring how far away every point in the robot's field of view is. NVIDIA's cuVSLAM, a GPU-accelerated visual SLAM (Simultaneous Localization and Mapping) library, processes the camera data to build a map of the space while simultaneously tracking the robot's position within it.

In simpler terms - imagine you're blindfolded and dropped into an unfamiliar building. As you walk around, you're building a mental map: corridor on the left, stairs ahead, doorway to the right. At the same time, you're keeping track of where you are within that map. That's what SLAM does for robots, but with depth cameras instead of touch and sound.
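The bookkeeping behind that mental map can be sketched in a few lines of Python. This is a deliberately simplified toy, not cuVSLAM: it tracks the robot's pose from its own motion and drops what it sees into a world-frame grid, but it skips the step where a real SLAM system uses the map to correct drift in the pose estimate. The `ToyMapper` class, its method names, and the 0.5 m cell size are all hypothetical.

```python
import math
from typing import Set, Tuple

Cell = Tuple[int, int]


class ToyMapper:
    """Tracks a 2D pose and records observed obstacles in a world-frame grid."""

    def __init__(self, cell_size: float = 0.5) -> None:
        self.x = 0.0
        self.y = 0.0
        self.heading = 0.0                 # radians, 0 points along +x
        self.cell_size = cell_size         # grid resolution in metres
        self.occupied: Set[Cell] = set()   # the "map"

    def move(self, distance: float, turn: float = 0.0) -> None:
        """Localization half: turn in place, then step forward."""
        self.heading += turn
        self.x += distance * math.cos(self.heading)
        self.y += distance * math.sin(self.heading)

    def observe(self, rng: float, bearing: float) -> Cell:
        """Mapping half: place an obstacle seen at (range, bearing) into the map."""
        angle = self.heading + bearing
        wx = self.x + rng * math.cos(angle)   # robot frame -> world frame
        wy = self.y + rng * math.sin(angle)
        cell = (math.floor(wx / self.cell_size),
                math.floor(wy / self.cell_size))
        self.occupied.add(cell)
        return cell


robot = ToyMapper(cell_size=0.5)
first = robot.observe(rng=2.2, bearing=0.0)    # wall seen 2.2 m straight ahead
robot.move(1.0)                                # walk 1 m towards it
second = robot.observe(rng=1.2, bearing=0.0)   # same wall, now 1.2 m ahead
```

Because the robot knows it moved one metre, both sightings land in the same map cell. If the pose estimate were wrong, the wall would smear across several cells, which is why the "localization" and "mapping" halves have to be solved together.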

The clever bit is doing all of this in real time, fast enough that the robot can react to what it sees and adjust its movement before it trips or collides with something. That requires serious processing power, which is why the NVIDIA hardware matters. This isn't happening in the cloud with a network delay - it's happening on the robot itself, with no network round trip.
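A quick back-of-the-envelope calculation shows why the network round trip matters. The numbers below are illustrative assumptions, not measurements from the LimX Dynamics demo: a walking speed of 1 m/s, 30 ms of onboard perception latency, and an extra 150 ms if the same work were sent to a remote server.

```python
def distance_before_reaction(speed_m_s: float, latency_s: float) -> float:
    """Distance travelled while the robot is still waiting on its perception result."""
    return speed_m_s * latency_s


# All three values are assumed for illustration only.
WALK_SPEED_M_S = 1.0
ONBOARD_LATENCY_S = 0.03
NETWORK_ROUND_TRIP_S = 0.15

onboard_blind_m = distance_before_reaction(WALK_SPEED_M_S, ONBOARD_LATENCY_S)
remote_blind_m = distance_before_reaction(
    WALK_SPEED_M_S, ONBOARD_LATENCY_S + NETWORK_ROUND_TRIP_S
)

print(f"onboard: {onboard_blind_m * 100:.0f} cm travelled before reacting")
print(f"remote:  {remote_blind_m * 100:.0f} cm travelled before reacting")
```

Under these assumptions the robot covers roughly 3 cm before it can react when processing is onboard, versus roughly 18 cm with the added round trip. On a staircase, that difference is a sizeable fraction of a step.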

The Bigger Picture: Embodied AI Leaving the Lab

For years, the promise of humanoid robots has been just that - a promise. Impressive demos in controlled environments, but nothing you'd trust to wander around your office unsupervised.

What LimX Dynamics is showing here is a step toward robots that don't need controlled environments. Autonomous navigation isn't flashy, but it's foundational. A robot that can't move through real spaces without human intervention isn't useful. It's a very expensive piece of furniture.

The combination of better depth sensing and more powerful onboard processing is starting to close the gap between "works in the lab" and "works in the wild." That doesn't mean humanoid robots are about to flood into workplaces tomorrow. But it does mean the engineering challenges that have kept them confined to research labs are being solved, one capability at a time.

And once robots can navigate autonomously, the next question becomes: what do we actually want them to do when they get there? That's where things get interesting - and complicated.