When AI workflows fail in production, it's often for the same reason: they treat every step as an AI problem. Then the model hallucinates a date, formats output incorrectly, or routes to the wrong endpoint, and the entire pipeline breaks.

The fix isn't better prompts. It's knowing when NOT to use AI at all.

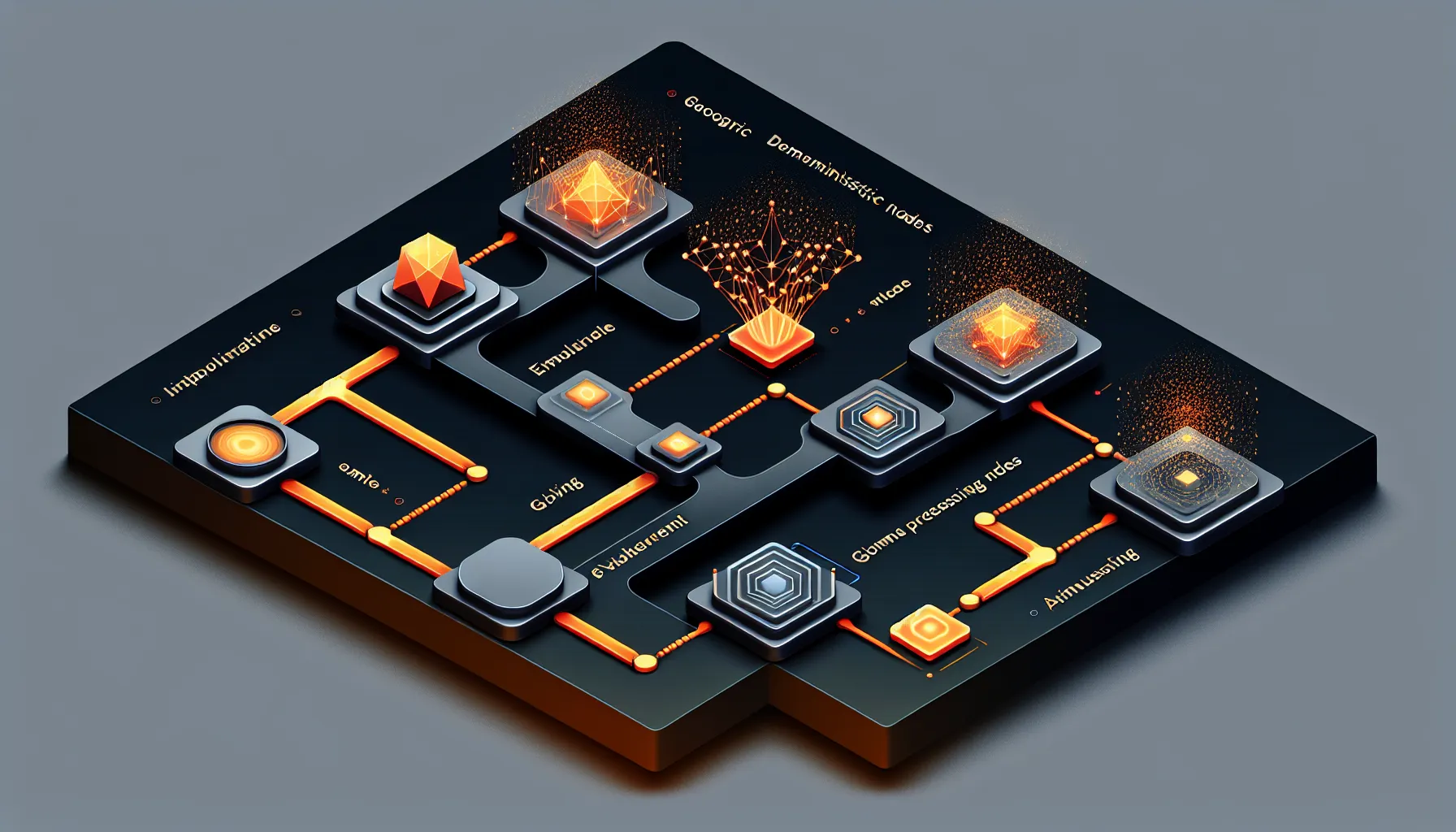

Deterministic Steps Handle Structure, AI Steps Handle Interpretation

n8n's production AI playbook splits workflow steps into two categories: deterministic steps and AI steps. Deterministic steps handle work that has a single correct answer - validation, routing, data cleaning, formatting. AI steps handle interpretation, generation, and nuanced decision-making.

Here's a practical example. You're building a customer support triage system. When an email arrives, you need to extract the customer ID, check whether they're a premium user, route to the right team, and generate a response.

Bad approach: throw the entire email at an AI model and ask it to do everything. It might work 80% of the time. The other 20%, it formats the customer ID wrong, misroutes the ticket, or hallucinates a policy that doesn't exist.

Good approach: a deterministic step extracts the customer ID with regex, a second deterministic step queries your database for the user's tier, a routing step uses boolean logic to assign the team, and only THEN does an AI step generate the response, with guardrails around what it can promise.

The AI only touches the part that actually needs interpretation. Everything else runs on predictable, testable logic.
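A minimal sketch of the good approach in plain Python (in n8n, similar logic could sit in Code nodes). The `CUST-` ID format, the `PREMIUM_CUSTOMERS` set standing in for a database query, the team names, and the prompt wording are all hypothetical. The point is the ordering: three deterministic steps decide the facts, and the model is only asked to write text around them.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical ID format: "CUST-" followed by six digits.
CUSTOMER_ID_RE = re.compile(r"\bCUST-\d{6}\b")

# Hypothetical stand-in for a database query; a real workflow would ask your CRM or DB.
PREMIUM_CUSTOMERS = {"CUST-000123"}


@dataclass
class Ticket:
    customer_id: Optional[str]
    is_premium: bool
    team: str


def extract_customer_id(body: str) -> Optional[str]:
    """Deterministic step 1: pull the ID out with a regex, or return None."""
    match = CUSTOMER_ID_RE.search(body)
    return match.group(0) if match else None


def assign_team(customer_id: Optional[str], is_premium: bool, subject: str) -> str:
    """Deterministic step 3: plain boolean routing, no model involved."""
    if customer_id is None:
        return "identity-verification"
    if is_premium:
        return "premium-support"
    if any(word in subject.lower() for word in ("invoice", "refund", "billing")):
        return "billing"
    return "general-support"


def triage(subject: str, body: str) -> Ticket:
    customer_id = extract_customer_id(body)
    is_premium = customer_id in PREMIUM_CUSTOMERS  # deterministic step 2: tier lookup
    return Ticket(customer_id, is_premium, assign_team(customer_id, is_premium, subject))


def build_reply_prompt(ticket: Ticket, body: str) -> str:
    """Only now does the AI step run, with team and tier already fixed by code."""
    return (
        f"Write a reply for the {ticket.team} team. "
        f"Customer tier: {'premium' if ticket.is_premium else 'standard'}. "
        "Do not promise refunds, credits, or deadlines.\n\n"
        f"Customer email:\n{body}"
    )
```

Because the ID, tier, and team are computed before the prompt is built, a model mistake can only affect the wording of the reply, not where the ticket goes.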

Pre-Processing Wins You Reliability

Before AI sees your data, clean it deterministically. Strip HTML tags, normalise whitespace, remove PII if needed, validate required fields. This is boring work that saves you from debugging hallucinations later.
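The cleaning steps above could look like this in plain Python, using only the standard library. The field names in `REQUIRED_FIELDS` are hypothetical, and masking email addresses is just one example of PII removal, not a complete solution.

```python
import re
from html.parser import HTMLParser

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
REQUIRED_FIELDS = ("sender", "subject", "body")  # hypothetical field names


class _TextExtractor(HTMLParser):
    """Collects text nodes, dropping tags and the contents of <script>/<style>."""

    def __init__(self) -> None:
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def strip_html(raw: str) -> str:
    parser = _TextExtractor()
    parser.feed(raw)
    parser.close()
    # Join with spaces so adjacent block elements don't glue words together
    return " ".join(parser.parts)


def preprocess(message: dict) -> dict:
    """Validate required fields, then strip HTML, normalise whitespace, mask emails."""
    missing = [f for f in REQUIRED_FIELDS if not str(message.get(f) or "").strip()]
    if missing:
        raise ValueError(f"missing required fields: {missing}")
    text = strip_html(message["body"])
    text = re.sub(r"\s+", " ", text).strip()  # normalise whitespace
    text = EMAIL_RE.sub("[EMAIL]", text)       # mask one kind of PII
    return {**message, "body": text}
```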

If you're processing invoices, don't ask the AI to extract dates from messy PDFs and then calculate payment terms. Extract text deterministically, use regex to find date patterns, validate formats, THEN use AI only if the date format is ambiguous. For the calculation itself, use actual arithmetic - not a model that might round incorrectly.
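A sketch of the invoice example under stated assumptions: dates appear either as ISO `YYYY-MM-DD` or slash-separated `DD/MM/YYYY` or `MM/DD/YYYY`, and payment terms follow a hypothetical "2/10 net 30" rule (2% discount if paid within 10 days, full amount due in 30). Only slash dates where both numbers are 12 or under and differ are flagged for an AI step or a human; the due date and discount are plain arithmetic with explicit `Decimal` rounding.

```python
import re
from datetime import date, timedelta
from decimal import Decimal, ROUND_HALF_UP
from typing import Optional, Tuple

ISO_RE = re.compile(r"\b(\d{4})-(\d{2})-(\d{2})\b")
SLASH_RE = re.compile(r"\b(\d{1,2})/(\d{1,2})/(\d{4})\b")


def _safe_date(year: int, month: int, day: int) -> Optional[date]:
    """Validate the calendar date instead of trusting the pattern match."""
    try:
        return date(year, month, day)
    except ValueError:
        return None


def find_invoice_date(text: str) -> Tuple[Optional[date], Optional[str]]:
    """Return (date, None) when the date is certain, else (None, reason)."""
    m = ISO_RE.search(text)
    if m:
        parsed = _safe_date(*map(int, m.groups()))
        return (parsed, None) if parsed else (None, "invalid ISO date")
    m = SLASH_RE.search(text)
    if m:
        a, b, year = map(int, m.groups())
        if a <= 12 and b <= 12 and a != b:
            # 03/04/2024 could be 3 April or 4 March: the only case worth escalating
            return None, "ambiguous day/month order"
        # Otherwise the day is whichever number exceeds 12 (or both are equal)
        day, month = (a, b) if a > 12 else (b, a)
        parsed = _safe_date(year, month, day)
        return (parsed, None) if parsed else (None, "invalid date")
    return None, "no date found"


def payment_terms(invoice_date: date, total: Decimal, net_days: int = 30,
                  discount_pct: Decimal = Decimal("2"), discount_days: int = 10) -> dict:
    """Plain arithmetic for hypothetical '2/10 net 30' terms, rounded to cents explicitly."""
    discount = (total * discount_pct / 100).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    return {
        "due": invoice_date + timedelta(days=net_days),
        "discount_deadline": invoice_date + timedelta(days=discount_days),
        "discounted_total": total - discount,
    }
```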

The rule: if a deterministic function can do it reliably, don't use AI. AI is the tool for the parts that NEED interpretation, not the parts that need precision.

Structured Outputs Prevent Downstream Failures

When AI generates output, force it into a schema. Not freeform text that you parse later - actual structured JSON with required fields and type validation.

Most major model providers now support structured output natively. Define your schema: this field is a string, this one is a number, this one is an enum from this list. The model either returns valid JSON matching your schema, or the call fails in a way you can detect. No guessing, no fragile parsing of freeform text.
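When the provider enforces the schema, this check happens on their side. A local equivalent is still useful as a second line of defence, or for models without native support. This sketch uses only the `json` module; the schema, field names, and category values are hypothetical.

```python
import json
from typing import Any, Dict

# Hypothetical schema: field -> expected Python type, or a set of allowed enum values.
TRIAGE_SCHEMA: Dict[str, Any] = {
    "summary": str,
    "item_count": int,
    "category": {"billing", "technical", "account"},
}


class SchemaError(ValueError):
    """Raised when model output does not match the schema."""


def parse_structured(raw: str, schema: Dict[str, Any] = TRIAGE_SCHEMA) -> Dict[str, Any]:
    """Parse model output and fail explicitly on any mismatch."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise SchemaError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise SchemaError("expected a JSON object")
    for field, rule in schema.items():
        if field not in data:
            raise SchemaError(f"missing field: {field}")
        value = data[field]
        if isinstance(rule, set):
            if not (isinstance(value, str) and value in rule):
                raise SchemaError(f"{field}={value!r} is not one of {sorted(rule)}")
        elif rule is int:
            # bool is a subclass of int in Python, so reject it explicitly
            if isinstance(value, bool) or not isinstance(value, int):
                raise SchemaError(f"{field}={value!r} is not an integer")
        elif not isinstance(value, rule):
            raise SchemaError(f"{field}={value!r} is not {rule.__name__}")
    return data
```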

This prevents the classic failure mode where the AI returns something like "approximately 50" when you need an integer. With structured outputs, it either returns 50 or it errors. You handle the error case deterministically - retry, use a default, log and alert - instead of discovering the problem three steps downstream when "approximately 50" breaks your calculation.
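Handling the error case deterministically might look like this sketch: retry a bounded number of times, then fall back to a default and log at error level so alerting can pick it up. `call_model` is a hypothetical stand-in for whatever node or client actually calls the model, and `quantity` is a hypothetical field name.

```python
import json
import logging
from typing import Callable, Optional

logger = logging.getLogger("workflow")


def get_quantity(call_model: Callable[[], str], max_attempts: int = 3,
                 default: Optional[int] = None) -> Optional[int]:
    """Retry a model call until it yields an integer 'quantity', else fall back."""
    for attempt in range(1, max_attempts + 1):
        raw = call_model()
        try:
            value = json.loads(raw)["quantity"]
        except (json.JSONDecodeError, KeyError, TypeError):
            value = None  # not JSON, missing field, or not a JSON object
        if isinstance(value, int) and not isinstance(value, bool):
            return value
        logger.warning("attempt %d: invalid model output %r", attempt, raw)
    # Deterministic fallback: a default (possibly None) plus an error-level log to alert on
    logger.error("no valid quantity after %d attempts; using default %r", max_attempts, default)
    return default
```

A model that answers "approximately 50" on the first try and `{"quantity": 50}` on the second yields 50; one that never produces valid output yields the default, and the failure is visible in the logs rather than three steps downstream.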

Conditional Routing Keeps AI Focused

Not every input needs AI processing. Use deterministic routing to decide when to invoke the model at all.

Simple support queries with keywords like "reset password" or "billing question" can route deterministically to knowledge base articles or specific teams. Only the ambiguous cases - the ones that need interpretation - should hit the AI step. This saves costs, reduces latency, and limits your exposure to hallucination risk.

The pattern: run a deterministic classifier first. If a rule matches unambiguously, route deterministically. If no rule matches, rules conflict, or the case is genuinely ambiguous, invoke AI with clear constraints on what it can decide.
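A minimal keyword router in Python illustrates the pattern, assuming that a deterministic match counts as high-confidence when exactly one rule fires. The rules and destination names are hypothetical; a real classifier might use more rules or scoring, but the escalation logic stays the same.

```python
import re
from typing import Tuple

# Hypothetical keyword rules: destination -> pattern that identifies it.
RULES = {
    "password-reset-article": re.compile(r"\b(reset|forgot)\b.*\bpassword\b", re.IGNORECASE),
    "billing-team": re.compile(r"\b(billing|invoice|refund|charged?)\b", re.IGNORECASE),
}


def route(message: str) -> Tuple[str, str]:
    """Return (handler, destination). Only zero or conflicting matches reach the AI step."""
    matches = [dest for dest, pattern in RULES.items() if pattern.search(message)]
    if len(matches) == 1:
        return "deterministic", matches[0]
    return "ai", "ai-triage"
```

"I forgot my password" goes straight to the article, while "I was charged twice and can't reset my password" matches both rules and is escalated to the AI step.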

Guardrails Before and After AI Steps

Before the AI step: validate inputs, enforce length limits, strip malicious content. Don't trust user input. Clean it deterministically before it touches the model.
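Input guardrails might look like this sketch: reject oversized or empty input, and strip control and invisible formatting characters that can hide text from a human reviewer. The length limit is a hypothetical value. This is not a complete defence against prompt injection; it only removes one class of unwanted content.

```python
import unicodedata

MAX_INPUT_CHARS = 4000  # hypothetical limit; size it to your model and use case


def sanitize_input(text: str, max_chars: int = MAX_INPUT_CHARS) -> str:
    """Reject oversized input, drop control/format characters, reject empty results."""
    if not isinstance(text, str):
        raise ValueError("input must be a string")
    if len(text) > max_chars:
        raise ValueError(f"input is {len(text)} characters; limit is {max_chars}")
    # Cc = control characters, Cf = format characters such as zero-width spaces.
    # Note: this also removes zero-width joiners used in some emoji and scripts.
    cleaned = "".join(
        ch for ch in text
        if ch in "\n\t" or unicodedata.category(ch) not in ("Cc", "Cf")
    )
    if not cleaned.strip():
        raise ValueError("input is empty after cleaning")
    return cleaned
```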

After the AI step: validate outputs. Does the generated email address match a regex? Does the extracted number fall within expected bounds? Does the routed category exist in your system? Check deterministically, and handle failures explicitly.
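The three post-step checks described above translate directly into code. In this sketch the field names (`reply_to`, `refund_amount`, `category`), the allowed categories, and the refund ceiling are hypothetical, and the email regex is a loose plausibility check rather than full address validation. Any failure sends the output to human review instead of the customer.

```python
import re
from typing import Any, Dict, List, Tuple

EMAIL_RE = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")     # loose plausibility check only
KNOWN_CATEGORIES = {"billing", "technical", "account"}  # hypothetical categories
MAX_REFUND = 500                                        # hypothetical business limit


def validate_output(output: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the output passed."""
    errors = []
    email = output.get("reply_to")
    if not (isinstance(email, str) and EMAIL_RE.fullmatch(email)):
        errors.append(f"reply_to {email!r} is not a plausible email address")
    amount = output.get("refund_amount")
    if (isinstance(amount, bool) or not isinstance(amount, (int, float))
            or not 0 <= amount <= MAX_REFUND):
        errors.append(f"refund_amount {amount!r} is outside 0..{MAX_REFUND}")
    category = output.get("category")
    if not (isinstance(category, str) and category in KNOWN_CATEGORIES):
        errors.append(f"category {category!r} does not exist")
    return errors


def next_step(output: Dict[str, Any]) -> Tuple[str, Any]:
    """Explicit failure handling: send only when every check passes."""
    errors = validate_output(output)
    return ("human_review", errors) if errors else ("send", output)
```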

Guardrails aren't about distrusting AI. They're about building systems that fail gracefully. When the AI produces something unexpected, you want to catch it immediately and handle it, not discover it when a customer gets a nonsense email.

Reusable Patterns Save You From Reinventing

The playbook includes templates for common patterns: data extraction with validation, classification with fallback routing, generation with structured outputs, multi-step workflows with error handling. These aren't theoretical - they're production patterns from systems that actually run.

Start with a template that matches your use case. Customise the deterministic steps for your data sources and schemas. Configure the AI step with your model and prompt. Add guardrails specific to your domain. Test the failure cases explicitly - what happens when the input is malformed, when the AI refuses, when downstream systems are unavailable.
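Testing the failure cases explicitly can be as simple as injecting fake dependencies. In this sketch `run_step`, the status names, and `DownstreamUnavailable` are hypothetical, and detecting a refusal by its opening words is a crude heuristic; if your provider reports refusals explicitly, check that signal instead.

```python
from typing import Callable, Dict, Optional


class DownstreamUnavailable(Exception):
    """Hypothetical error a dependency (database, CRM, API) raises when unreachable."""


REFUSAL_PREFIXES = ("i can't", "i cannot", "i'm unable")  # crude heuristic


def run_step(customer_id: Optional[str], body: str,
             generate: Callable[[str], str],
             lookup_tier: Callable[[str], str]) -> Dict[str, str]:
    """One workflow step with every failure mapped to an explicit status."""
    if not customer_id or not isinstance(body, str) or not body.strip():
        return {"status": "rejected", "reason": "malformed input"}
    try:
        tier = lookup_tier(customer_id)
    except DownstreamUnavailable:
        return {"status": "queued", "reason": "tier lookup unavailable, retry later"}
    reply = generate(body).strip()
    if not reply or reply.lower().startswith(REFUSAL_PREFIXES):
        return {"status": "human_review", "reason": "model declined or returned nothing"}
    return {"status": "sent", "tier": tier, "reply": reply}


def test_failure_cases() -> None:
    def ok_lookup(customer_id: str) -> str:
        return "standard"

    def down_lookup(customer_id: str) -> str:
        raise DownstreamUnavailable(customer_id)

    def polite(body: str) -> str:
        return "Thanks, we're on it."

    def refusing(body: str) -> str:
        return "I can't help with that request."

    assert run_step("CUST-1", "   ", polite, ok_lookup)["status"] == "rejected"
    assert run_step(None, "Help", polite, ok_lookup)["status"] == "rejected"
    assert run_step("CUST-1", "Help", refusing, ok_lookup)["status"] == "human_review"
    assert run_step("CUST-1", "Help", polite, down_lookup)["status"] == "queued"
    assert run_step("CUST-1", "Help", polite, ok_lookup)["status"] == "sent"


if __name__ == "__main__":
    test_failure_cases()
    print("all failure cases handled")
```

Each failure mode gets a named status, so a test can assert on it and a later step can branch on it, instead of the failure surfacing as an unexpected email.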

The goal isn't to eliminate AI failures. It's to make them visible, contained, and recoverable. Deterministic steps create the structure that makes that possible.

Building for Production Means Planning for Failure

Prototypes can be pure AI. Production systems need structure. The hybrid approach - deterministic steps for predictability, AI steps for interpretation - is how you get from demo to deployed.

The reliability doesn't come from better prompts. It comes from narrowing what the AI is responsible for, validating everything before and after, and handling failures explicitly. That's the difference between a workflow that works 80% of the time and one you can actually run in production.